OpenAI reports significant data breach revealing user names and email addresses, emphasizes commitment to tra…

Published on: 2025-11-27

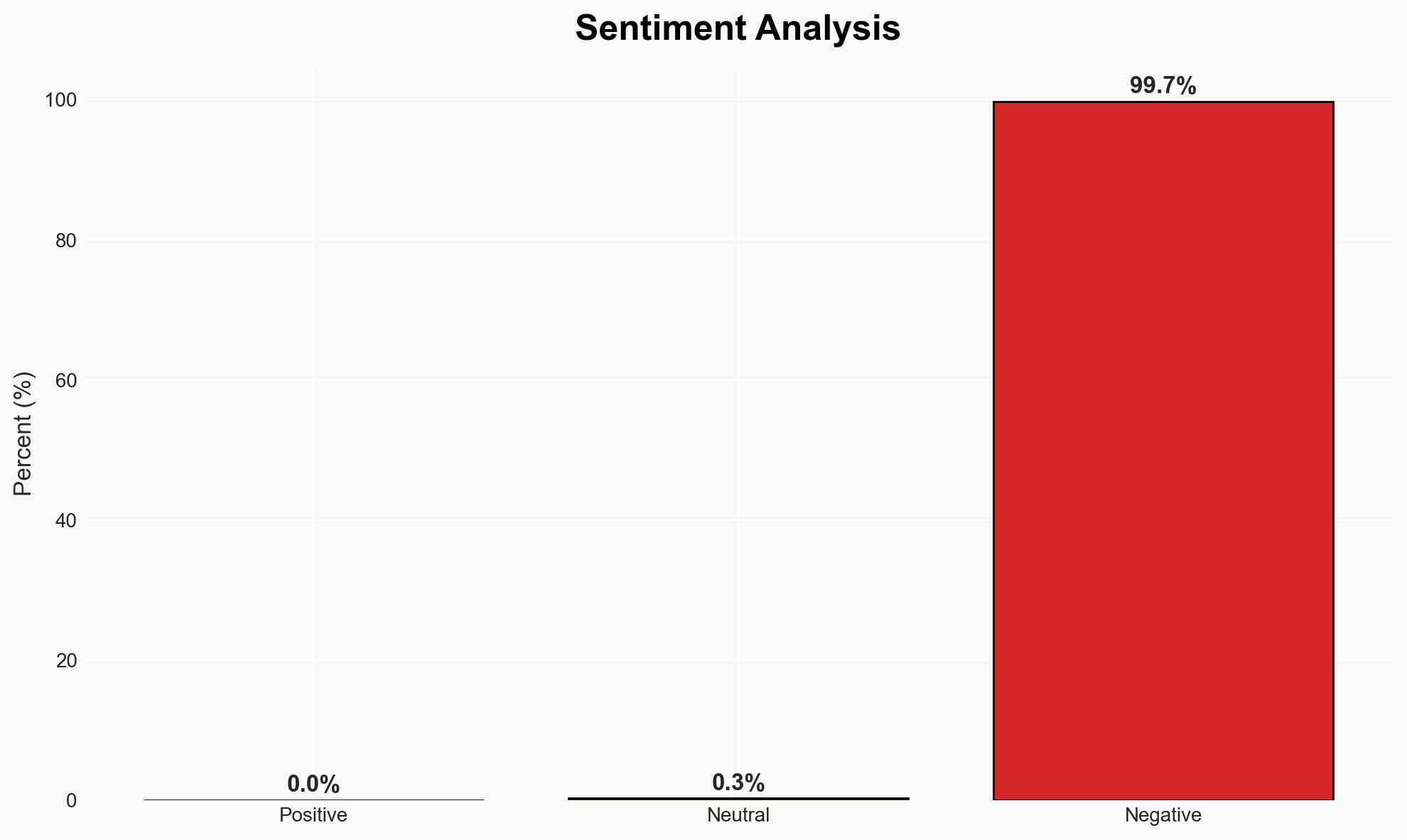

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

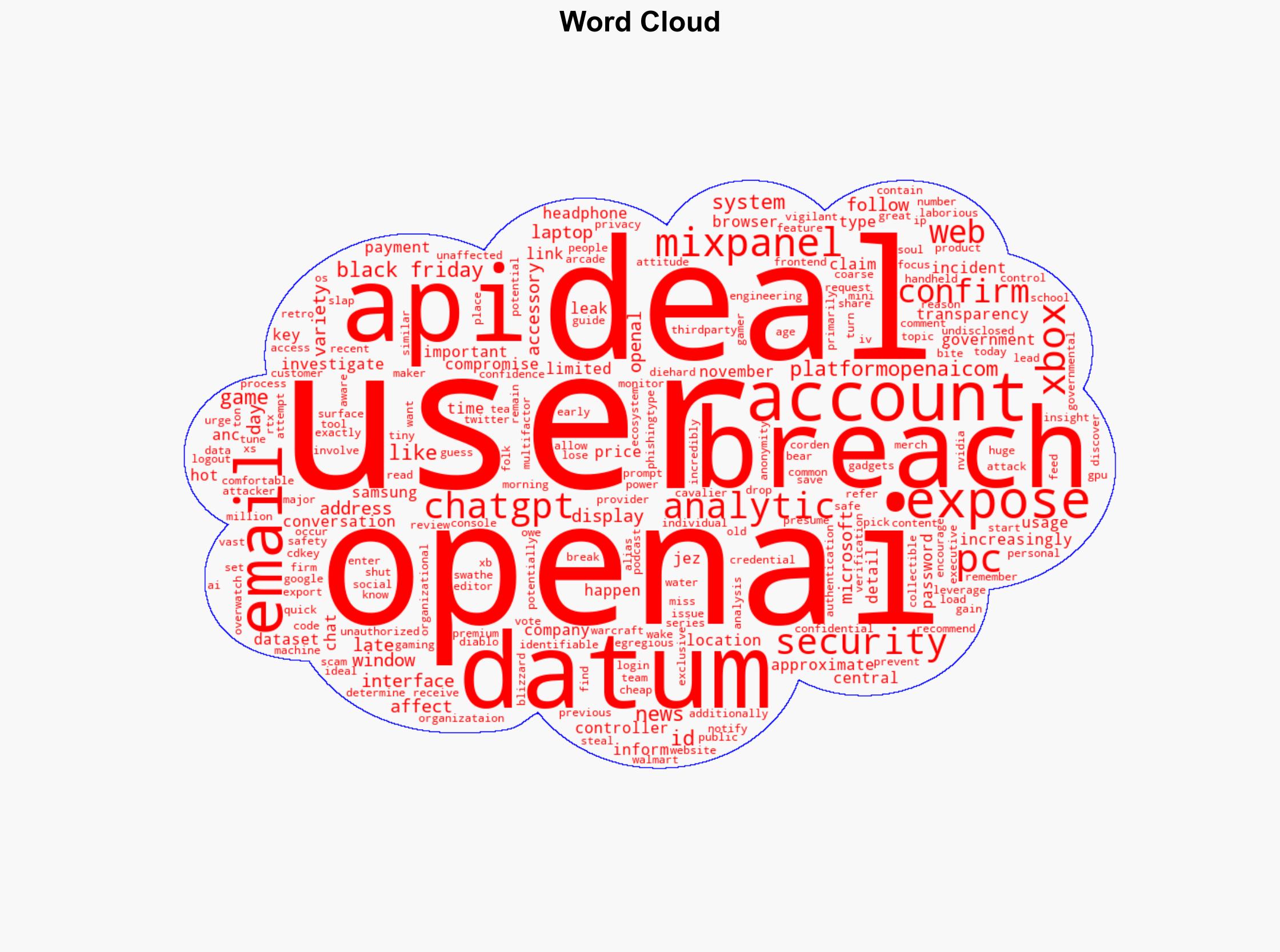

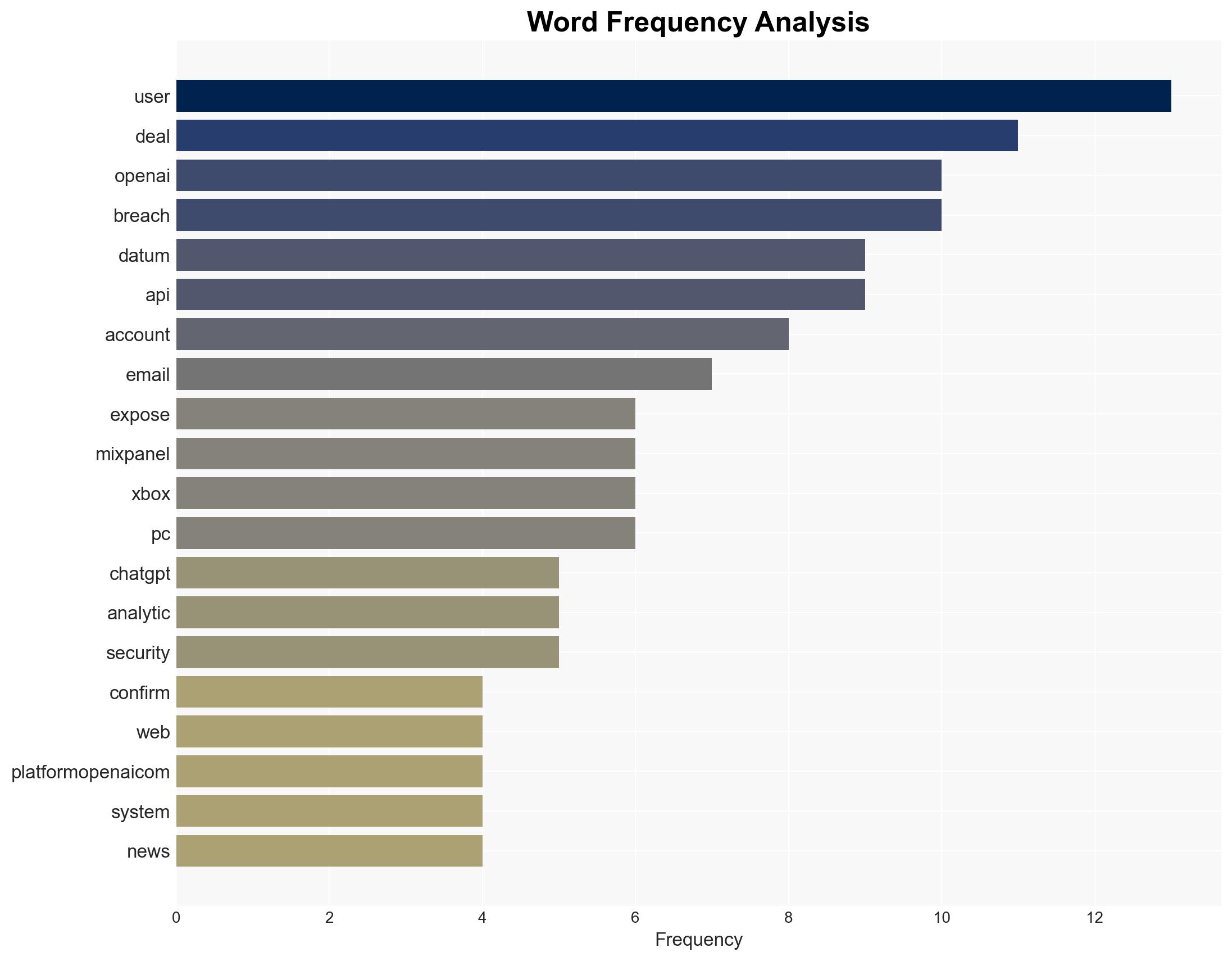

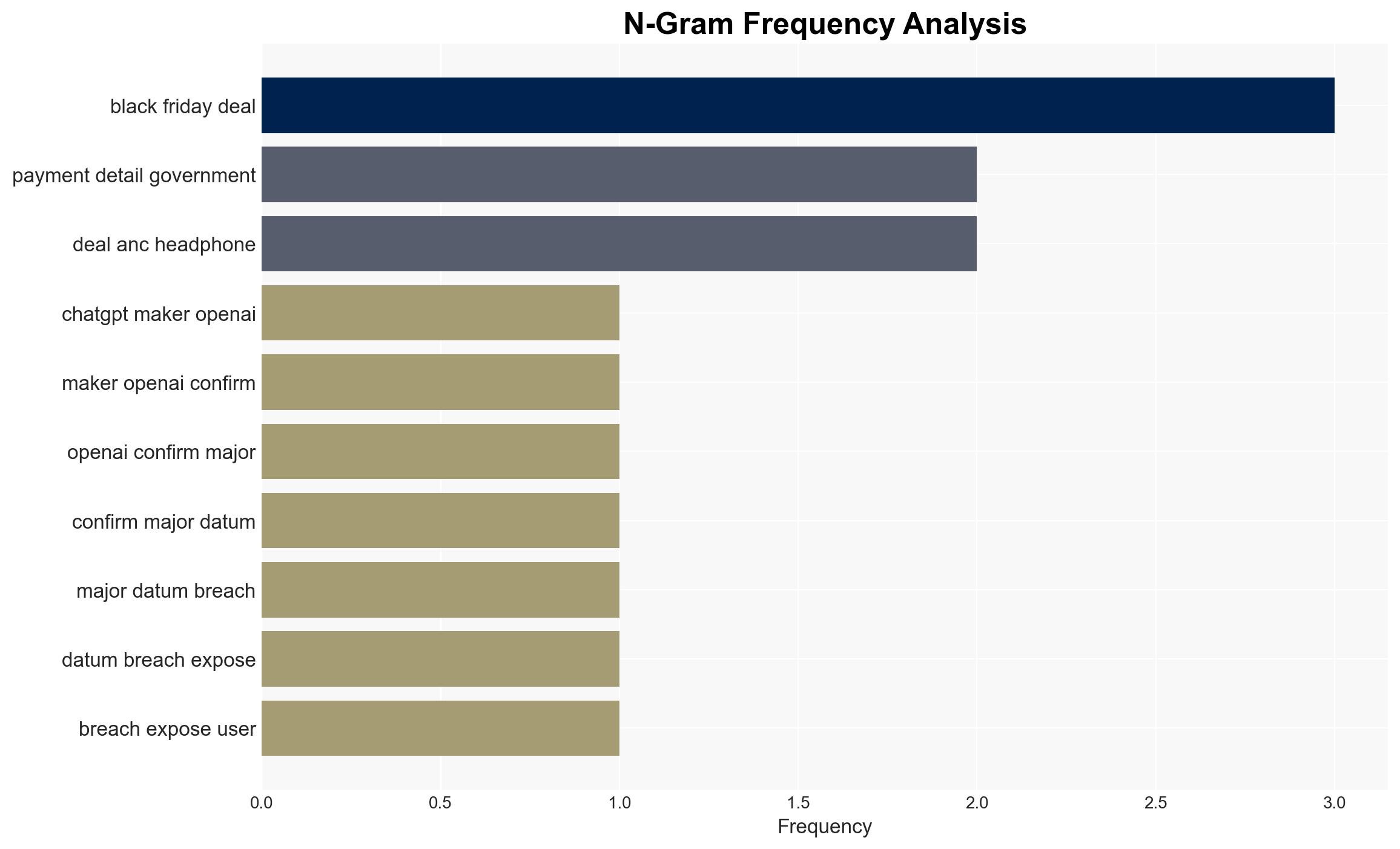

Intelligence Report: ChatGPT maker OpenAI confirms major data breach exposing users’ names, email addresses and more: “Transparency is important to us”

1. BLUF (Bottom Line Up Front)

OpenAI has confirmed a significant data breach that exposed users’ names, email addresses, and approximate locations after unauthorized access to the systems of Mixpanel, a third-party web analytics provider. The breach does not appear to have compromised sensitive data such as passwords or payment details. The incident highlights the risk that third-party integrations pose to user data. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

- Hypothesis A: The breach was a result of a targeted cyber-attack exploiting vulnerabilities in Mixpanel’s system. Supporting evidence includes unauthorized access to Mixpanel’s system and the export of customer data. However, the exact method of exploitation remains unclear.

- Hypothesis B: The breach was due to a misconfiguration or oversight in Mixpanel’s security protocols, inadvertently exposing user data. This hypothesis is supported by the lack of evidence indicating a sophisticated attack and the nature of the data exposed.

- Assessment: Hypothesis B is currently better supported, given the absence of indicators of a complex cyber-attack and how frequently security misconfigurations lead to data breaches. Future revelations about the breach methodology could shift this assessment.

3. Key Assumptions and Red Flags

- Assumptions: OpenAI’s internal systems were not directly compromised; Mixpanel’s breach was limited to analytics data; OpenAI’s transparency claims are accurate.

- Information Gaps: Details on how Mixpanel’s system was breached; extent of data exposure; specific security measures in place at the time of the breach.

- Bias & Deception Risks: Potential bias in OpenAI’s reporting to minimize reputational damage; lack of independent verification of OpenAI’s claims.

4. Implications and Strategic Risks

This data breach could have far-reaching implications for OpenAI and its users, affecting trust and security perceptions.

- Political / Geopolitical: Potential for increased regulatory scrutiny on data protection practices, especially in jurisdictions with stringent privacy laws.

- Security / Counter-Terrorism: Increased risk of phishing and social engineering attacks targeting affected users.

- Cyber / Information Space: Highlights vulnerabilities in third-party integrations, potentially prompting broader industry reassessment of such dependencies.

- Economic / Social: Possible erosion of user trust in OpenAI, affecting user engagement and adoption of AI technologies.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Conduct a thorough security audit of third-party integrations; enhance monitoring for phishing attempts; communicate transparently with affected users.

- Medium-Term Posture (1–12 months): Develop stronger partnerships with cybersecurity firms; implement robust data protection measures; engage in public relations efforts to rebuild trust.

- Scenario Outlook:

  - Best: Quick resolution and enhanced security measures restore user confidence.

  - Worst: Further breaches or revelations of data misuse lead to significant reputational and financial damage.

  - Most-Likely: Incremental improvements in security posture with gradual recovery of user trust.

6. Key Individuals and Entities

- OpenAI

- Mixpanel

- Individuals: none clearly identifiable from the open sources reviewed.

7. Thematic Tags

Cybersecurity, data breach, third-party risk, user privacy, AI technology, regulatory scrutiny, information security

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
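As an illustration of the Bayesian scenario modeling technique, the sketch below shows one way the two competing hypotheses from section 2 could be weighed against each other. Every number in it is a hypothetical placeholder chosen for demonstration, not a value taken from this report, and the function name `posterior`, the hypothesis keys, and the evidence labels are likewise assumptions made for the example.

```python
def posterior(priors, likelihoods):
    """Apply Bayes' rule once and return normalized posterior probabilities.

    priors:      dict mapping hypothesis -> P(H)
    likelihoods: dict mapping hypothesis -> P(evidence | H)
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: value / total for h, value in unnormalized.items()}


# HYPOTHETICAL starting point: no initial preference between the hypotheses.
priors = {"A_targeted_attack": 0.5, "B_misconfiguration": 0.5}

# HYPOTHETICAL likelihoods: how probable each observation would be
# if the given hypothesis were true. These are illustrative guesses only.
evidence = [
    (
        "no indicators of a sophisticated attack",
        {"A_targeted_attack": 0.3, "B_misconfiguration": 0.7},
    ),
    (
        "customer data exported after unauthorized access",
        {"A_targeted_attack": 0.6, "B_misconfiguration": 0.4},
    ),
]

# Update sequentially: each posterior becomes the prior for the next item.
beliefs = dict(priors)
for label, likelihoods in evidence:
    beliefs = posterior(beliefs, likelihoods)
    summary = ", ".join(f"{h}={p:.2f}" for h, p in beliefs.items())
    print(f"after '{label}': {summary}")
```

The sequential update treats the evidence items as independent given each hypothesis, which is a simplification. In practice an analyst would revisit both the likelihoods and that independence assumption as new details about the breach emerge.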
