AI-Driven Disinformation Strategies Emerge in Political Campaigns, Blurring Lines Between Truth and Fiction

Published on: 2025-11-29

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: AI fuels a new wave of political lies

1. BLUF (Bottom Line Up Front)

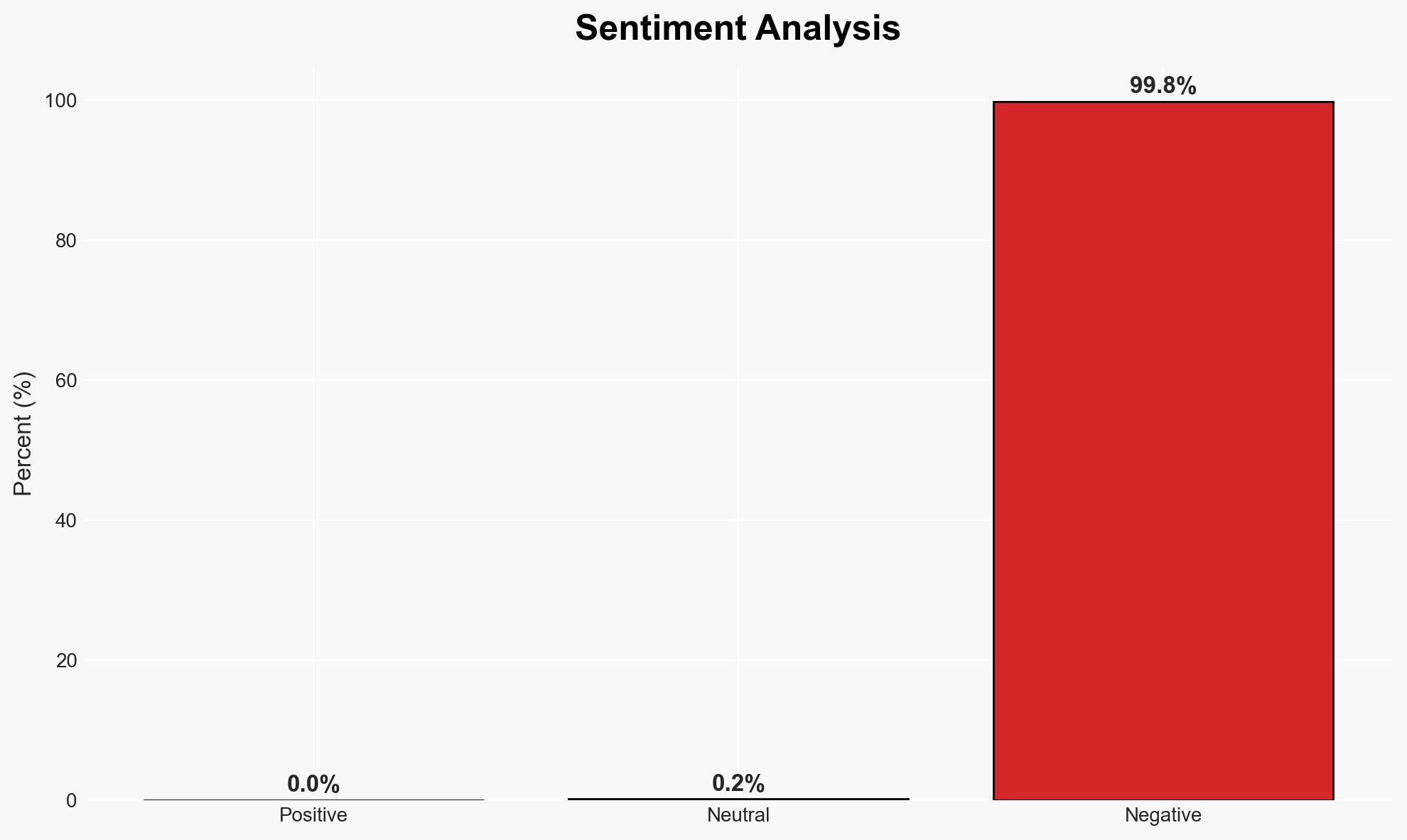

The use of AI-generated content in political campaigns is increasingly blurring the line between satire and disinformation, potentially undermining democratic discourse and voter trust. This trend will most likely escalate, affecting political stability and public confidence in electoral processes. Overall confidence in this assessment is moderate: it rests on documented cases and observable trends, but comprehensive data on prevalence and voter response are lacking.

2. Competing Hypotheses

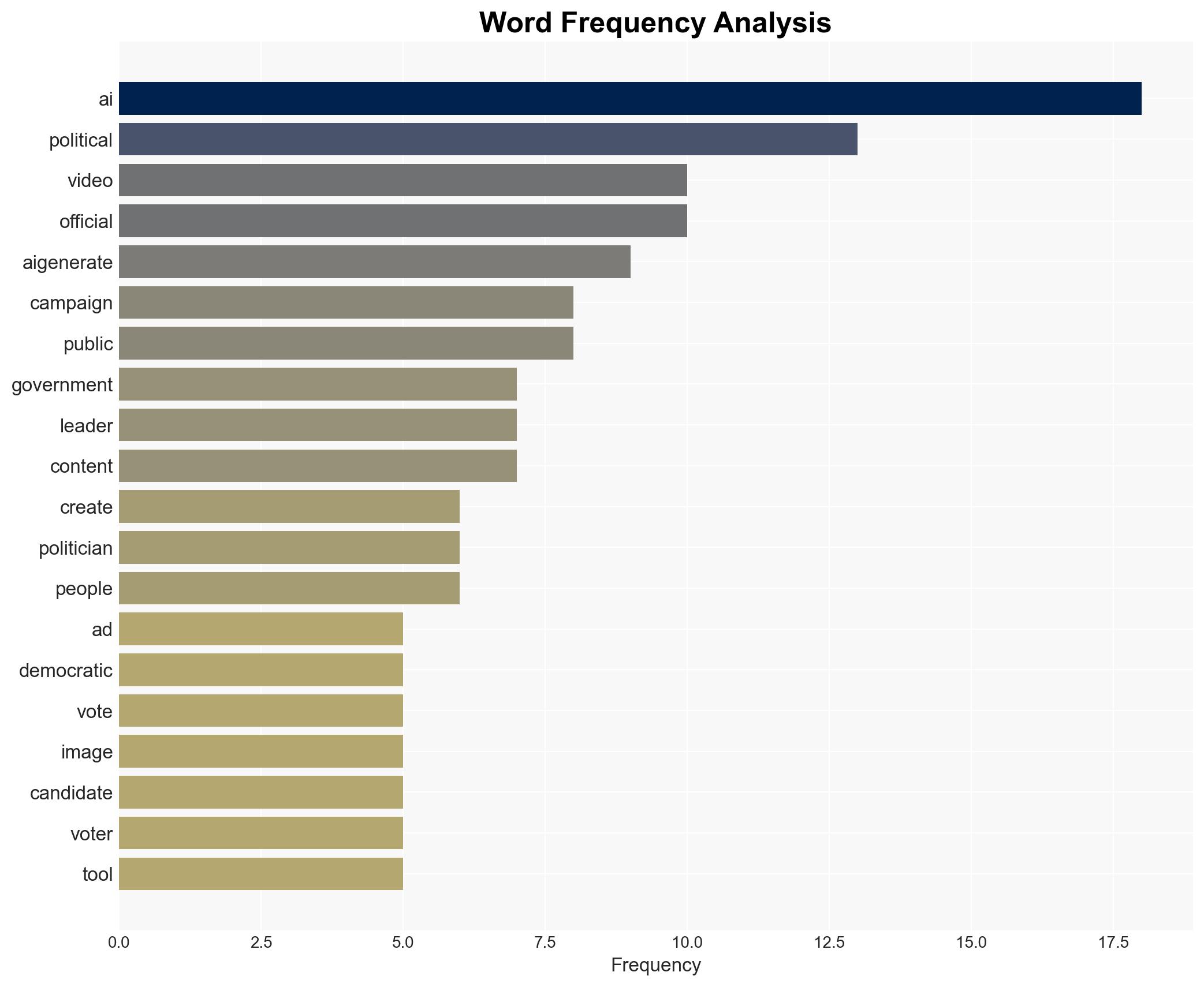

- Hypothesis A: AI-generated political content is primarily used as a tool for satire and creative expression. Supporting evidence includes the historical use of satire in political campaigns. However, the increasing sophistication of AI blurs the line between satire and factual claims, so such content may be misread as factual information.

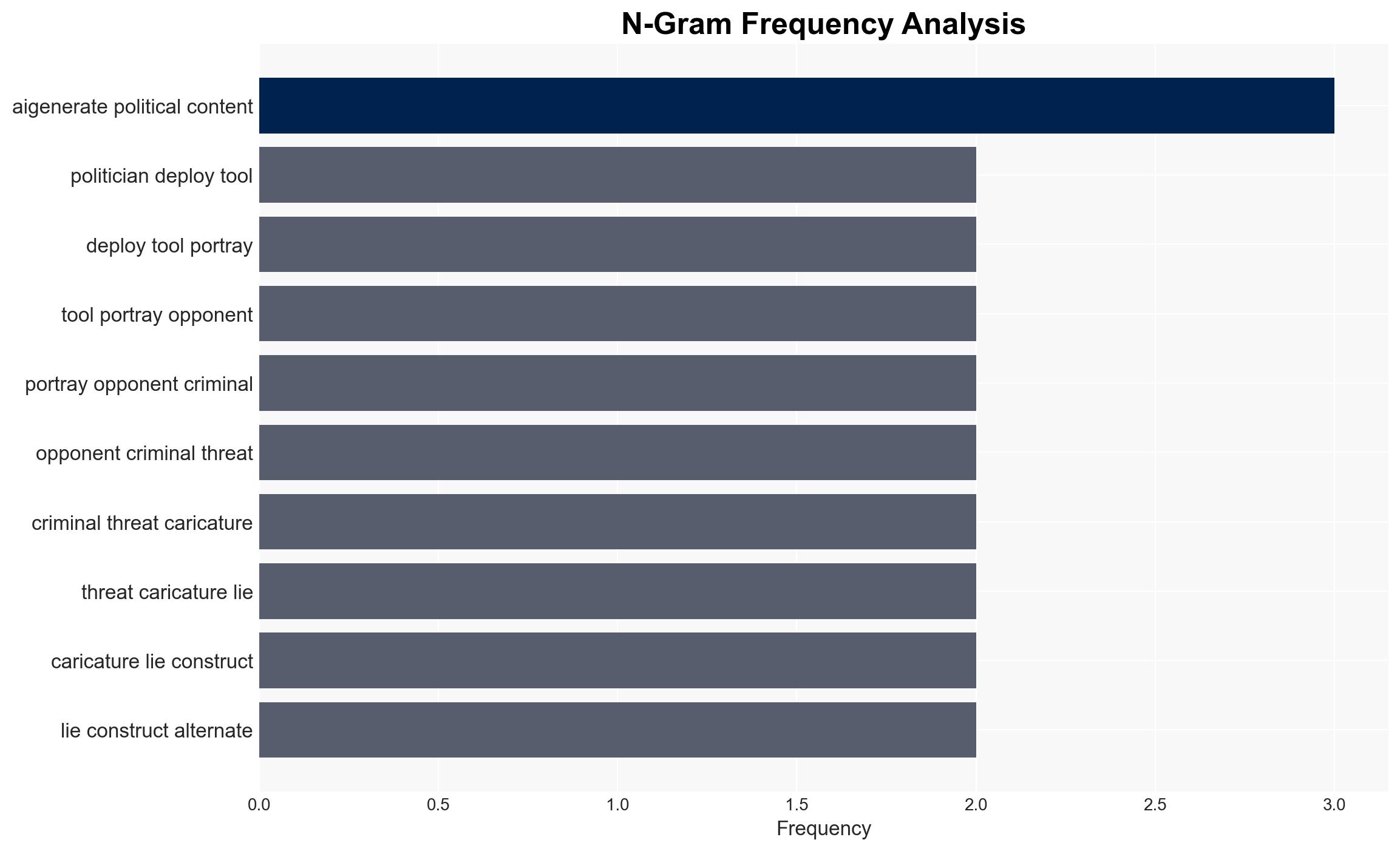

- Hypothesis B: AI-generated content is strategically deployed to mislead voters and manipulate electoral outcomes. This is supported by instances of AI-generated ads that distort political figures’ images and messages. Contradicting evidence includes the lack of widespread documented cases directly linking AI content to altered election results.

- Assessment: Hypothesis B is currently better supported due to the documented use of AI in creating misleading political ads and the potential for these to influence voter perceptions. Key indicators that could shift this judgment include increased regulatory oversight or technological countermeasures.

3. Key Assumptions and Red Flags

- Assumptions: AI technology will continue to advance rapidly; political campaigns will increasingly adopt AI tools; voters have limited ability to discern AI-generated content.

- Information Gaps: Lack of comprehensive data on the prevalence of AI-generated content in recent elections; insufficient understanding of voter response to such content.

- Bias & Deception Risks: Potential cognitive bias towards accepting visually convincing content as true; risk of source bias from political entities using AI for strategic gain.

4. Implications and Strategic Risks

The proliferation of AI-generated political content could significantly alter the landscape of political communication, leading to increased polarization and erosion of trust in democratic institutions.

- Political / Geopolitical: Escalation in political tensions and misinformation campaigns; potential for international actors to exploit AI for geopolitical influence.

- Security / Counter-Terrorism: Increased risk of AI tools being used to incite violence or unrest through fabricated narratives.

- Cyber / Information Space: Heightened challenges in cybersecurity and information integrity; need for advanced detection and verification technologies.

- Economic / Social: Potential destabilization of social cohesion as trust in media and political processes declines.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Enhance monitoring of AI-generated content in political campaigns; develop public awareness campaigns on identifying AI content.

- Medium-Term Posture (1–12 months): Invest in AI detection and verification technologies; foster partnerships between tech companies and regulatory bodies to address AI misuse.

- Scenario Outlook:

  - Best: Effective regulation and public education mitigate AI misuse, restoring trust (trigger: successful policy implementation).

  - Worst: Unchecked AI proliferation leads to widespread misinformation and electoral manipulation (trigger: lack of regulatory action).

  - Most-Likely: Gradual adaptation to AI challenges with mixed success in countermeasures (trigger: ongoing technological advancements).

6. Key Individuals and Entities

- Mike Collins, GOP Representative

- Jon Ossoff, Incumbent Democratic Senator

- Andrew Cuomo, former Democratic Governor of New York

- Zohran Mamdani, New York City Mayor-elect

- Chuck Schumer, Senate Minority Leader

- National Republican Senatorial Committee

7. Thematic Tags

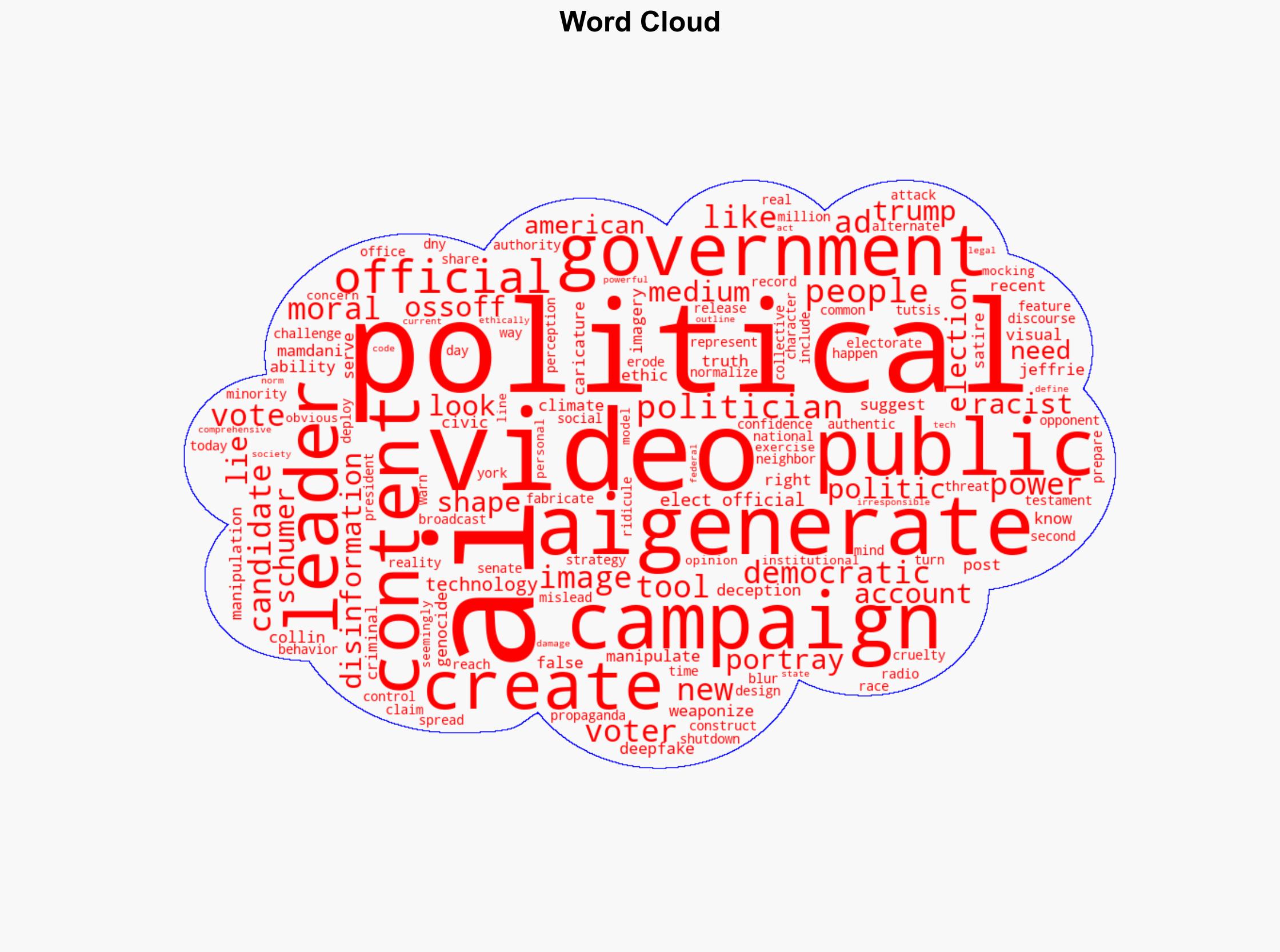

National Security Threats, AI-generated content, political disinformation, electoral integrity, voter manipulation, democratic discourse, cybersecurity, misinformation campaigns

Structured Analytic Techniques Applied

- Cognitive Bias Stress Test: Expose and correct potential biases in assessments through red-teaming and structured challenge.

- Bayesian Scenario Modeling: Use probabilistic forecasting for conflict trajectories or escalation likelihood.

- Network Influence Mapping: Map relationships between state and non-state actors for impact estimation.
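As a rough illustration of the Bayesian scenario modeling listed above, the sketch below updates the relative weight of Hypotheses A and B after one new observation. The function name `bayes_update`, the hypothesis labels, and every number are hypothetical placeholders chosen for the example. They are not estimates from this report, and the brief does not describe its actual model.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities from priors and P(evidence | hypothesis)."""
    # Multiply each prior by the likelihood of the evidence under that hypothesis.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: p / total for h, p in unnormalized.items()}


# Hypothetical placeholder priors: both hypotheses start equally weighted.
priors = {"A_satire": 0.5, "B_deception": 0.5}

# Assumed likelihood of observing a misleading AI-generated ad
# under each hypothesis (illustrative values only).
likelihoods = {"A_satire": 0.3, "B_deception": 0.6}

posterior = bayes_update(priors, likelihoods)
for name, prob in posterior.items():
    print(f"{name}: {prob:.2f}")
```

Under these assumed inputs the weight shifts toward Hypothesis B. Repeating the update with each new indicator, such as regulatory action or a documented countermeasure, would move the estimate in either direction.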
