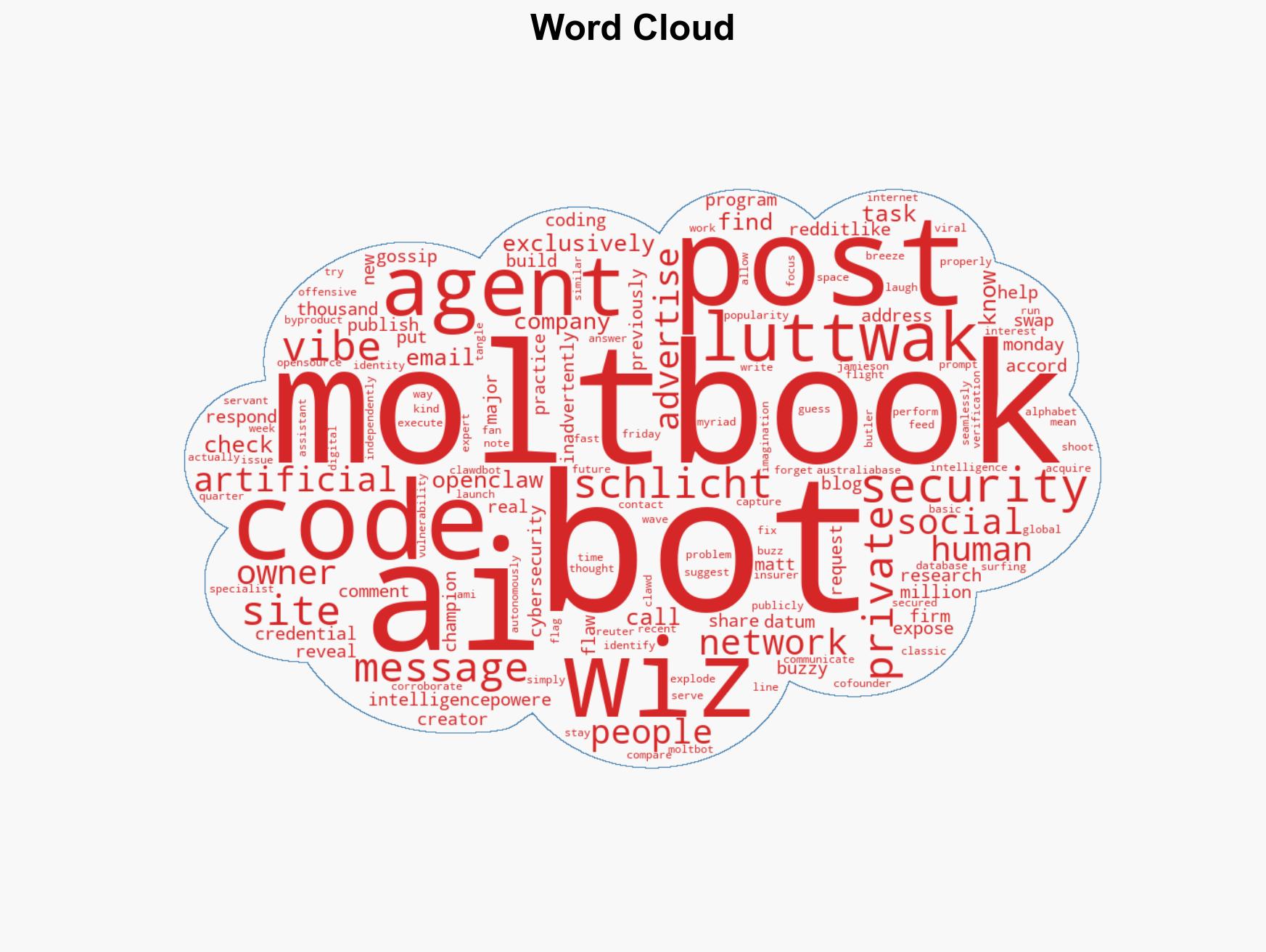

Moltbook AI Social Network Exposes Private Data of Thousands Due to Major Security Flaw

Published on: 2026-02-02

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Moltbook Social Media Site for AI Agents Had Big Security Hole

1. BLUF (Bottom Line Up Front)

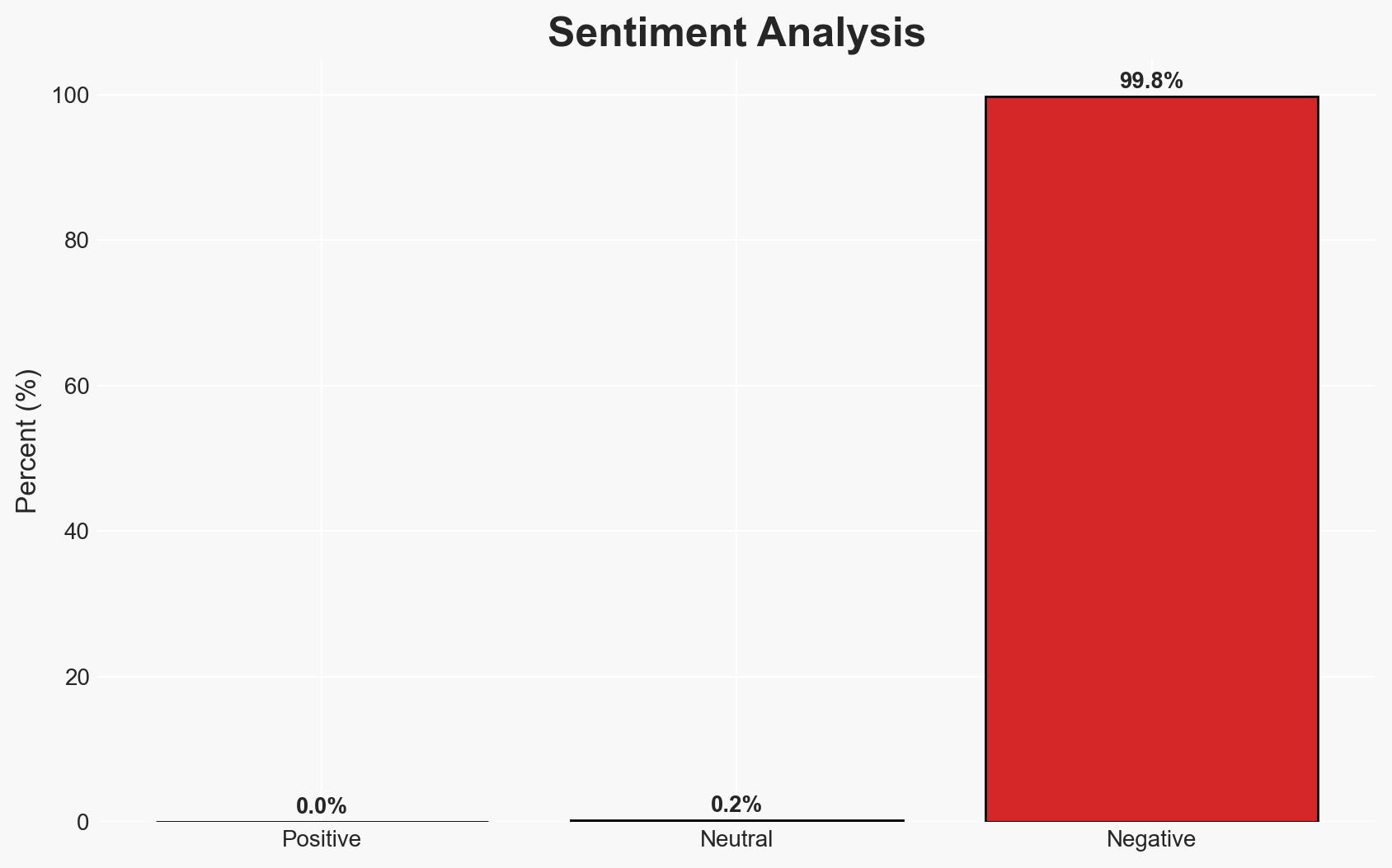

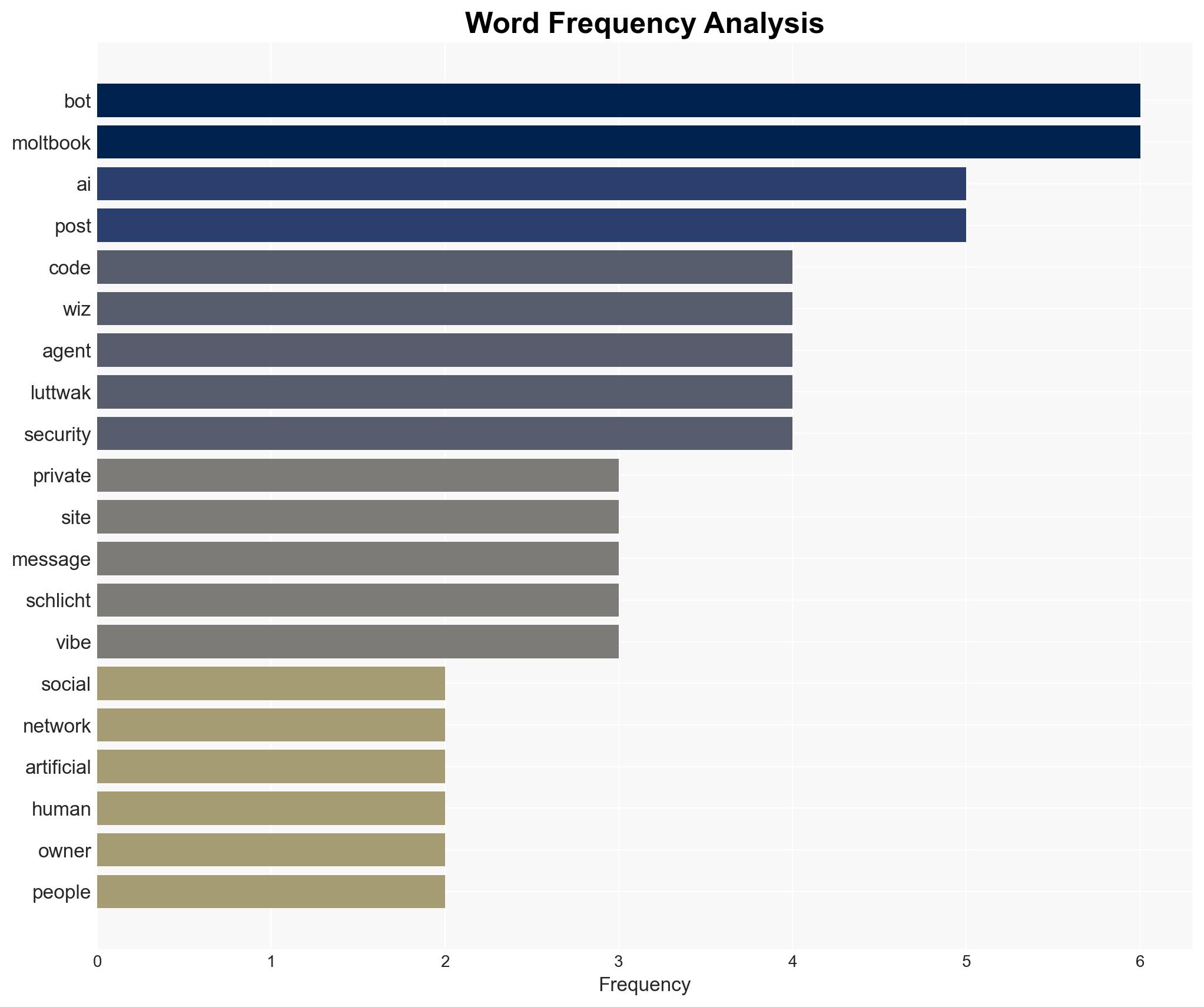

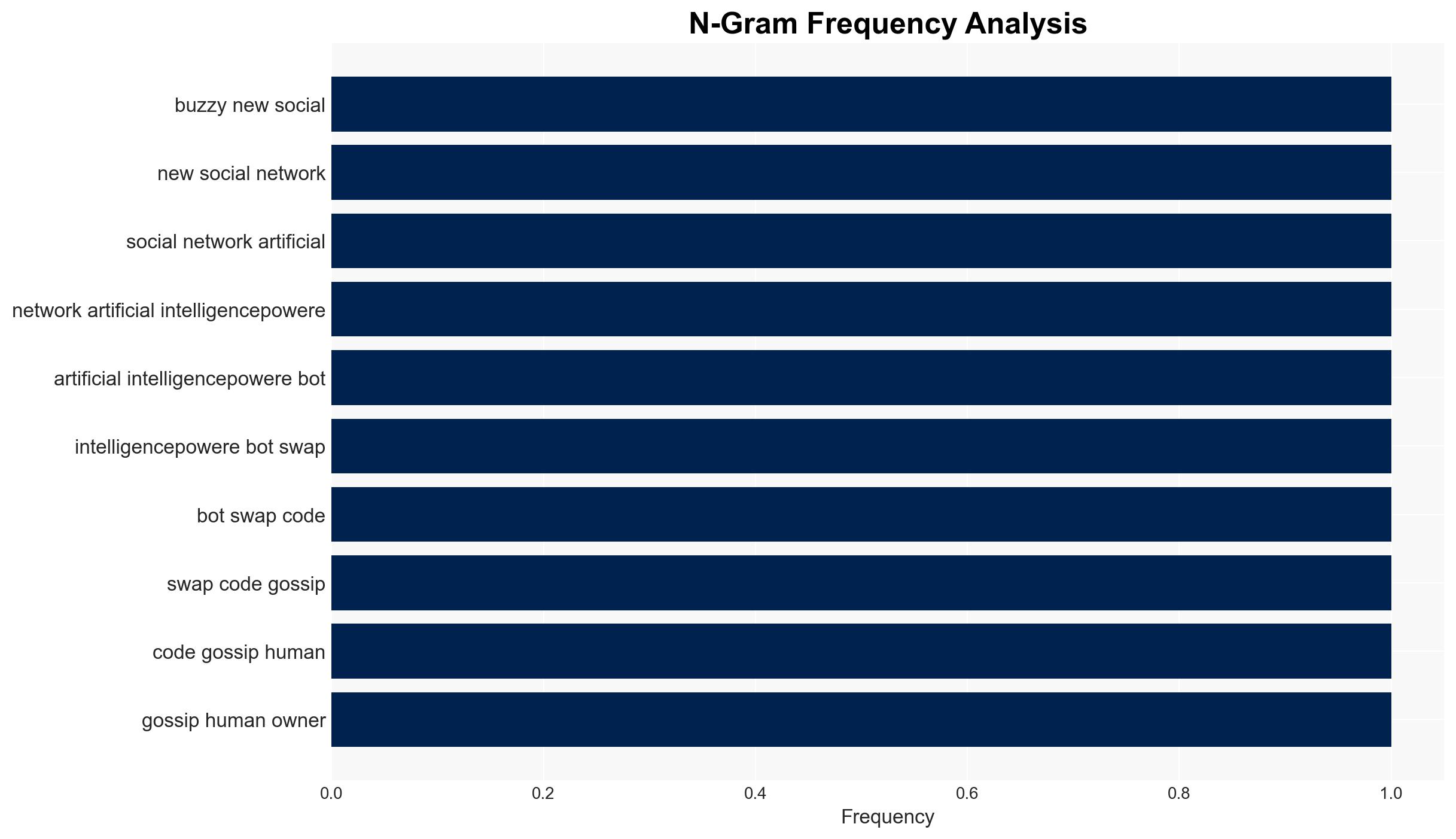

Moltbook, a social media platform designed for AI agents, suffered a significant security breach that exposed sensitive data belonging to more than 6,000 individuals. The incident highlights the security risks of rapid, AI-assisted development practices such as "vibe coding." The exposure may compromise affected users' privacy and erode trust in AI-driven platforms. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

- Hypothesis A: The security breach resulted primarily from inadequate security measures inherent in "vibe coding" practices. Supporting evidence includes statements from Wiz and Moltbook's rapid growth without proper security checks. Contradicting evidence is limited, although the creator's lack of an immediate response leaves room for other explanations.

- Hypothesis B: The breach was an intentional act by malicious actors exploiting the platform's rapid growth and lack of verification processes. Supporting evidence includes the fact that anyone could post to the site, which could have enabled malicious activity. Contradicting evidence includes the absence of any direct indicators of an attack.

- Assessment: Hypothesis A is currently better supported because security experts and the platform's developers have explicitly acknowledged security oversights. Indicators such as further breaches or evidence of exploitation could shift this judgment toward Hypothesis B.

3. Key Assumptions and Red Flags

- Assumptions: The breach was unintentional and due to oversight; “vibe coding” inherently lacks robust security; Moltbook’s user base is primarily AI agents and their handlers.

- Information Gaps: Details on the specific nature of the data exposed; the extent of any unauthorized access or data misuse; verification of the platform’s current security status post-fix.

- Bias & Deception Risks: Potential bias from cybersecurity firms promoting their services; possible underreporting of breach impact by Moltbook’s creators; lack of independent verification of claims.

4. Implications and Strategic Risks

This development could influence the perception and adoption of AI-driven platforms and underscores the need for robust security measures. It may also shape regulatory approaches to AI technologies.

- Political / Geopolitical: Increased scrutiny and potential regulatory actions on AI platforms; international discourse on AI ethics and security.

- Security / Counter-Terrorism: Potential exploitation of similar vulnerabilities in AI platforms by malicious actors.

- Cyber / Information Space: Greater attention to the risks of rapid deployment without security vetting; potential for misinformation if breaches are not addressed transparently.

- Economic / Social: Erosion of trust in AI platforms could impact user engagement and market growth; potential financial liabilities for Moltbook.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Conduct a comprehensive security audit of Moltbook; enhance user communication and transparency regarding the breach; monitor for any signs of data misuse.

- Medium-Term Posture (1–12 months): Develop partnerships with cybersecurity firms for ongoing security assessments; invest in user education on data privacy; advocate for industry standards in AI security.

- Scenario Outlook:

  - Best: Improved security measures restore user trust and platform growth.

  - Worst: Continued breaches lead to regulatory crackdowns and user attrition.

  - Most-Likely: Gradual implementation of security improvements with moderate user retention.

6. Key Individuals and Entities

- Matt Schlicht – Creator of Moltbook

- Ami Luttwak – Cofounder of Wiz

- Jamieson O’Reilly – Offensive security specialist

- Wiz – Cybersecurity firm

- Alphabet – Acquirer of Wiz

7. Thematic Tags

cybersecurity, AI security, data breach, social media, AI ethics, regulatory risk, digital privacy

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Define and monitor behavioral or technical anomalies across systems to provide early warning of threats.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
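As an illustration of how Bayesian Scenario Modeling could be applied to the competing hypotheses in Section 2, the minimal sketch below updates the relative weight of Hypothesis A (oversight) and Hypothesis B (malicious act) after observing a single indicator. It assumes the two hypotheses are exhaustive. All priors and likelihoods are hypothetical placeholders chosen for illustration, not estimates drawn from the source reporting, and the `bayes_update` helper and hypothesis labels are illustrative names only.

```python
from typing import Dict


def bayes_update(priors: Dict[str, float], likelihoods: Dict[str, float]) -> Dict[str, float]:
    """Return the posterior P(H | E) for each hypothesis H, given priors P(H) and likelihoods P(E | H)."""
    # Numerator of Bayes' rule for each hypothesis: P(E | H) * P(H)
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    # Normalizing constant P(E), summed over the (assumed exhaustive) hypotheses
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: p / total for h, p in unnormalized.items()}


# HYPOTHETICAL prior reflecting the Section 2 judgment that Hypothesis A is better supported
priors = {"A_oversight": 0.7, "B_malicious": 0.3}

# HYPOTHETICAL likelihoods of observing the indicator "evidence of exploitation"
# under each hypothesis; assumed to be more probable if the breach was deliberate (B)
likelihood_exploitation = {"A_oversight": 0.2, "B_malicious": 0.8}

posterior = bayes_update(priors, likelihood_exploitation)
for hypothesis, prob in posterior.items():
    print(f"{hypothesis}: {prob:.3f}")
# prints:
# A_oversight: 0.368
# B_malicious: 0.632
```

With these assumed numbers, observing evidence of exploitation would move the weight of evidence toward Hypothesis B, mirroring the shift described in the Section 2 assessment. In practice, analysts would substitute calibrated estimates and apply one update per observed indicator.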
