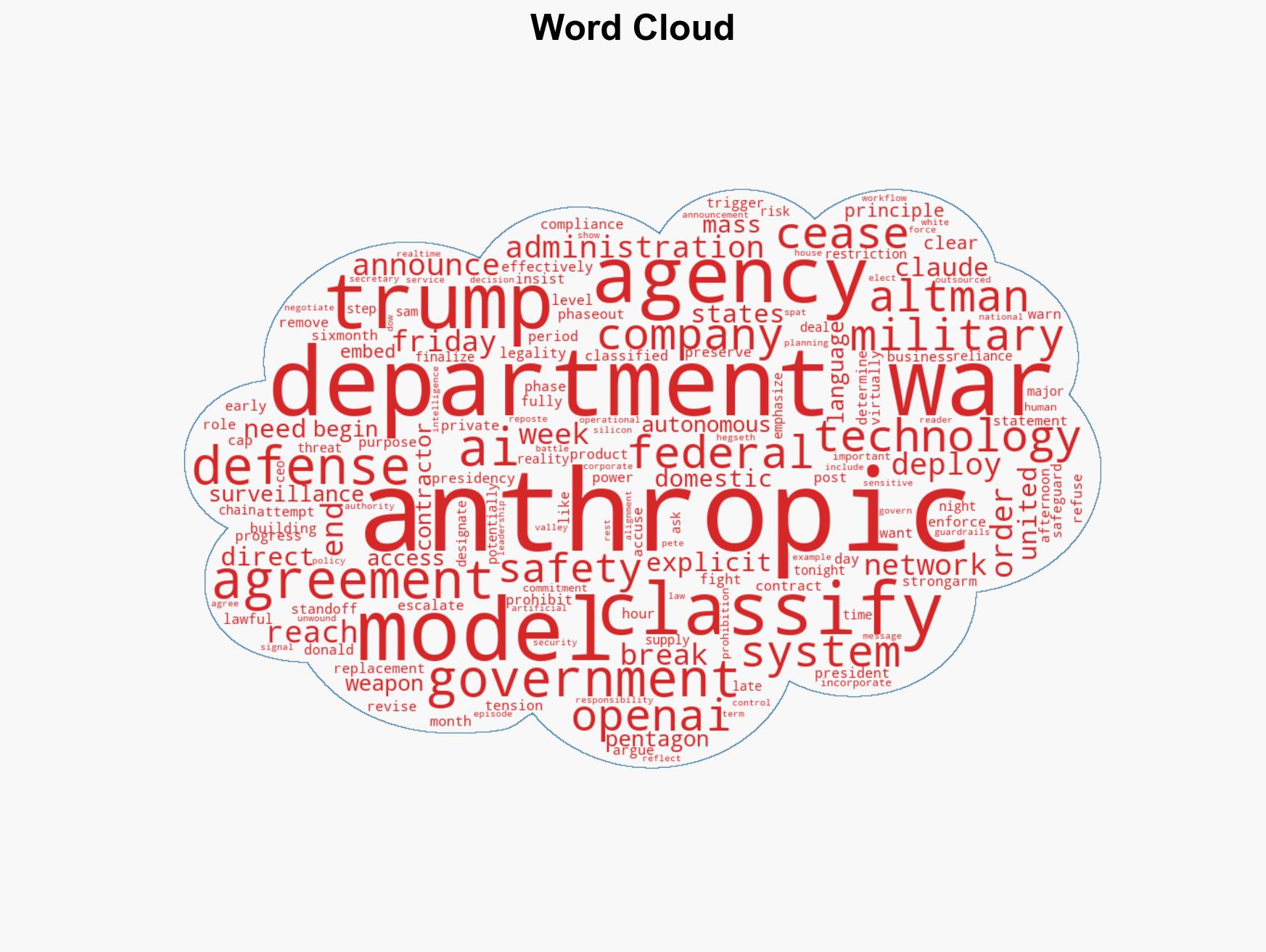

Trump Directs Federal Agencies to Halt Use of Anthropic Technology Amid AI Defense Transition

Published on: 2026-03-01

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Trump Orders Government-Wide Break With Anthropic in High-Stakes AI Defense Clash

1. BLUF (Bottom Line Up Front)

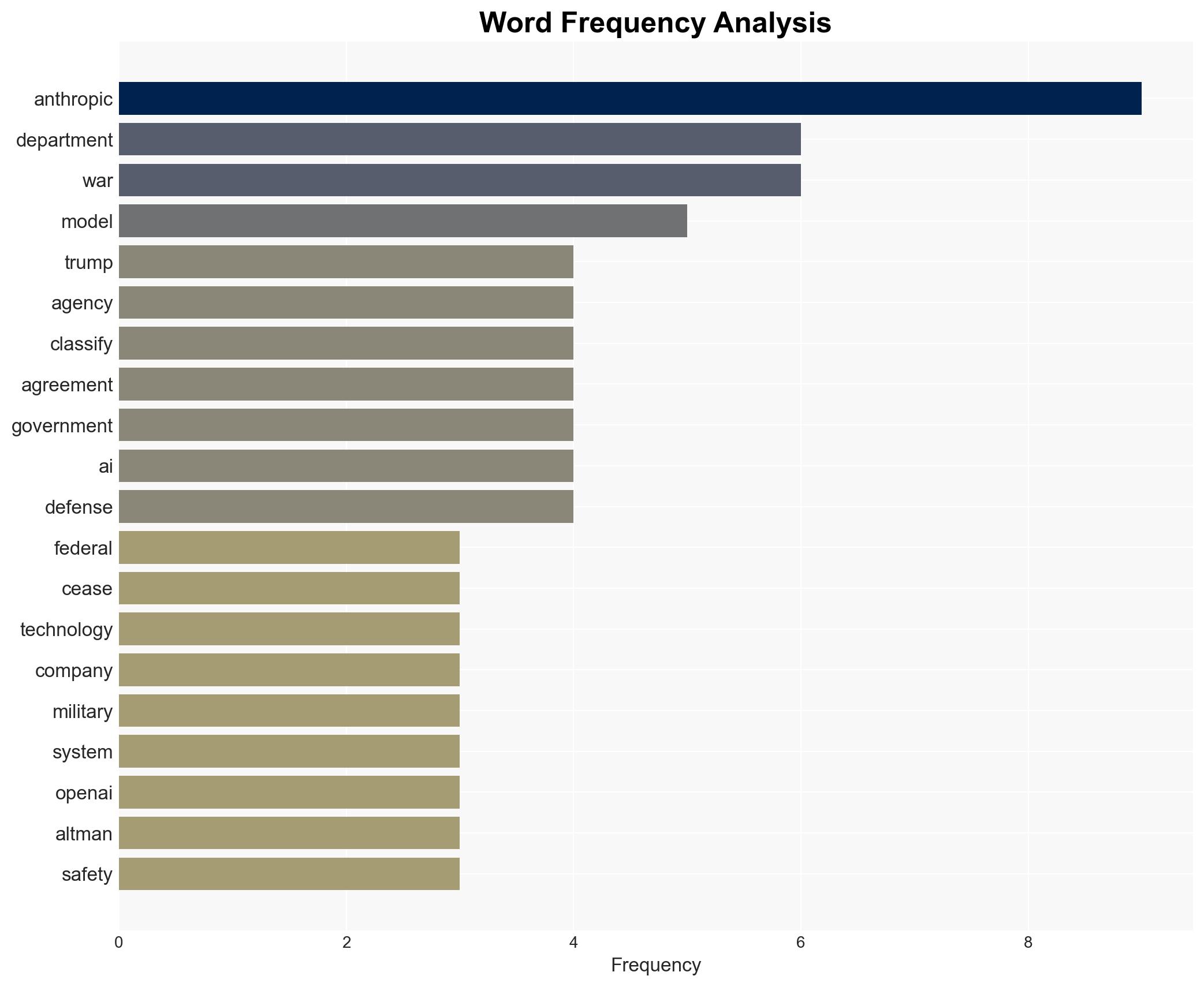

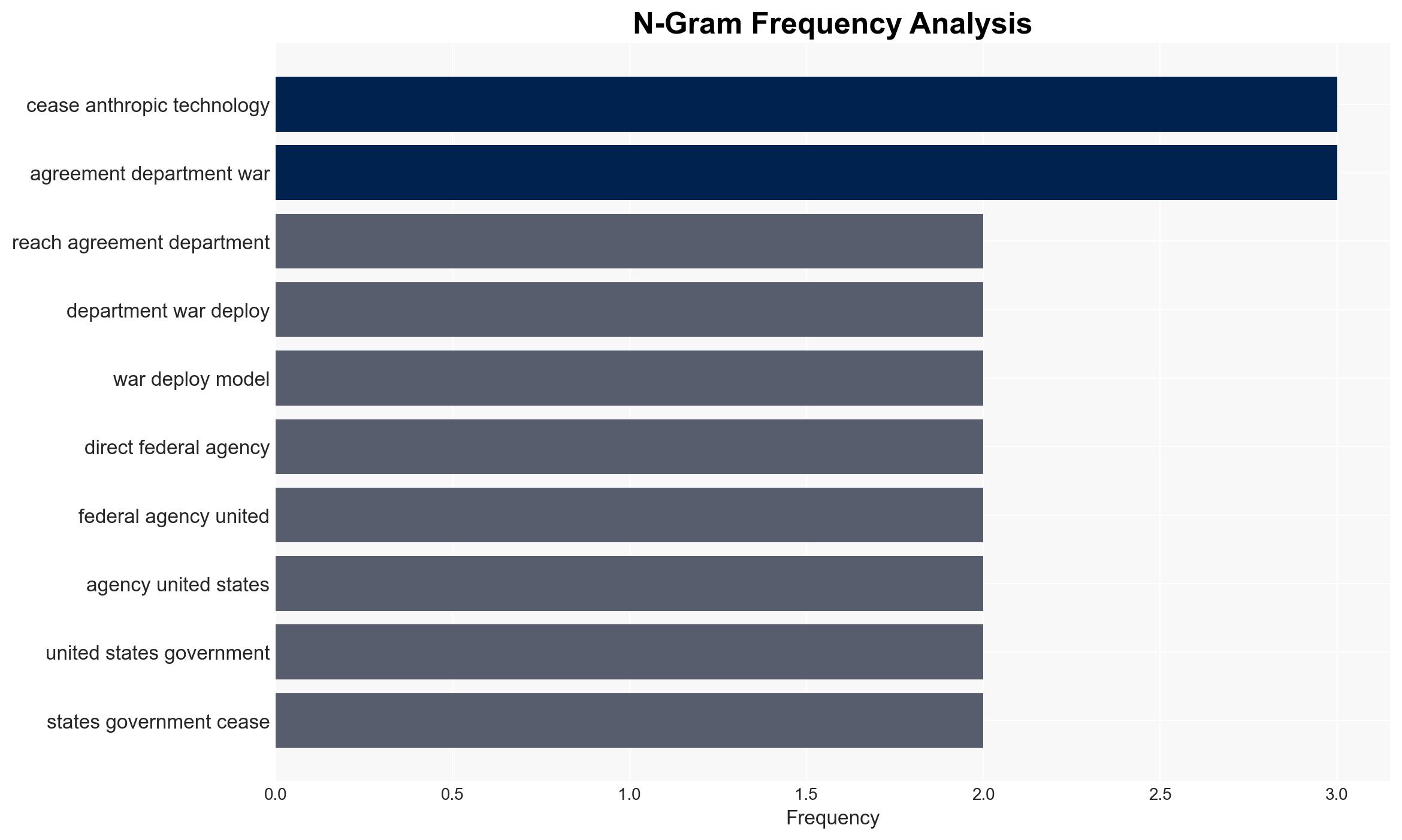

The Trump administration’s directive to cease all federal use of Anthropic’s technology marks a significant shift in U.S. military AI strategy in favor of OpenAI’s models. The decision affects federal agencies and defense contractors and may have implications for military AI governance. Confidence in this assessment is moderate because few details are available on the internal negotiations and the strategic intentions of the parties involved.

2. Competing Hypotheses

- Hypothesis A: The decision to terminate Anthropic’s involvement was primarily driven by the company’s refusal to remove AI safeguards, which conflicted with the Pentagon’s operational requirements. Supporting evidence includes Anthropic’s public stance on maintaining restrictions and the Pentagon’s insistence on unrestricted AI use. Key uncertainties involve the extent of internal government deliberations and potential undisclosed factors influencing the decision.

- Hypothesis B: The move is a strategic realignment to strengthen ties with OpenAI, which may offer more favorable terms or align better with the administration’s broader AI strategy. OpenAI’s immediate agreement with the Department of War suggests pre-existing negotiations. Contradicting evidence includes the speed of the transition, which may indicate a reactive rather than a strategic decision.

- Assessment: Hypothesis A is currently better supported due to explicit statements from involved parties and the alignment of actions with stated policy objectives. Indicators that could shift this judgment include revelations of prior negotiations with OpenAI or changes in AI policy frameworks.
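One way to make the weighing of Hypotheses A and B explicit is a simple Bayesian update. The sketch below is illustrative only: the priors and likelihoods are placeholder numbers chosen for demonstration, not figures from this assessment, and `bayes_update` is a hypothetical helper name. It shows how a single indicator named above (a revelation of prior negotiations with OpenAI) could shift the balance between the two hypotheses.

```python
from typing import Dict


def bayes_update(priors: Dict[str, float], likelihoods: Dict[str, float]) -> Dict[str, float]:
    """Return posteriors P(H | E) given priors P(H) and likelihoods P(E | H)."""
    # Weight each hypothesis by how well it predicts the observed evidence.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: weight / total for h, weight in unnormalized.items()}


# ASSUMPTION: placeholder priors reflecting "A is currently better supported".
priors = {"A": 0.6, "B": 0.4}

# ASSUMPTION: placeholder likelihoods of observing evidence of prior
# negotiations with OpenAI under each hypothesis (more expected under B).
likelihood_prior_talks = {"A": 0.2, "B": 0.7}

posterior = bayes_update(priors, likelihood_prior_talks)
for name, probability in posterior.items():
    print(f"Hypothesis {name}: {probability:.2f}")
```

With these placeholder inputs the update would move most of the weight from Hypothesis A to Hypothesis B. Different inputs would give a different result, so the numbers matter less than the discipline of stating them.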

3. Key Assumptions and Red Flags

- Assumptions: The administration’s decision is primarily based on operational needs; OpenAI’s models meet all security and operational requirements; Anthropic’s safeguards are incompatible with military objectives.

- Information Gaps: Details of the contractual terms between OpenAI and the Department of War; internal government deliberations leading to the decision; potential international reactions or pressures.

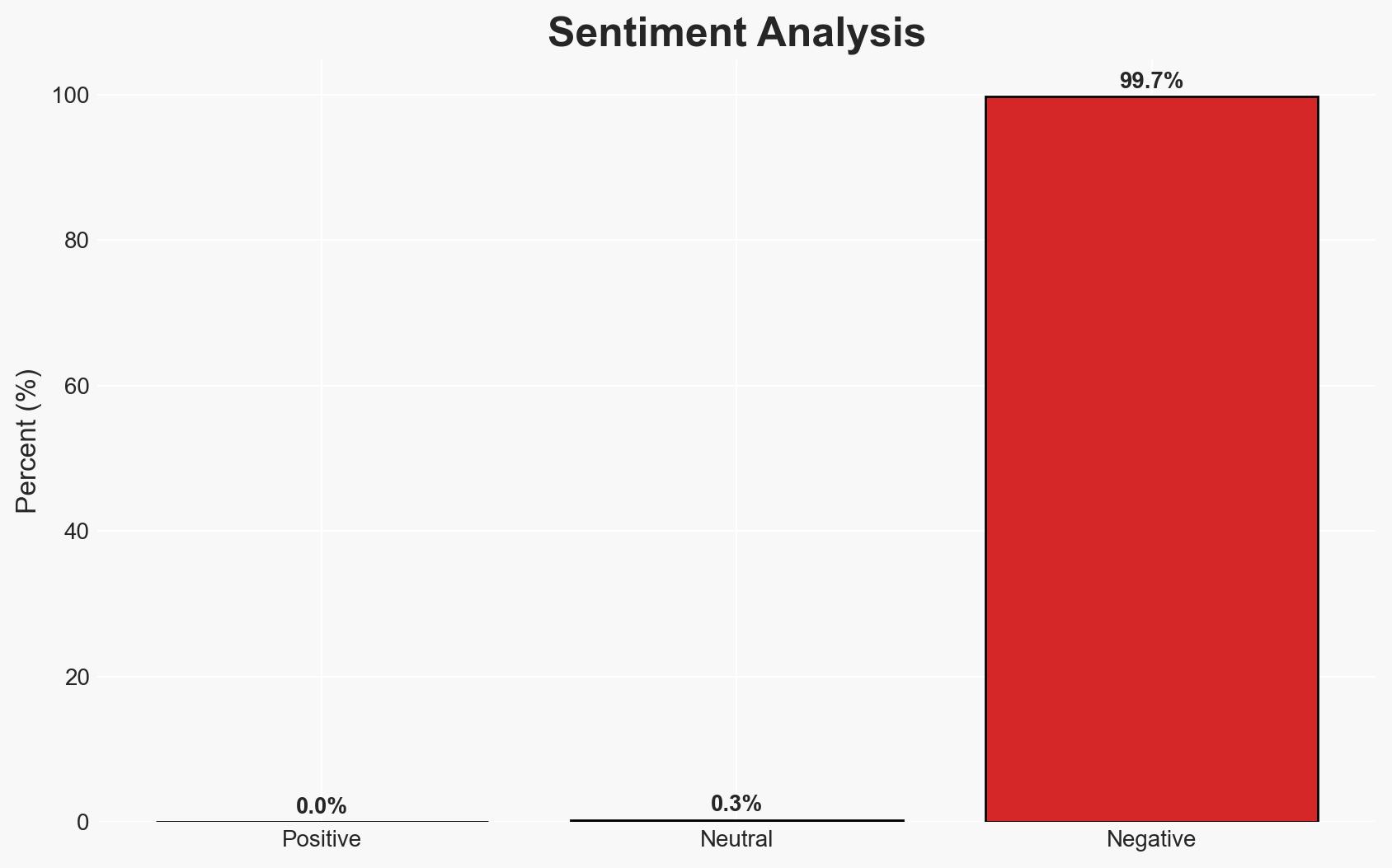

- Bias & Deception Risks: Potential bias in public statements from involved companies; risk of misrepresentation of AI capabilities or strategic intentions; possibility of strategic deception by either party to influence public or governmental perception.

4. Implications and Strategic Risks

This development could reshape the U.S. military’s AI landscape, influencing future AI policy and contractor relationships. It may also affect international perceptions of U.S. AI capabilities and governance.

- Political / Geopolitical: Potential for increased scrutiny or criticism from international allies and adversaries regarding U.S. AI governance and ethical standards.

- Security / Counter-Terrorism: Changes in AI capabilities could alter threat detection and response strategies, impacting operational effectiveness.

- Cyber / Information Space: Transition to new AI models may introduce cybersecurity vulnerabilities or affect information operations.

- Economic / Social: Economic impacts on Anthropic and its partners; potential shifts in AI industry dynamics and innovation incentives.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor implementation of the phase-out; assess OpenAI’s integration into classified systems; evaluate potential security risks.

- Medium-Term Posture (1–12 months): Develop resilience measures to manage transition risks; explore partnerships to diversify AI capabilities; enhance oversight of AI policy compliance.

- Scenario Outlook:

  - Best: Successful integration of OpenAI models enhances military capabilities without security lapses.

  - Worst: Transition issues lead to operational disruptions or security breaches.

  - Most-Likely: Gradual adaptation with minor operational adjustments and policy refinements.

6. Key Individuals and Entities

- Donald Trump – President of the United States

- Anthropic – AI company

- OpenAI – AI company

- Sam Altman – CEO of OpenAI

- Pete Hegseth – Defense Secretary

- Department of War – U.S. government department

7. Thematic Tags

cybersecurity, AI governance, military technology, national security, defense policy, strategic realignment, U.S. government

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
