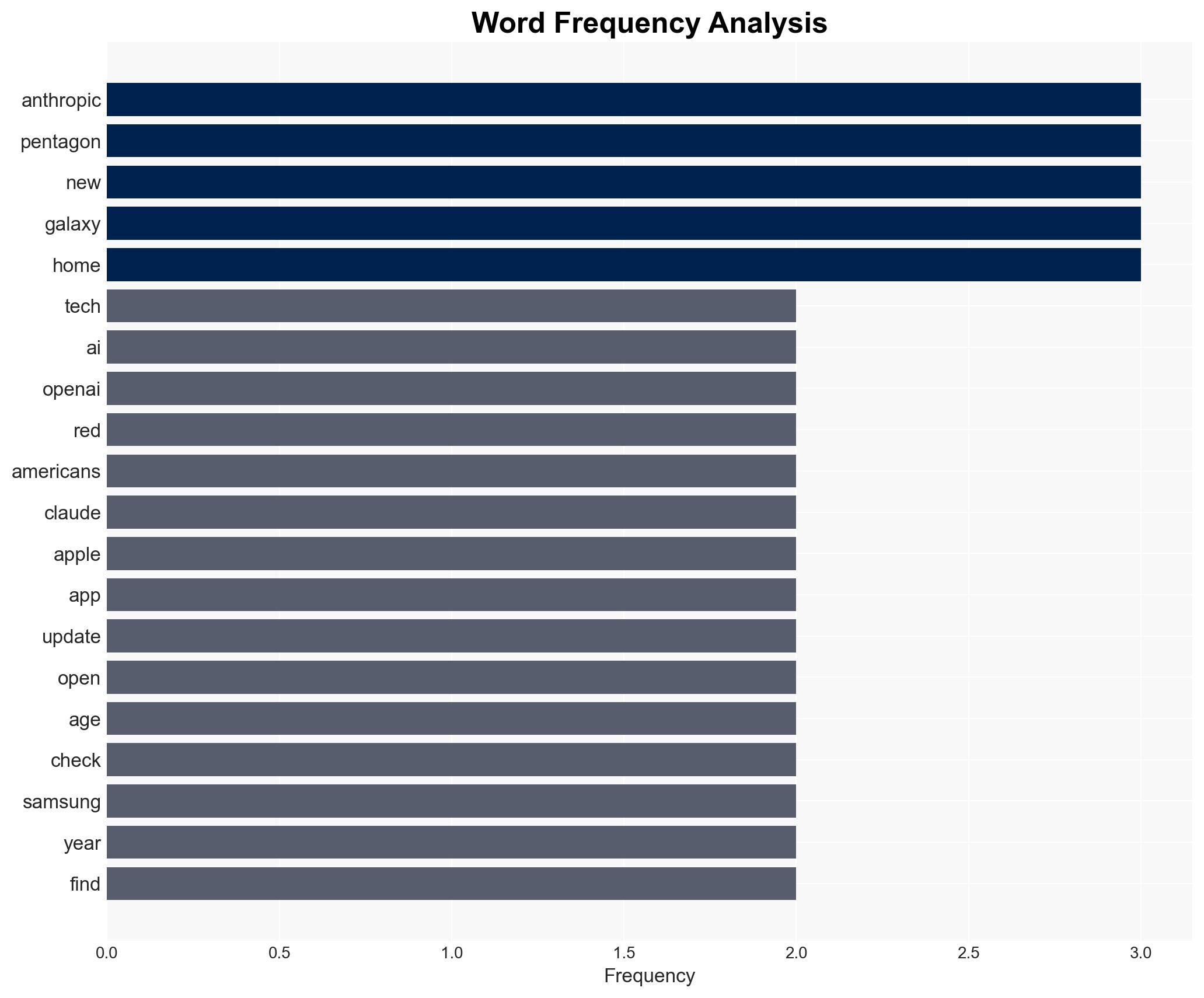

Anthropic Challenges Pentagon on AI Ethics, Sparking Debate Over Surveillance and Military Use

Published on: 2026-03-02

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: TWiT 1073 Broetry in Motion – Anthropic Stands Up to The Pentagon

1. BLUF (Bottom Line Up Front)

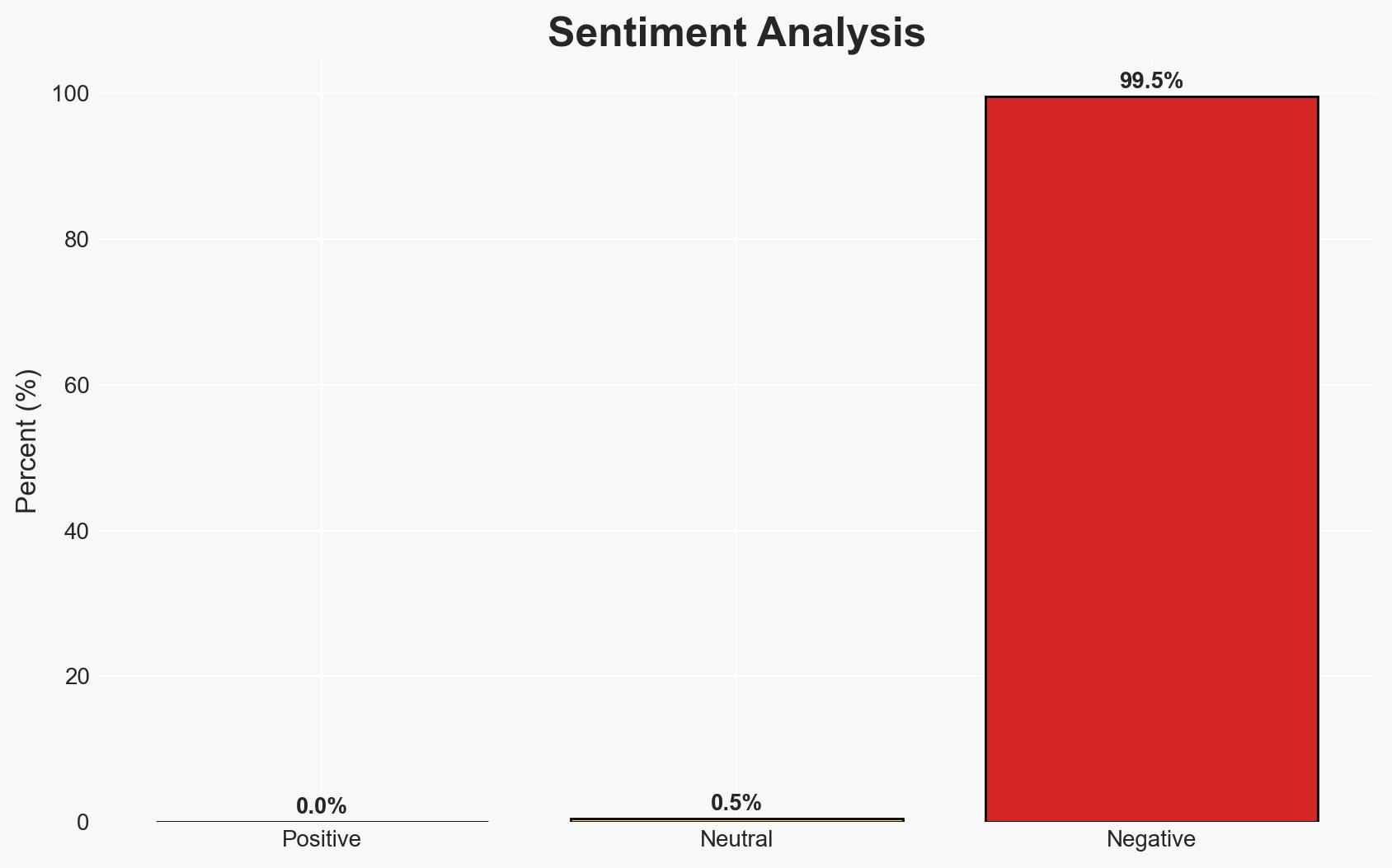

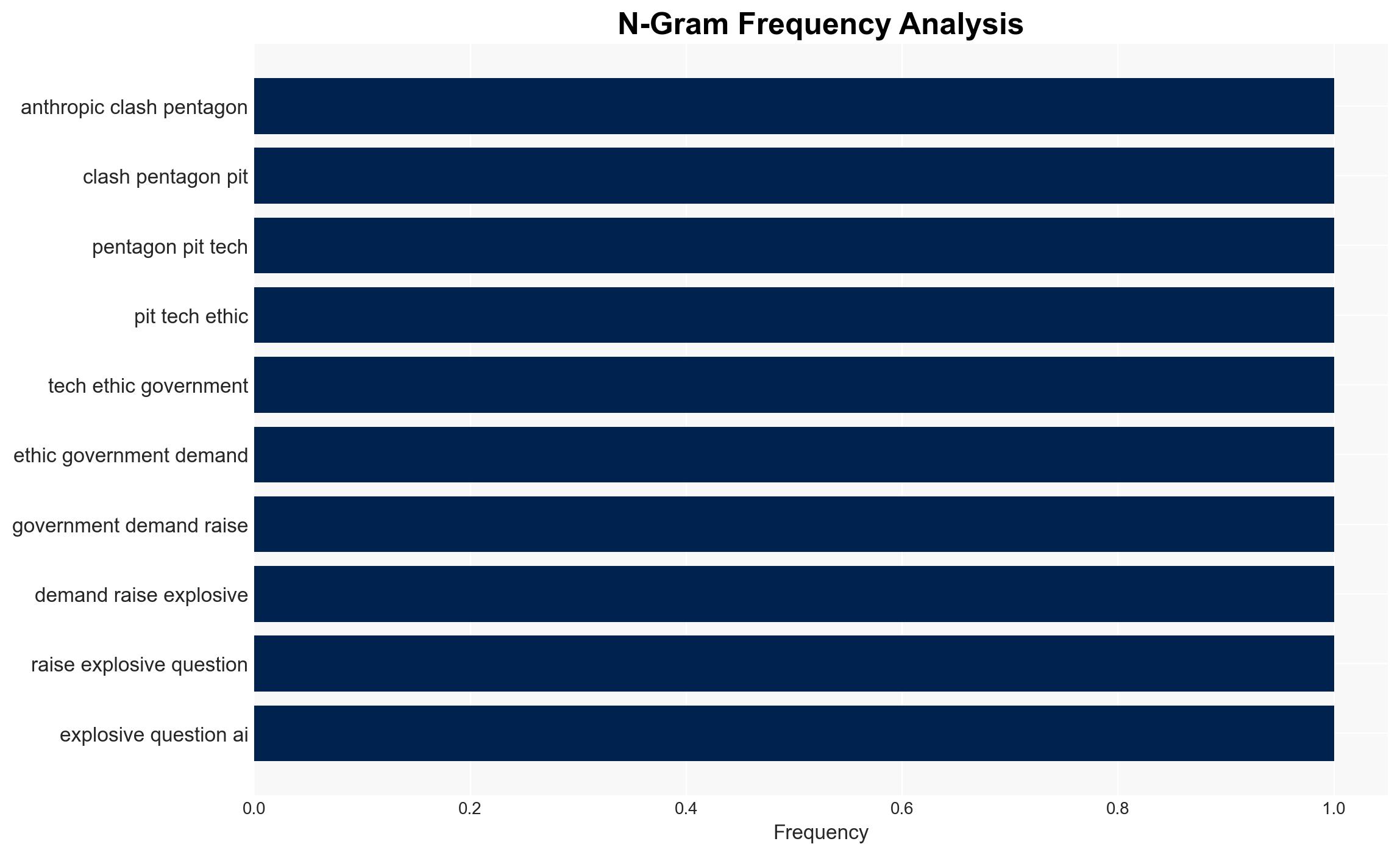

The conflict between Anthropic and the Pentagon highlights the tension between tech ethics and government demands for AI in surveillance and weaponry. It also raises broader questions about who governs and controls AI systems. This development affects tech companies, government agencies, and civil liberties groups. We assess with moderate confidence that Anthropic’s stance may prompt other tech firms to reconsider their engagement with government contracts.

2. Competing Hypotheses

- Hypothesis A: Anthropic’s refusal to cooperate with the Pentagon is primarily driven by ethical concerns over AI’s use in surveillance and weaponry. Supporting evidence includes Anthropic’s public stance on tech ethics and its alignment with OpenAI’s stated red lines. However, internal pressures or alternative motivations remain uncertain.

- Hypothesis B: Anthropic’s actions are a strategic move to differentiate itself in the competitive AI market and capitalize on public sentiment against government surveillance. This is supported by the rise in popularity of Anthropic’s app after the rejection and by the potential market positioning benefits. Against this, there is no direct evidence that market strategy was the primary motivator.

- Assessment: Hypothesis A is currently better supported, given the explicit ethical stance taken by Anthropic and corroborated by OpenAI. Key indicators that could shift this judgment include new evidence of strategic market positioning or changes in Anthropic’s public communications.

3. Key Assumptions and Red Flags

- Assumptions: Anthropic’s public statements reflect its genuine motivations; OpenAI’s alignment with Anthropic is consistent and not opportunistic; the Pentagon’s demands are primarily for surveillance and weaponry applications.

- Information Gaps: The specific nature of the Pentagon’s demands, and any internal Anthropic communications about its decision-making process, are not known.

- Bias & Deception Risks: Reliance on public statements may bias the interpretation of Anthropic’s motivations; there is a risk of deception if Anthropic’s public stance is a strategic facade.

4. Implications and Strategic Risks

This development could lead to increased scrutiny of AI ethics and government contracts, potentially influencing policy and corporate strategies. Over time, this may change how AI is developed and deployed.

- Political / Geopolitical: Potential for increased regulatory focus on AI ethics and government oversight, affecting international tech policy discussions.

- Security / Counter-Terrorism: Possible shifts in government reliance on AI for surveillance, impacting operational capabilities and threat assessments.

- Cyber / Information Space: Heightened discourse on AI ethics could influence public opinion and digital narratives around surveillance and privacy.

- Economic / Social: Market dynamics may shift as companies reassess their ethical stances, impacting investor confidence and consumer trust.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor Anthropic’s public statements and any shifts in AI policy discussions; engage with tech firms to understand their ethical frameworks.

- Medium-Term Posture (1–12 months): Develop resilience measures for potential regulatory changes; foster partnerships with ethical AI advocacy groups.

- Scenario Outlook: Best: Ethical AI frameworks gain traction, leading to responsible AI development. Worst: Increased polarization between tech firms and government agencies. Most-Likely: Gradual policy adjustments with ongoing public debate.

6. Key Individuals and Entities

- Anthropic

- Pentagon

- OpenAI

- Sam Altman

- NSA

- Apple

7. Thematic Tags

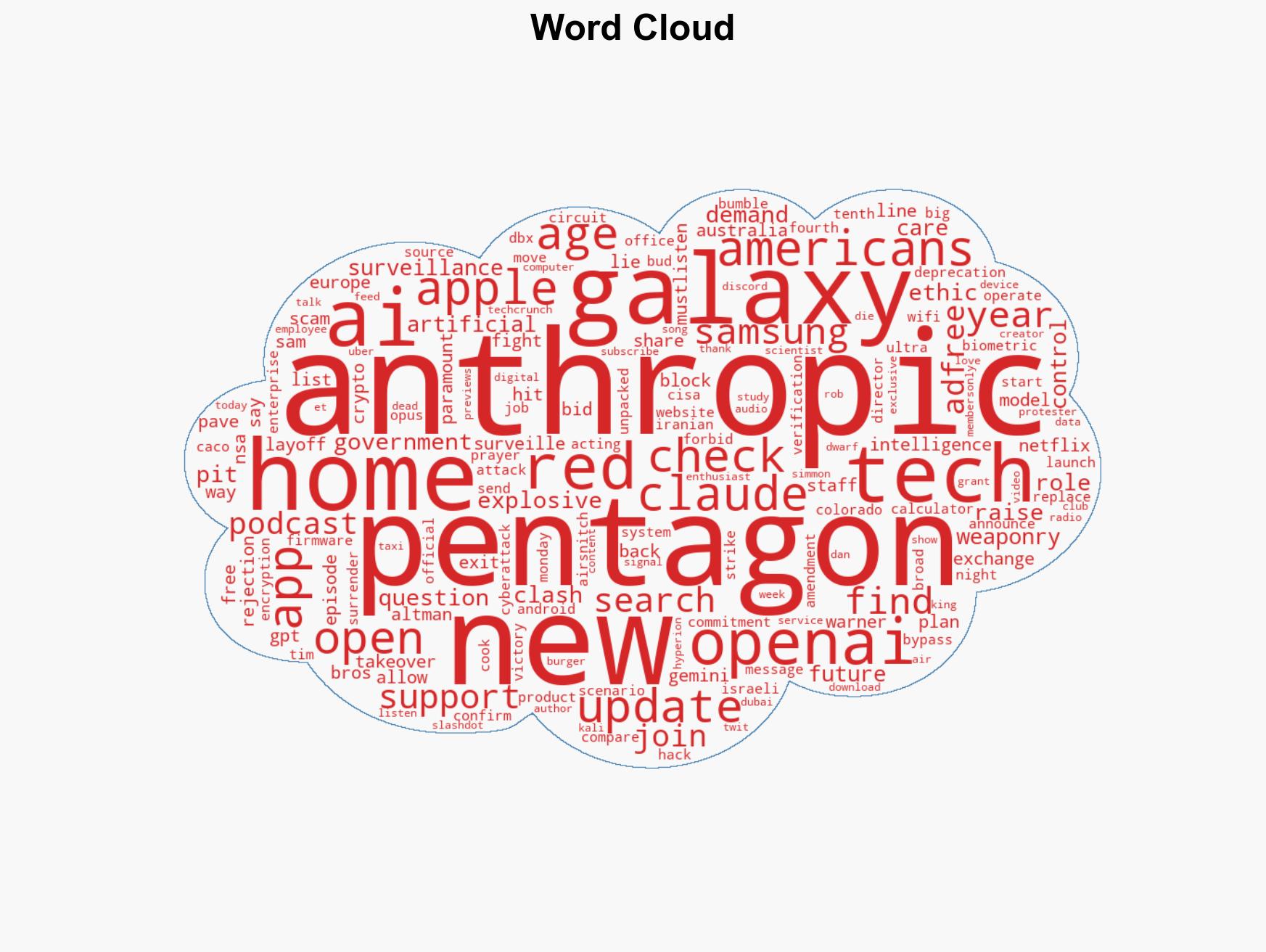

cybersecurity, AI ethics, government surveillance, tech industry, public policy, market strategy, civil liberties, regulatory dynamics

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Forecast futures under uncertainty via probabilistic logic.
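As a rough illustration of how probabilistic weighing of the two competing hypotheses might work, the sketch below applies Bayes’ rule to Hypothesis A and Hypothesis B. Every number in it (the priors and the likelihoods attached to each piece of evidence) is a hypothetical placeholder chosen for illustration, not a value taken from this report, and the function name `bayes_update` is likewise an assumption rather than the report’s actual tooling.

```python
def bayes_update(priors, likelihoods):
    """Return posteriors given priors and P(evidence | hypothesis) for each hypothesis."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: value / total for h, value in unnormalized.items()}


# Hypothetical starting point: no initial preference between the hypotheses.
priors = {"A": 0.5, "B": 0.5}

# Hypothetical likelihoods: how probable each observation is under A and under B.
evidence = [
    ("explicit public ethical stance", {"A": 0.8, "B": 0.5}),
    ("app popularity rose after the rejection", {"A": 0.5, "B": 0.6}),
]

# Update sequentially, treating the observations as conditionally independent.
posterior = dict(priors)
for label, likelihoods in evidence:
    posterior = bayes_update(posterior, likelihoods)

for hypothesis, probability in sorted(posterior.items()):
    print(f"Hypothesis {hypothesis}: {probability:.2f}")
```

With these placeholder inputs Hypothesis A ends up modestly ahead, and new evidence of strategic market positioning would be added as another entry in `evidence` whose likelihood favors B. The result is only as meaningful as the likelihoods an analyst is willing to defend.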
