High-severity vulnerability in Chrome’s Gemini AI allows malicious extensions to compromise user privacy

Published on: 2026-03-02

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: This high-severity Chrome Gemini vulnerability lets malicious extensions spy on your PC

1. BLUF (Bottom Line Up Front)

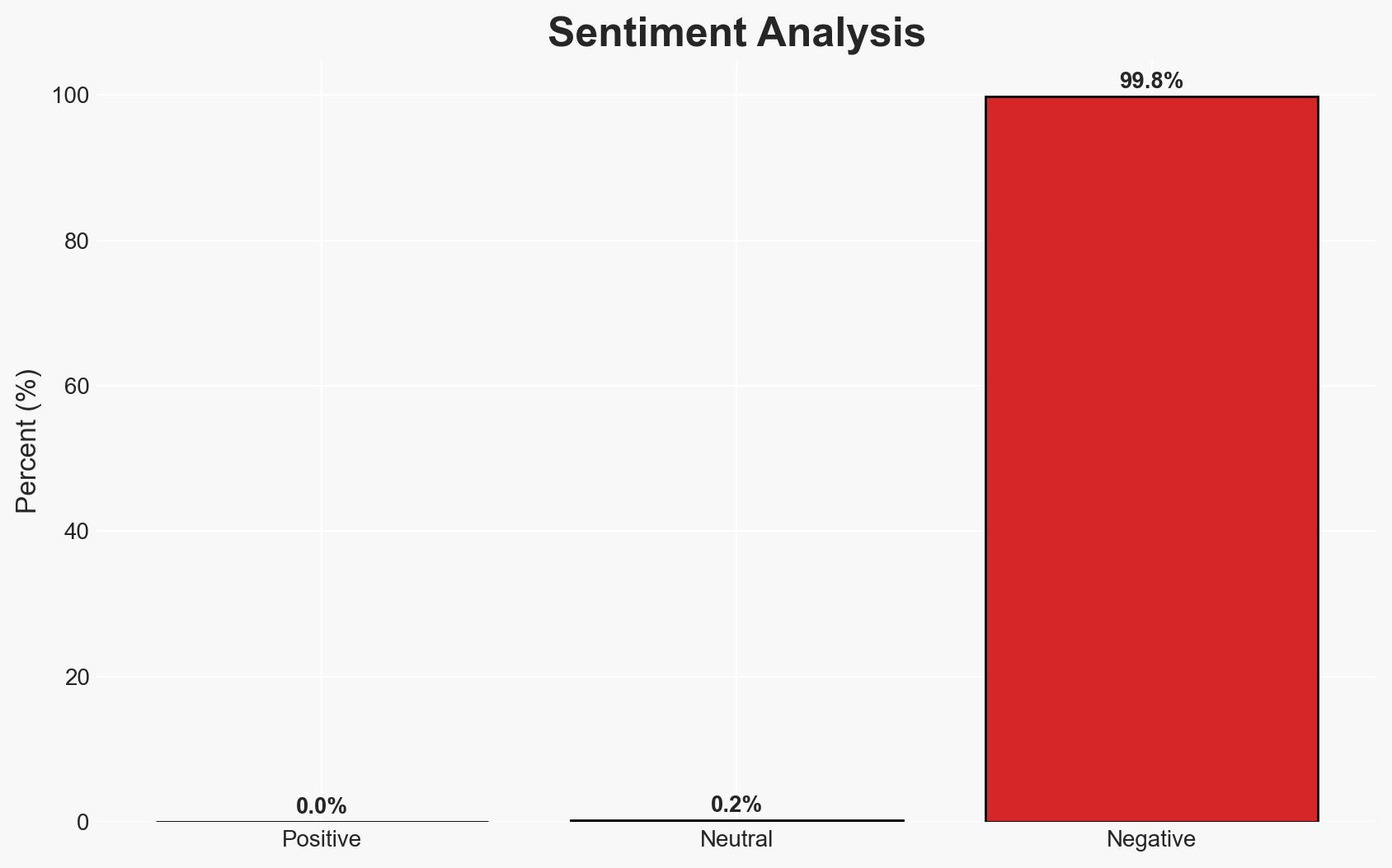

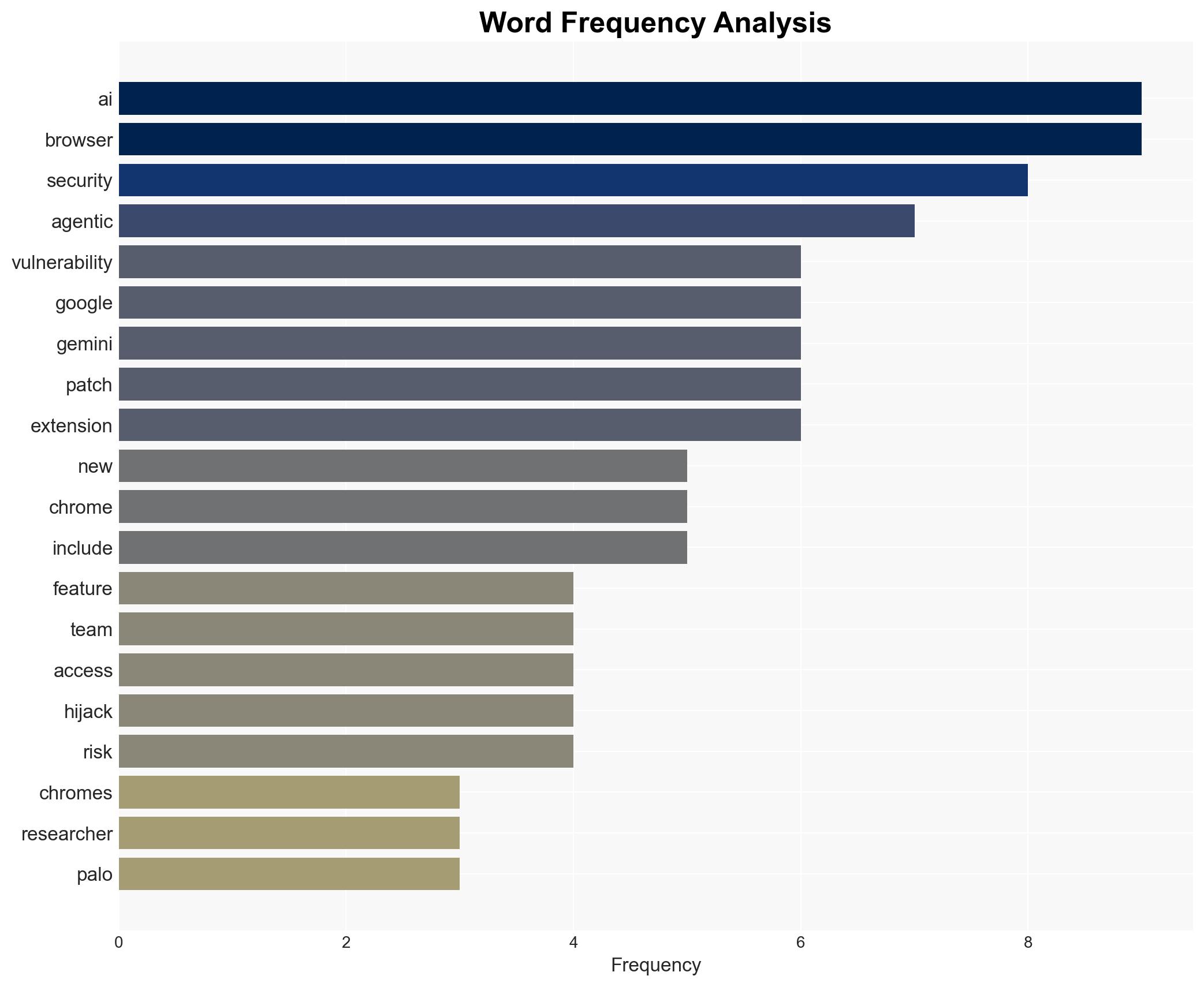

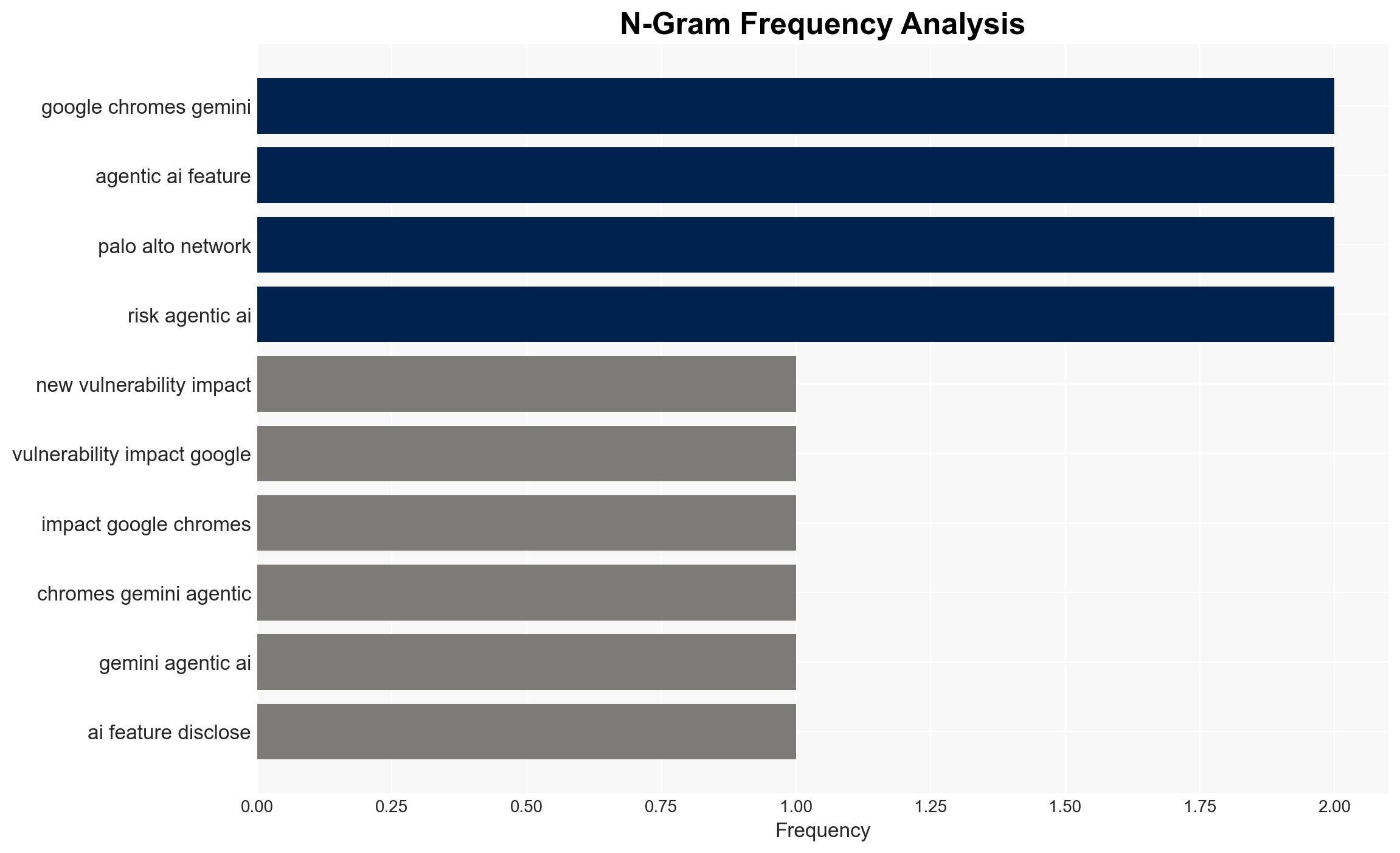

A recently disclosed high-severity vulnerability in Google Chrome’s Gemini AI feature allows malicious extensions to hijack the AI assistant, posing a significant risk to user privacy and system security. Users who have not updated to the latest browser version remain exposed. The better-supported hypothesis is that this is a standalone flaw that a browser update mitigates, although it could still be folded into a broader attack chain. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

- Hypothesis A: The vulnerability is primarily a standalone security flaw that can be mitigated by updating the browser. Supporting evidence includes the availability of a patch and the specific nature of the vulnerability. Key uncertainties include the speed and extent of user adoption of the patch.

- Hypothesis B: The vulnerability is part of a larger, coordinated cyber threat campaign targeting Chrome users. This is supported by the potential for integration into broader attack chains and the high-severity rating. Contradicting evidence includes the lack of specific reports of coordinated attacks exploiting this vulnerability.

- Assessment: Hypothesis A is currently better supported due to the immediate availability of a patch and the specific technical nature of the vulnerability. Indicators that could shift this judgment include reports of coordinated exploitation or evidence of widespread attacks leveraging this vulnerability.
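
The way new indicators could shift the judgment between the two hypotheses can be made explicit with a simple Bayes update. The sketch below is illustrative only: it assumes A and B are the only two hypotheses and are mutually exclusive, and every probability in it is a placeholder chosen for the example, not an estimate drawn from the source reporting. The function name `update_probability` is hypothetical.

```python
def update_probability(prior_a: float,
                       p_evidence_given_a: float,
                       p_evidence_given_b: float) -> float:
    """Return P(A | evidence) under a two-hypothesis model.

    Assumes Hypothesis A and Hypothesis B are exhaustive and mutually
    exclusive, so P(B) = 1 - P(A). All inputs are analyst-supplied.
    """
    for value in (prior_a, p_evidence_given_a, p_evidence_given_b):
        if not 0.0 <= value <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")

    prior_b = 1.0 - prior_a
    numerator = prior_a * p_evidence_given_a
    denominator = numerator + prior_b * p_evidence_given_b
    if denominator == 0.0:
        raise ValueError("evidence is impossible under both hypotheses")
    return numerator / denominator


if __name__ == "__main__":
    # Placeholder values, NOT taken from the report: a 0.7 prior for A, and
    # an indicator (e.g. a report of coordinated exploitation) judged six
    # times likelier under B than under A.
    posterior_a = update_probability(0.7, 0.1, 0.6)
    print(f"P(A | indicator) = {posterior_a:.2f}")
```

With these placeholder inputs, a single strong indicator of coordinated exploitation moves the probability of Hypothesis A from 0.70 to about 0.28, which shows why such reports are listed as the key indicators to watch.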

3. Key Assumptions and Red Flags

- Assumptions: Users will update their browsers promptly; the patch effectively mitigates the vulnerability; malicious actors have not yet widely exploited the vulnerability; Google will continue to monitor and respond to related threats.

- Information Gaps: Details on the extent of exploitation in the wild; user update rates; potential for similar vulnerabilities in other browser components.

- Bias & Deception Risks: Potential bias in underestimating the capability of threat actors to exploit the vulnerability; reliance on vendor-provided information without independent verification.

4. Implications and Strategic Risks

This vulnerability could lead to increased scrutiny of AI-driven browser features and influence future cybersecurity strategies. If exploited, it may undermine trust in agentic AI technologies.

- Political / Geopolitical: Potential for increased regulatory scrutiny on AI technologies and privacy standards.

- Security / Counter-Terrorism: Could be leveraged by state or non-state actors to conduct espionage or disrupt communications.

- Cyber / Information Space: Highlights the evolving threat landscape of AI integration in consumer software, increasing the attack surface.

- Economic / Social: May affect user trust in technology companies, impacting market dynamics and consumer behavior.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Encourage prompt browser updates; monitor for signs of exploitation; increase awareness among users and IT professionals.

- Medium-Term Posture (1–12 months): Develop partnerships for information sharing on AI vulnerabilities; enhance AI security protocols and user education programs.

- Scenario Outlook: Best: Vulnerability is patched with minimal exploitation. Worst: Widespread exploitation leads to significant data breaches. Most-Likely: Limited exploitation with gradual user adoption of patches.
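
For the immediate action of encouraging and verifying browser updates, an IT team could flag hosts whose Chrome version is below the patched release. The following is a minimal sketch under stated assumptions: the patched version is not given in this brief, so `minimum_patched` must be supplied by the reader from the vendor advisory, and the inventory mapping, host names, and version strings shown are hypothetical placeholders.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Split a dotted version string into integers for numeric comparison."""
    return tuple(int(part) for part in version.strip().split("."))


def needs_update(installed: str, minimum_patched: str) -> bool:
    """True if the installed version is older than the patched version."""
    return parse_version(installed) < parse_version(minimum_patched)


def outdated_hosts(inventory: dict[str, str], minimum_patched: str) -> list[str]:
    """Return the sorted names of hosts still running an unpatched version."""
    return sorted(
        host
        for host, version in inventory.items()
        if needs_update(version, minimum_patched)
    )


if __name__ == "__main__":
    # Hypothetical inventory and placeholder versions; replace the second
    # argument with the patched version stated in the vendor advisory.
    inventory = {
        "laptop-01": "1.0.0.9",
        "laptop-02": "1.0.0.10",
        "desktop-07": "0.9.5.120",
    }
    print(outdated_hosts(inventory, "1.0.0.10"))
```

Comparing versions as integer tuples rather than as strings avoids misordering components such as `9` and `10`, which a plain string comparison would sort incorrectly.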

6. Key Individuals and Entities

- Gal Weizman, Senior Principal Security Researcher, Palo Alto Networks’ Unit 42

- Google Chrome Security Team


7. Thematic Tags

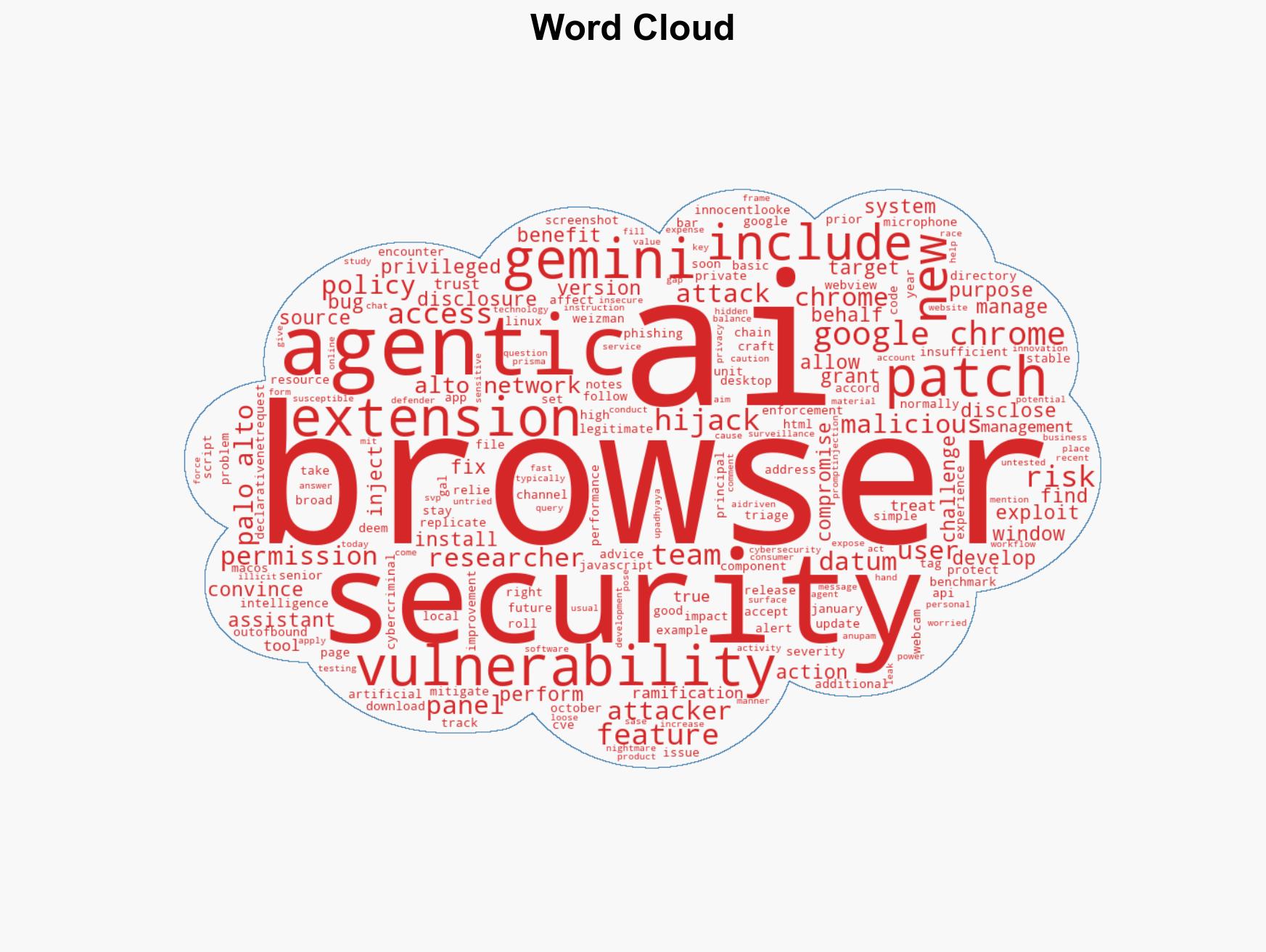

cybersecurity, AI vulnerabilities, browser security, cyber-espionage, information security, software patching, user privacy

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
