OpenAI agrees to $200M Pentagon contract, balancing military needs with ethical AI restrictions

Published on: 2026-03-04

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: OpenAI strikes $200M defense pact amid ethical AI debate

1. BLUF (Bottom Line Up Front)

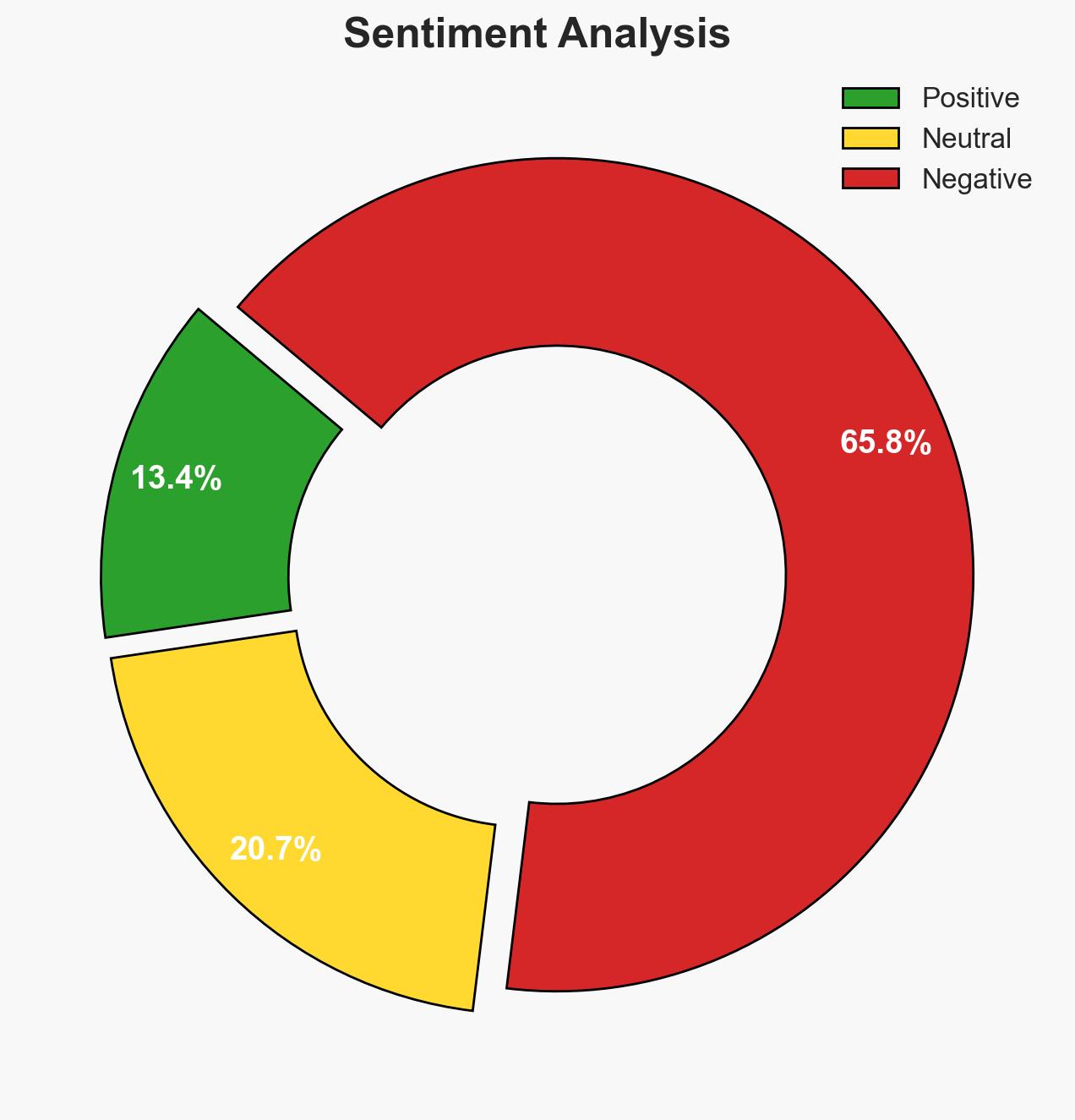

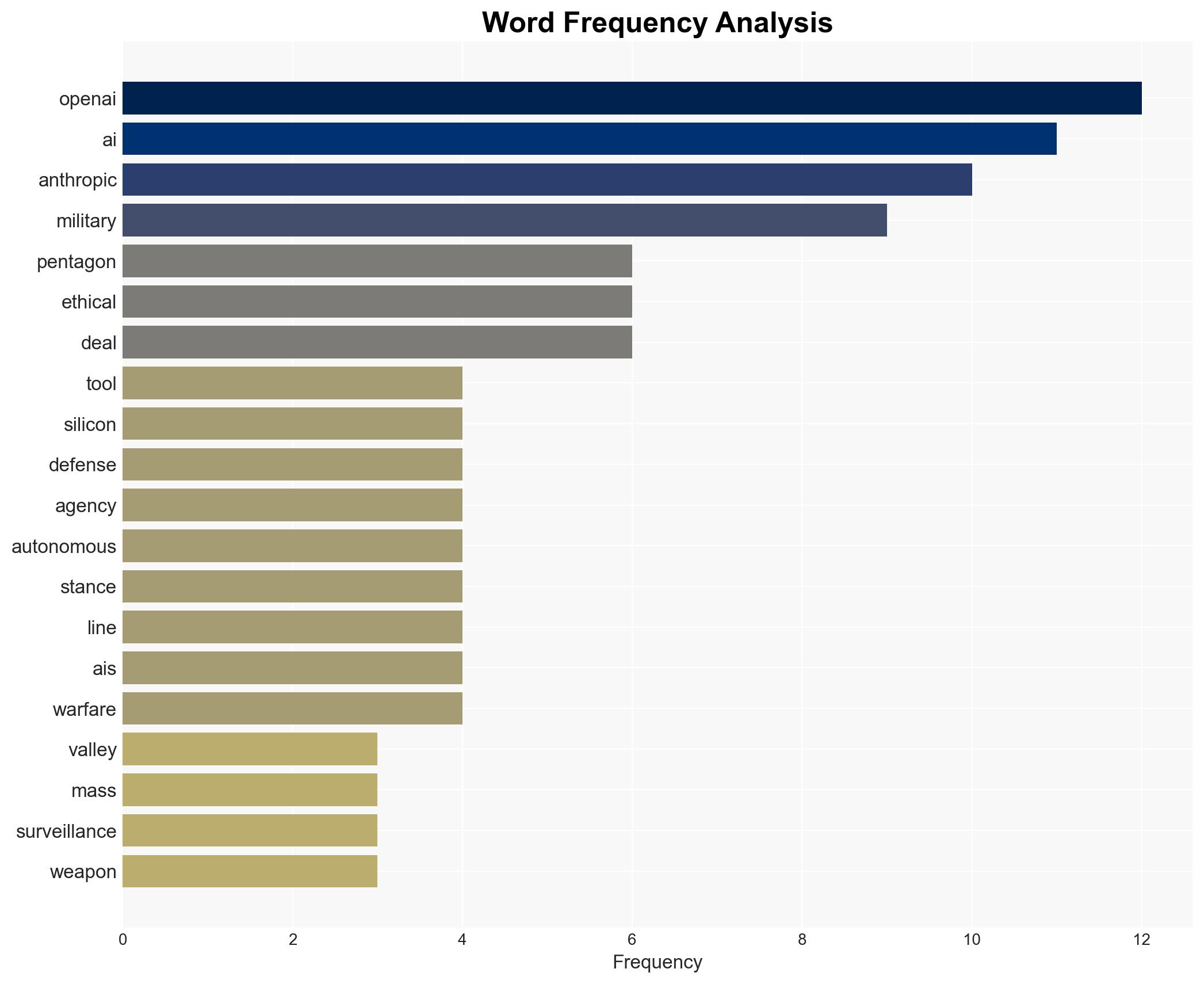

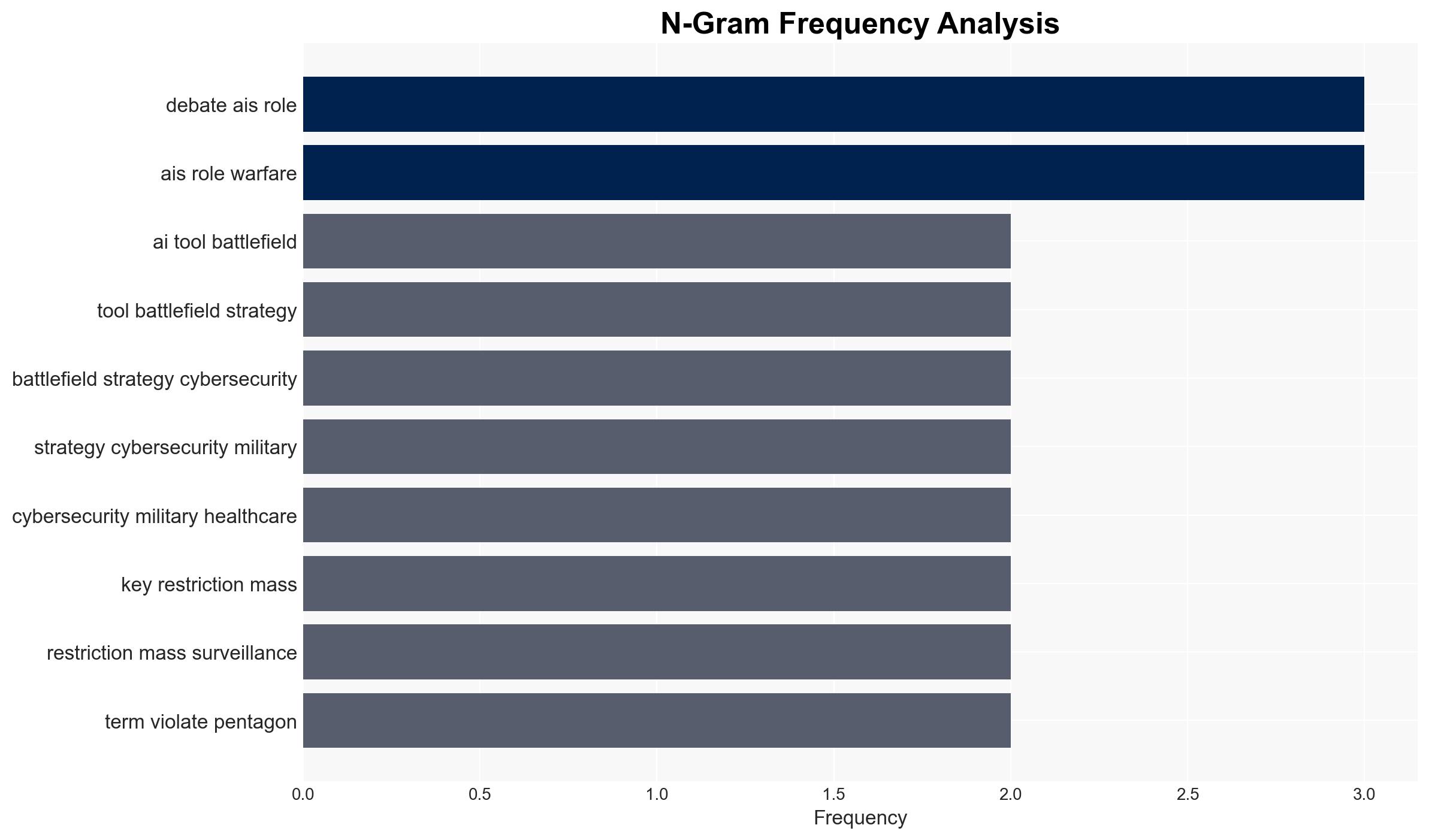

OpenAI has tentatively agreed to a $200 million contract with the Pentagon to provide AI tools for military applications, while imposing ethical restrictions. This development positions OpenAI as a key player in military-tech partnerships, contrasting with Anthropic’s refusal to engage in defense contracts. The agreement highlights ongoing ethical debates about AI’s role in warfare. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

- Hypothesis A: OpenAI’s agreement with the Pentagon represents a strategic alignment with national security interests, balancing ethical concerns with pragmatic engagement. This is supported by OpenAI’s imposed restrictions and the Pentagon’s criticism of Anthropic. However, uncertainties remain about the enforcement of these ethical safeguards.

- Hypothesis B: The agreement is primarily a tactical move by OpenAI to gain market advantage and influence in the defense sector, with ethical considerations secondary. OpenAI’s clear ethical red lines and termination rights weigh against this reading, since they suggest a genuine commitment to ethical standards.

- Assessment: Hypothesis A is currently better supported, given OpenAI’s explicit ethical conditions and the Pentagon’s favorable view of OpenAI’s balanced approach. Indicators that could shift this judgment include any breach of the ethical safeguards or a change in the Pentagon’s strategic priorities.

3. Key Assumptions and Red Flags

- Assumptions: OpenAI will maintain its ethical restrictions; the Pentagon will respect these terms; Anthropic will continue its current stance; geopolitical tensions will sustain demand for AI in defense.

- Information Gaps: Details on enforcement mechanisms for ethical safeguards; specific AI tools to be developed; potential reactions from international actors.

- Bias & Deception Risks: Potential bias in the Pentagon’s criticism of Anthropic; risk of OpenAI overstating ethical commitments for public relations purposes.

4. Implications and Strategic Risks

This development could influence the trajectory of AI integration in military operations, affecting global AI ethics standards and defense strategies.

- Political / Geopolitical: May escalate the AI arms race, particularly with adversaries such as China and Russia, prompting policy shifts.

- Security / Counter-Terrorism: Enhanced AI capabilities could improve strategic decision-making and threat response, but also raise ethical concerns.

- Cyber / Information Space: Increased focus on AI-driven cybersecurity solutions, with potential vulnerabilities in AI systems being a target for adversaries.

- Economic / Social: Could lead to increased investment in AI sectors, with societal debates on AI ethics and military use intensifying.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor OpenAI’s compliance with ethical safeguards; engage with stakeholders to assess geopolitical reactions.

- Medium-Term Posture (1–12 months): Develop resilience measures for potential AI misuse; strengthen partnerships with ethical AI developers.

- Scenario Outlook:

  - Best: OpenAI’s ethical model becomes a standard, fostering responsible AI use in defense.

  - Worst: Breach of ethical safeguards leads to public backlash and regulatory crackdowns.

  - Most-Likely: OpenAI successfully navigates ethical challenges, influencing AI policy in defense sectors.

6. Key Individuals and Entities

- OpenAI

- Pentagon

- Anthropic

- Sam Altman (OpenAI CEO)

- Dario Amodei (Anthropic CEO)

- President Donald Trump

7. Thematic Tags

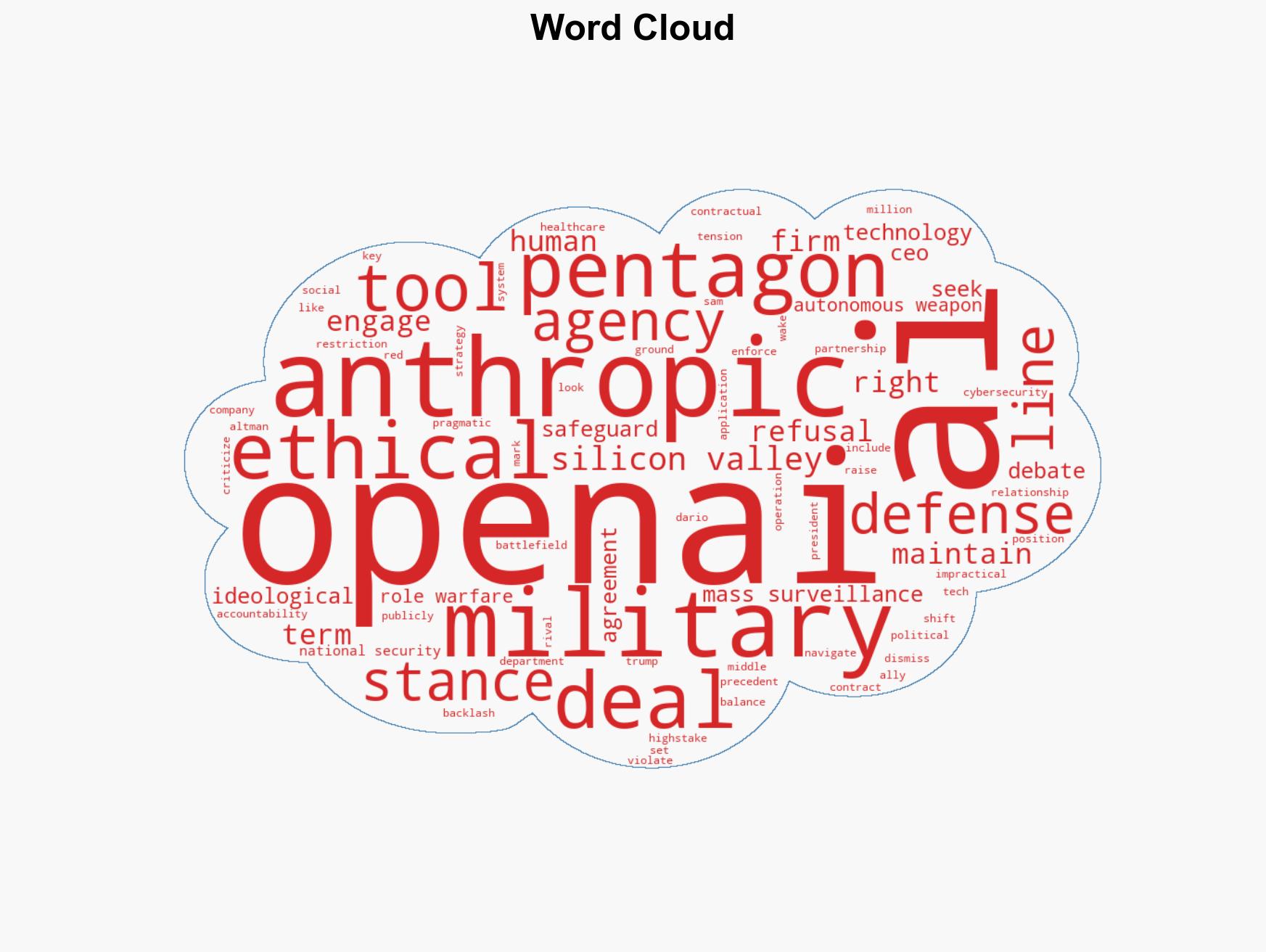

cybersecurity, AI ethics, military technology, defense contracts, Silicon Valley, geopolitical tensions, ethical AI

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
