Pentagon classifies Anthropic as a national security threat, halting its military contracts and AI services.

Published on: 2026-03-06

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Anthropic says that the Pentagon has declared it a national security risk

1. BLUF (Bottom Line Up Front)

According to Anthropic, the Pentagon has designated the company a national security risk, barring its AI services from defense operations. The decision could significantly affect the U.S. AI industry and U.S. defense capabilities. The most likely explanation is an unresolved dispute over safeguards against military uses of Anthropic's AI, particularly in lethal autonomous weapons and mass surveillance. Overall confidence in this judgment is moderate.

2. Competing Hypotheses

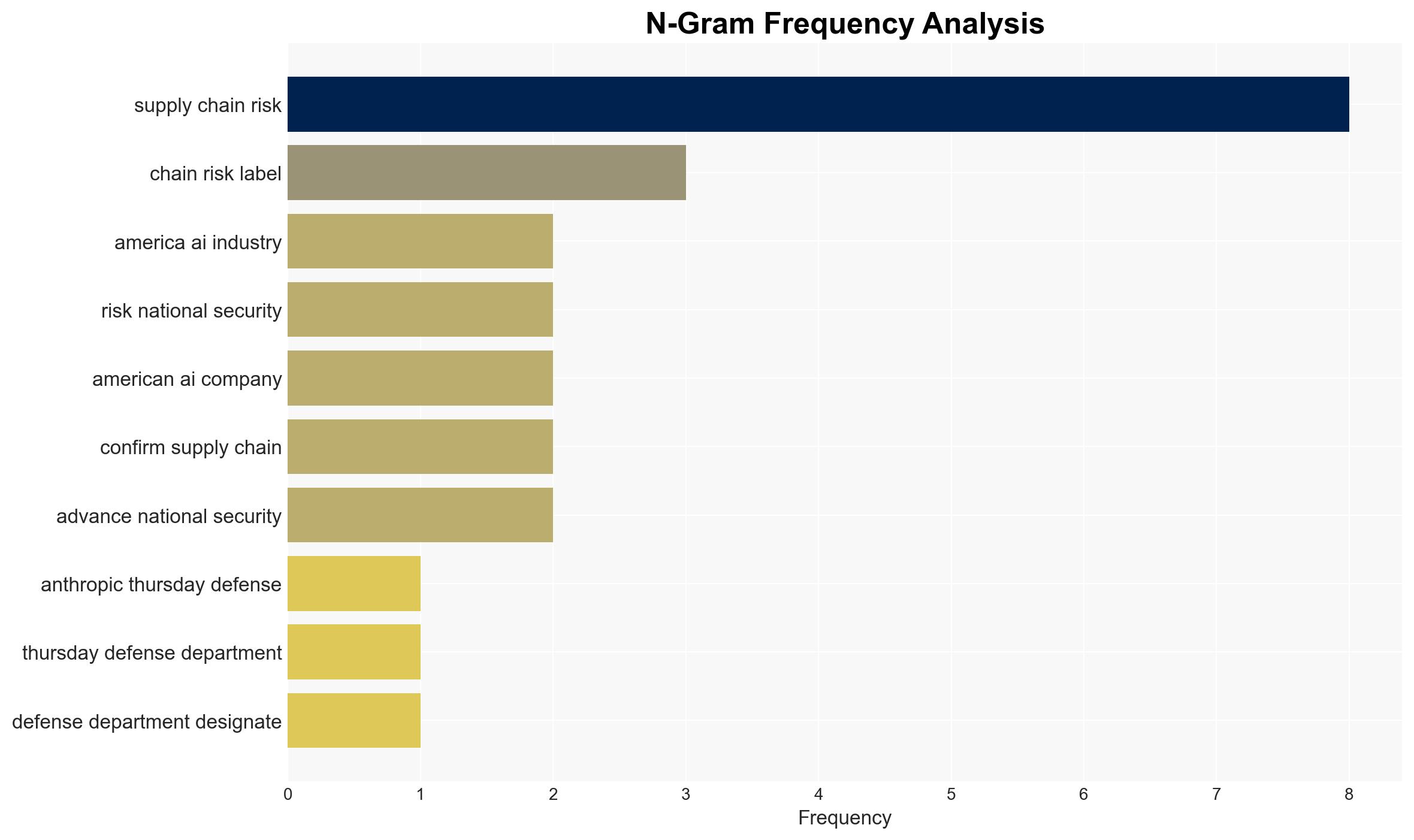

- Hypothesis A: The Pentagon’s designation of Anthropic as a national security risk is primarily due to unresolved concerns about the use of its AI systems in lethal autonomous weapons and mass surveillance. Supporting evidence includes Anthropic’s insistence on stronger guarantees against such uses, which may have led to a breakdown in negotiations. However, the exact nature of the perceived supply chain risk is unclear.

- Hypothesis B: The designation is a strategic move to favor other AI companies, such as OpenAI and xAI, which recently secured agreements with the Pentagon. The timing supports this hypothesis: those agreements followed the designation. Contradicting evidence includes Anthropic's extensive existing contracts with tech companies that also work with the Pentagon, which would make the designation a costly and disruptive way to favor competitors.

- Assessment: Hypothesis A is currently better supported, because Anthropic has explicitly raised concerns about how its AI technology would be used. Key indicators that could shift this judgment include further details on the supply chain risk or changes in Pentagon policy toward AI use.

3. Key Assumptions and Red Flags

- Assumptions: The Pentagon’s decision is based on genuine security concerns; Anthropic’s AI capabilities are critical to defense operations; Other AI companies can adequately replace Anthropic’s services.

- Information Gaps: Specific details of the supply chain risk; Internal Pentagon deliberations on AI use; Potential influence of external actors on the decision.

- Bias & Deception Risks: Potential bias in the Pentagon's assessment favoring certain AI providers; Risk that Anthropic overstates its alignment with national security interests to limit the business impact.

4. Implications and Strategic Risks

This development could shift defense AI business toward competing providers such as OpenAI and xAI and affect U.S. military AI capabilities during the transition. It may also influence international perceptions of U.S. AI policy and partnerships.

- Political / Geopolitical: Potential strain on U.S. relations with allies relying on Anthropic’s technology; Influence on global AI governance discussions.

- Security / Counter-Terrorism: Possible gaps in AI capabilities for defense operations; Increased reliance on other AI providers may introduce new vulnerabilities.

- Cyber / Information Space: Risk of cyber espionage or information leaks during transition to new AI systems; Potential for misinformation campaigns exploiting the situation.

- Economic / Social: Disruption in the AI market; Potential job losses or shifts within the tech industry; Public debate on ethical AI use in defense.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor legal proceedings and public statements from Anthropic and the Pentagon; Assess potential impacts on current defense operations.

- Medium-Term Posture (1–12 months): Develop contingency plans for AI capability gaps; Strengthen partnerships with alternative AI providers; Engage in dialogue on ethical AI use in defense.

- Scenario Outlook: Best: Resolution of legal challenges and reinstatement of Anthropic’s services; Worst: Prolonged legal battle and significant capability gaps; Most-Likely: Gradual transition to alternative AI providers with some operational adjustments.

6. Key Individuals and Entities

- Anthropic

- Defense Department

- Pete Hegseth (Defense Secretary)

- Dario Amodei (Anthropic CEO)

- OpenAI

- Sam Altman (OpenAI CEO)

- xAI

- Elon Musk (xAI founder)

7. Thematic Tags

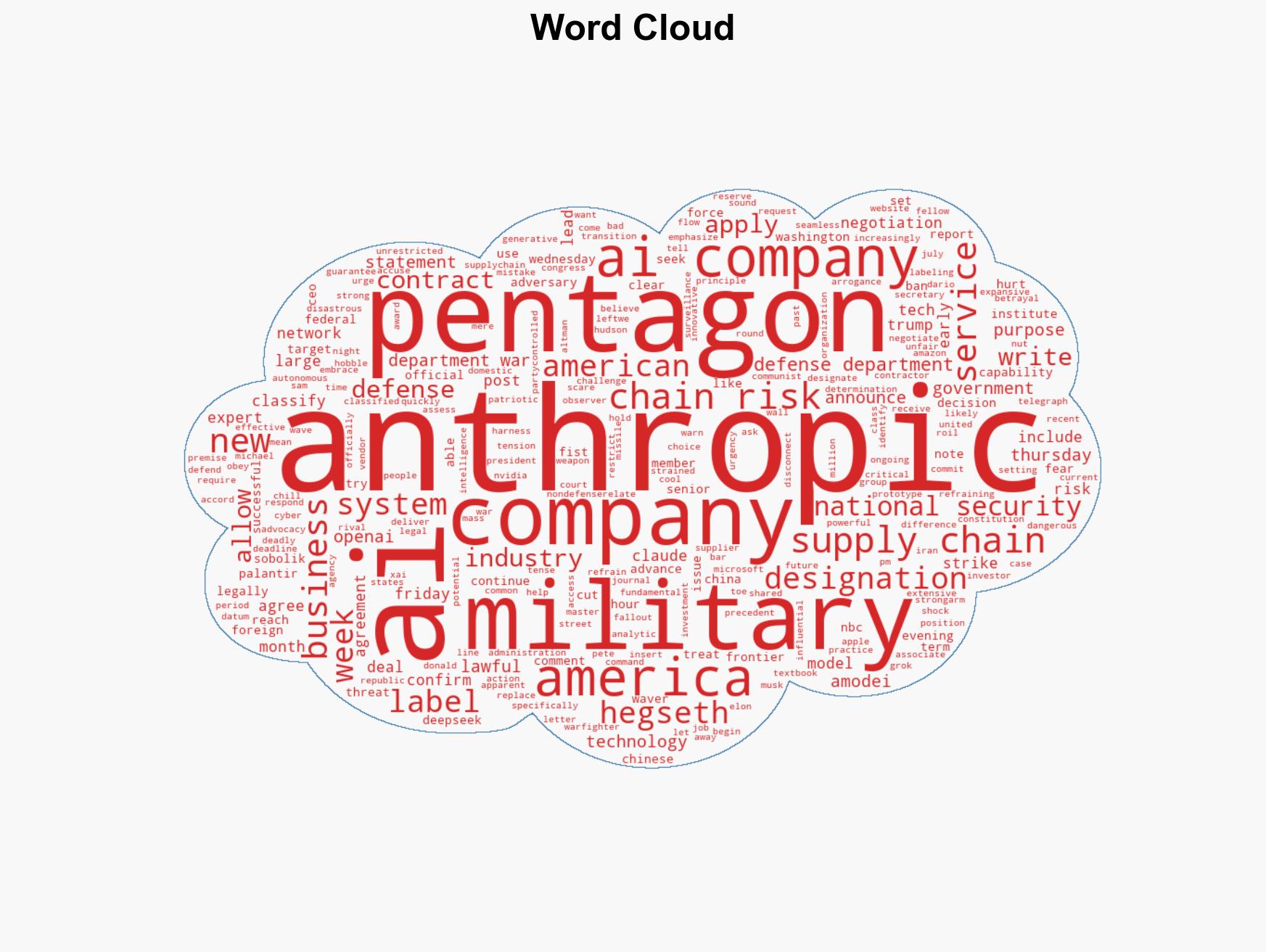

national security threats, national security, AI ethics, defense policy, supply chain risk, military technology, AI industry, legal challenges

Structured Analytic Techniques Applied

- Cognitive Bias Stress Test: Expose and correct potential biases in assessments through red-teaming and structured challenge.

- Bayesian Scenario Modeling: Use probabilistic forecasting for conflict trajectories or escalation likelihood.

- Network Influence Mapping: Map influence relationships to assess actor impact.
