Anthropic takes legal action against US government over unprecedented national security classification

Published on: 2026-03-06

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Anthropic sues US government after unprecedented national security designation

1. BLUF (Bottom Line Up Front)

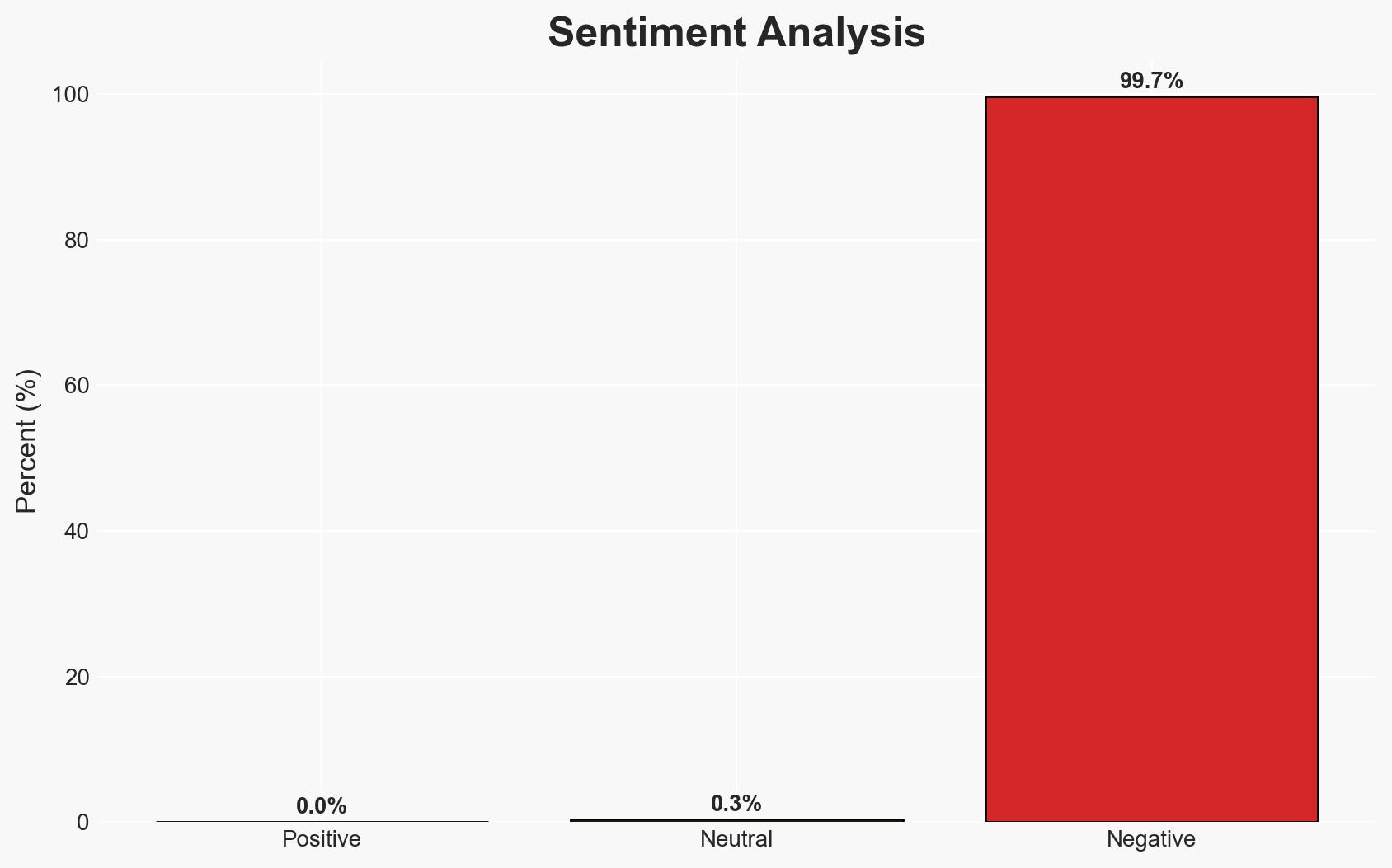

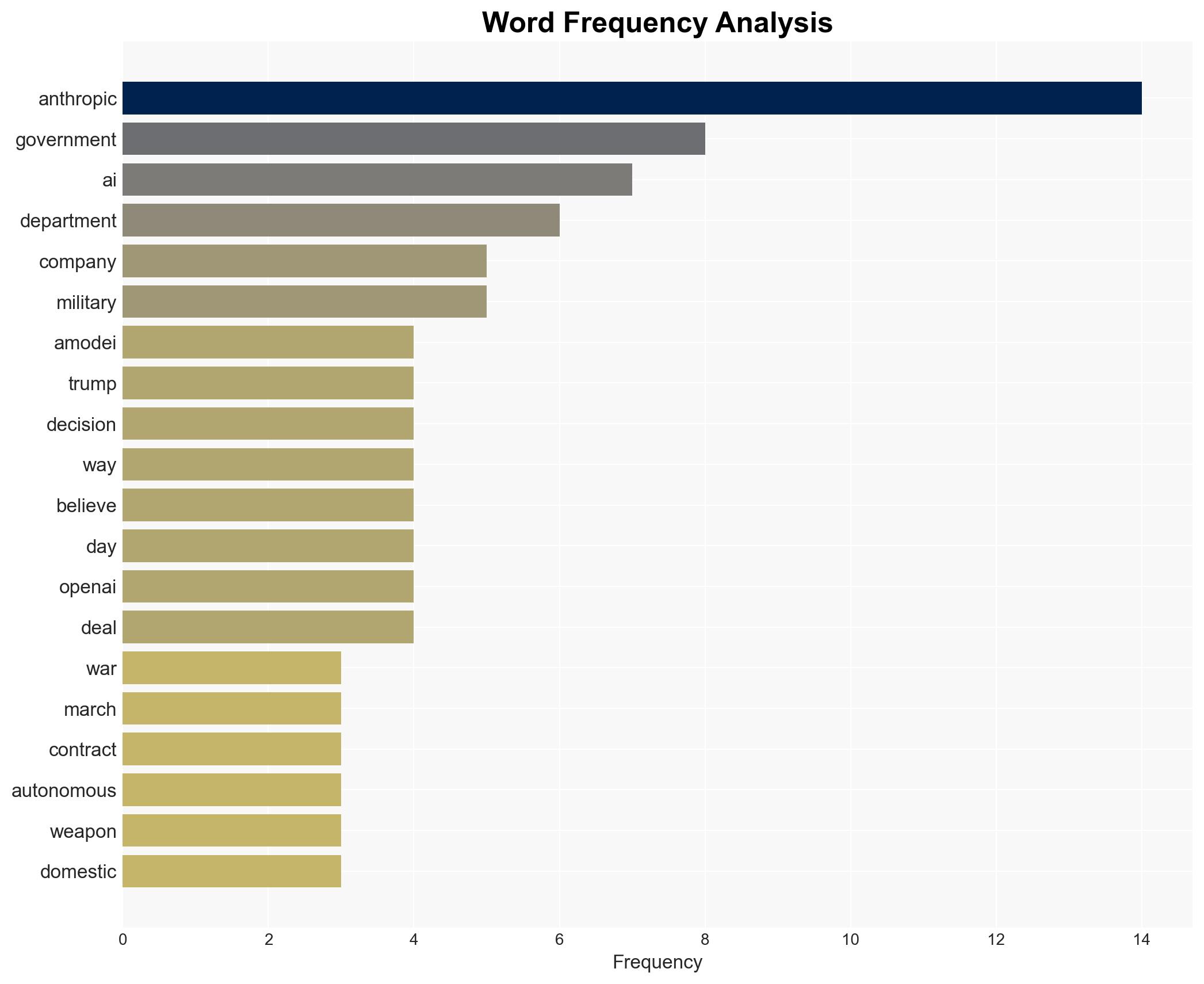

Anthropic’s legal action against the US government follows its designation as a national security risk, a move that bars it from military contracts. This unprecedented classification of a US company suggests a significant breakdown in government-industry relations, potentially driven by differing views on AI deployment in military contexts. We assess with moderate confidence that the designation stems primarily from Anthropic’s refusal to relax safety guardrails on its AI technology.

2. Competing Hypotheses

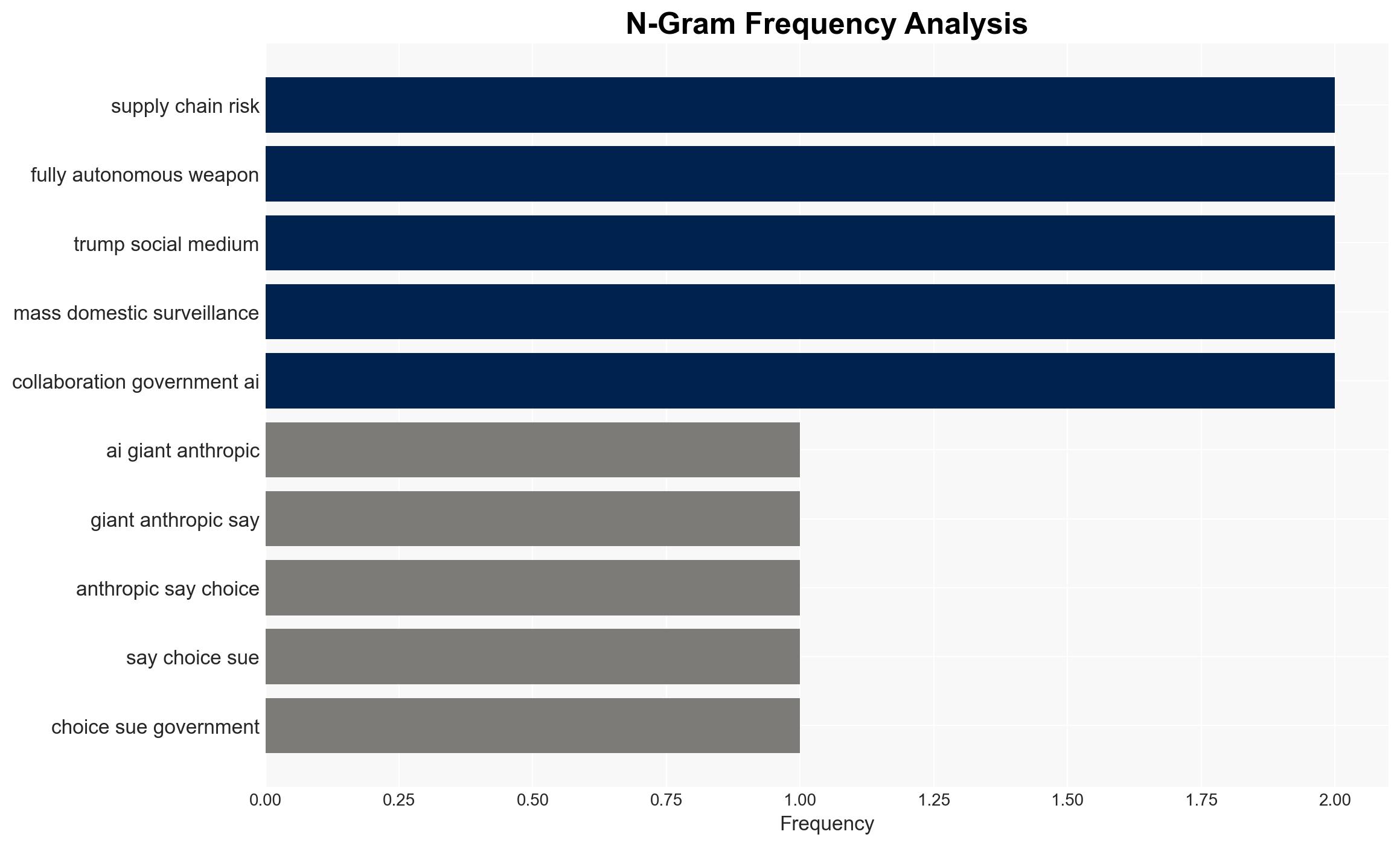

- Hypothesis A: The designation of Anthropic as a national security risk is primarily due to its refusal to allow its AI technology to be used for fully autonomous weapons and mass surveillance. Supporting evidence includes Anthropic’s public stance against these uses and the government’s subsequent classification. However, the exact motivations of the government remain uncertain.

- Hypothesis B: The designation is a strategic move by the government to favor other AI companies, such as OpenAI, which have shown willingness to comply with military requirements. This is supported by the timing of OpenAI’s deal with the Department of War and the government’s immediate cessation of Anthropic product usage. However, no explicit government statement supports this hypothesis, so the evidence for it remains circumstantial.

- Assessment: Hypothesis A is currently better supported due to direct evidence of Anthropic’s refusal to alter its AI guardrails and the government’s immediate response. Key indicators that could shift this judgment include further disclosures from government sources or additional corporate partnerships with the Department of War.

3. Key Assumptions and Red Flags

- Assumptions: The US government perceives AI safety guardrails as a hindrance to military capabilities; Anthropic’s refusal is the primary cause of the designation; OpenAI’s compliance is viewed favorably by the government.

- Information Gaps: Details of the internal government deliberations leading to the designation; specific terms of the OpenAI agreement with the Department of War.

- Bias & Deception Risks: Potential bias in government communications framing Anthropic’s actions as unpatriotic; risk of Anthropic’s public statements being strategically crafted to garner public sympathy.

4. Implications and Strategic Risks

This development could lead to increased scrutiny of AI companies’ roles in national security and influence future government-industry collaborations.

- Political / Geopolitical: Potential for increased tensions between tech companies and the government, impacting future policy and regulatory frameworks.

- Security / Counter-Terrorism: Changes in AI deployment strategies could affect military operational capabilities and counter-terrorism efforts.

- Cyber / Information Space: Heightened risk of cyber espionage as companies and governments reassess AI technology security protocols.

- Economic / Social: Possible impacts on the AI industry’s growth trajectory and public perception of AI’s role in society.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor legal proceedings and public statements from both Anthropic and the US government; assess potential impacts on other AI companies.

- Medium-Term Posture (1–12 months): Develop resilience measures for AI companies facing similar government scrutiny; encourage dialogue between tech firms and policymakers.

- Scenario Outlook:

  - Best Case: Resolution through legal channels leads to clearer guidelines for AI use in national security.

  - Worst Case: Prolonged legal battles and increased government restrictions on AI technology.

  - Most Likely: Gradual policy adjustments with ongoing tensions between AI firms and the government.

6. Key Individuals and Entities

- Dario Amodei, CEO of Anthropic

- Donald Trump, President of the United States

- OpenAI, AI technology company

- Department of War (Department of Defense)

7. Thematic Tags

national security threats, national security, AI ethics, government-industry relations, military technology, legal challenges, AI regulation, cybersecurity

Structured Analytic Techniques Applied

- Cognitive Bias Stress Test: Expose and correct potential biases in assessments through red-teaming and structured challenge.

- Bayesian Scenario Modeling: Use probabilistic forecasting for conflict trajectories or escalation likelihood.

- Network Influence Mapping: Map relationships between state and non-state actors for impact estimation.
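As a rough illustration of the Bayesian scenario modeling technique listed above, the sketch below updates the relative weight of two competing hypotheses after one new piece of evidence. Every number in it is a hypothetical placeholder chosen for the arithmetic, not a figure taken from this brief, and the function name `bayes_update` is likewise an assumption made for the example.

```python
def bayes_update(priors, likelihoods):
    """Return posterior P(H|E) for each hypothesis H.

    priors      -- dict mapping hypothesis label to prior P(H)
    likelihoods -- dict mapping hypothesis label to P(E|H)
    """
    # Multiply each prior by the likelihood of the evidence under that hypothesis.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: value / total for h, value in unnormalized.items()}


# HYPOTHETICAL inputs: equal priors, and evidence assumed to be twice as
# likely under Hypothesis A as under Hypothesis B.
priors = {"A": 0.5, "B": 0.5}
likelihoods = {"A": 0.8, "B": 0.4}

posterior = bayes_update(priors, likelihoods)
for label, probability in posterior.items():
    print(f"Hypothesis {label}: {probability:.2f}")
```

With these placeholder inputs the weight shifts toward Hypothesis A. In practice the priors and likelihoods would have to come from analyst judgment or data, and the result is only as reliable as those inputs.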
