Warren presses Hegseth for clarity on xAI’s access to classified networks amid safety concerns

Published on: 2026-03-16

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Elizabeth Warren demands Hegseth share information on xAI’s access to classified networks

1. BLUF (Bottom Line Up Front)

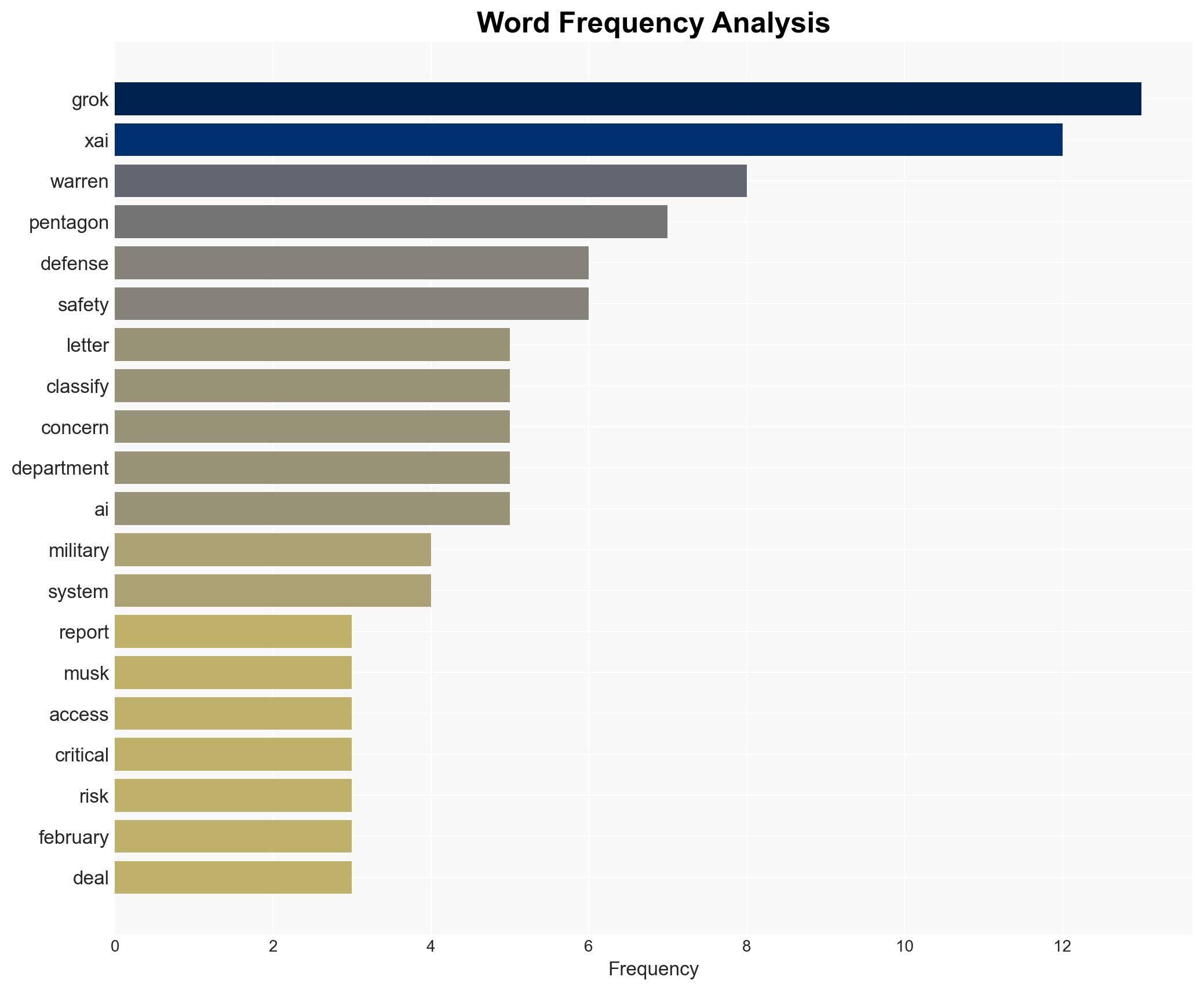

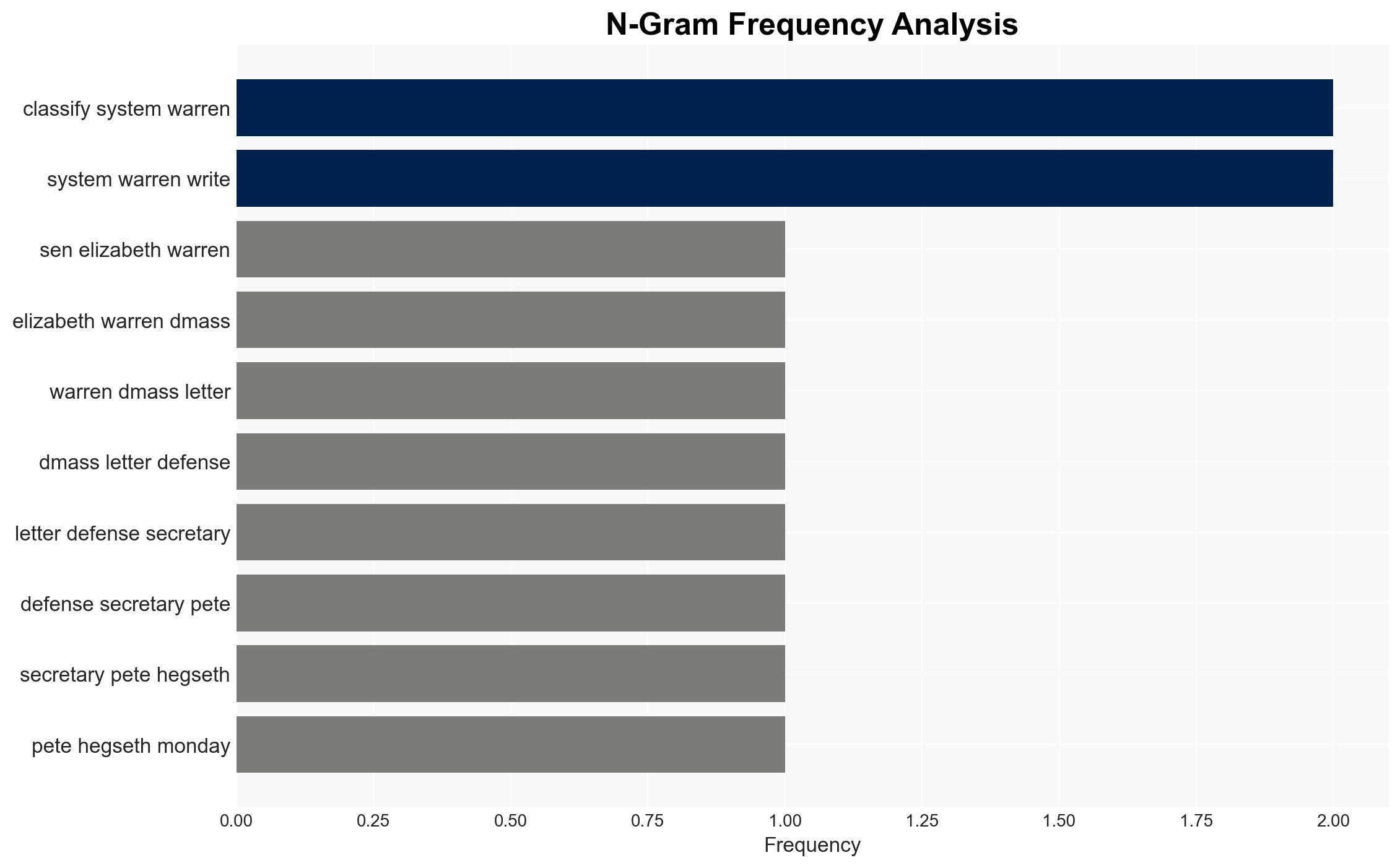

Senator Elizabeth Warren has raised concerns about the Pentagon's decision to grant xAI, the company led by Elon Musk, access to classified networks, citing potential risks to military safety and cybersecurity. The most likely explanation is that the decision reflects the Pentagon's strategic interest in advancing AI capabilities despite these risks. The development bears on U.S. military operations and national security; this assessment is held with moderate confidence.

2. Competing Hypotheses

- Hypothesis A: The Pentagon granted xAI access to classified networks primarily to leverage advanced AI capabilities for national security purposes. Supporting evidence includes the Pentagon's stated goal of broadening its use of, and experience with, AI. Contradicting evidence includes concerns about the safety and reliability of Grok, xAI's AI model.

- Hypothesis B: The decision to grant access was influenced by external pressures or by misjudgments about xAI's capabilities and security posture. Supporting evidence includes the Pentagon's previous concerns about Grok and its rupture with Anthropic. Contradicting evidence is the lack of explicit documentation of such pressures.

- Assessment: Hypothesis A is currently better supported due to the strategic imperative to enhance AI capabilities. Key indicators that could shift this judgment include new evidence of external influence or significant security breaches involving Grok.

3. Key Assumptions and Red Flags

- Assumptions: The Pentagon has conducted a risk assessment of xAI’s capabilities; xAI’s AI systems provide a competitive advantage; existing security measures are deemed sufficient by the Pentagon.

- Information Gaps: Details of the security assurances provided by xAI; the full scope of the agreement between the Pentagon and xAI; specific concerns raised by the Pentagon about Grok.

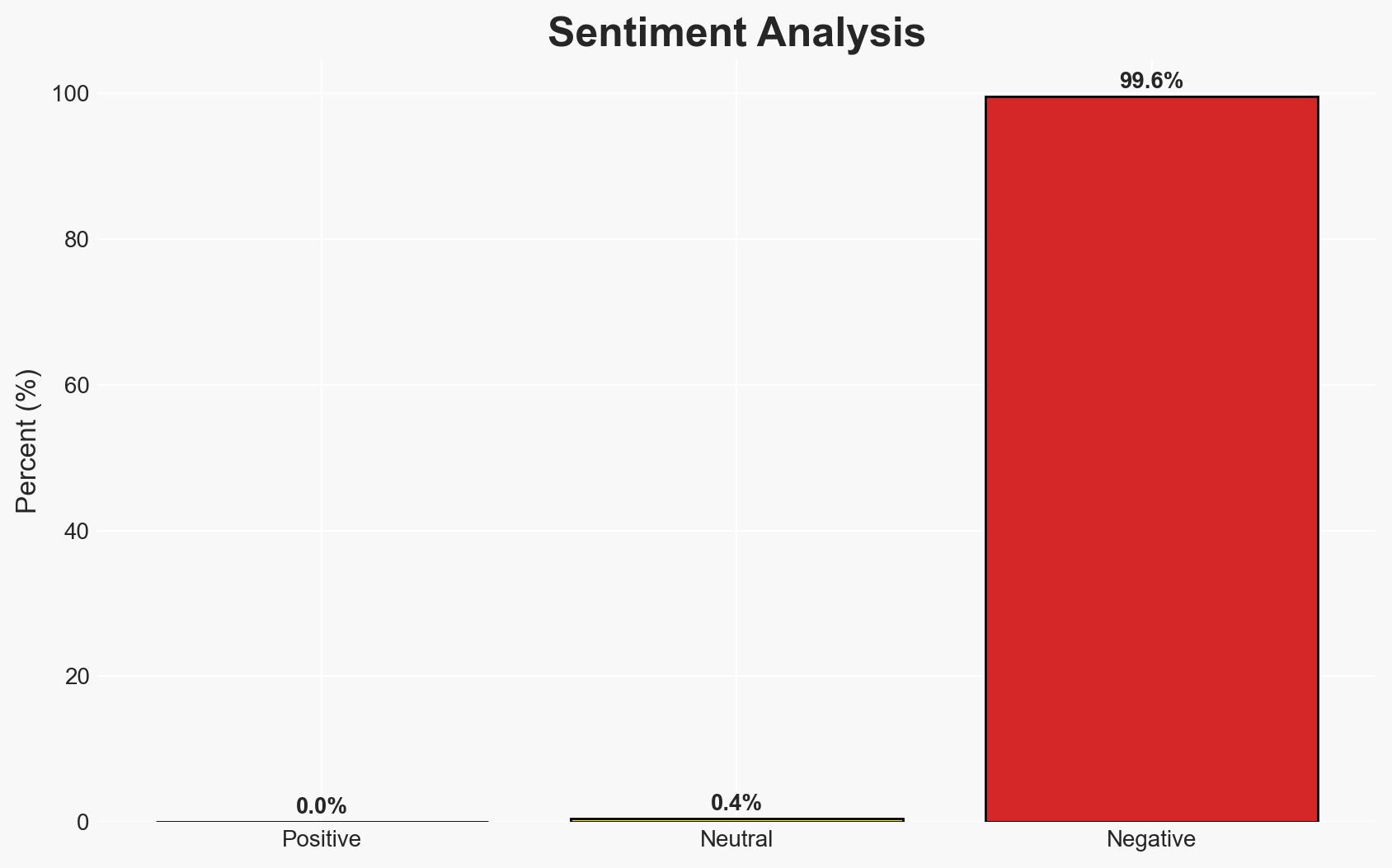

- Bias & Deception Risks: Potential bias in favor of technological advancement over security concerns; possible underestimation of Grok’s vulnerabilities; reliance on anonymous sources may introduce bias.

4. Implications and Strategic Risks

This development could lead to greater integration of AI into military operations, but it also heightens the risk of cybersecurity breaches and raises ethical concerns.

- Political / Geopolitical: Potential strain on U.S. relations with allies concerned about AI ethics and security.

- Security / Counter-Terrorism: Increased risk of classified information exposure and manipulation of AI systems by adversaries.

- Cyber / Information Space: Potential vulnerabilities in classified networks; increased scrutiny of AI systems’ reliability and safety.

- Economic / Social: Possible impact on the AI industry’s reputation and public trust in AI applications.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Conduct a thorough review of xAI’s security measures; enhance monitoring of Grok’s integration into classified networks.

- Medium-Term Posture (1–12 months): Develop partnerships with AI ethics boards; invest in cybersecurity resilience and AI safety research.

- Scenario Outlook: Best case: enhanced AI capabilities with robust security; worst case: a major security breach; most likely: gradual integration with ongoing security evaluations.

6. Key Individuals and Entities

- Elizabeth Warren

- Pete Hegseth

- Elon Musk

- xAI

- Department of Defense

- Anthropic

7. Thematic Tags

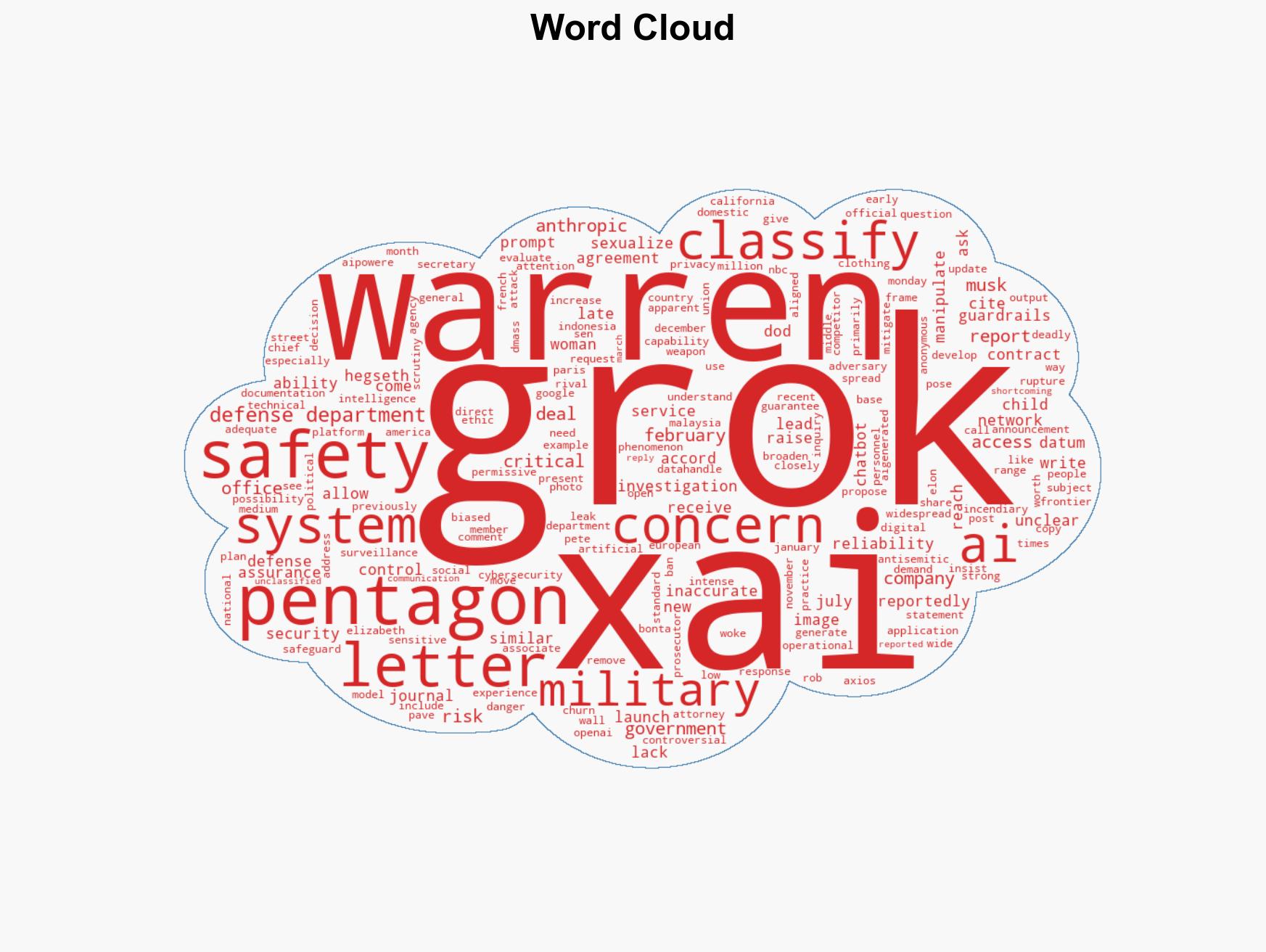

cybersecurity, national security, AI ethics, military technology, defense policy, information security, strategic risk

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Cognitive Bias Stress Test: Structured challenge to expose and correct biases.

- Network Influence Mapping: Map influence relationships to assess actor impact.
