Cybersecurity must adapt to human behavior to effectively mitigate risks in an evolving digital landscape.

Published on: 2026-03-20

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification. See our Methodology and Why WorldWideWatchers.

Intelligence Report: It's time cyber security understood human behavior and acted accordingly

1. BLUF (Bottom Line Up Front)

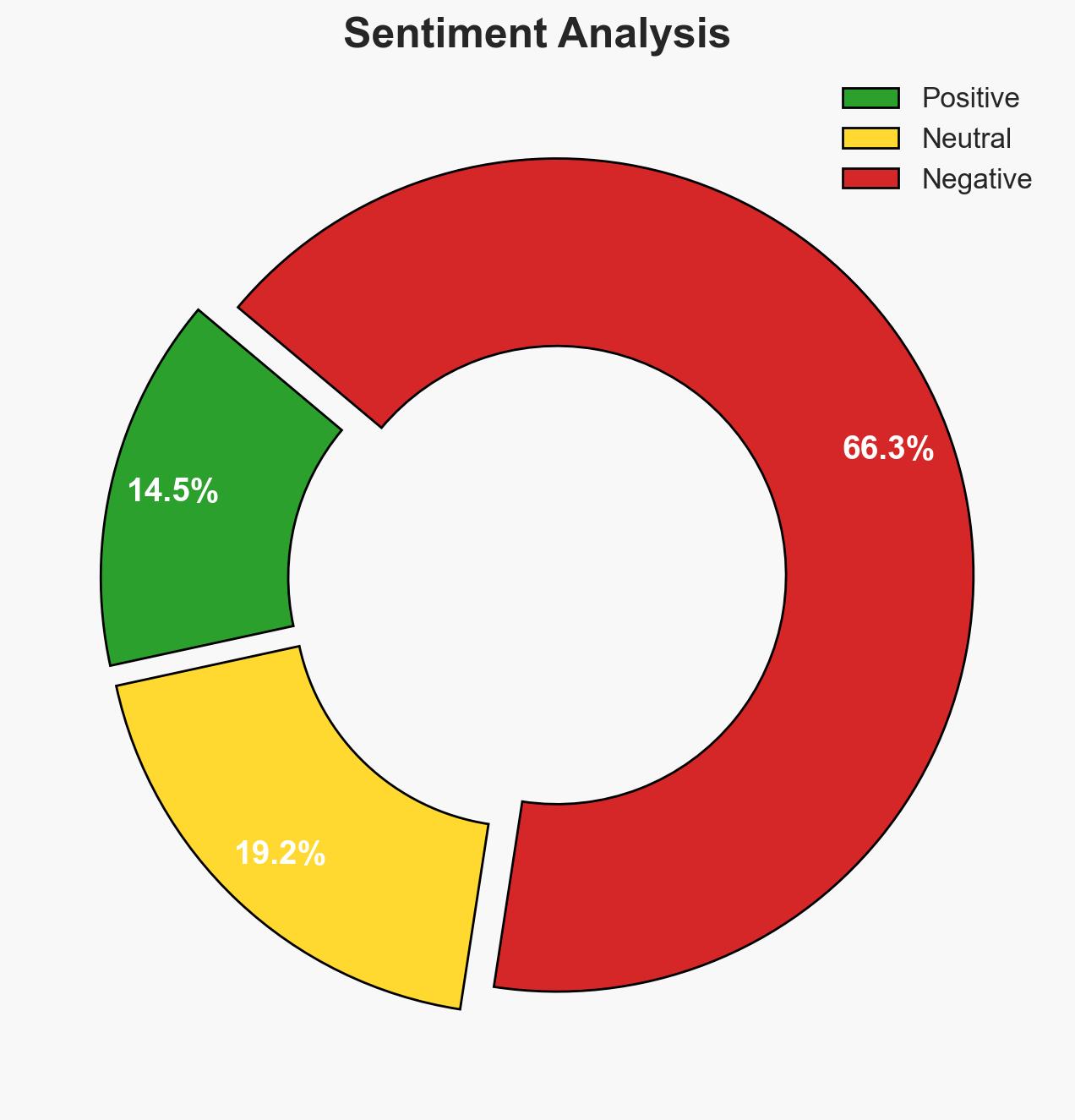

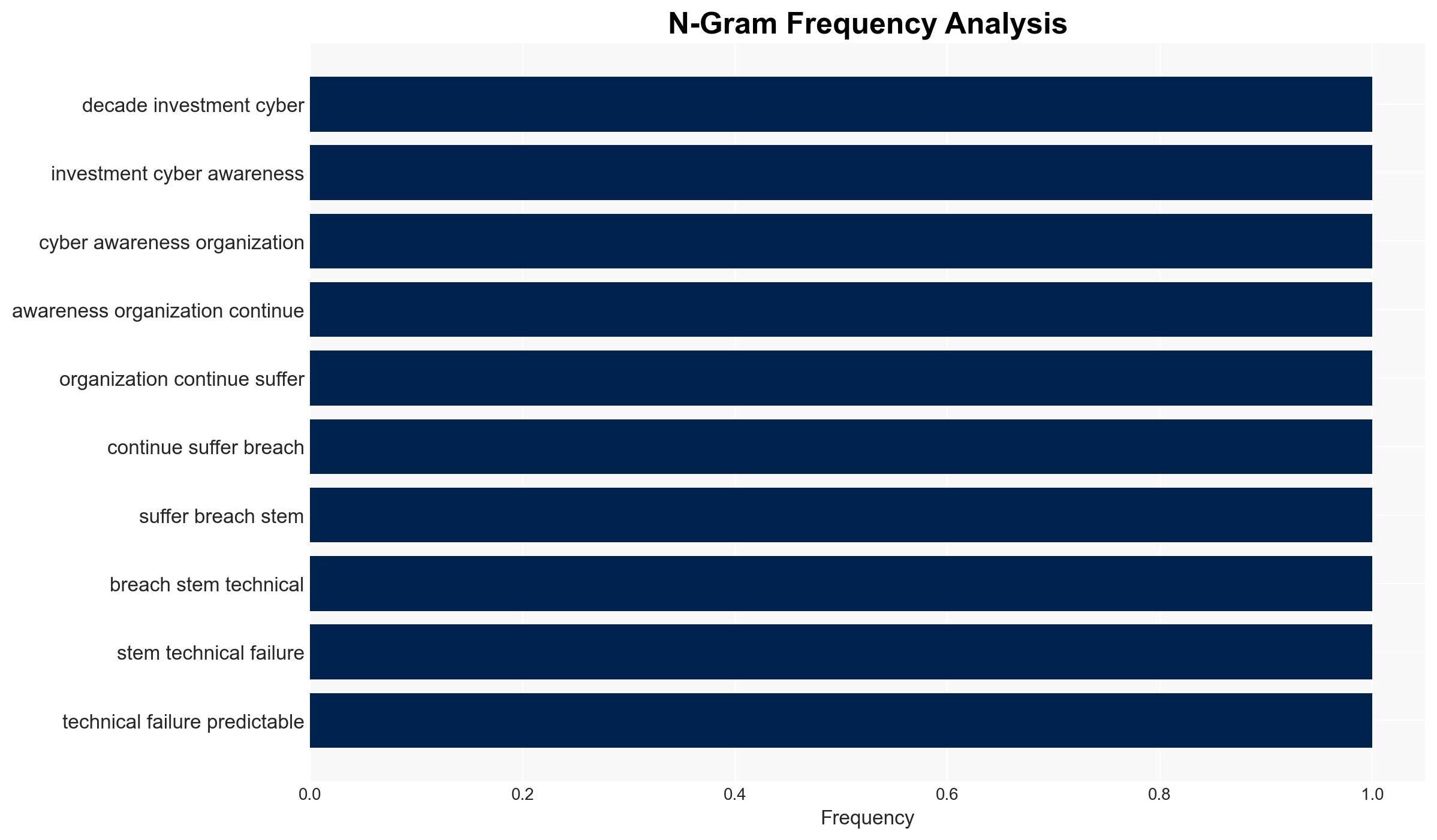

The increasing exploitation of predictable human behavior by cybercriminals, facilitated by advancements in AI, poses a significant threat to organizational cybersecurity. The shift from technical breaches to social engineering attacks highlights the need for adaptive defense mechanisms that account for human factors. This trend is likely to intensify, impacting businesses globally. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

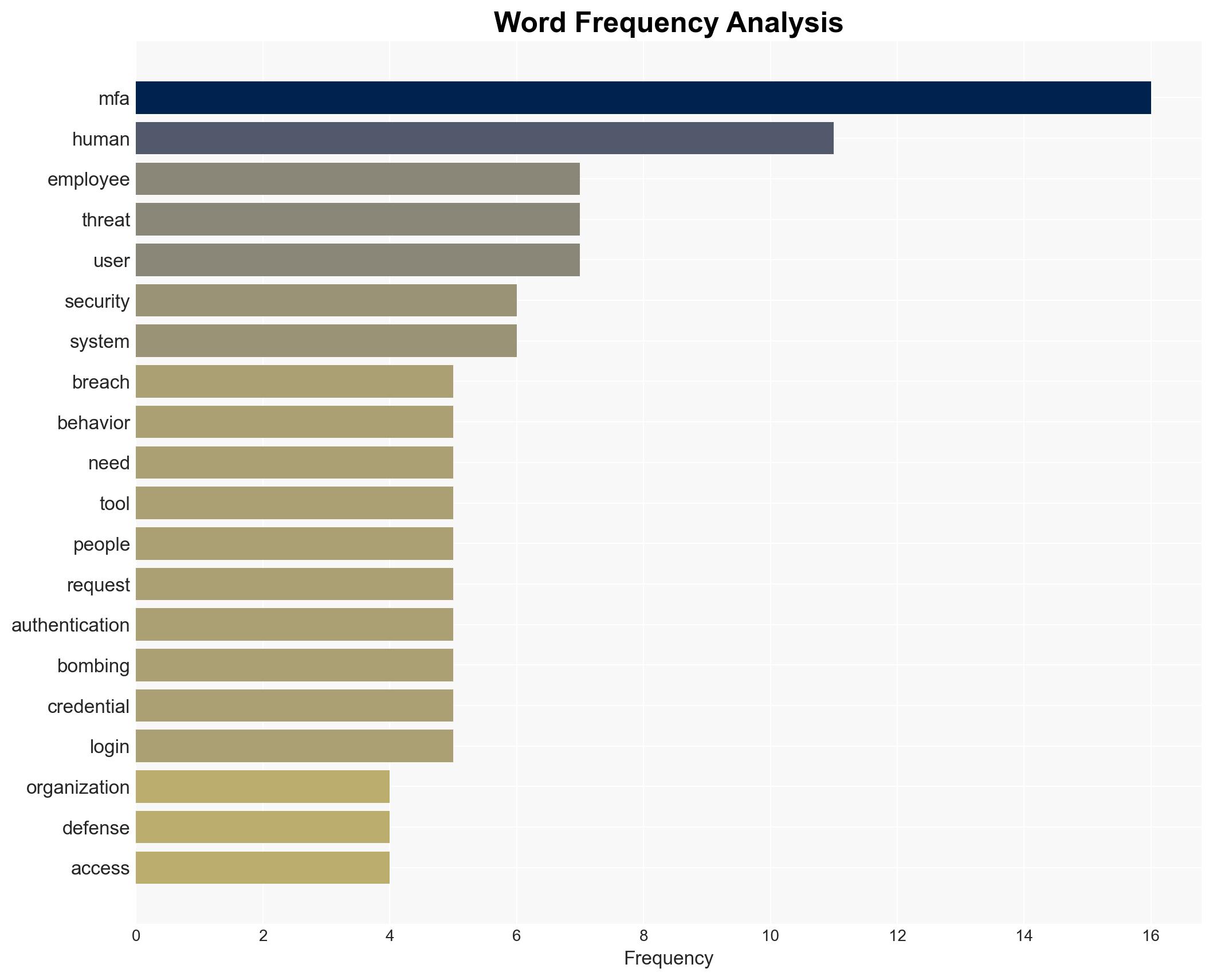

- Hypothesis A: Cybersecurity breaches are primarily due to human error and predictable behavior, exacerbated by cognitive overload and social engineering tactics. Supporting evidence includes users' reliance on mental shortcuts and the success of MFA bombing (flooding a user with authentication prompts until one is approved). The key uncertainty is how far technical solutions can mitigate these human factors.

- Hypothesis B: Breaches are still predominantly caused by technical vulnerabilities, with human error being a secondary factor. This hypothesis is less supported given the current trend of social engineering attacks and the diminishing role of traditional network perimeters.

- Assessment: Hypothesis A is currently better supported due to the documented shift towards exploiting human behavior and the effectiveness of social engineering tactics. Indicators that could shift this judgment include a resurgence of significant technical vulnerabilities being exploited.

3. Key Assumptions and Red Flags

- Assumptions: Human behavior under pressure is predictable and exploitable; AI advancements will continue to enhance social engineering tactics; MFA remains a critical defense layer.

- Information Gaps: Detailed statistics on the proportion of breaches caused by human error versus technical vulnerabilities; effectiveness of current training programs in mitigating human error.

- Bias & Deception Risks: Potential over-reliance on anecdotal evidence of human error; bias towards underestimating the role of technical vulnerabilities; deception by threat actors in reporting breach causes.

4. Implications and Strategic Risks

The evolution of cyber threats towards exploiting human behavior could lead to increased frequency and sophistication of breaches, necessitating a paradigm shift in cybersecurity strategies.

- Political / Geopolitical: Potential for increased state-sponsored social engineering campaigns targeting critical infrastructure.

- Security / Counter-Terrorism: Heightened risk of cyber attacks being used as a tool for terrorism or political coercion.

- Cyber / Information Space: Greater emphasis on identity management and behavioral analytics in cybersecurity frameworks.

- Economic / Social: Increased financial losses for businesses, potential erosion of consumer trust, and social disruption from high-profile breaches.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Enhance employee training on social engineering tactics; implement stricter MFA protocols; monitor AI-driven social engineering developments.

- Medium-Term Posture (1–12 months): Develop partnerships with AI firms for advanced threat detection; invest in behavioral analytics tools; strengthen identity management systems.

- Scenario Outlook:

  - Best: Improved defenses significantly reduce breach incidents.

  - Worst: Widespread breaches lead to severe economic and social impacts.

  - Most-Likely: Continued adaptation by both defenders and attackers, with gradual improvements in security posture.
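As one illustration of what stricter MFA monitoring and behavioral analytics could look like in practice, the sketch below flags users who receive an unusual number of unapproved MFA prompts in a short period, a pattern consistent with MFA bombing. The event schema, the function name `flag_mfa_bombing`, and both thresholds are assumptions made for this example; they are not drawn from the report or from any specific product.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

# Hypothetical thresholds; tune them against real authentication logs.
WINDOW = timedelta(minutes=10)
MAX_FAILED_PROMPTS = 5


def flag_mfa_bombing(events, window=WINDOW, max_failed=MAX_FAILED_PROMPTS):
    """Return the set of users with more than `max_failed` unapproved MFA
    prompts inside any sliding `window`.

    `events` is an iterable of (timestamp, user, outcome) tuples, where
    outcome is "approved", "denied", or "timeout" (an assumed schema).
    """
    recent = defaultdict(deque)  # user -> timestamps of recent unapproved prompts
    flagged = set()
    for ts, user, outcome in sorted(events):
        if outcome == "approved":
            continue
        prompts = recent[user]
        prompts.append(ts)
        # Discard prompts that have fallen out of the sliding window.
        while prompts and ts - prompts[0] > window:
            prompts.popleft()
        if len(prompts) > max_failed:
            flagged.add(user)
    return flagged
```

A rate-based rule like this is deliberately simple. It would likely be one input among several, alongside signals such as unfamiliar devices or locations, rather than a complete detection strategy.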

6. Key Individuals and Entities

- Not clearly identifiable from open sources in this snippet.

7. Thematic Tags

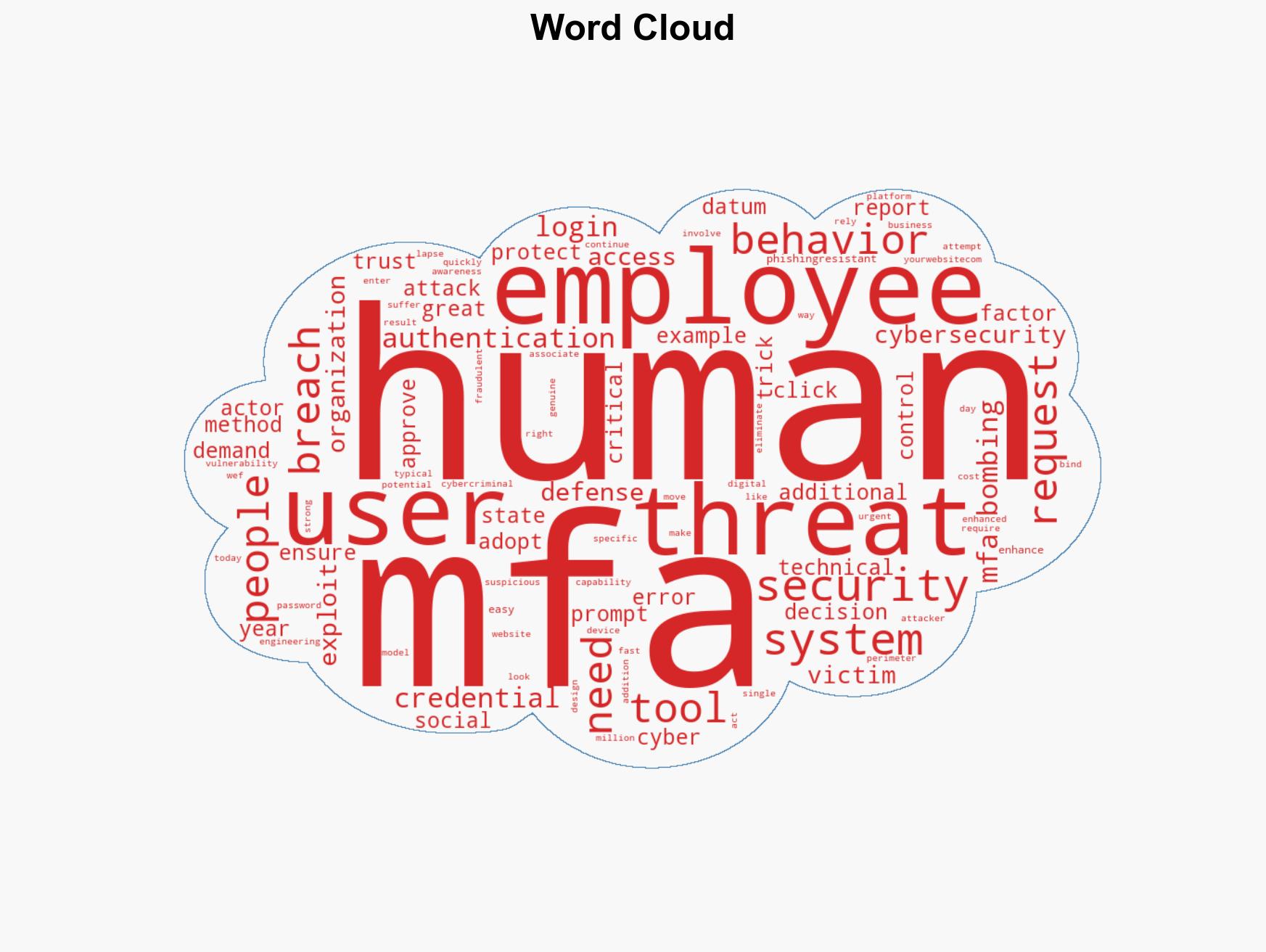

cybersecurity, human behavior, social engineering, AI in cyber threats, MFA, identity management, cognitive overload

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
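To make the Bayesian technique concrete, the following minimal sketch updates belief in the two competing hypotheses as new evidence arrives. All probabilities are illustrative placeholders, not figures from this report, and the hypothesis labels and the `bayes_update` helper are hypothetical names chosen for the example.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities for competing hypotheses.

    priors:      dict mapping hypothesis -> prior probability (summing to 1)
    likelihoods: dict mapping hypothesis -> P(evidence | hypothesis)
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: p / total for h, p in unnormalized.items()}


# Illustrative numbers only; none of these values come from the report.
beliefs = {"A_human_factors": 0.5, "B_technical_vulns": 0.5}
evidence_stream = [
    # An observation more likely under A, e.g. a successful MFA-bombing incident.
    {"A_human_factors": 0.8, "B_technical_vulns": 0.3},
    # An observation more likely under B, e.g. a widely exploited software flaw.
    {"A_human_factors": 0.2, "B_technical_vulns": 0.7},
]
for likelihoods in evidence_stream:
    beliefs = bayes_update(beliefs, likelihoods)

print(beliefs)
```

With these placeholder values, the second observation outweighs the first and belief shifts toward Hypothesis B. This mirrors the report's caveat that a resurgence of exploited technical vulnerabilities could change the assessment.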
