LiteLLM Python library compromised, enabling unauthorized access to user machines through malicious updates.

Published on: 2026-03-24

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: A popular Python library just became a backdoor to your entire machine

1. BLUF (Bottom Line Up Front)

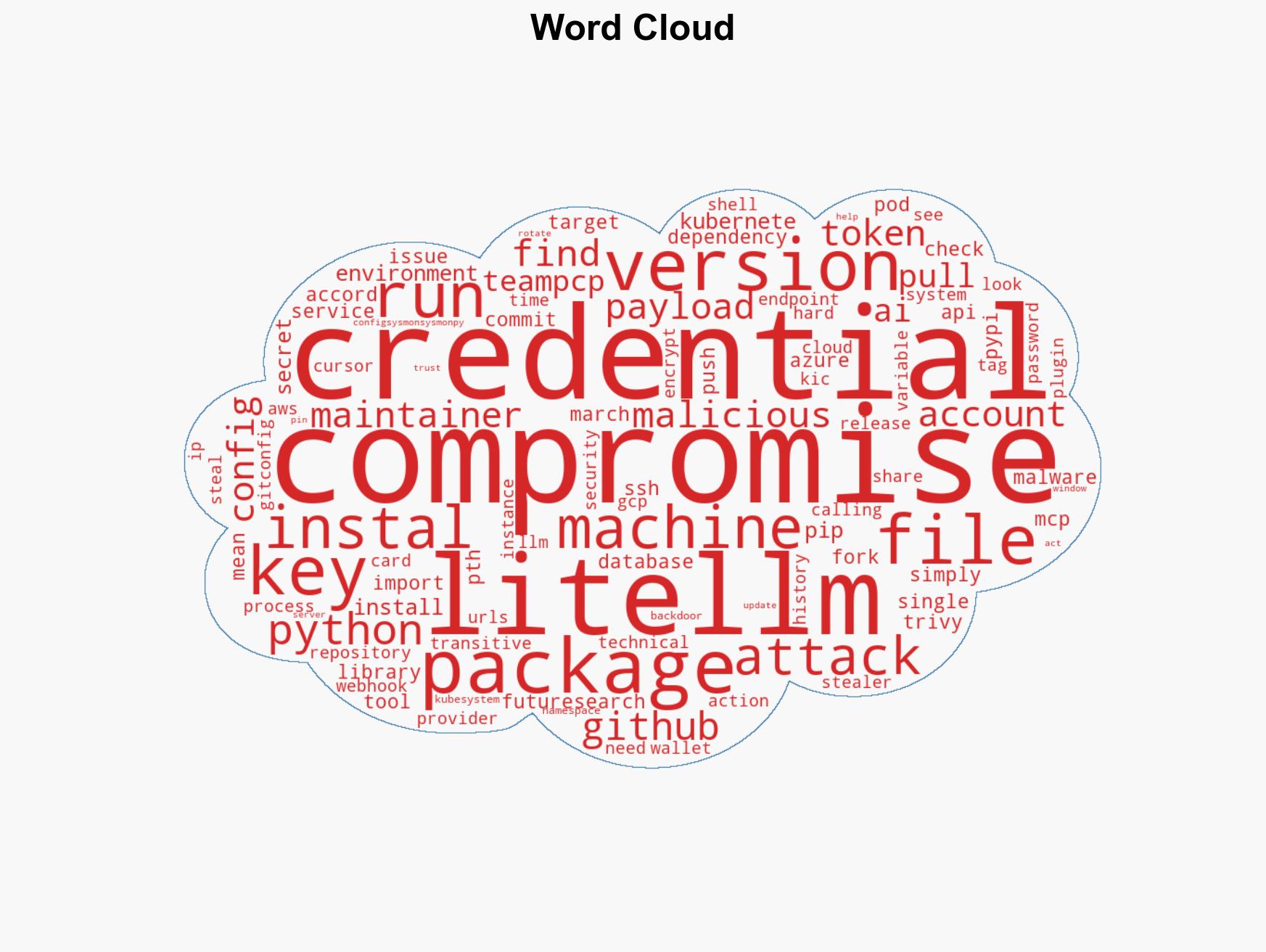

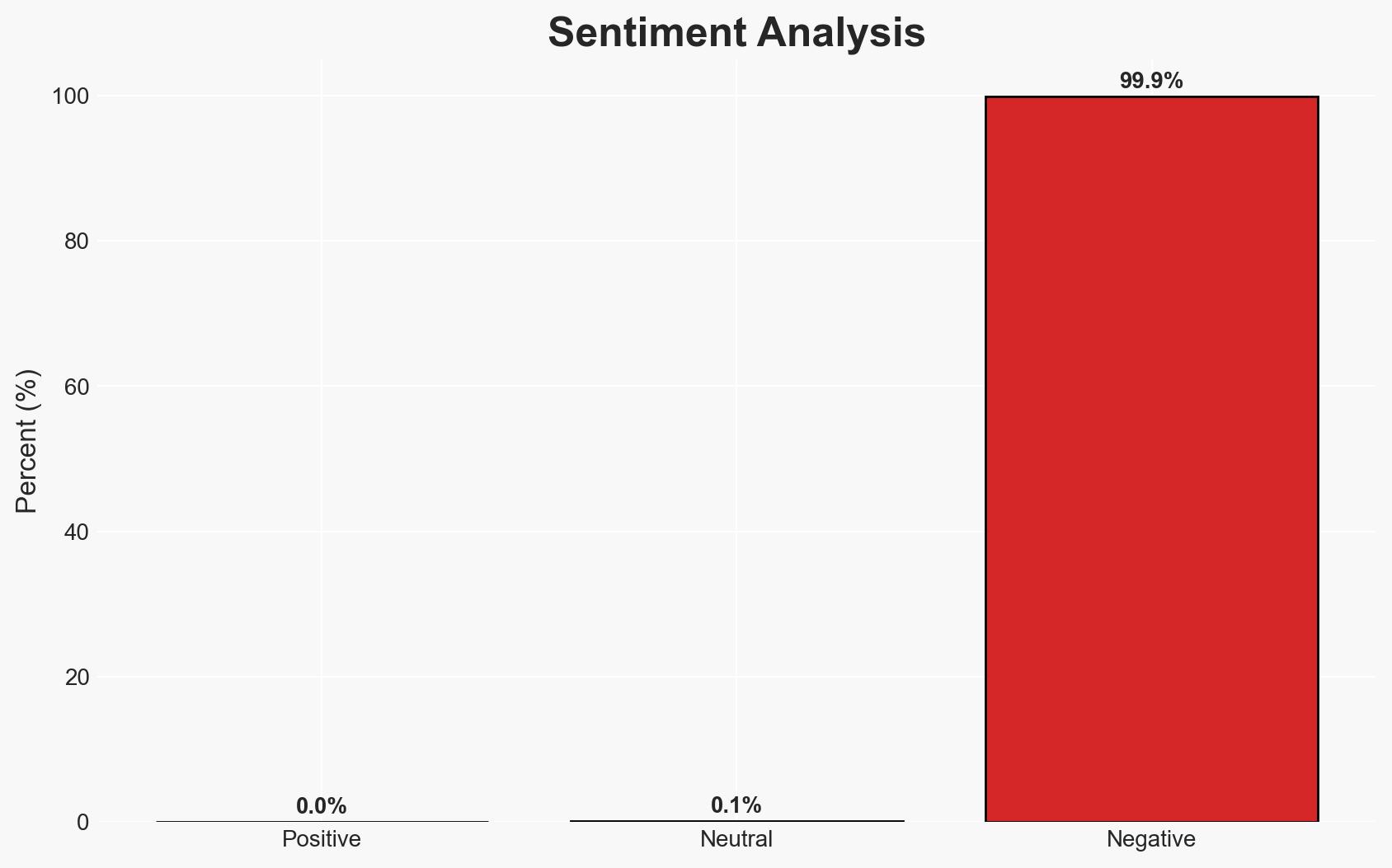

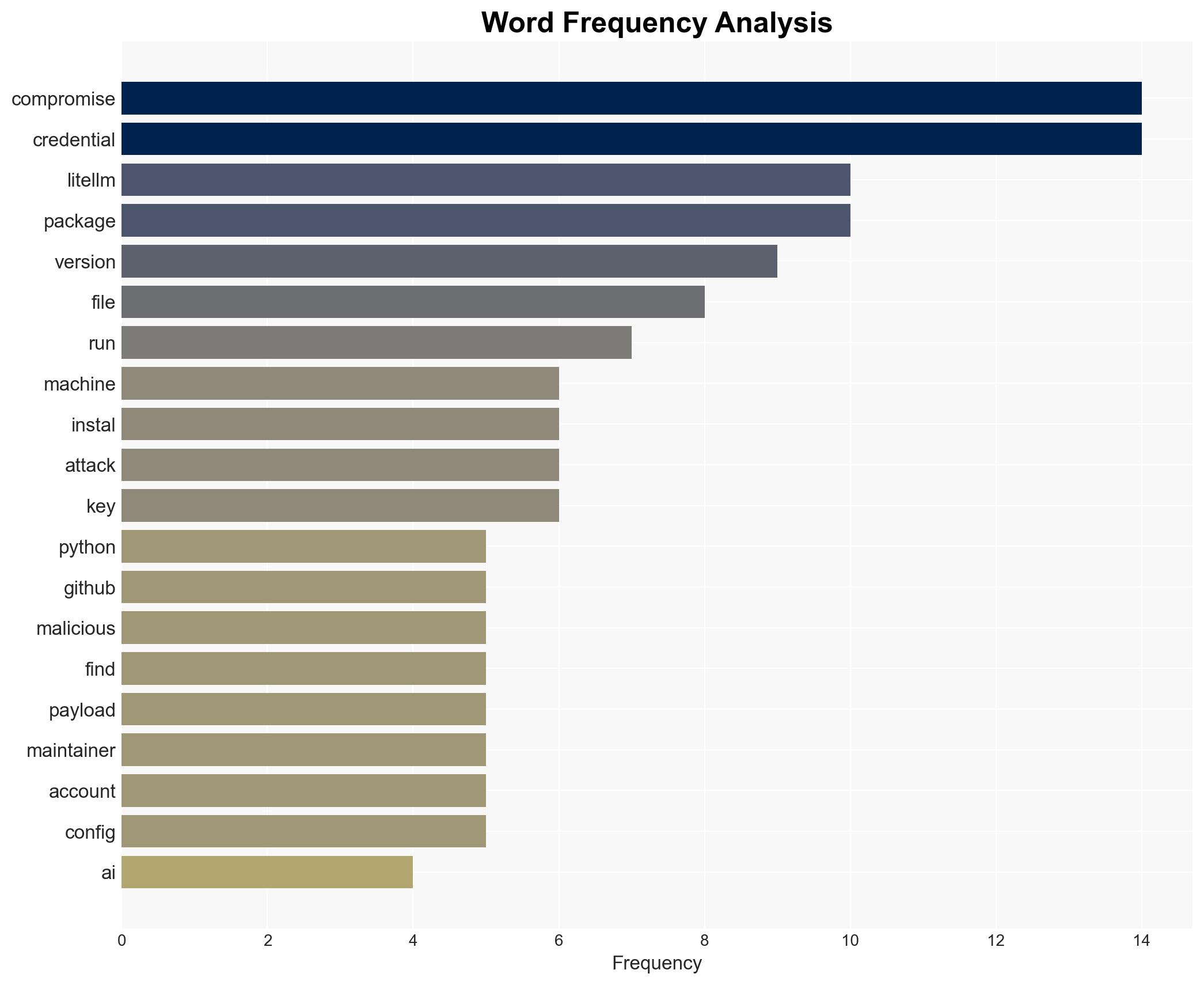

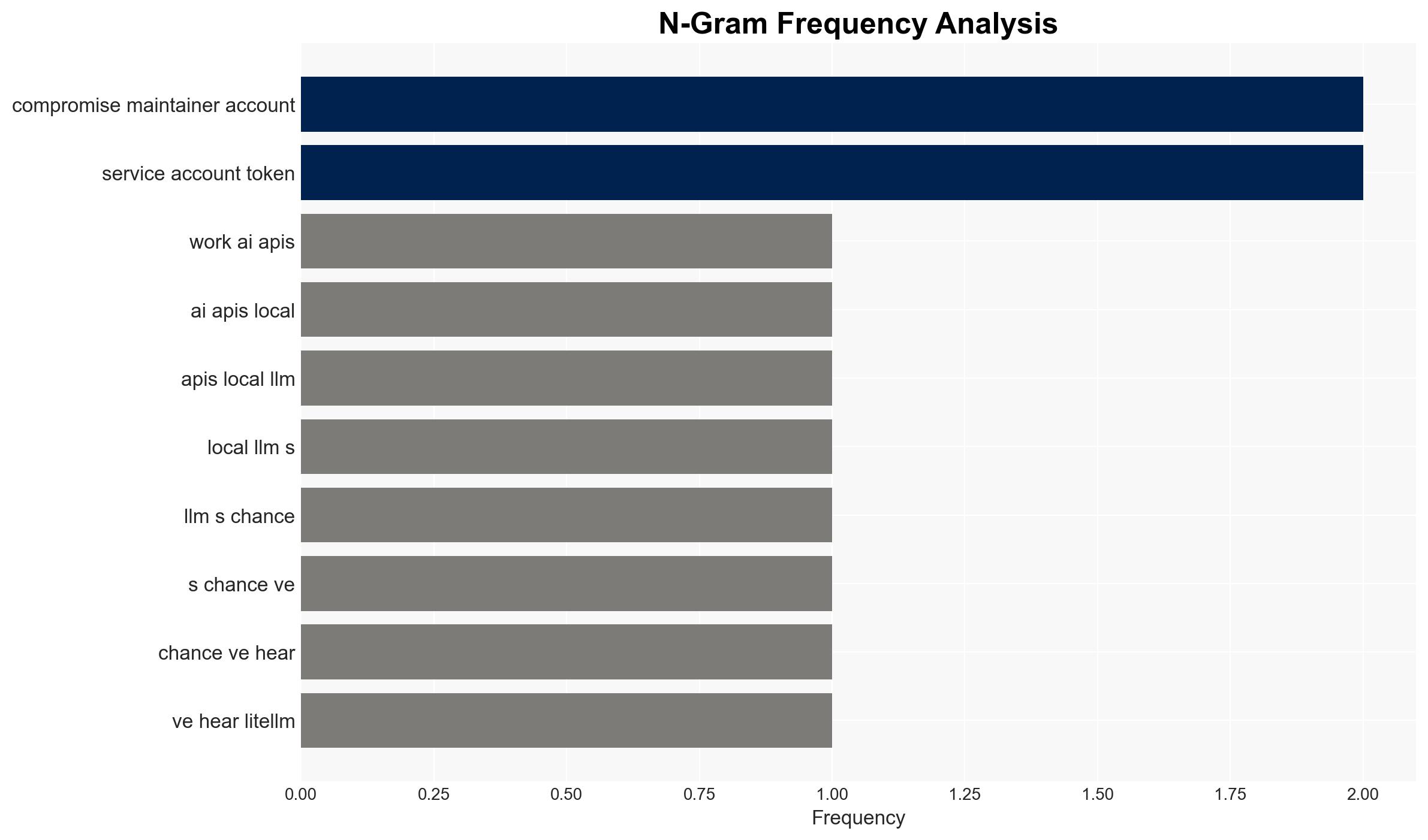

The LiteLLM Python library, widely used to interact with AI models, has been compromised with a backdoor delivered through malicious updates, potentially exposing the many systems that rely on it for access to AI APIs. The attack was likely carried out by the threat actor TeamPCP, which has been linked to similar recent attacks. This poses a significant cyber risk to organizations using the library. Overall confidence in this assessment is moderate, because it rests mainly on technical overlap with known TeamPCP methods.

2. Competing Hypotheses

- Hypothesis A: TeamPCP is responsible for the LiteLLM compromise, as evidenced by the similar attack patterns and technical methods used in recent attacks on Aqua Security and Checkmarx. However, the possibility of a copycat using publicly available techniques cannot be entirely ruled out.

- Hypothesis B: A separate actor is mimicking TeamPCP’s methods to obscure attribution, leveraging known techniques to exploit LiteLLM. The explicit calling card could be a deliberate attempt to mislead investigators.

- Assessment: Hypothesis A is currently better supported because the encryption schemes and exfiltration patterns observed are consistent with those specific to TeamPCP. This judgment could shift if new evidence shows different operational tactics or credibly ties the compromise to another actor.

3. Key Assumptions and Red Flags

- Assumptions: The LiteLLM compromise is part of a broader campaign by TeamPCP; the attack vector involved compromised maintainer credentials; the affected versions are limited to those identified.

- Information Gaps: Confirmation of the identity behind the “teampcp owns BerriAI” commit; details on the full scope of affected systems and data exfiltrated.

- Bias & Deception Risks: Potential bias in attributing the attack to TeamPCP based on previous incidents; risk of deception through false flag operations.

4. Implications and Strategic Risks

The compromise of LiteLLM could lead to significant disruptions in AI-dependent operations and erode trust in open-source software security. This development may prompt increased scrutiny and security measures in software supply chains.

- Political / Geopolitical: Potential for increased tensions between states if state-sponsored actors are suspected; impact on international cybersecurity cooperation.

- Security / Counter-Terrorism: Heightened threat environment for organizations using AI tools; potential exploitation by adversarial entities.

- Cyber / Information Space: Increased focus on securing software supply chains; potential for further attacks using similar methods.

- Economic / Social: Disruption to businesses reliant on AI technologies; potential loss of consumer trust in digital services.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Conduct audits of systems using LiteLLM; remove compromised versions; enhance monitoring for unusual activity.

- Medium-Term Posture (1–12 months): Strengthen partnerships with cybersecurity firms; invest in supply chain security measures; develop rapid response capabilities.

- Scenario Outlook:

  - Best: Quick attribution and patching restore trust and security.

  - Worst: Widespread exploitation leads to significant data breaches and operational disruptions.

  - Most-Likely: Continued sporadic incidents as vulnerabilities are patched and security measures are enhanced.
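
As a starting point for the audit recommended under Immediate Actions, the following minimal sketch checks whether the LiteLLM package is installed in the current Python environment and compares its version against a list of flagged versions. This brief does not list the affected version numbers, so `FLAGGED_VERSIONS` is a hypothetical placeholder that must be filled in from the official advisory. The function names are illustrative and not part of any LiteLLM tooling.

```python
from importlib import metadata
from typing import Optional, Set

# Hypothetical placeholder: this brief does not name the compromised
# versions. Populate this set from the official advisory before use.
FLAGGED_VERSIONS: Set[str] = set()


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def classify(version: Optional[str], flagged: Set[str]) -> str:
    """Classify a version string against the flagged set.

    The comparison is an exact string match, so "1.0" and "1.0.0"
    are treated as different versions.
    """
    if version is None:
        return "not-installed"
    return "flagged" if version in flagged else "not-flagged"


if __name__ == "__main__":
    found = installed_version("litellm")
    print(f"litellm version: {found!r} -> {classify(found, FLAGGED_VERSIONS)}")
```

A "not-flagged" result only means the installed version is not in the supplied list. It does not show that the machine was never exposed, so the check would need to be run in every virtual environment, container image, and CI runner that may have installed the library.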

6. Key Individuals and Entities

- TeamPCP (Threat Actor)

- LiteLLM Maintainer (Compromised Account)

- FutureSearch (Security Researcher)

7. Thematic Tags

cybersecurity, software supply chain, open-source security, threat attribution, AI tools, malware, cyber-espionage

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
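
To illustrate the kind of probabilistic inference that Bayesian scenario modeling refers to, the sketch below applies Bayes' rule to the two competing attribution hypotheses. All numbers are hypothetical and chosen only to show the mechanics. They are not estimates from this brief, and the function is not the model used to produce it.

```python
from typing import Dict


def posterior(priors: Dict[str, float], likelihoods: Dict[str, float]) -> Dict[str, float]:
    """Update hypothesis probabilities with one piece of evidence via Bayes' rule.

    priors[h]      = P(h) before seeing the evidence
    likelihoods[h] = P(evidence | h)
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: value / total for h, value in unnormalized.items()}


# Hypothetical inputs: equal priors for Hypothesis A (TeamPCP) and
# Hypothesis B (copycat), and an assumed likelihood of observing the
# matching encryption scheme under each hypothesis.
priors = {"A": 0.5, "B": 0.5}
likelihoods = {"A": 0.8, "B": 0.4}

updated = posterior(priors, likelihoods)
print({h: round(p, 3) for h, p in updated.items()})
```

With these illustrative inputs the evidence shifts the weight toward Hypothesis A without ruling out Hypothesis B, which matches a moderate-confidence judgment.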
