Financial Sector Unites to Combat Rising Threat of AI-Driven Identity Fraud

Published on: 2026-04-01

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Financial groups lay out a plan to fight AI identity attacks

1. BLUF (Bottom Line Up Front)

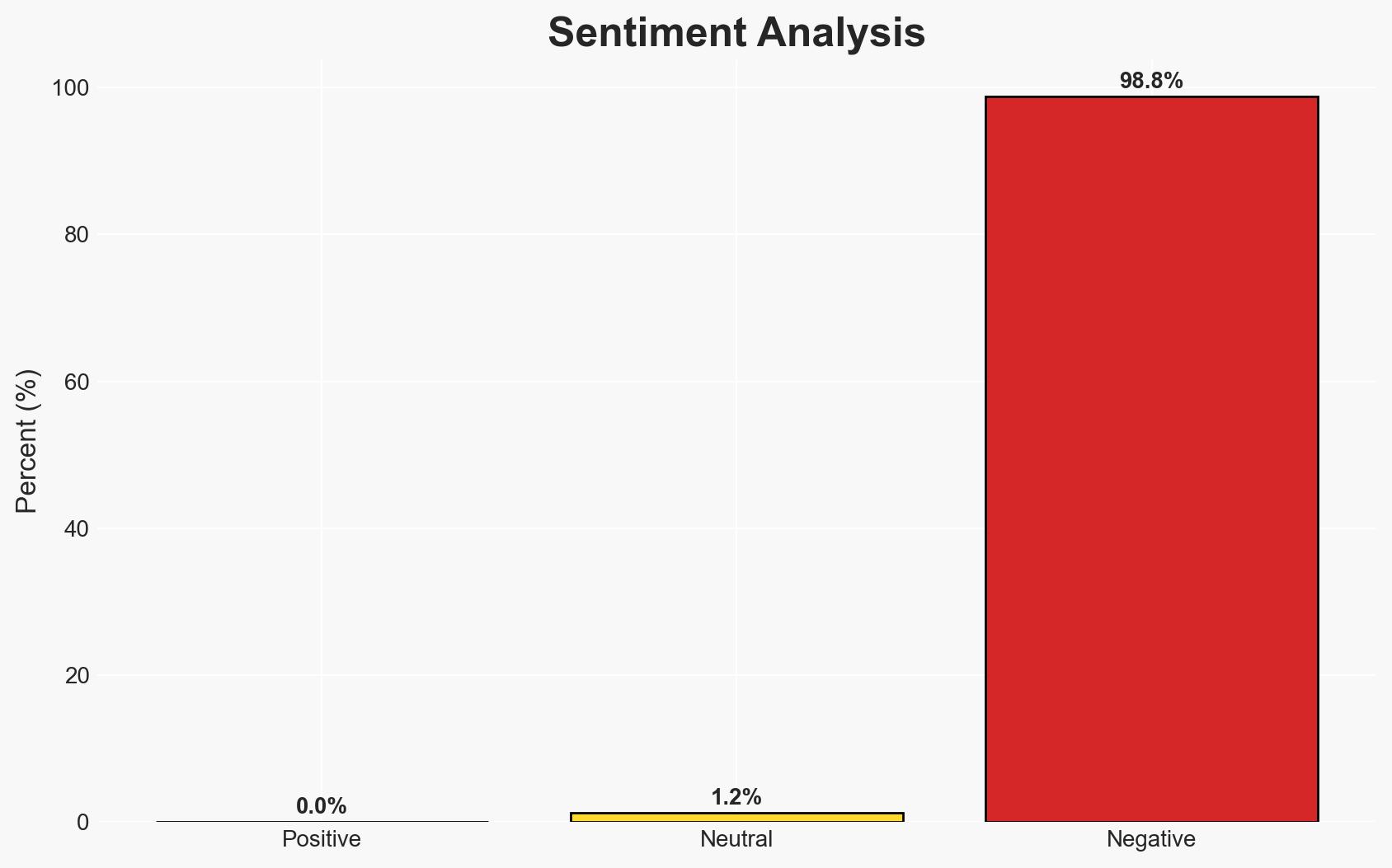

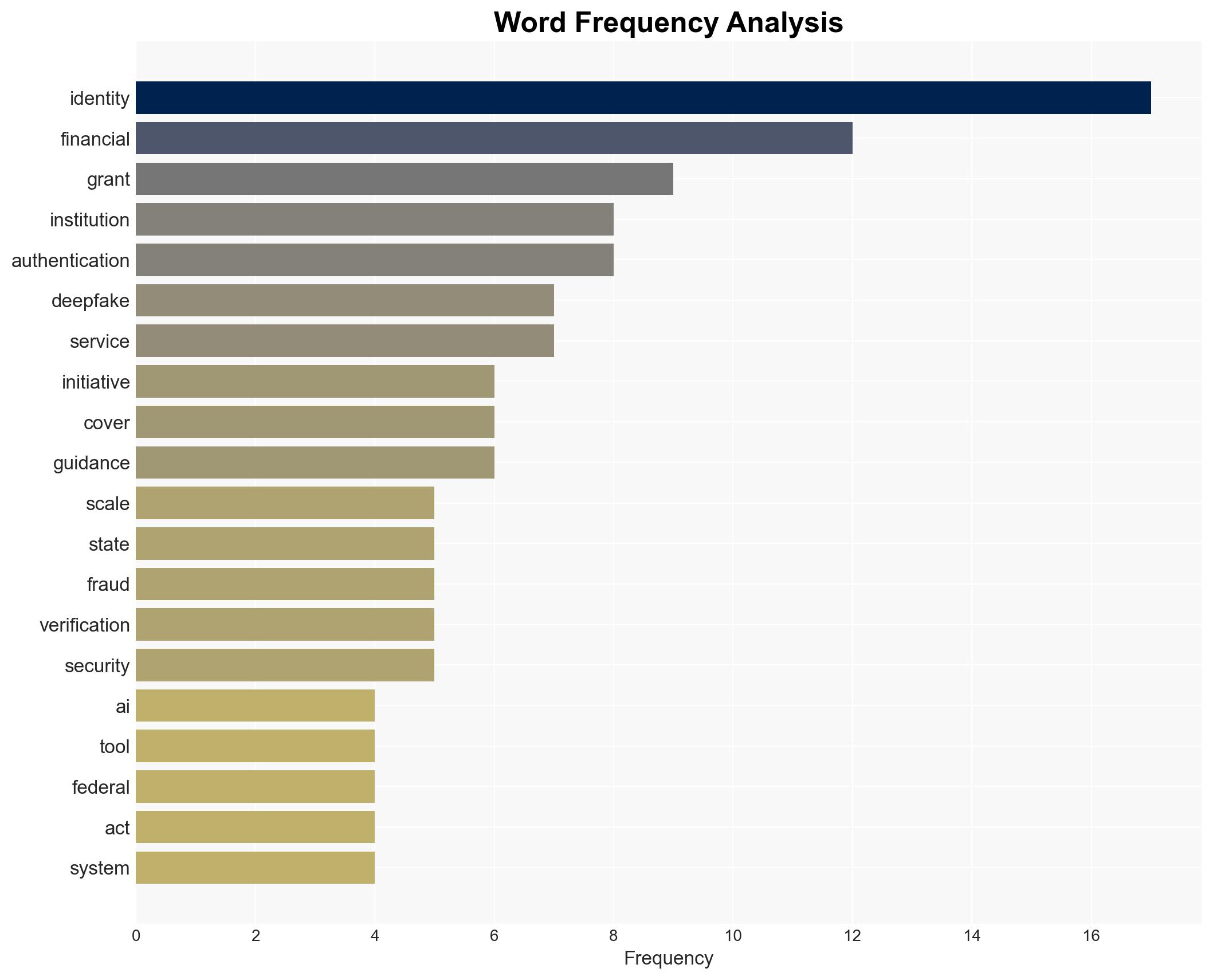

Generative AI tools are increasingly used for identity attacks against financial institutions, and projected fraud losses are escalating sharply. The most likely hypothesis is that AI-driven identity fraud will keep growing unless robust countermeasures are implemented. The threat affects financial institutions, policymakers, and consumers. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

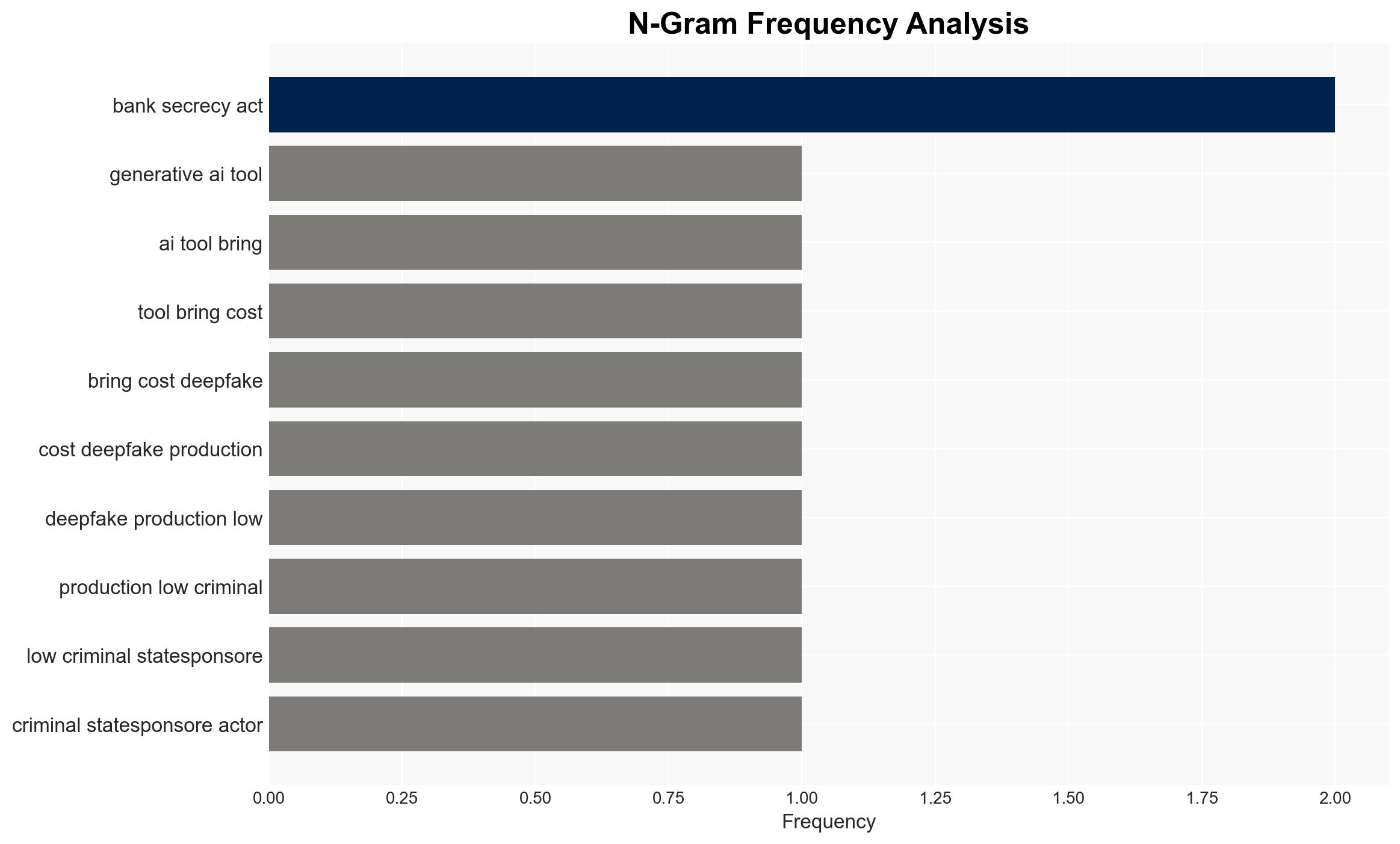

- Hypothesis A: The rise in AI-enabled identity attacks will continue to escalate, driven by the decreasing cost and increasing sophistication of AI tools. Supporting evidence includes the 700% increase in deepfake incidents and the projected $40 billion in fraud losses by 2027. Key uncertainties include the pace of technological advancements in AI defenses.

- Hypothesis B: Financial institutions and policymakers will successfully implement countermeasures that significantly mitigate the threat of AI-enabled identity attacks. This is supported by the outlined initiatives for identity proofing and verification. Contradicting evidence includes the current scale of the problem and the potential lag in policy implementation.

- Assessment: Hypothesis A is currently better supported due to the rapid increase in incidents and the projected growth in fraud losses. Indicators that could shift this judgment include successful implementation of the proposed countermeasures and a measurable decrease in attack incidents.

3. Key Assumptions and Red Flags

- Assumptions: AI tools will continue to evolve, making attacks more sophisticated; financial institutions will prioritize security investments; policymakers will act on recommendations.

- Information Gaps: Detailed data on the effectiveness of current countermeasures and the timeline for policy implementation.

- Bias & Deception Risks: Potential bias in reporting from financial institutions seeking to downplay vulnerabilities; manipulation of data by adversaries to obscure attack origins.

4. Implications and Strategic Risks

The growth of AI-driven identity attacks could increase financial instability and erode consumer trust in digital financial services. Left unaddressed, these effects could develop into broader economic challenges.

- Political / Geopolitical: Increased pressure on governments to regulate AI technologies and enhance cybersecurity frameworks.

- Security / Counter-Terrorism: Potential for AI tools to be used in broader cyber warfare or terrorism-related activities.

- Cyber / Information Space: Escalation in the sophistication and frequency of cyber-attacks targeting critical infrastructure.

- Economic / Social: Potential loss of consumer confidence in digital financial systems, leading to decreased economic activity.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Increase monitoring of AI-driven fraud activities; enhance public-private information sharing on emerging threats.

- Medium-Term Posture (1–12 months): Develop and implement robust identity verification systems; foster international cooperation on AI regulation and cybersecurity standards.

- Scenario Outlook: Best case: successful implementation of countermeasures reduces fraud incidents. Worst case: continued escalation in attacks leads to significant financial losses. Most likely: gradual improvement in defenses with ongoing challenges.

6. Key Individuals and Entities

- American Bankers Association

- Better Identity Coalition

- Financial Services Sector Coordinating Council

- Deloitte’s Center for Financial Services

- U.S. Treasury Department

- Social Security Administration

7. Thematic Tags

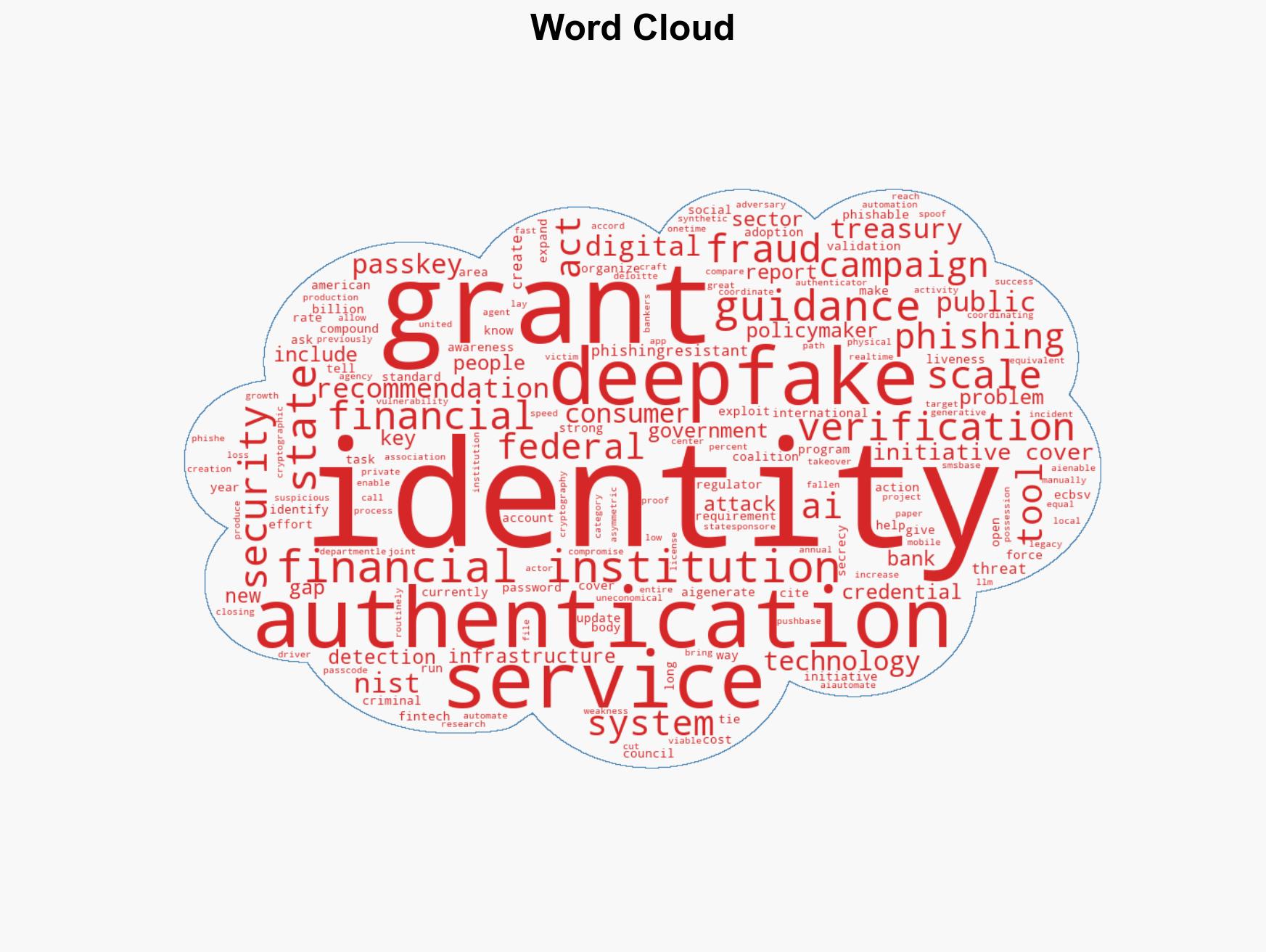

cybersecurity, financial fraud, AI identity attacks, deepfakes, policy recommendations, identity verification, financial institutions

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
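
As an illustration of the Bayesian technique listed above, the following is a minimal sketch of how new evidence could shift the relative weight of Hypothesis A and Hypothesis B. All numbers are hypothetical placeholders chosen for the example and do not come from this brief; the function name `bayes_update` and the indicator being modeled (an observed, measurable decrease in attack incidents) are likewise assumptions.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities from priors and P(evidence | hypothesis)."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: weight / total for h, weight in unnormalized.items()}


# Hypothetical priors: A (attacks keep escalating) is judged better supported
# than B (countermeasures significantly mitigate the threat).
priors = {"A": 0.6, "B": 0.4}

# Hypothetical likelihoods of observing a measurable decrease in attack
# incidents under each hypothesis. These values are illustrative only.
likelihoods = {"A": 0.2, "B": 0.7}

posterior = bayes_update(priors, likelihoods)
for hypothesis, probability in sorted(posterior.items()):
    print(f"Hypothesis {hypothesis}: {probability:.2f}")
```

With these illustrative inputs the posterior moves toward Hypothesis B (about 0.30 for A and 0.70 for B), which mirrors the qualitative statement that a measurable decrease in incidents would shift the assessment. In practice, the priors and likelihoods would need to be estimated from real incident data.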
