AI Conflict: Pentagon’s Blacklisting of Anthropic vs. OpenAI’s Defense Contract Shapes Military Tech Control

Published on: 2026-03-01

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

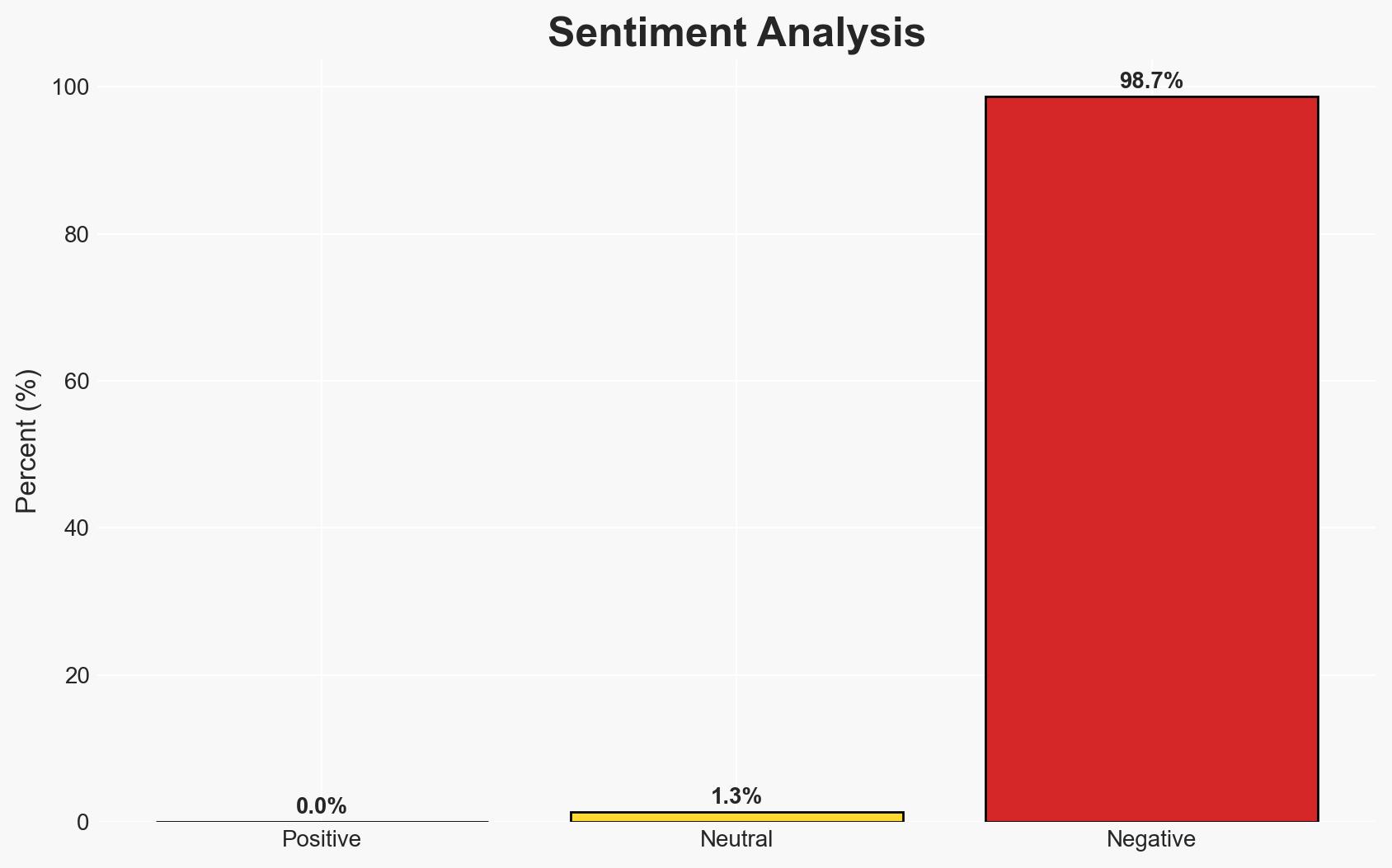

Intelligence Report: The government’s AI standoff could decide who really controls America’s military tech

1. BLUF (Bottom Line Up Front)

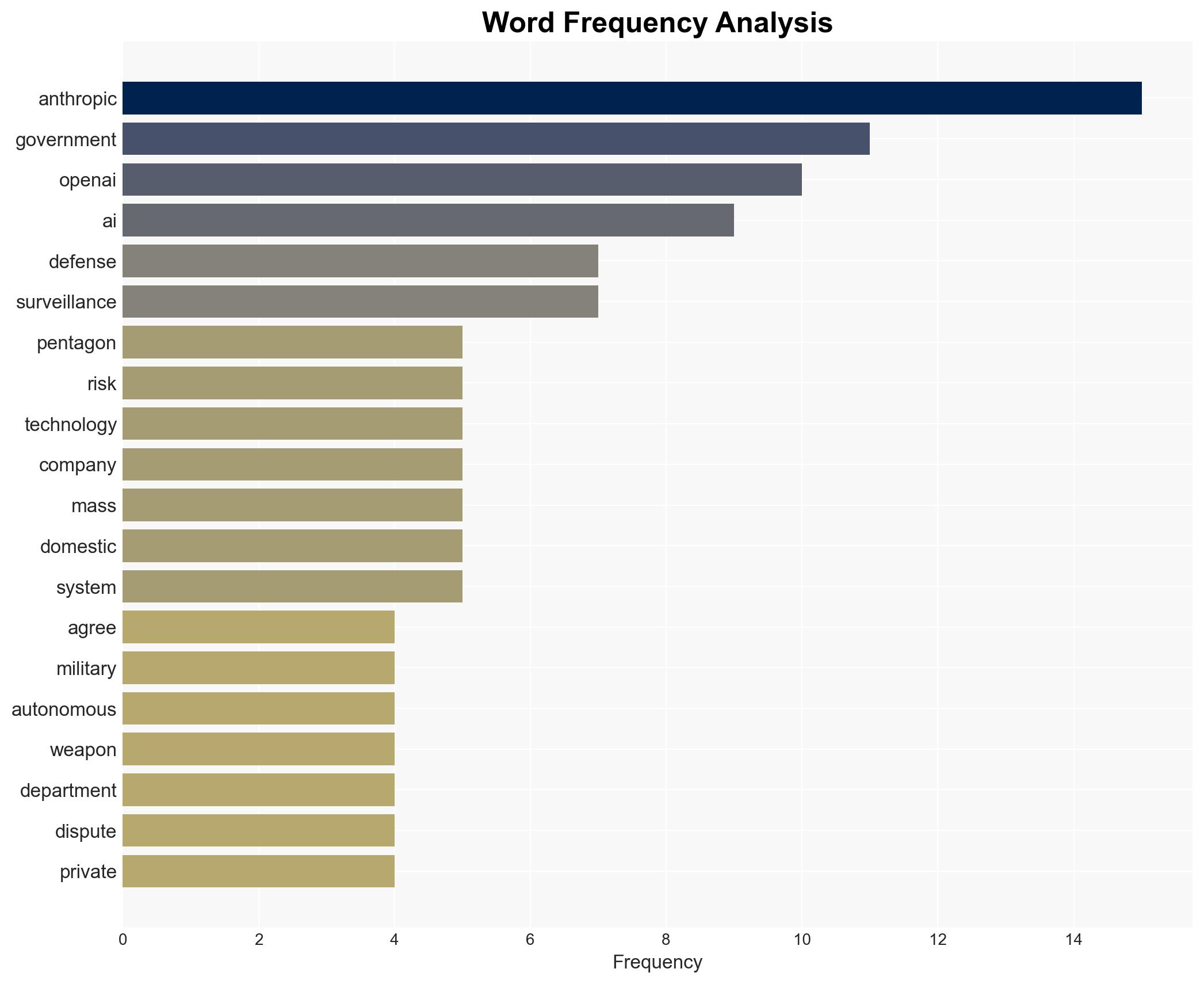

The Trump administration’s decision to blacklist Anthropic while awarding OpenAI a defense contract marks a shift in who controls military AI technology. The standoff underscores tensions between government and private-sector priorities for AI use in national security. The most likely outcome, assessed with moderate confidence, is that OpenAI’s agreement with the Pentagon will set a precedent for future AI contracts.

2. Competing Hypotheses

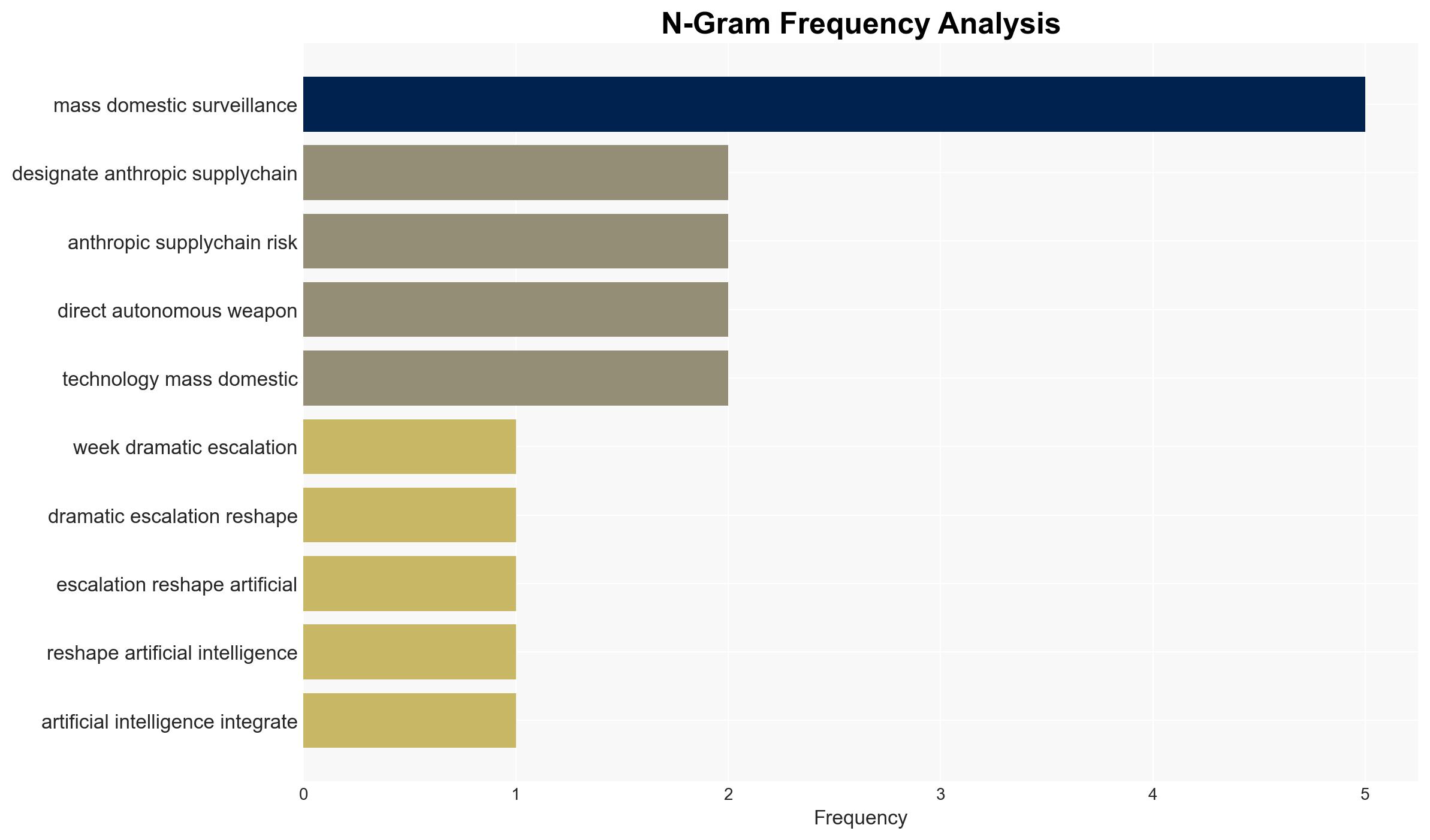

- Hypothesis A: The Pentagon’s decision to blacklist Anthropic and contract with OpenAI is primarily driven by the need for AI systems that align with military objectives, suggesting a preference for companies willing to compromise on ethical constraints. This is supported by the immediate contract award to OpenAI and the Pentagon’s broad interpretation of “lawful use.” However, the long-term sustainability of such partnerships is uncertain.

- Hypothesis B: The Pentagon’s actions are a strategic move to establish a framework for AI use that balances ethical considerations with military needs, using OpenAI as a model for future contracts. OpenAI’s agreement includes guardrails similar to those Anthropic sought, indicating a potential shift towards more ethically constrained AI applications in defense.

- Assessment: Hypothesis B is currently better supported due to OpenAI’s explicit agreement on ethical guardrails, which suggests a compromise between military needs and ethical considerations. Key indicators that could shift this judgment include changes in government policy or further disputes over AI use cases.

3. Key Assumptions and Red Flags

- Assumptions: The Pentagon will enforce the agreed-upon guardrails; OpenAI will adhere to its ethical commitments; Anthropic’s blacklisting is final and not subject to reversal; the current administration’s policies will remain consistent.

- Information Gaps: Details on the specific terms of OpenAI’s contract with the Pentagon; the potential for other AI companies to enter similar agreements; internal Pentagon deliberations on AI ethics.

- Bias & Deception Risks: Potential bias in source reporting favoring OpenAI; risk of deception in public statements by involved parties to influence public or stakeholder perception.

4. Implications and Strategic Risks

This development could redefine the relationship between the government and AI companies and may influence global AI governance standards.

- Political / Geopolitical: May prompt other nations to reassess their AI policies and partnerships, potentially leading to international regulatory discussions.

- Security / Counter-Terrorism: Could alter the operational landscape by setting new precedents for AI deployment in military contexts.

- Cyber / Information Space: Raises concerns about the security of AI systems and the potential for misuse if ethical constraints are not rigorously enforced.

- Economic / Social: May affect the AI industry’s growth and investment patterns, as well as public trust in AI technologies.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor compliance with OpenAI’s contract terms; engage with Anthropic to understand potential future collaborations; assess other AI companies’ positions.

- Medium-Term Posture (1–12 months): Develop a framework for AI ethics in defense; strengthen partnerships with AI firms that align with ethical standards; invest in oversight mechanisms.

- Scenario Outlook: Best case: ethical AI frameworks become standard in defense contracts. Worst case: ethical breaches lead to public backlash and regulatory crackdowns. Most likely: gradual adoption of ethical standards, with periodic disputes over specific use cases.

6. Key Individuals and Entities

- Anthropic

- OpenAI

- Pentagon

- Dario Amodei (CEO, Anthropic)

- Sam Altman (CEO, OpenAI)

- Donald Trump (President)

- Dean Ball (Senior Fellow, Foundation for American Innovation)

7. Thematic Tags

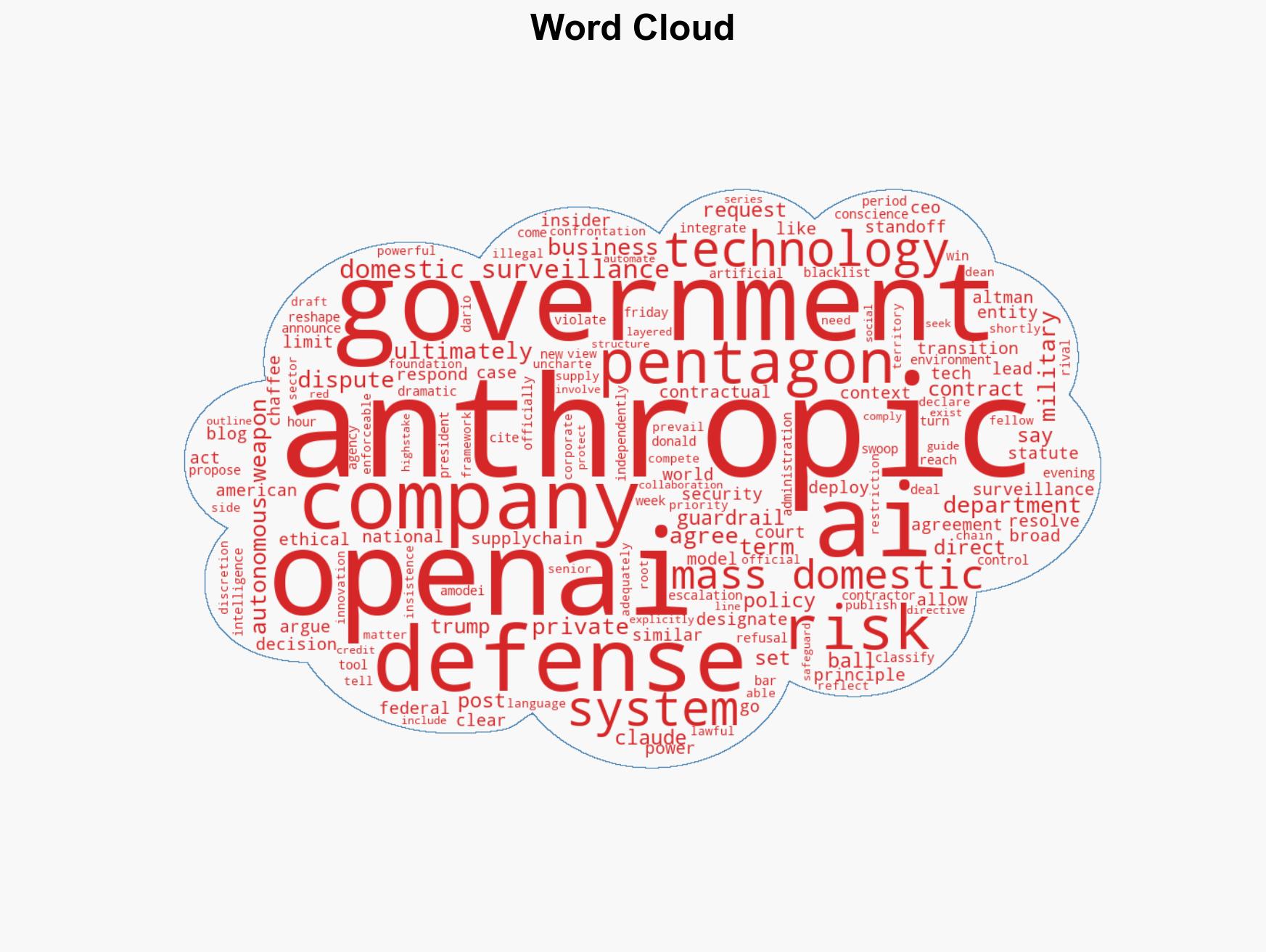

cybersecurity, AI ethics, military technology, defense contracts, national security, government-private sector relations, AI governance, ethical constraints

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Narrative Pattern Analysis: Deconstruct and track propaganda or influence narratives.
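
As an illustration of the Bayesian scenario modeling listed above, the following minimal Python sketch shows how belief in two competing hypotheses could be updated as indicators are observed. The priors, indicator descriptions, and likelihood values are hypothetical placeholders chosen only to show the mechanics; they are not figures from this brief, and the function name `bayes_update` is an assumption of this sketch.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities from priors and P(evidence | hypothesis)."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence is impossible under every hypothesis")
    return {h: value / total for h, value in unnormalized.items()}


# Hypothetical starting point: no preference between Hypothesis A and B.
priors = {"A": 0.5, "B": 0.5}

# Hypothetical indicators with illustrative P(indicator | hypothesis) values.
indicators = [
    ("contract includes explicit guardrails", {"A": 0.3, "B": 0.7}),
    ("new dispute over a specific use case", {"A": 0.6, "B": 0.4}),
]

beliefs = dict(priors)
for description, likelihoods in indicators:
    beliefs = bayes_update(beliefs, likelihoods)
    summary = ", ".join(f"{h}={p:.2f}" for h, p in beliefs.items())
    print(f"after '{description}': {summary}")
```

Each observed indicator shifts weight toward the hypothesis under which it is more likely, which is one way to make explicit the "key indicators that could shift this judgment" in an assessment of competing hypotheses.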
