AI Firms Face Pentagon Pressure Over Military Use and Ethical Boundaries

Published on: 2026-02-27

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: AI vs the Pentagon: killer robots, mass surveillance, and red lines

1. BLUF (Bottom Line Up Front)

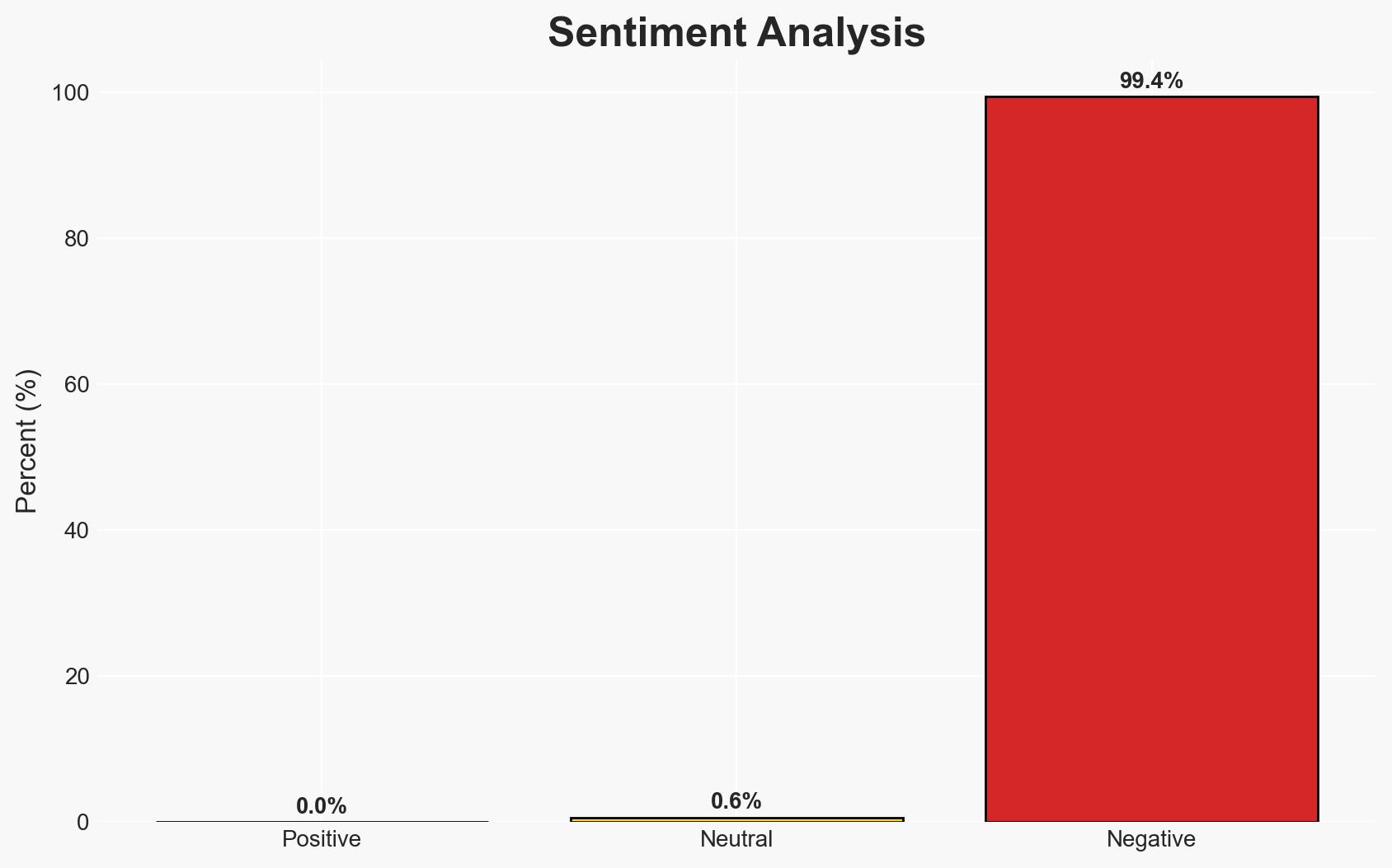

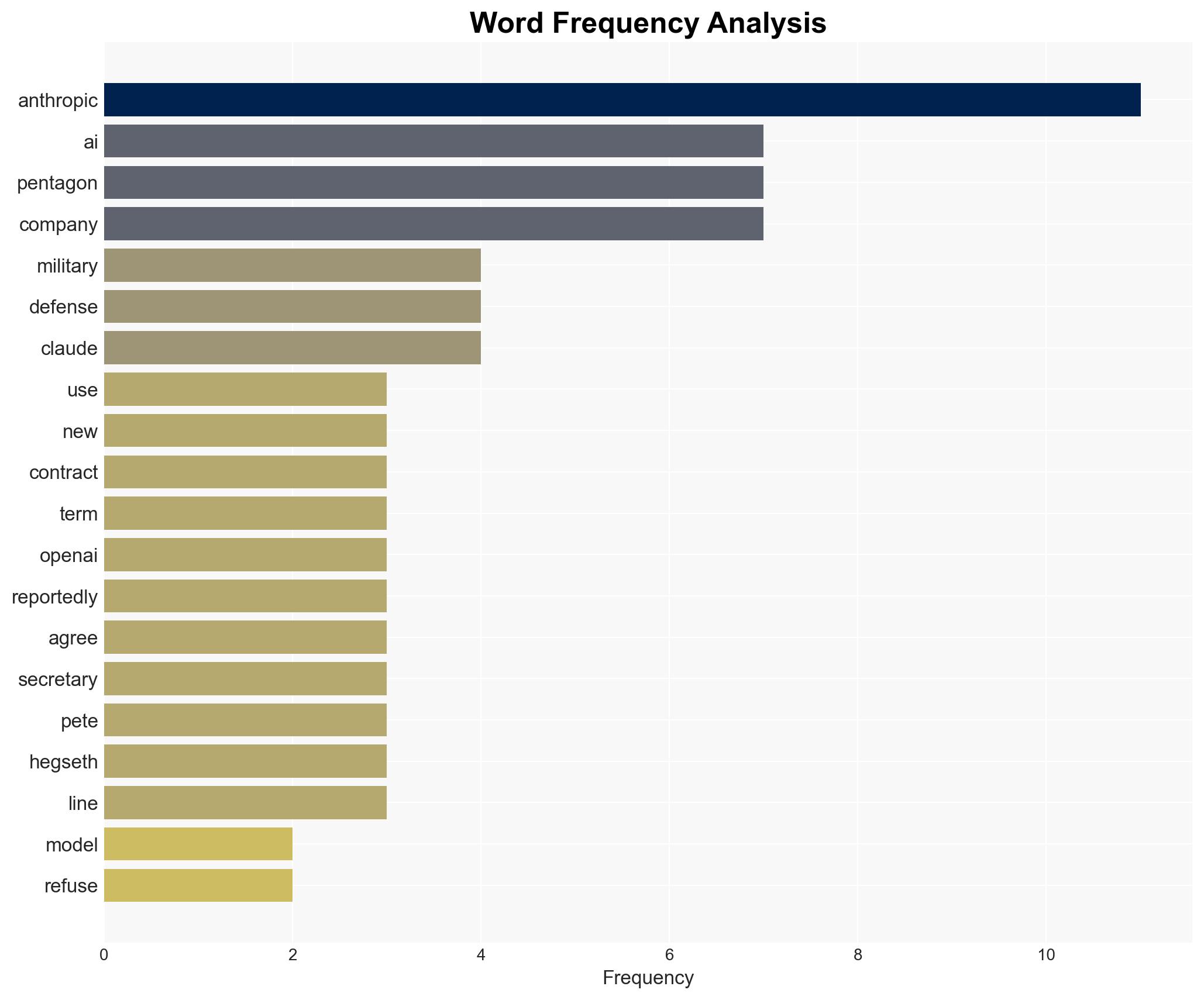

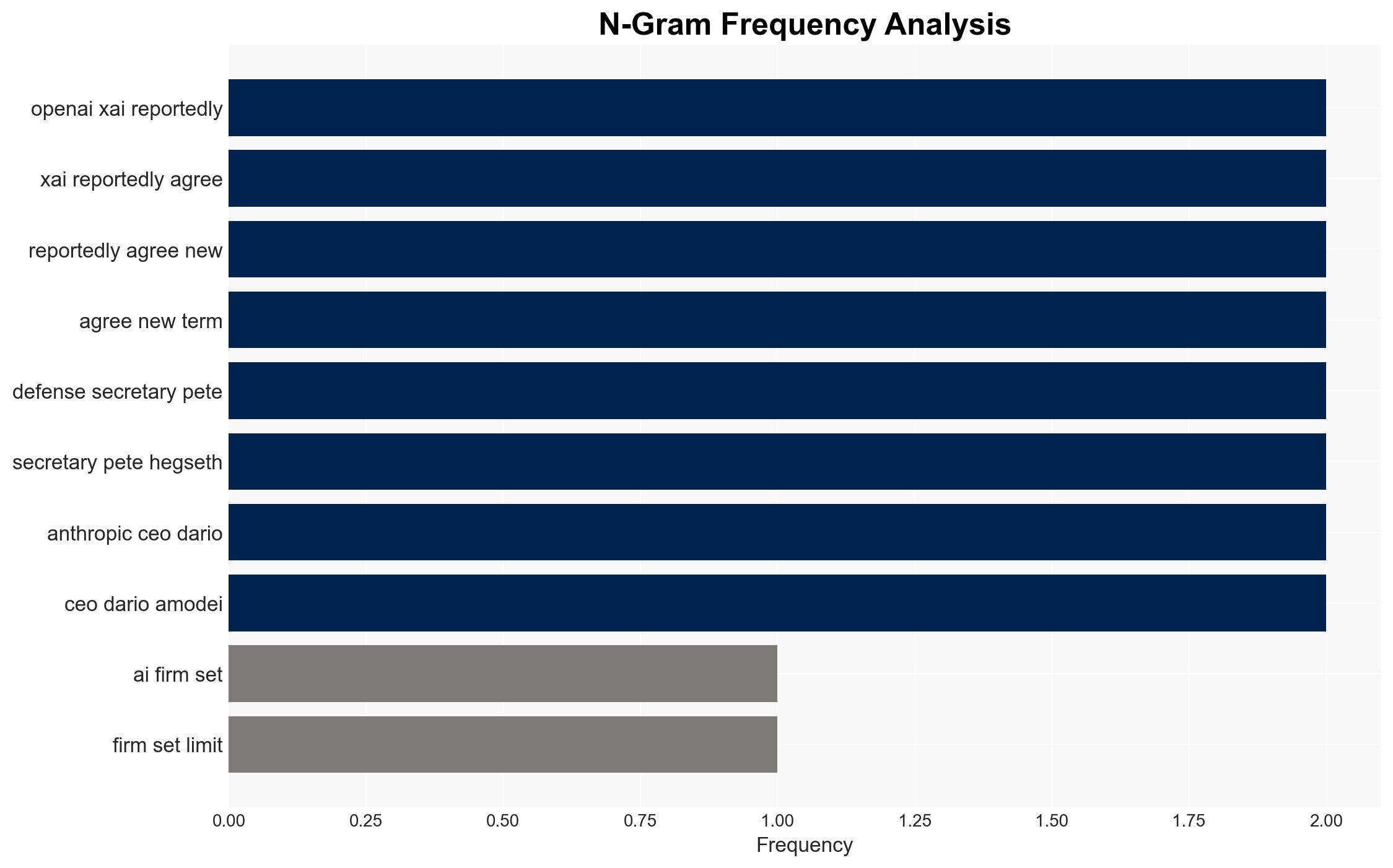

The ongoing dispute between Anthropic and the Pentagon over AI usage terms highlights significant tensions in military-tech partnerships, particularly over ethical boundaries in AI deployment. Anthropic’s refusal to comply with Pentagon demands, in contrast to its competitors’ compliance, suggests a potential shift in AI governance norms. The situation could have broad implications for national security and tech industry dynamics. We assess with moderate confidence that Anthropic’s stance may influence future policy discussions.

2. Competing Hypotheses

- Hypothesis A: Anthropic’s refusal to comply with Pentagon terms is a principled stand on ethical AI use, aiming to set industry standards. Supporting evidence includes Anthropic’s consistent red-line policy and public statements. Contradicting evidence is the compliance of competitors, which suggests industry norms may not align with Anthropic’s stance. Key uncertainties include internal motivations and potential undisclosed negotiations.

- Hypothesis B: Anthropic’s actions are primarily strategic, leveraging public and political pressure to negotiate more favorable terms with the Pentagon. Supporting evidence includes the timing of public statements and potential legal challenges. Contradicting evidence is the lack of immediate concessions from the Pentagon, indicating limited leverage.

- Assessment: Hypothesis A is currently better supported due to Anthropic’s consistent public messaging and ethical framing. However, shifts in Pentagon policy or industry reactions could alter this assessment. Key indicators include changes in competitor stances or new legislative proposals.

3. Key Assumptions and Red Flags

- Assumptions: The Pentagon’s designation of Anthropic as a “supply chain risk” will have significant operational impacts; Anthropic’s competitors will maintain their current compliance stance; public and political opinion will influence Pentagon decisions.

- Information Gaps: Details of the negotiations between Anthropic and the Pentagon; the specific terms agreed upon by OpenAI and xAI; potential internal dissent within Anthropic or its competitors.

- Bias & Deception Risks: Potential bias in public statements from both Anthropic and the Pentagon; risk of strategic misinformation by involved parties to influence public opinion or negotiations.

4. Implications and Strategic Risks

This development could set precedents for AI governance in military applications, influencing future policy and industry standards. The outcome may affect the balance between national security imperatives and ethical AI deployment.

- Political / Geopolitical: Potential for increased legislative scrutiny on AI military applications; influence on international norms regarding autonomous weapons.

- Security / Counter-Terrorism: Changes in AI deployment could impact operational capabilities and threat response strategies.

- Cyber / Information Space: Heightened focus on AI ethics may lead to increased cyber and information operations targeting AI firms.

- Economic / Social: Potential disruptions in tech industry partnerships; influence on public perception of AI technologies.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor developments in Anthropic-Pentagon negotiations; assess potential impacts on existing contracts with tech firms.

- Medium-Term Posture (1–12 months): Develop frameworks for ethical AI use in military contexts; engage with industry stakeholders to align on standards.

- Scenario Outlook:

  - Best: Consensus on ethical AI use leads to strengthened partnerships and innovation.

  - Worst: Escalation leads to significant industry disruptions and weakened national security capabilities.

  - Most-Likely: Incremental policy adjustments with ongoing negotiations and industry adaptation.

6. Key Individuals and Entities

- Anthropic (AI company)

- Dario Amodei (Anthropic CEO)

- Pentagon CTO Emil Michael

- Defense Secretary Pete Hegseth

- OpenAI (AI company)

- xAI (AI company)

- Donald Trump (U.S. President)

7. Thematic Tags

cybersecurity, AI ethics, military technology, national security, autonomous weapons, tech industry, government policy, supply chain risk

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
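As a rough illustration of the probabilistic inference named above, the sketch below applies a single Bayesian update to two competing hypotheses, labeled A and B to echo Section 2. The function name `bayes_update` and every number in it are hypothetical placeholders chosen for illustration. They are not estimates from this report and do not reflect how the assessment was actually produced.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities from priors and P(evidence | hypothesis)."""
    # Weight each prior by how well that hypothesis explains the evidence.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: weight / total for h, weight in unnormalized.items()}


# Hypothetical inputs, NOT taken from the report:
# equal priors, and one piece of evidence judged twice as likely under A as under B.
priors = {"A": 0.5, "B": 0.5}
likelihoods = {"A": 0.8, "B": 0.4}

posterior = bayes_update(priors, likelihoods)
for hypothesis, probability in posterior.items():
    print(f"Hypothesis {hypothesis}: {probability:.2f}")
```

With these placeholder inputs the update shifts weight toward A. In practice the same step would be repeated as each new indicator arrives, with the previous posterior used as the next prior.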
