AI Security Budgets Rise Amid Increasing Threats from Deepfakes and Misinformation

Published on: 2026-03-02

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification. See our Methodology and Why WorldWideWatchers.

Intelligence Report: AI risk moves into the security budget spotlight

1. BLUF (Bottom Line Up Front)

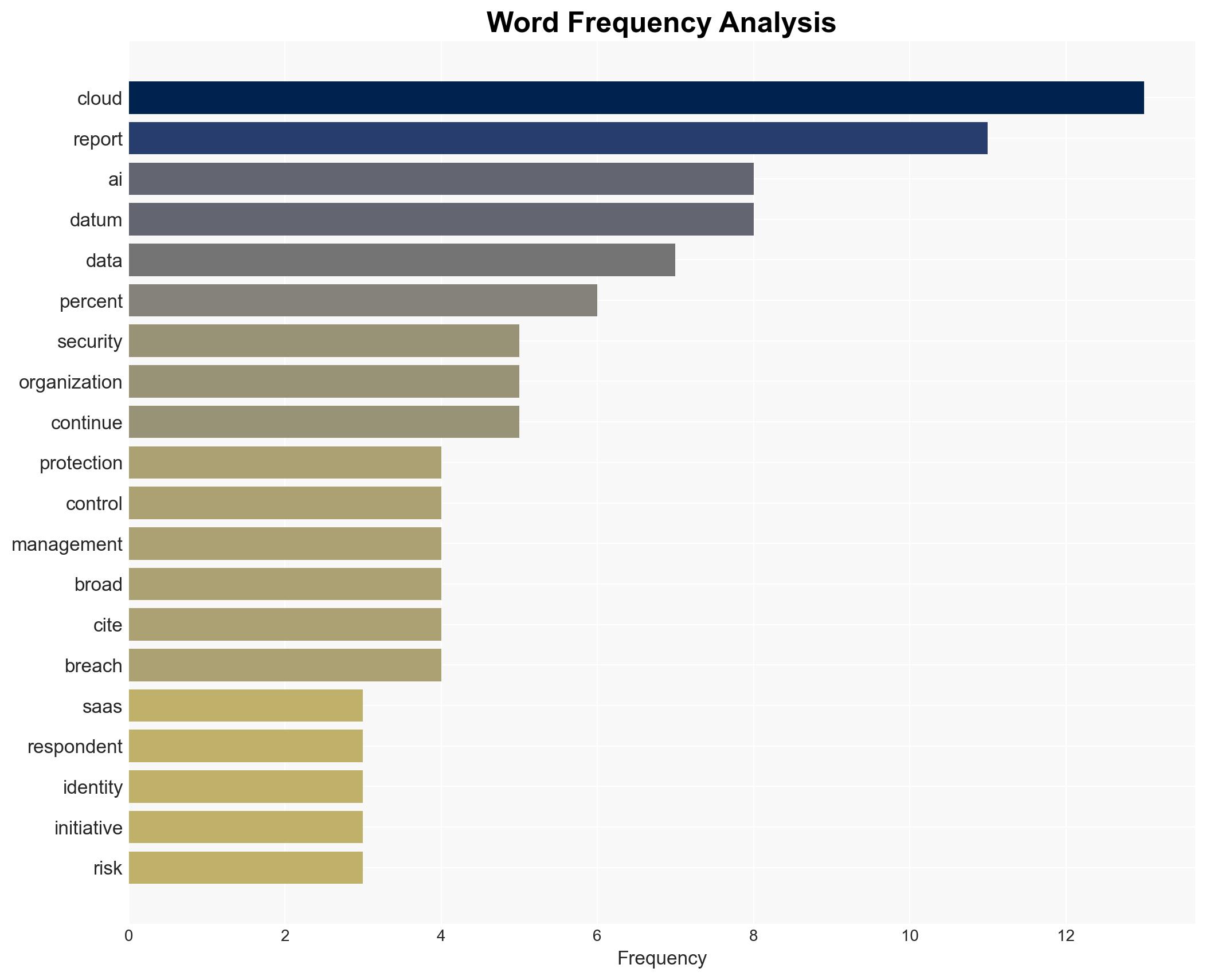

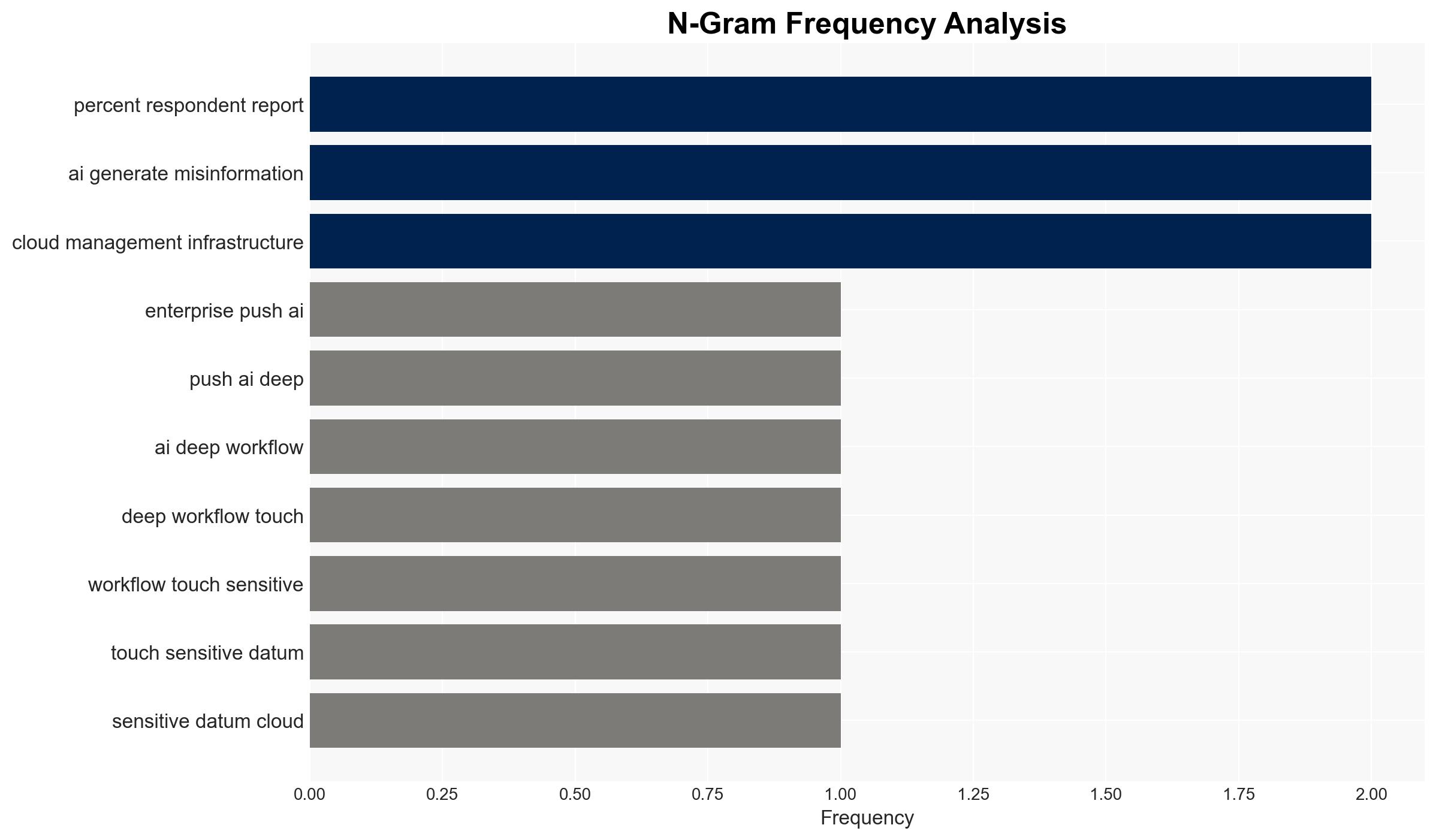

AI is being integrated into more enterprise workflows, and dedicated AI security budgets are rising alongside it. The trend reflects growing recognition of AI-related risks such as deepfake attacks and misinformation. The current security landscape is also marked by vulnerabilities in cloud environments and identity management. We assess with moderate confidence that AI security will become a central component of cybersecurity strategies.

2. Competing Hypotheses

- Hypothesis A: AI security will become a central focus in cybersecurity strategies, driven by the increasing integration of AI into sensitive workflows and the rise in AI-related threats. Supporting evidence includes the increase in dedicated AI security budgets and the prevalence of deepfake attacks. Key uncertainties include the pace of technological advancements and regulatory responses.

- Hypothesis B: AI security will remain a peripheral concern, with organizations continuing to address AI risks through broader cybersecurity programs. This is supported by the fact that many organizations still fund AI initiatives through existing security allocations. Contradicting evidence includes the reported increase in dedicated AI security budgets.

- Assessment: Hypothesis A is currently better supported due to the observed increase in dedicated AI security budgets and the specific threats posed by AI technologies. Indicators that could shift this judgment include changes in regulatory frameworks or significant technological breakthroughs in AI security.

3. Key Assumptions and Red Flags

- Assumptions: The integration of AI into workflows will continue to grow; AI-related threats will increase in sophistication; organizations will prioritize AI security in response to these threats.

- Information Gaps: Detailed data on the effectiveness of current AI security measures; insights into regulatory developments related to AI security.

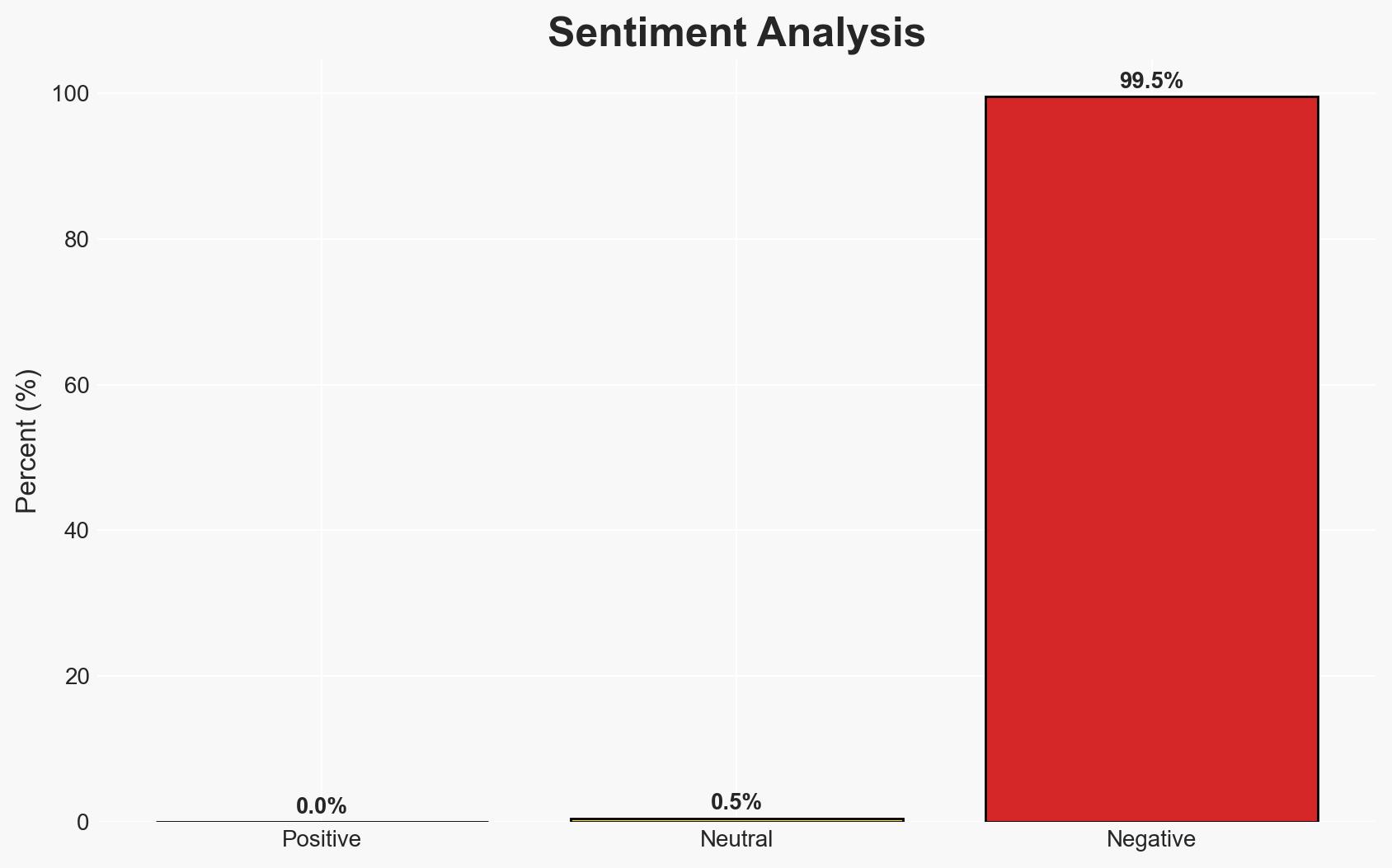

- Bias & Deception Risks: Potential bias in the survey data due to self-reporting; risk of underreporting AI-related incidents due to reputational concerns.

4. Implications and Strategic Risks

The increasing focus on AI security could lead to significant changes in cybersecurity strategies and resource allocation. This development may interact with broader technological and regulatory dynamics, influencing how organizations manage risk.

- Political / Geopolitical: Potential for international regulatory harmonization on AI security standards.

- Security / Counter-Terrorism: Enhanced threat modeling and response capabilities for AI-related threats.

- Cyber / Information Space: Increased focus on securing cloud environments and identity management systems.

- Economic / Social: Potential economic impacts from AI-related incidents, including reputational damage and financial losses.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Increase monitoring of AI-related threats; conduct a review of current AI security measures and identify gaps.

- Medium-Term Posture (1–12 months): Develop partnerships with AI security experts; invest in AI-specific security tools and training programs.

- Scenario Outlook:

  - Best: AI security becomes integrated into standard cybersecurity practices, reducing incident frequency.

  - Worst: AI-related incidents increase, leading to significant economic and reputational damage.

  - Most-Likely: Gradual integration of AI security measures with incremental improvements in threat detection and response.

6. Key Individuals and Entities

- Not clearly identifiable from open sources in this snippet.

7. Thematic Tags

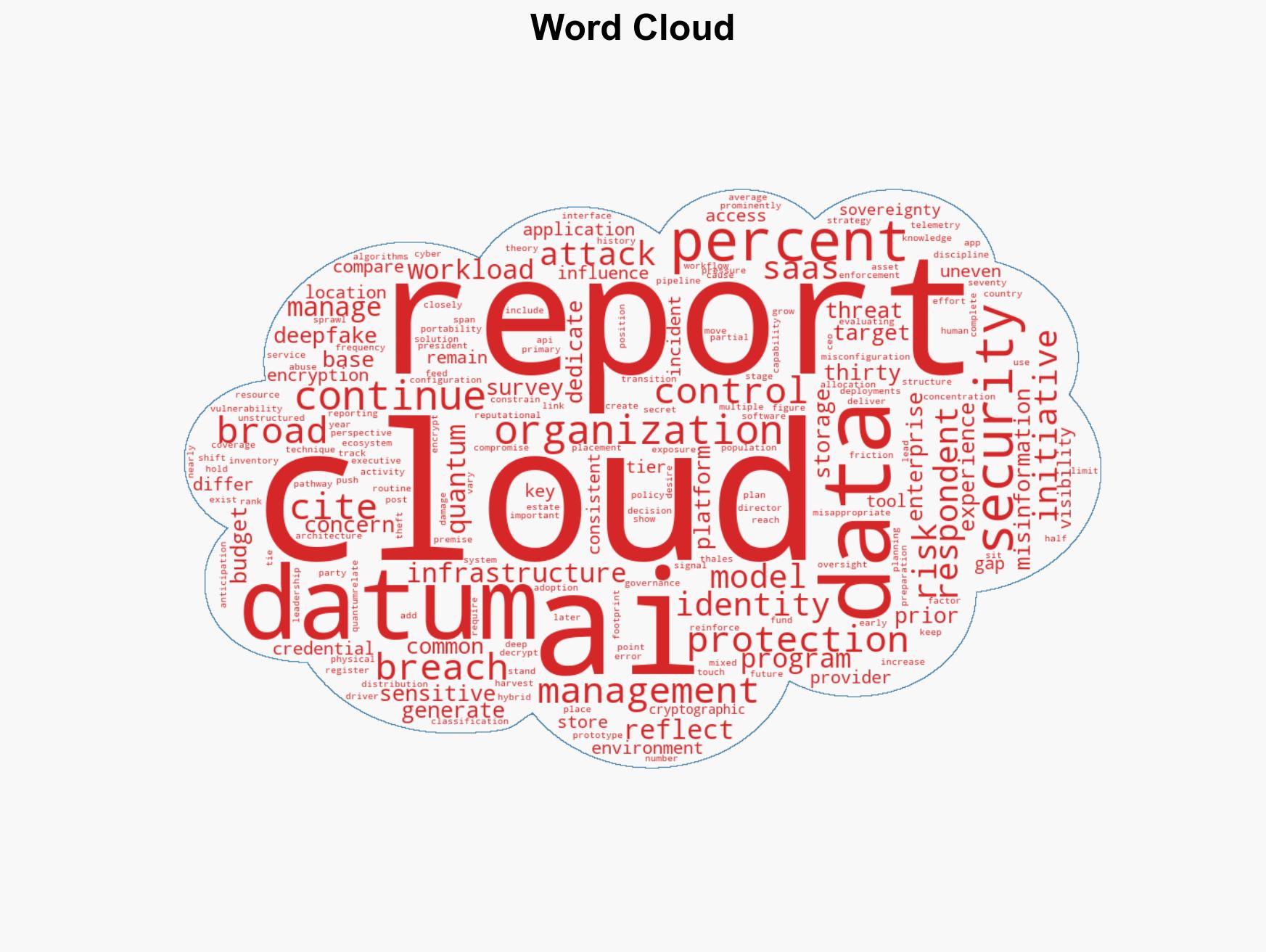

cybersecurity, AI security, cloud vulnerabilities, identity management, deepfake threats, cybersecurity strategy, data protection, misinformation

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Narrative Pattern Analysis: Deconstruct and track propaganda or influence narratives.
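
As a rough illustration of the Bayesian scenario modeling technique listed above, the sketch below updates the relative weight of Hypothesis A and Hypothesis B from Section 2 as each piece of evidence is considered. The priors and likelihoods are hypothetical placeholders chosen only to show the mechanics; they are not figures from this brief, and the function name `bayes_update` is an assumption made for the example.

```python
def bayes_update(priors, likelihoods):
    """Apply Bayes' rule: posterior is proportional to prior * P(evidence | hypothesis)."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    return {h: weight / total for h, weight in unnormalized.items()}


# Hypothetical starting point: no initial preference between the hypotheses.
priors = {"A": 0.5, "B": 0.5}

# Each entry pairs an evidence item from the brief with ASSUMED likelihoods,
# i.e. how probable that evidence would be if each hypothesis were true.
evidence = [
    ("Dedicated AI security budgets are increasing", {"A": 0.8, "B": 0.4}),
    ("Many organizations still use existing security allocations", {"A": 0.5, "B": 0.7}),
]

posterior = dict(priors)
for label, likelihoods in evidence:
    posterior = bayes_update(posterior, likelihoods)
    print(f"{label}: A={posterior['A']:.2f}, B={posterior['B']:.2f}")
```

With these placeholder inputs, Hypothesis A ends up ahead of B but well short of certainty, which is consistent in spirit with a moderate-confidence judgment. Different likelihood assumptions would shift the result, so the inputs should be treated as analyst judgments to be documented and challenged.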
