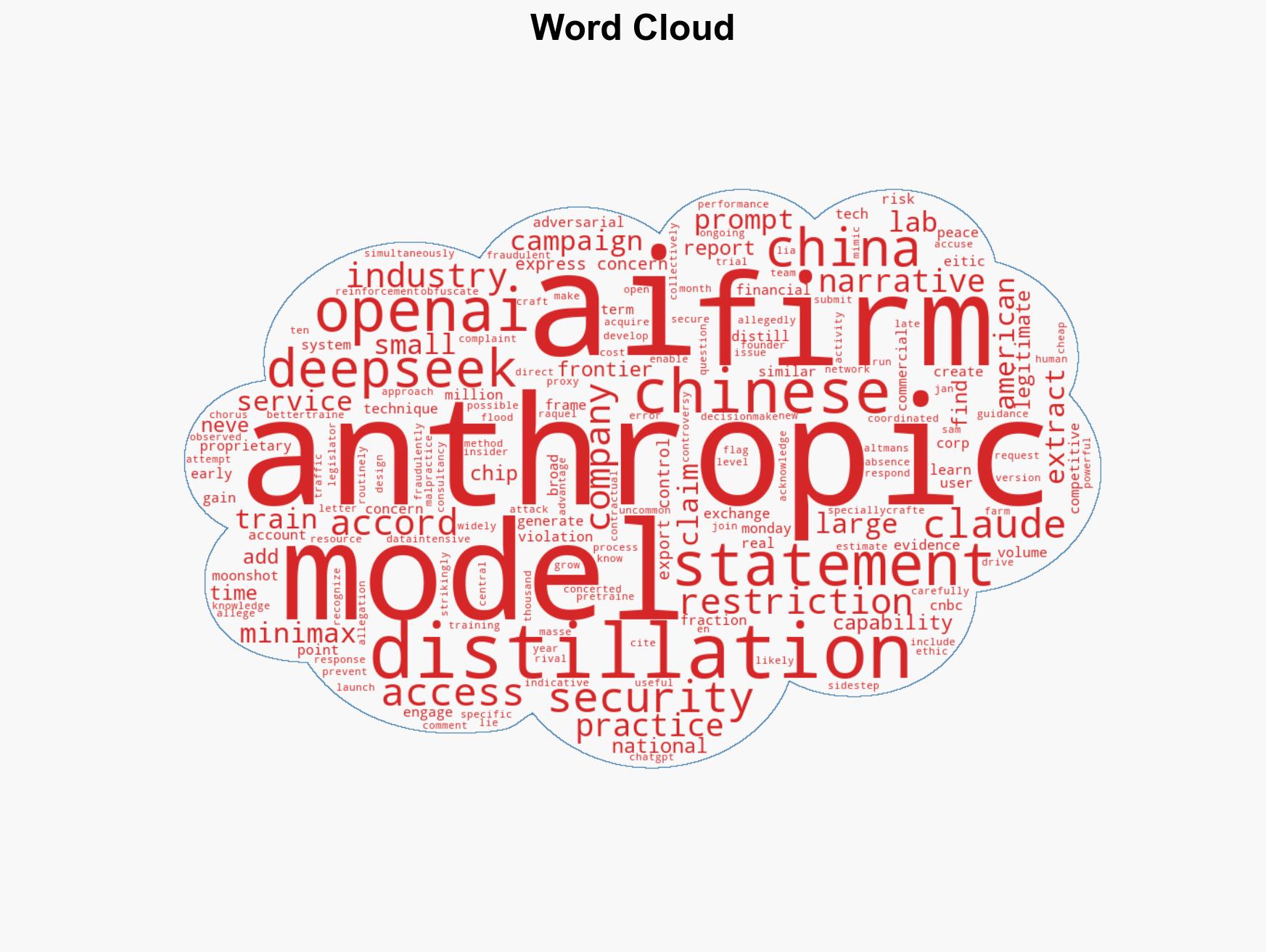

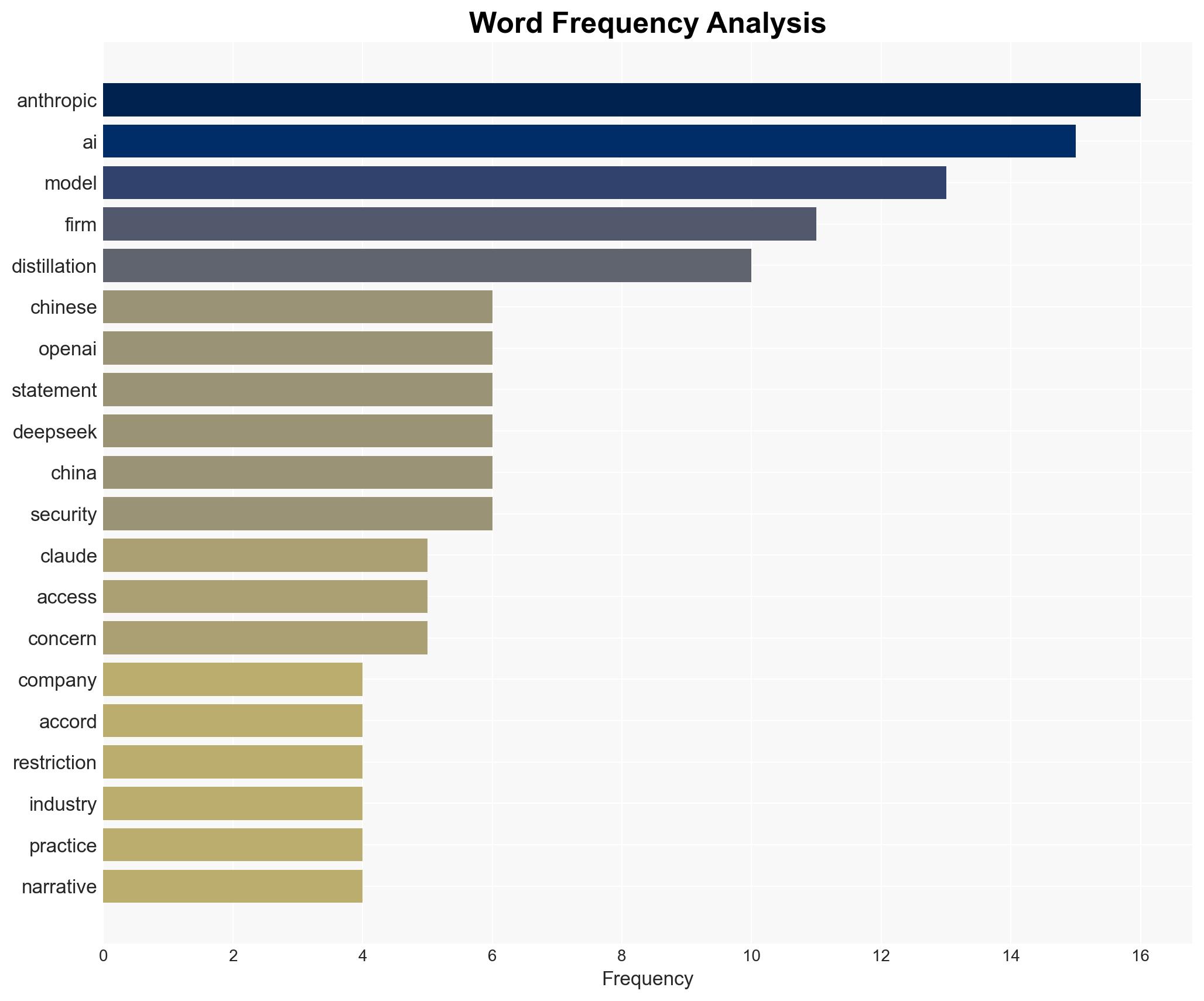

Anthropic accuses Chinese AI firms of coordinated efforts to exploit its model through distillation attacks

Published on: 2026-02-24

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Anthropic joins OpenAI in flagging ‘industrial-scale’ distillation campaigns by Chinese AI firms

1. BLUF (Bottom Line Up Front)

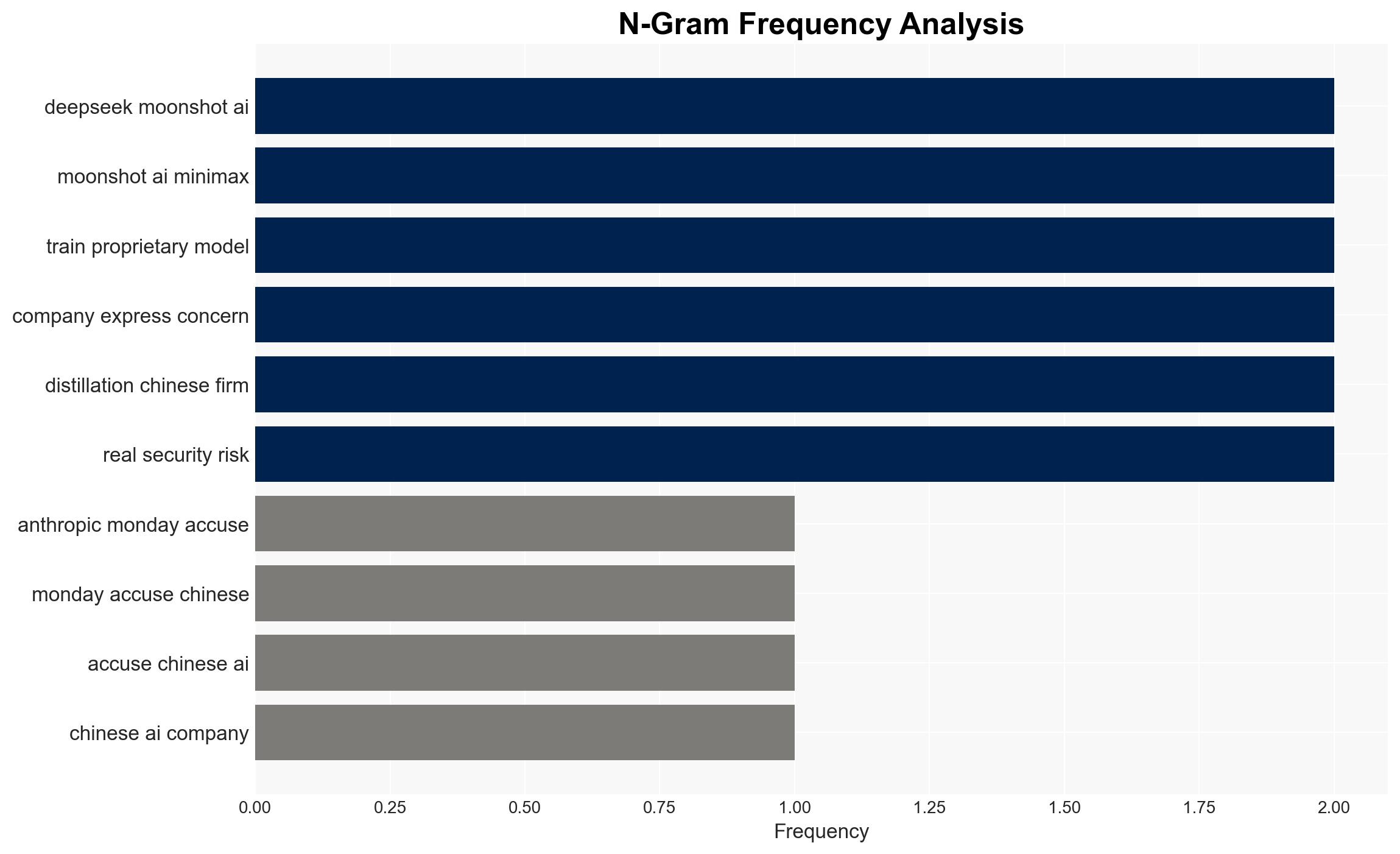

Anthropic and OpenAI have accused Chinese AI firms of conducting large-scale distillation attacks, in which a model's outputs are harvested to train a competing model, potentially in violation of the companies' terms of service. If confirmed, this activity could have significant implications for intellectual property protection and international AI competition. The most likely hypothesis is that these actions are part of a broader strategy by Chinese firms to enhance their AI capabilities. Overall confidence in this judgment is moderate, given the lack of direct responses from the accused firms.

2. Competing Hypotheses

- Hypothesis A: Chinese AI firms are deliberately engaging in coordinated distillation attacks to enhance their models by circumventing restrictions. This is supported by the reported use of proxy services and the scale of interactions with Anthropic’s model. However, the lack of direct evidence from the accused firms and their non-response introduce uncertainty.

- Hypothesis B: The alleged activities are exaggerated or misinterpreted, possibly due to miscommunication or technical errors in identifying the source of traffic. This hypothesis is less supported due to consistent reporting from multiple U.S. firms and the scale of the alleged activities.

- Assessment: Hypothesis A is currently better supported due to the detailed nature of the accusations and the patterns of behavior reported by both Anthropic and OpenAI. Key indicators that could shift this judgment include responses from the accused firms or independent verification of the traffic sources.

3. Key Assumptions and Red Flags

- Assumptions: The accused firms have the technical capability to conduct such attacks; Anthropic’s detection methods are accurate; the reported scale of interactions is indicative of malicious intent.

- Information Gaps: Direct evidence from the accused firms; independent verification of the traffic sources; clarity on the legal frameworks governing such activities.

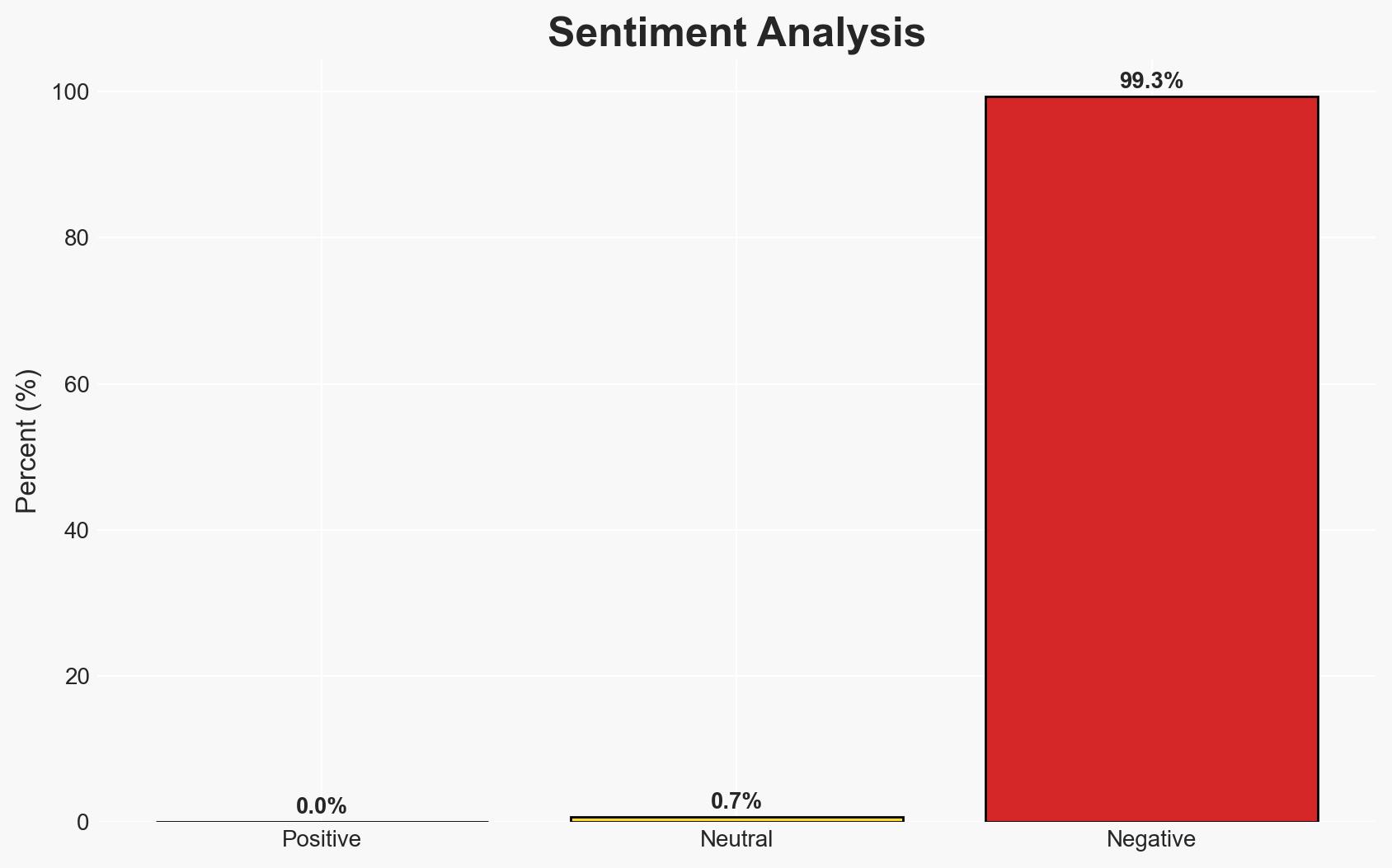

- Bias & Deception Risks: Potential bias in reporting from U.S. firms; risk of misinterpretation of technical data; possible strategic deception by the accused firms to mask true intentions.

4. Implications and Strategic Risks

This development could exacerbate tensions in the global AI race, influencing international policy and competitive dynamics. If unresolved, it may lead to stricter regulatory measures and impact cross-border collaborations.

- Political / Geopolitical: Potential escalation in U.S.-China tech tensions; influence on international AI policy discussions.

- Security / Counter-Terrorism: Increased scrutiny of AI model security and potential vulnerabilities.

- Cyber / Information Space: Highlights the need for robust cybersecurity measures in AI development; potential for increased cyber espionage activities.

- Economic / Social: Impact on the competitive landscape of AI firms; potential for economic repercussions if regulatory actions are taken.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Enhance monitoring of AI model interactions; engage with international partners to share intelligence and best practices.

- Medium-Term Posture (1–12 months): Develop resilience measures for AI models; explore partnerships for joint cybersecurity initiatives.

- Scenario Outlook:

  - Best: Resolution through diplomatic channels and industry collaboration.

  - Worst: Escalation into a broader tech conflict impacting global AI development.

  - Most-Likely: Ongoing tensions with incremental regulatory adjustments and increased security measures.

6. Key Individuals and Entities

- Anthropic

- OpenAI

- DeepSeek

- Moonshot AI

- MiniMax

- Lia Raquel Neves (EITIC)

7. Thematic Tags

cybersecurity, AI security, intellectual property, U.S.-China relations, cyber-espionage, technology competition, regulatory challenges, international cooperation

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model hostile behavior to identify vulnerabilities.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
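To make the Bayesian step concrete, the sketch below shows how a single new indicator could shift the relative weight of Hypothesis A and Hypothesis B from section 2. It is an illustration only: the function name `bayes_update`, the priors, and the likelihoods are all hypothetical placeholders chosen for the example, not estimates produced by or for this brief.

```python
def bayes_update(priors, likelihoods):
    """Apply Bayes' rule for one piece of evidence.

    priors:      {hypothesis: P(hypothesis)}
    likelihoods: {hypothesis: P(evidence | hypothesis)}
    Returns      {hypothesis: P(hypothesis | evidence)}
    """
    # Weight each prior by how well the hypothesis predicts the evidence.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: weight / total for h, weight in unnormalized.items()}


# Placeholder values (assumptions for illustration, not assessed figures).
priors = {"A": 0.5, "B": 0.5}

# Hypothetical indicator: independent verification of the traffic sources.
# Assumed to be far more likely to appear if Hypothesis A is true.
likelihoods = {"A": 0.8, "B": 0.2}

posterior = bayes_update(priors, likelihoods)
print(posterior)
```

With these placeholder inputs the weight on Hypothesis A rises from 0.5 to roughly 0.8. The same function can be applied repeatedly, feeding each posterior back in as the next prior as further indicators arrive.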
