Anthropic Challenges Pentagon’s Demands Amid Controversy Over AI Use in Military Operations

Published on: 2026-02-25

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Anthropic vs the Pentagon: Why AI firm is taking on Trump administration

1. BLUF (Bottom Line Up Front)

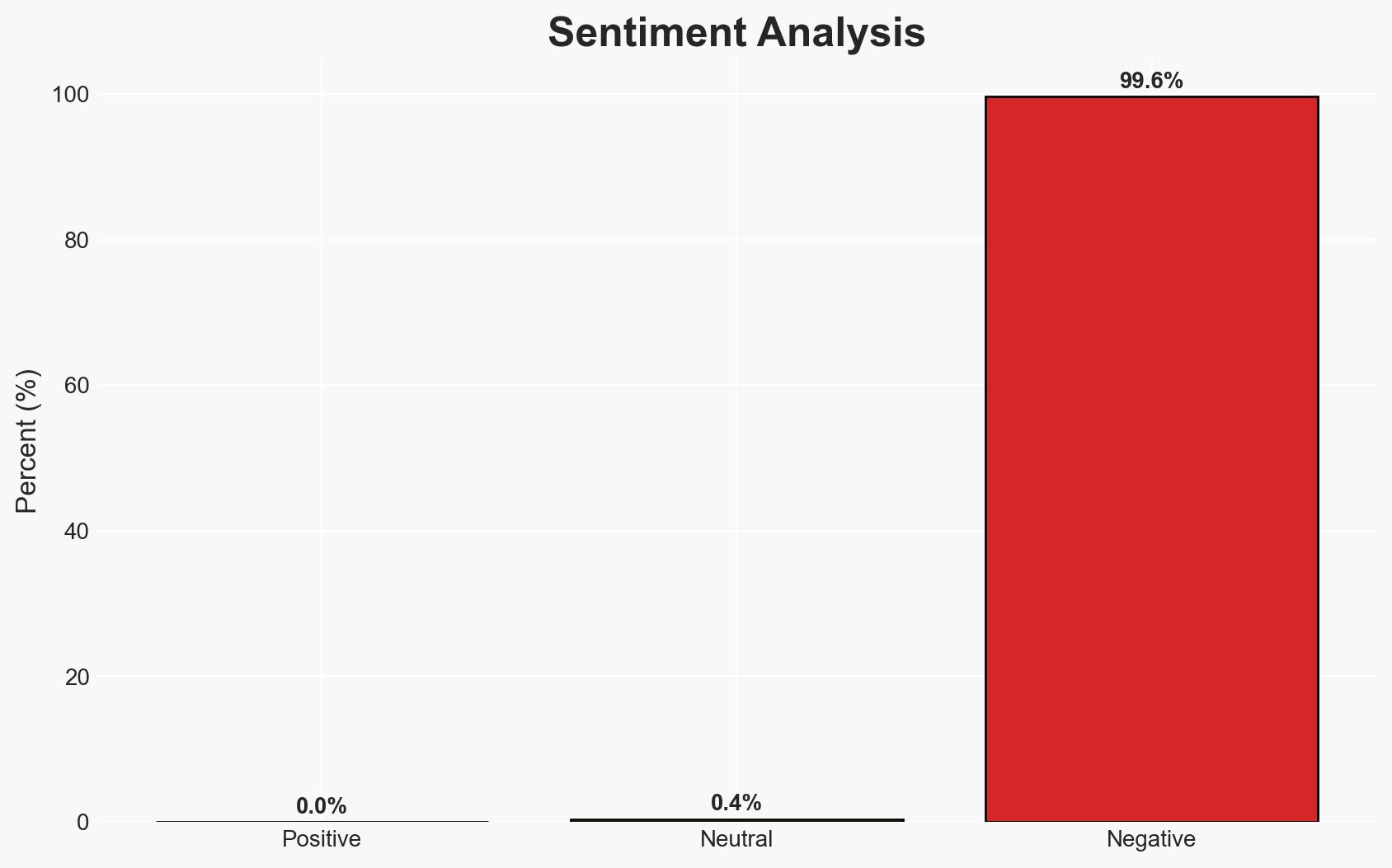

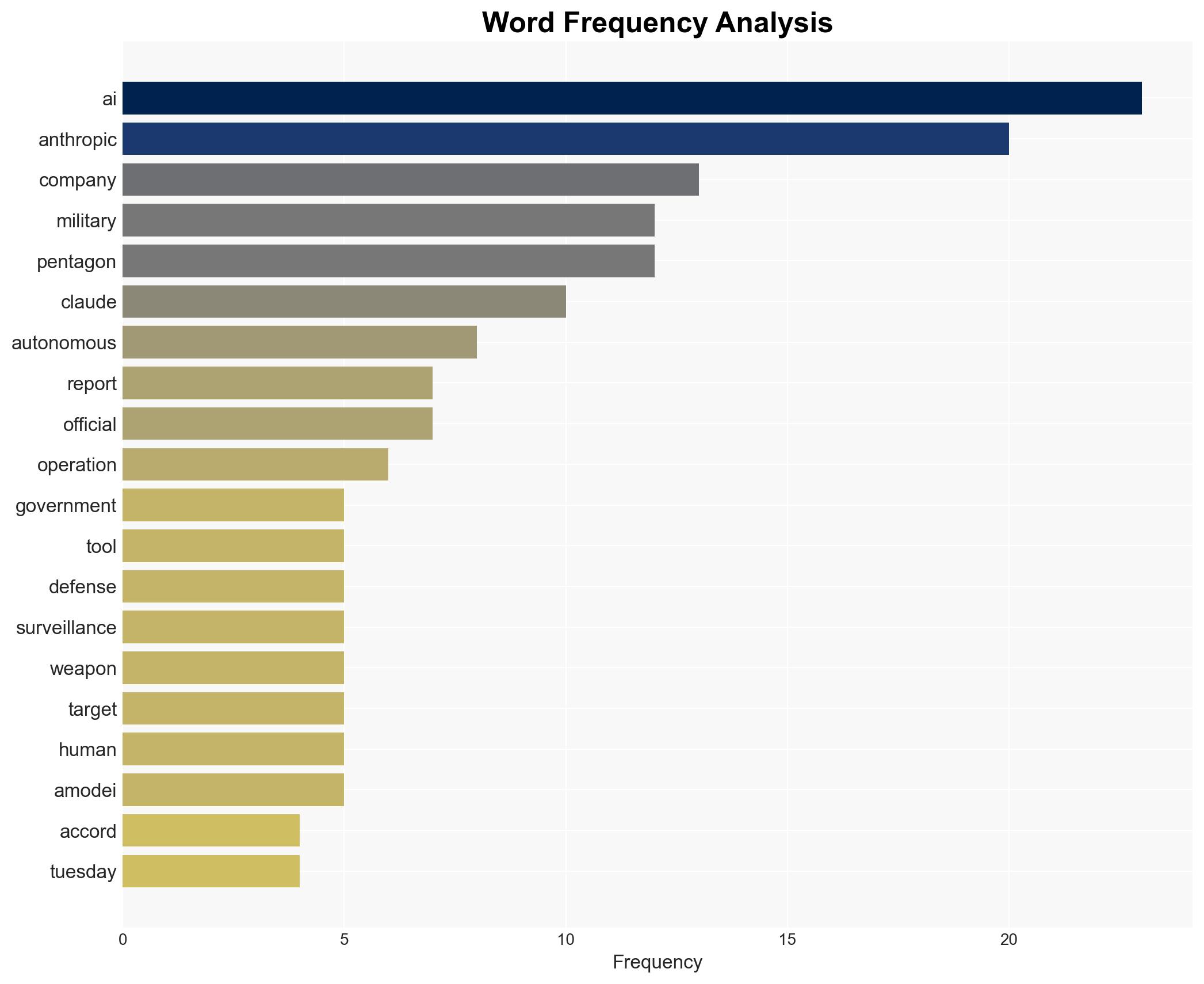

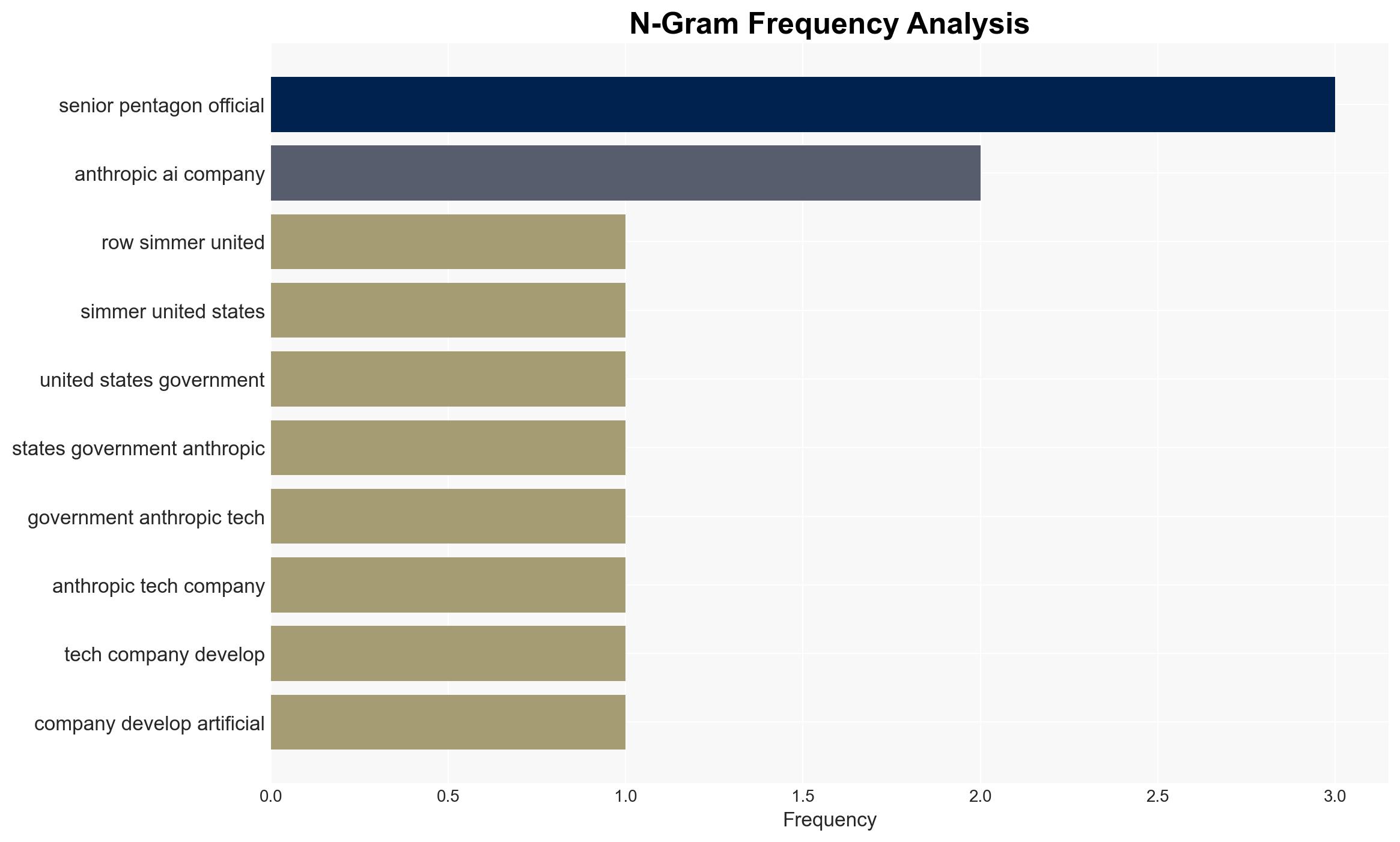

The confrontation between Anthropic and the U.S. Department of Defense over the use of AI technology in military operations underscores a significant tension between ethical AI development and national security imperatives. The most likely hypothesis is that Anthropic will maintain its stance on AI safeguards, potentially at the cost of its government contract. The outcome affects both U.S. military AI capabilities and the broader AI industry. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

- Hypothesis A: Anthropic will concede to the Pentagon’s demands to relax AI usage restrictions to retain its government contract. Supporting evidence includes the potential financial and strategic benefits of maintaining a government partnership. Contradicting evidence is Anthropic’s strong public commitment to ethical AI use.

- Hypothesis B: Anthropic will uphold its ethical guidelines, risking the loss of its government contract. Supporting evidence includes the company’s established reputation as a responsible AI developer and its public benefit corporation status. Contradicting evidence is the potential financial impact of losing the contract.

- Assessment: Hypothesis B is currently better supported due to Anthropic’s consistent public stance on ethical AI use and its corporate identity. Indicators that could shift this judgment include changes in leadership at Anthropic or significant financial pressures.

3. Key Assumptions and Red Flags

- Assumptions: Anthropic prioritizes ethical guidelines over financial incentives; the Pentagon is unwilling to compromise on AI usage policies; the U.S. government values AI capabilities for military operations.

- Information Gaps: Details on the specific terms of the government contract and the financial impact on Anthropic if the contract is lost.

- Bias & Deception Risks: Potential bias in reporting due to unnamed sources; risk of manipulation in public statements by involved parties to influence public opinion or negotiation outcomes.

4. Implications and Strategic Risks

This development could lead to increased scrutiny of AI ethics in defense applications and influence other AI companies’ policies. It may also affect U.S. military AI capabilities and international perceptions of U.S. technology ethics.

- Political / Geopolitical: Potential strain on U.S. relations with countries concerned about AI ethics in military applications.

- Security / Counter-Terrorism: Possible reduction in AI-enhanced military capabilities if Anthropic withdraws its technology.

- Cyber / Information Space: Increased risk of cyber operations targeting AI companies to influence or disrupt their policies.

- Economic / Social: Impact on the AI industry’s approach to ethical guidelines and potential shifts in public perception of AI technologies.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor negotiations between Anthropic and the Pentagon; assess potential impacts on military operations; engage with other AI developers on ethical standards.

- Medium-Term Posture (1–12 months): Develop resilience measures for AI capabilities in defense; explore partnerships with other AI firms; enhance ethical AI frameworks.

- Scenario Outlook:

  - Best: Anthropic and the Pentagon reach a compromise, enhancing ethical AI use in defense.

  - Worst: Anthropic withdraws, leading to a gap in AI capabilities and negative industry repercussions.

  - Most-Likely: Anthropic maintains its stance, resulting in contract loss but bolstering its ethical reputation.

6. Key Individuals and Entities

- Anthropic

- U.S. Department of Defense

- Pete Hegseth (U.S. Defense Secretary)

- Mrinank Sharma (Former AI safety researcher at Anthropic)

- Chinese state-sponsored hacking group (Unnamed)

7. Thematic Tags

cybersecurity, AI ethics, military technology, U.S. defense policy, corporate responsibility, international relations, AI industry dynamics

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
