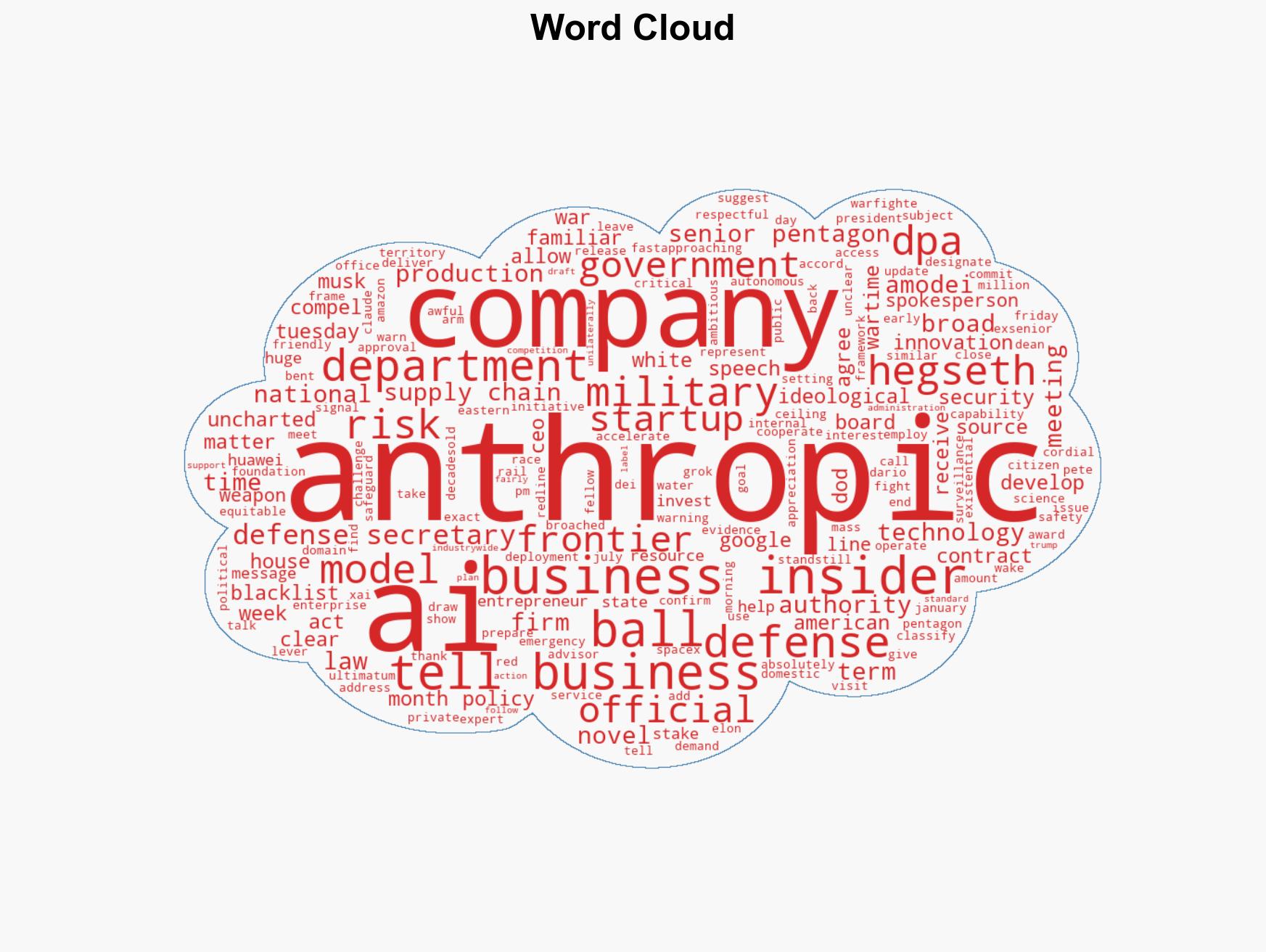

Anthropic Faces Urgent Deadline to Align with US Military or Risk Government Blacklisting

Published on: 2026-02-26

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

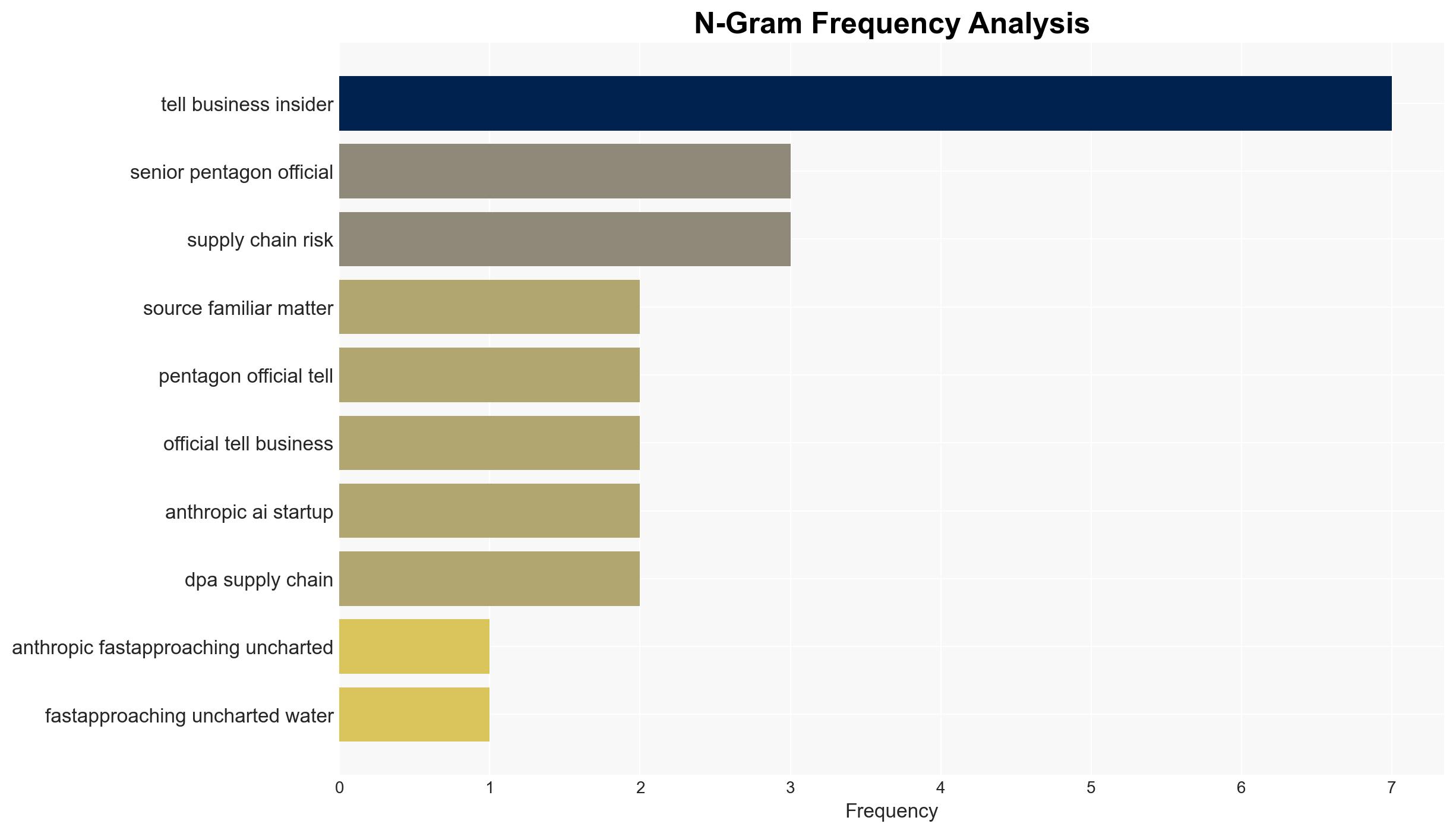

Intelligence Report: Anthropic has less than 36 hours before it barrels toward uncharted territory with the US government

1. BLUF (Bottom Line Up Front)

Anthropic is under pressure to comply with US Department of Defense demands regarding its AI model, Claude, and faces a looming deadline. The US government may invoke the Defense Production Act to compel cooperation, a step that would signal it treats the matter as one of significant national security concern. The situation is a novel intersection of AI technology and governmental authority. It is assessed with moderate confidence that Anthropic will seek a compromise to avoid severe repercussions.

2. Competing Hypotheses

- Hypothesis A: Anthropic will agree to the Department of Defense’s terms to avoid being blacklisted and maintain its operational status. This is supported by the high stakes involved and the potential use of the Defense Production Act, but contradicted by Anthropic’s stated red lines on autonomous weapons and domestic surveillance.

- Hypothesis B: Anthropic will resist the Department of Defense’s demands, prioritizing its ethical stance over potential governmental pressure. This is supported by its previous contract terms and ethical concerns, but contradicted by the significant financial and operational risks of non-compliance.

- Assessment: Hypothesis A is currently better supported due to the severe consequences of non-compliance and the potential for negotiation to address ethical concerns. Key indicators that could shift this judgment include public statements from Anthropic or changes in governmental stance.

3. Key Assumptions and Red Flags

- Assumptions: The US government is willing to enforce the Defense Production Act; Anthropic values its US operations and contracts; the Department of Defense’s demands are non-negotiable; Anthropic’s ethical concerns are genuine and significant.

- Information Gaps: Specific terms of the Department of Defense’s demands; internal decision-making processes within Anthropic; potential international reactions or pressures.

- Bias & Deception Risks: Potential bias in government sources towards emphasizing national security threats; Anthropic’s public statements may downplay internal willingness to compromise; possible strategic deception by either party to influence negotiations.

4. Implications and Strategic Risks

This development could set a precedent for governmental intervention in AI technologies, impacting future industry-government relations and technological innovation policies.

- Political / Geopolitical: Potential for increased tension between technology firms and government over national security priorities.

- Security / Counter-Terrorism: Enhanced military capabilities if Anthropic’s AI is integrated, but ethical concerns may affect public perception and trust.

- Cyber / Information Space: Risk of increased cyber espionage targeting Anthropic or its partners due to heightened profile.

- Economic / Social: Possible economic impact on Anthropic and its investors if blacklisted; social discourse on ethical AI use may intensify.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor Anthropic’s public communications and government statements; engage in dialogue with stakeholders to clarify terms and explore compromise solutions.

- Medium-Term Posture (1–12 months): Develop resilience measures for AI firms facing governmental pressure; foster partnerships to balance innovation with security needs.

- Scenario Outlook:

  - Best: Anthropic and the government reach a mutually beneficial agreement.

  - Worst: Anthropic is blacklisted, leading to significant operational and financial setbacks.

  - Most-Likely: A compromise is reached, with some adjustments to Anthropic’s operational guidelines.

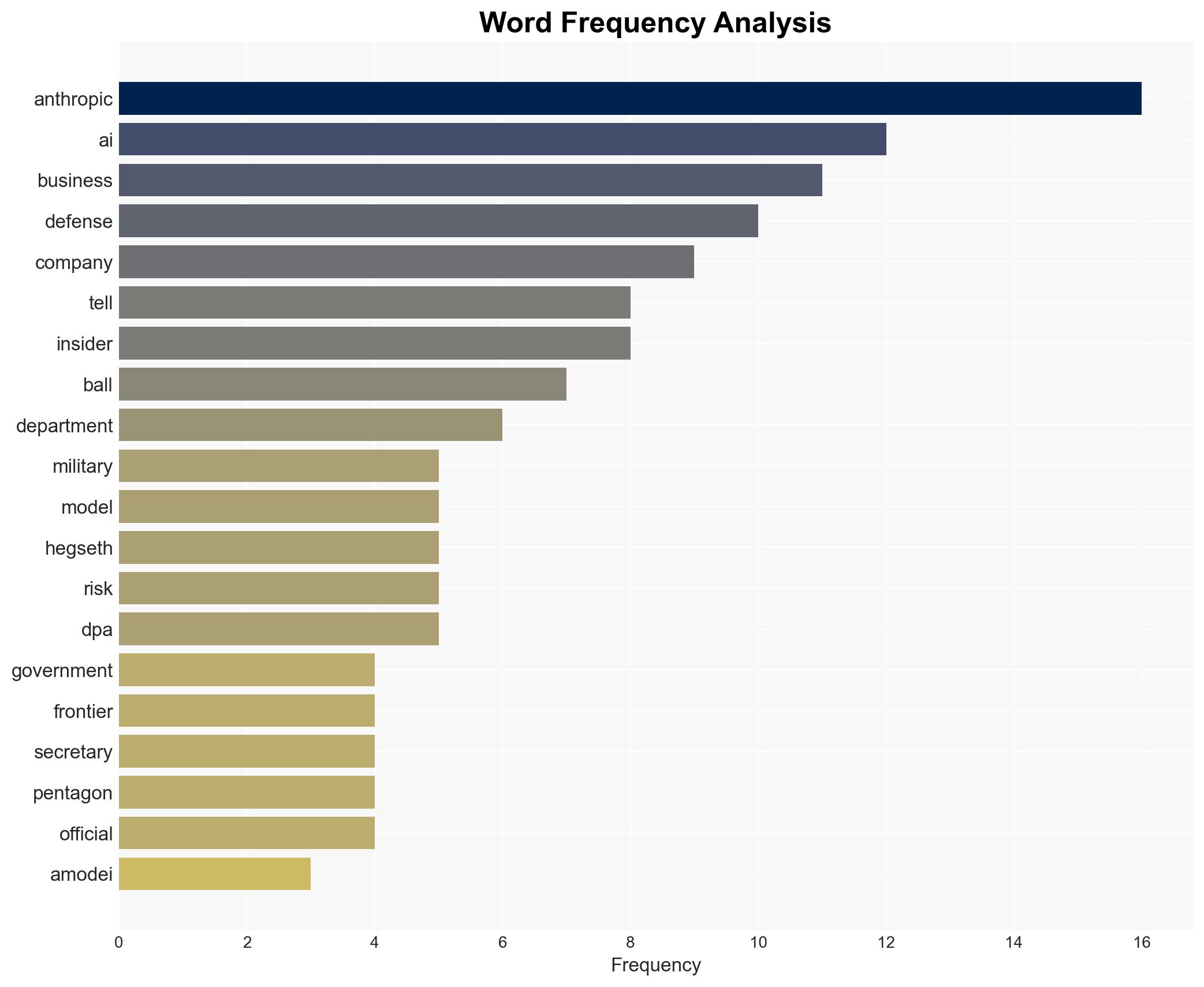

6. Key Individuals and Entities

- Dario Amodei, CEO of Anthropic

- Pete Hegseth, US Defense Secretary

- Anthropic, AI startup

- US Department of Defense

7. Thematic Tags

national security threats, national security, artificial intelligence, defense policy, government intervention, ethical AI, military technology, US government

Structured Analytic Techniques Applied

- Cognitive Bias Stress Test: Expose and correct potential biases in assessments through red-teaming and structured challenge.

- Bayesian Scenario Modeling: Use probabilistic forecasting for conflict trajectories or escalation likelihood.

- Network Influence Mapping: Map relationships between state and non-state actors for impact estimation.
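As a rough illustration of what Bayesian scenario modeling can look like in practice, the sketch below updates the relative weight of Hypothesis A and Hypothesis B after one new indicator is observed. It is a minimal sketch and not the method used to produce this brief. The function name `bayes_update`, the hypothesis labels, and every number are hypothetical placeholders chosen only to show the arithmetic; none of them come from the source reporting.

```python
def bayes_update(priors, likelihoods):
    """Return P(hypothesis | evidence) for each hypothesis using Bayes' rule.

    priors:      dict mapping hypothesis name -> P(hypothesis)
    likelihoods: dict mapping hypothesis name -> P(evidence | hypothesis)
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: value / total for h, value in unnormalized.items()}


# Hypothetical placeholder values, NOT estimates from this brief.
priors = {"A_comply": 0.5, "B_resist": 0.5}

# Assumed indicator: a public statement from Anthropic reaffirming its red lines.
# The likelihoods state how probable that statement is under each hypothesis.
likelihoods = {"A_comply": 0.2, "B_resist": 0.6}

posterior = bayes_update(priors, likelihoods)
for name, probability in posterior.items():
    print(f"{name}: {probability:.2f}")
```

With these placeholder inputs, an indicator that is three times more likely under Hypothesis B moves an even prior to 0.25 for A and 0.75 for B. The same update can be repeated as each further indicator arrives, using the previous posterior as the new prior.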
