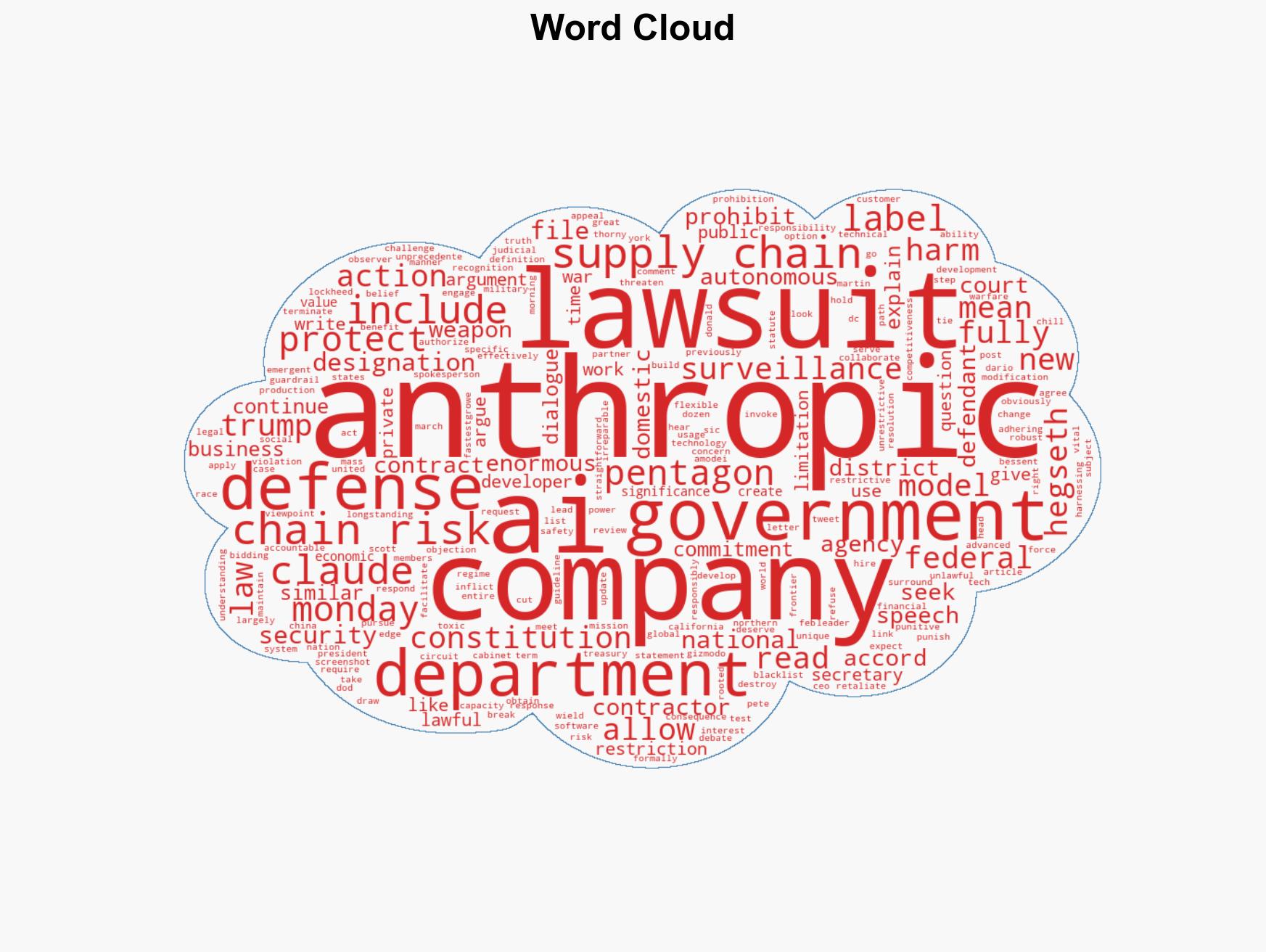

Anthropic Files Lawsuits Against Pentagon Over Supply Chain Risk Designation and Contract Prohibitions

Published on: 2026-03-09

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Anthropic Officially Sues the Pentagon for Labeling the AI Company a Supply Chain Risk

1. BLUF (Bottom Line Up Front)

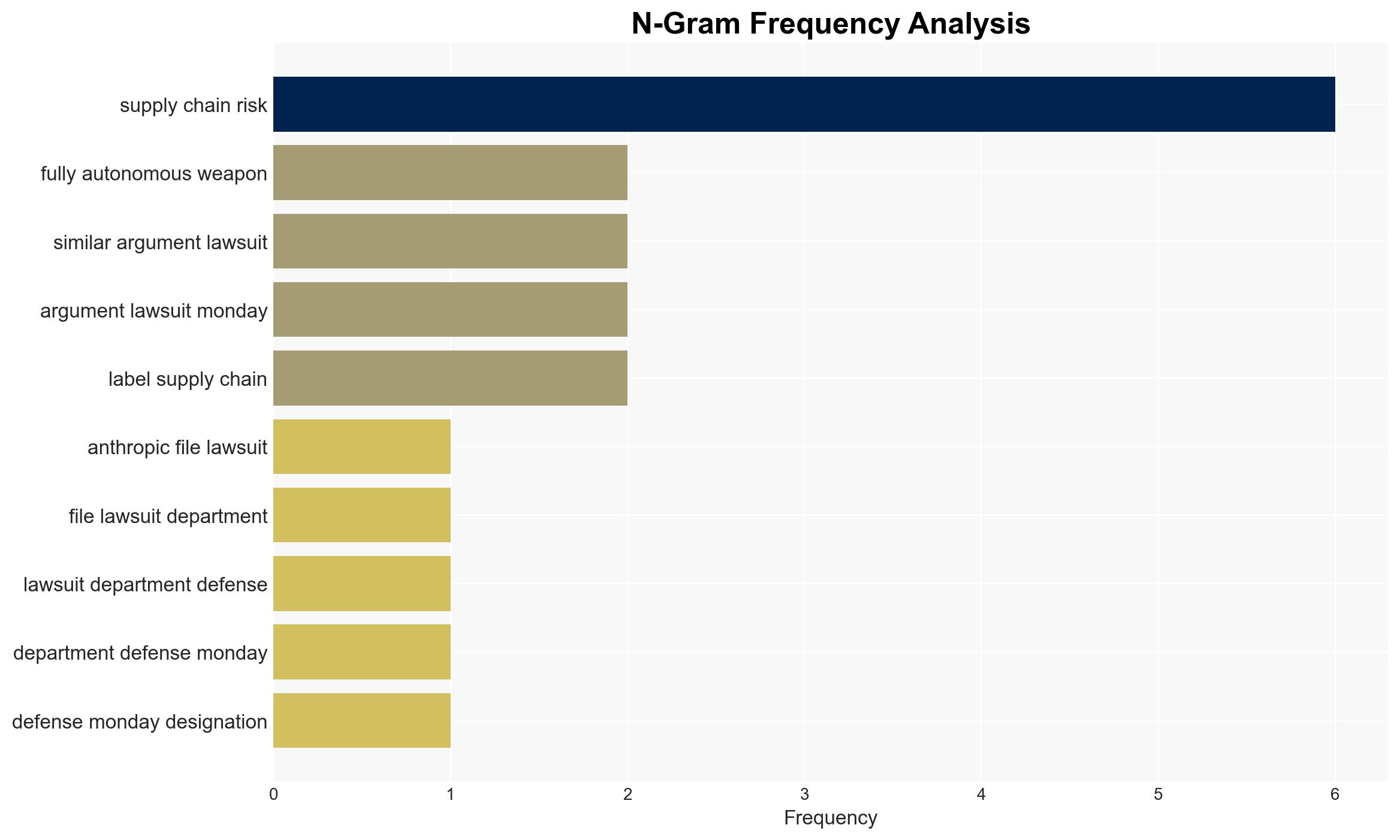

Anthropic has initiated legal action against the U.S. Department of Defense after the department designated the company a supply chain risk, a move that could significantly impact Anthropic's business operations and government relations. The most likely hypothesis is that the designation stems from Anthropic’s refusal to comply with government demands for AI use in surveillance and autonomous weaponry. The dispute affects both Anthropic and the broader AI industry. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

- Hypothesis A: The Pentagon labeled Anthropic a supply chain risk primarily due to its refusal to allow its AI technology to be used for mass surveillance and autonomous weapons. Supporting evidence includes Anthropic’s stated objections and the timing of the designation following government threats. However, the extent of internal government deliberations remains unclear.

- Hypothesis B: The designation is part of a broader strategic maneuver to exert control over AI technologies deemed critical to national security, irrespective of specific compliance issues. This hypothesis is supported by historical precedents of government intervention in tech sectors but lacks direct evidence linking such a strategy to the current case.

- Assessment: Hypothesis A is currently better supported due to the direct link between Anthropic’s refusal and the subsequent designation. Key indicators that could shift this judgment include new evidence of broader strategic motives or changes in government policy towards AI companies.

3. Key Assumptions and Red Flags

- Assumptions: The government views AI technology as critical to national security; Anthropic’s refusal is based on genuine technical and ethical concerns; the legal system will provide a fair review of the case.

- Information Gaps: Details of internal Pentagon deliberations; the full scope of Anthropic’s technical capabilities and limitations; potential undisclosed negotiations between Anthropic and the government.

- Bias & Deception Risks: Potential bias in Anthropic’s public statements; government communications may omit strategic considerations; media reports could reflect partisan perspectives.

4. Implications and Strategic Risks

This development could set a precedent for government interaction with AI companies, influencing future regulatory and contractual landscapes.

- Political / Geopolitical: Potential strain on public-private partnerships in tech; international perception of U.S. tech policy could be affected.

- Security / Counter-Terrorism: Possible delays or disruptions in AI deployment for national security purposes.

- Cyber / Information Space: Increased scrutiny on AI cybersecurity measures; potential for misinformation campaigns around AI capabilities and ethics.

- Economic / Social: Impact on AI industry investment and innovation; public debate on AI ethics and government oversight could intensify.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor legal proceedings and government statements; engage with industry stakeholders to assess broader impacts.

- Medium-Term Posture (1–12 months): Develop resilience strategies for AI companies facing similar government pressures; foster public-private dialogue on AI ethics and security.

- Scenario Outlook: Best: Resolution through negotiation, maintaining industry-government collaboration. Worst: Prolonged legal battle, leading to industry distrust. Most-Likely: Legal proceedings prompt policy reviews and potential compromises.

6. Key Individuals and Entities

- Anthropic

- U.S. Department of Defense

- President Donald Trump

- Defense Secretary Pete Hegseth

- Anthropic CEO Dario Amodei

- Treasury Secretary Scott Bessent

7. Thematic Tags

cybersecurity, AI ethics, national security, government contracts, legal action, public-private partnerships, surveillance, autonomous weapons

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
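As a rough illustration of how probabilistic inference can weigh competing hypotheses such as A and B above, the following minimal sketch applies a single Bayes update. The function name `bayes_update`, the priors, and the likelihoods are all hypothetical placeholders chosen for illustration; none of the numbers come from this report or its sources.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities for each hypothesis.

    priors:      dict mapping hypothesis label -> prior probability
    likelihoods: dict mapping hypothesis label -> P(evidence | hypothesis)
    """
    # Multiply each prior by the likelihood of the observed evidence.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence is impossible under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: value / total for h, value in unnormalized.items()}


# Illustrative placeholder values only (assumptions, not report data).
priors = {"A": 0.5, "B": 0.5}
# Assumed probability of seeing the designation follow the refusal
# under each hypothesis.
likelihoods = {"A": 0.8, "B": 0.4}

posterior = bayes_update(priors, likelihoods)
for label, probability in sorted(posterior.items()):
    print(f"Hypothesis {label}: {probability:.2f}")
```

With these placeholder inputs the update favors Hypothesis A, which mirrors the direction of the assessment in section 2 but says nothing about its actual strength. Real use would require defensible priors and likelihoods for each piece of evidence.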
