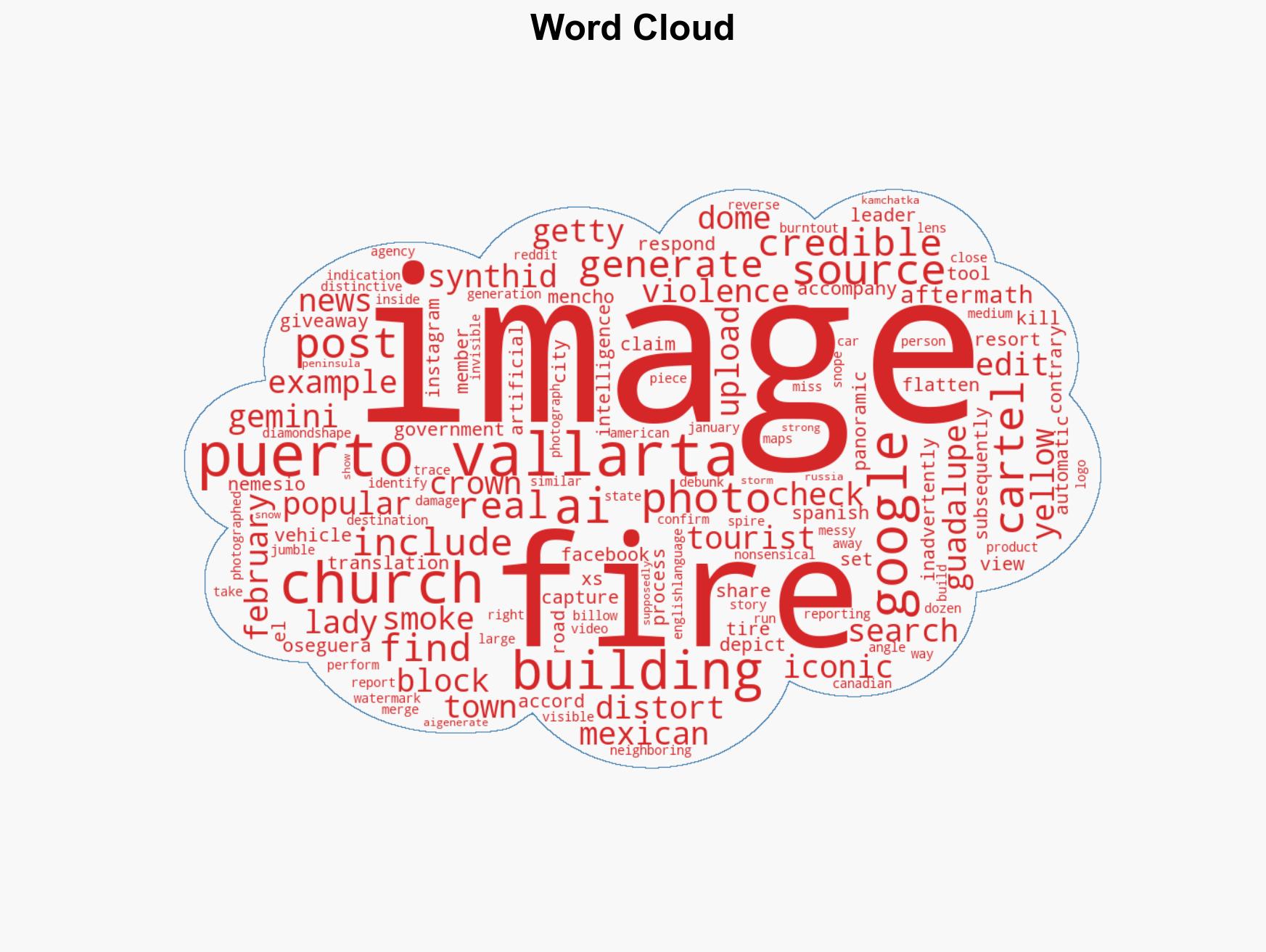

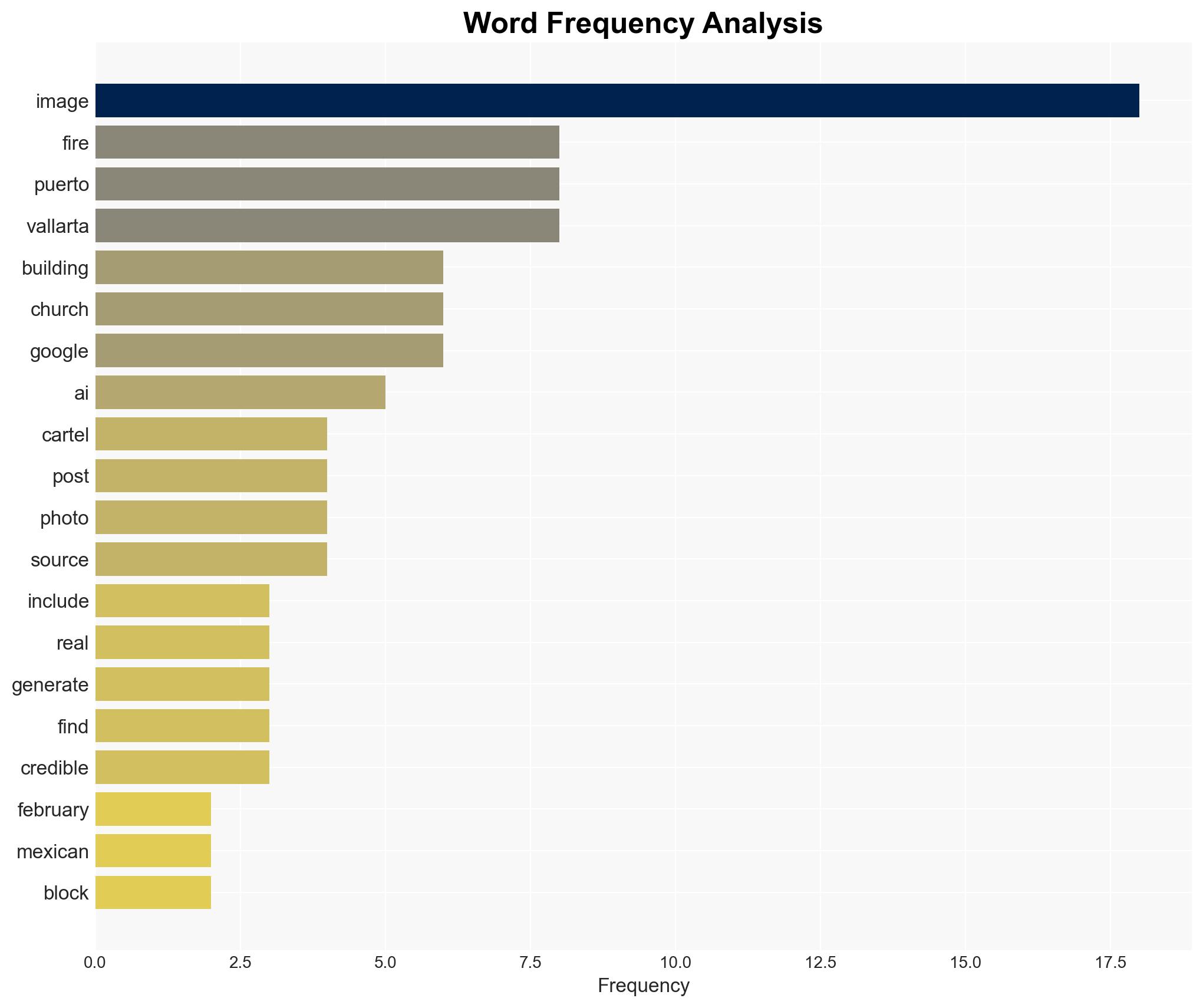

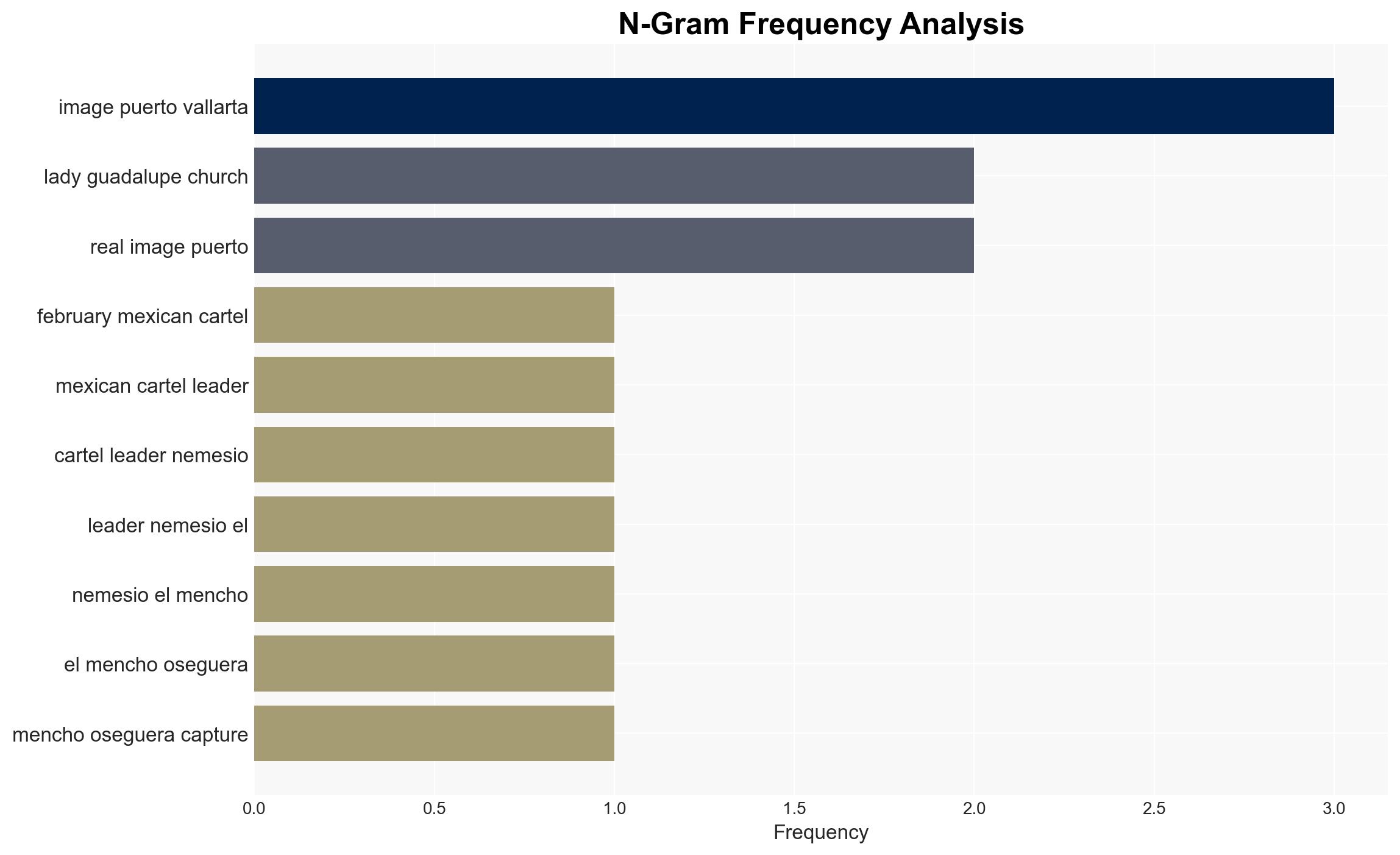

Beware of AI-generated images misrepresenting Puerto Vallarta amid cartel violence aftermath

Published on: 2026-02-25

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Don’t fall for AI-generated images of Puerto Vallarta after cartel attacks

1. BLUF (Bottom Line Up Front)

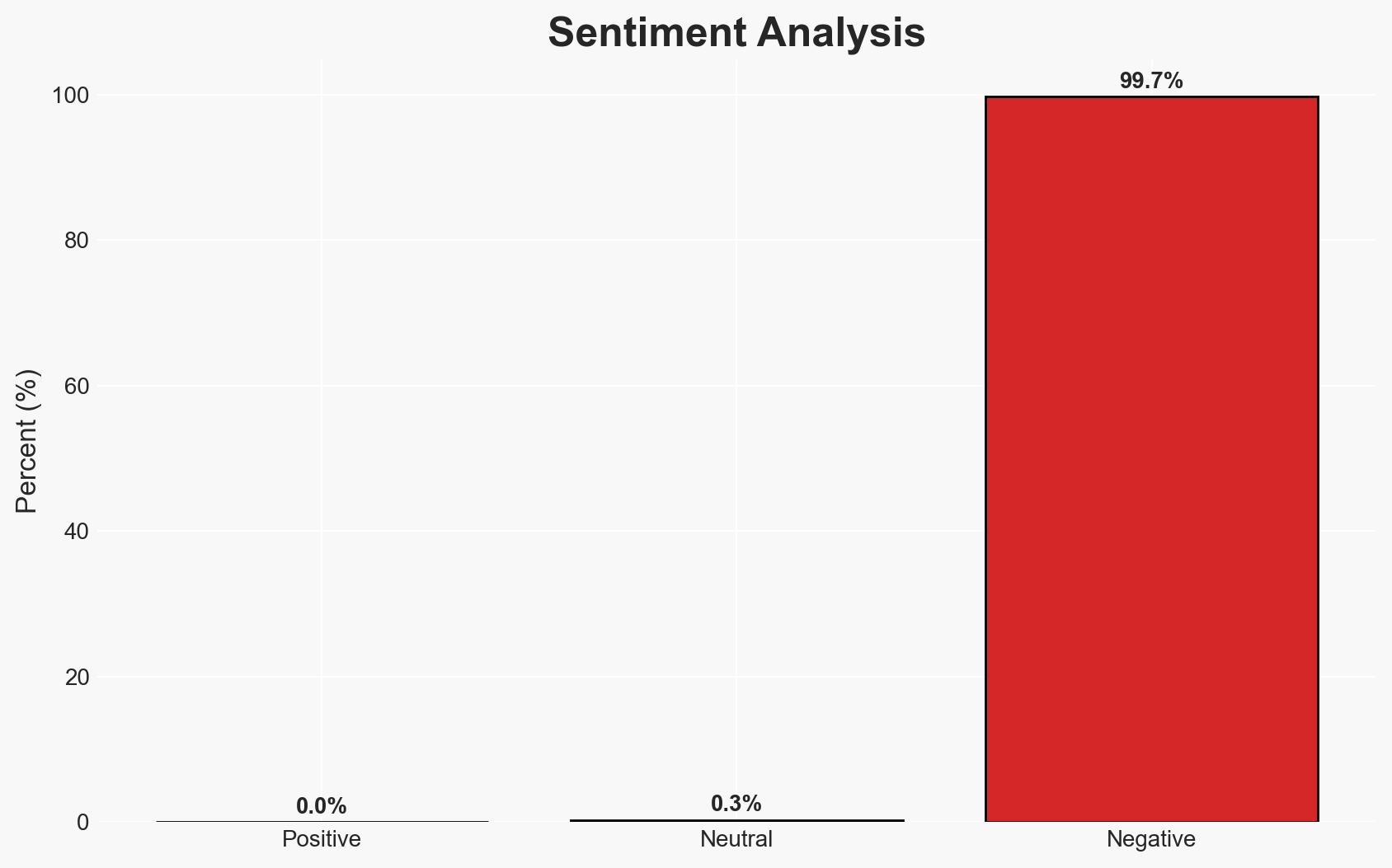

AI-generated images falsely depicting Puerto Vallarta in flames after cartel violence are circulating, likely in an attempt to manipulate public perception and incite fear. The best-supported hypothesis is that the images are part of a disinformation campaign. Those affected include local authorities, tourists, and international stakeholders. Overall confidence in this assessment is moderate because there is limited direct evidence of the intent behind the images' creation.

2. Competing Hypotheses

- Hypothesis A: The AI-generated images are part of a deliberate disinformation campaign aimed at destabilizing the region or damaging its tourism industry. Supporting evidence includes the use of AI tools to create realistic yet false images and the lack of credible sources corroborating the depicted events. Key uncertainties include the identity and motivations of the perpetrators.

- Hypothesis B: The images are the result of individuals experimenting with AI technology without malicious intent. Supporting evidence includes the presence of AI tool watermarks and the possibility of amateur users sharing the images without understanding the consequences. Contradicting evidence includes the timing and context of the images’ release.

- Assessment: Hypothesis A is currently better supported due to the context of cartel violence and the potential strategic benefits of spreading fear and misinformation. Indicators that could shift this judgment include credible claims of responsibility or further evidence of coordinated dissemination efforts.

3. Key Assumptions and Red Flags

- Assumptions: The images were created using AI tools; the dissemination was intended to mislead; the images have been widely viewed and shared.

- Information Gaps: The identity of the image creators and their motivations; the extent of the images’ impact on public perception.

- Bias & Deception Risks: Confirmation bias in interpreting the images as part of a broader disinformation campaign; potential over-reliance on AI detection tools without corroborating evidence.

4. Implications and Strategic Risks

The use of AI-generated images in disinformation campaigns could escalate tensions in regions already experiencing instability. This development may influence public perception and policy decisions.

- Political / Geopolitical: Potential for strained relations between Mexico and tourist-origin countries if Puerto Vallarta is perceived as unsafe.

- Security / Counter-Terrorism: Increased operational challenges for law enforcement in distinguishing real threats from fabricated ones.

- Cyber / Information Space: Growing sophistication in digital misinformation tactics, necessitating enhanced detection and response capabilities.

- Economic / Social: Potential decline in tourism revenue, and potential social unrest, driven by heightened fear and misinformation.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Enhance monitoring of social media for similar disinformation attempts; issue public advisories clarifying the situation.

- Medium-Term Posture (1–12 months): Develop partnerships with tech companies to improve AI-generated content detection; invest in public awareness campaigns about misinformation.

- Scenario Outlook:

  - Best: Effective counter-disinformation measures lead to restored public confidence.

  - Worst: Continued spread of misinformation results in significant economic and social disruption.

  - Most likely: Ongoing challenges in managing misinformation with gradual improvements in detection and public education.

6. Key Individuals and Entities

- Nemesio “El Mencho” Oseguera, Mexican government, Google AI

7. Thematic Tags

cybersecurity, disinformation, AI-generated content, cartel violence, tourism impact, social media, public perception, misinformation

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
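As a rough illustration of the probabilistic inference behind Bayesian scenario modeling, the sketch below updates belief in Hypotheses A and B from section 2 as pieces of evidence are weighed. It is a minimal example, not the method used to produce this brief: the function name `bayes_update`, the hypothesis keys, and every prior and likelihood value are hypothetical placeholders chosen only to show the mechanics.

```python
def bayes_update(priors, likelihoods):
    """Apply Bayes' rule once.

    priors:      {hypothesis: P(hypothesis)}
    likelihoods: {hypothesis: P(evidence | hypothesis)}
    Returns the normalized posterior {hypothesis: P(hypothesis | evidence)}.
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: p / total for h, p in unnormalized.items()}


# ASSUMPTION: all numbers below are illustrative placeholders,
# not estimates taken from this report or any data source.
priors = {"A_disinformation": 0.5, "B_experimentation": 0.5}

evidence = [
    # (description, P(evidence | hypothesis) for each hypothesis)
    ("release timed to cartel violence",
     {"A_disinformation": 0.8, "B_experimentation": 0.4}),
    ("AI tool watermark left visible",
     {"A_disinformation": 0.4, "B_experimentation": 0.6}),
]

# Update sequentially, treating the evidence items as independent.
posterior = dict(priors)
for description, likelihoods in evidence:
    posterior = bayes_update(posterior, likelihoods)
    summary = ", ".join(f"{h}={p:.2f}" for h, p in posterior.items())
    print(f"after '{description}': {summary}")
```

Evidence that is more probable under one hypothesis shifts weight toward it, and evidence that favors the other shifts it back. The sequential update assumes the evidence items are independent, which is a simplification an analyst would need to check.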
