Hegseth Issues Ultimatum to Anthropic for AI Access Amid Pentagon Dispute

Published on: 2026-02-25

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification. See our Methodology and Why WorldWideWatchers.

Intelligence Report: Pete Hegseth Sets Friday Deadline for Anthropic in Pentagon AI Dispute

1. BLUF (Bottom Line Up Front)

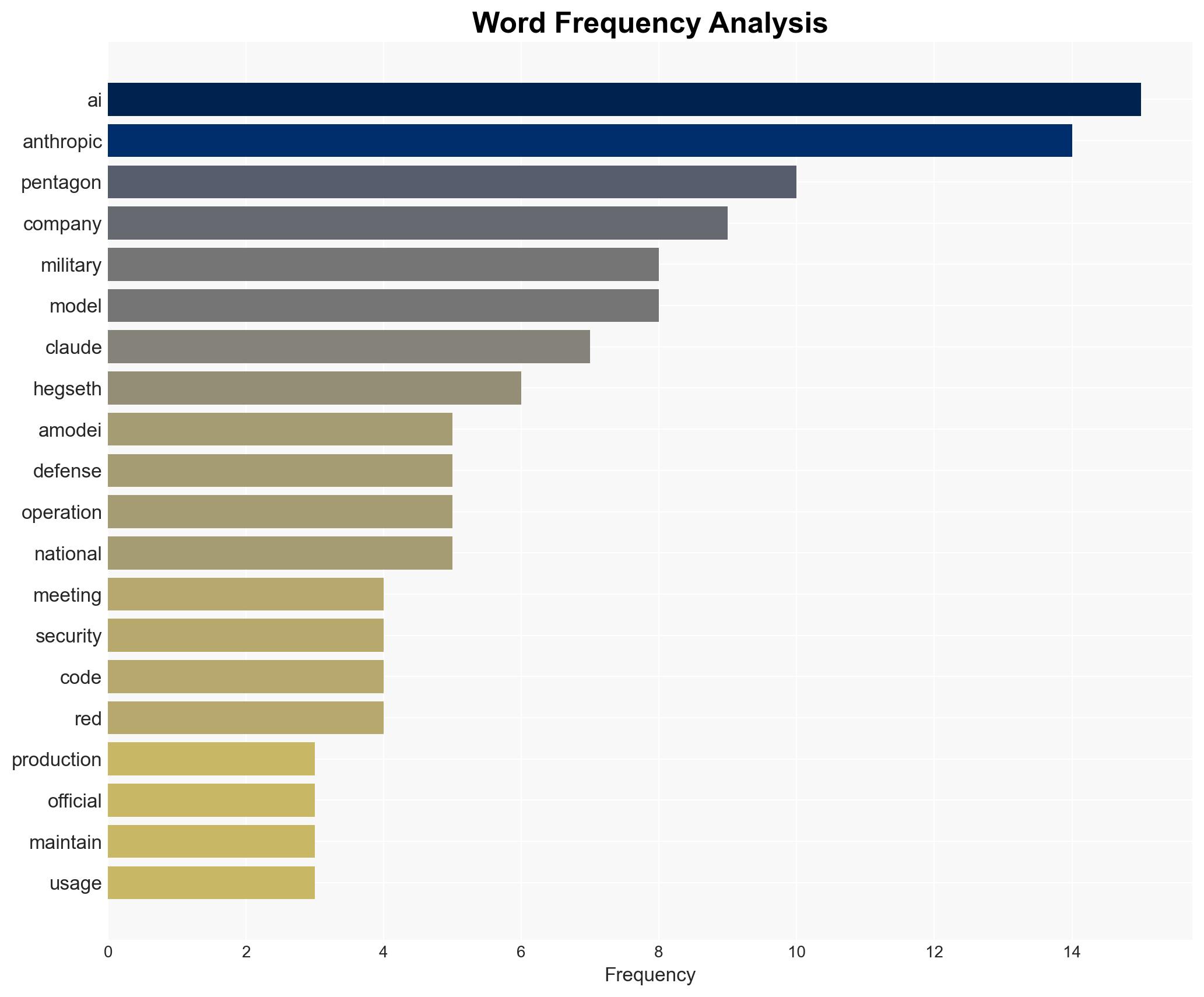

The Pentagon has issued an ultimatum to Anthropic to provide unrestricted access to its AI model, Claude, by Friday, or face penalties. The dispute centers on AI safety protocols and usage restrictions, with potential implications for military operations and AI governance. This assessment is made with moderate confidence, considering the current evidence and uncertainties.

2. Competing Hypotheses

- Hypothesis A: The Pentagon’s ultimatum is primarily a negotiation tactic to secure more favorable terms from Anthropic without intending to follow through on severe penalties. This is supported by the ongoing discussions and the Pentagon’s recognition of its dependence on Claude. However, the ultimatum’s severity suggests a genuine willingness to escalate.

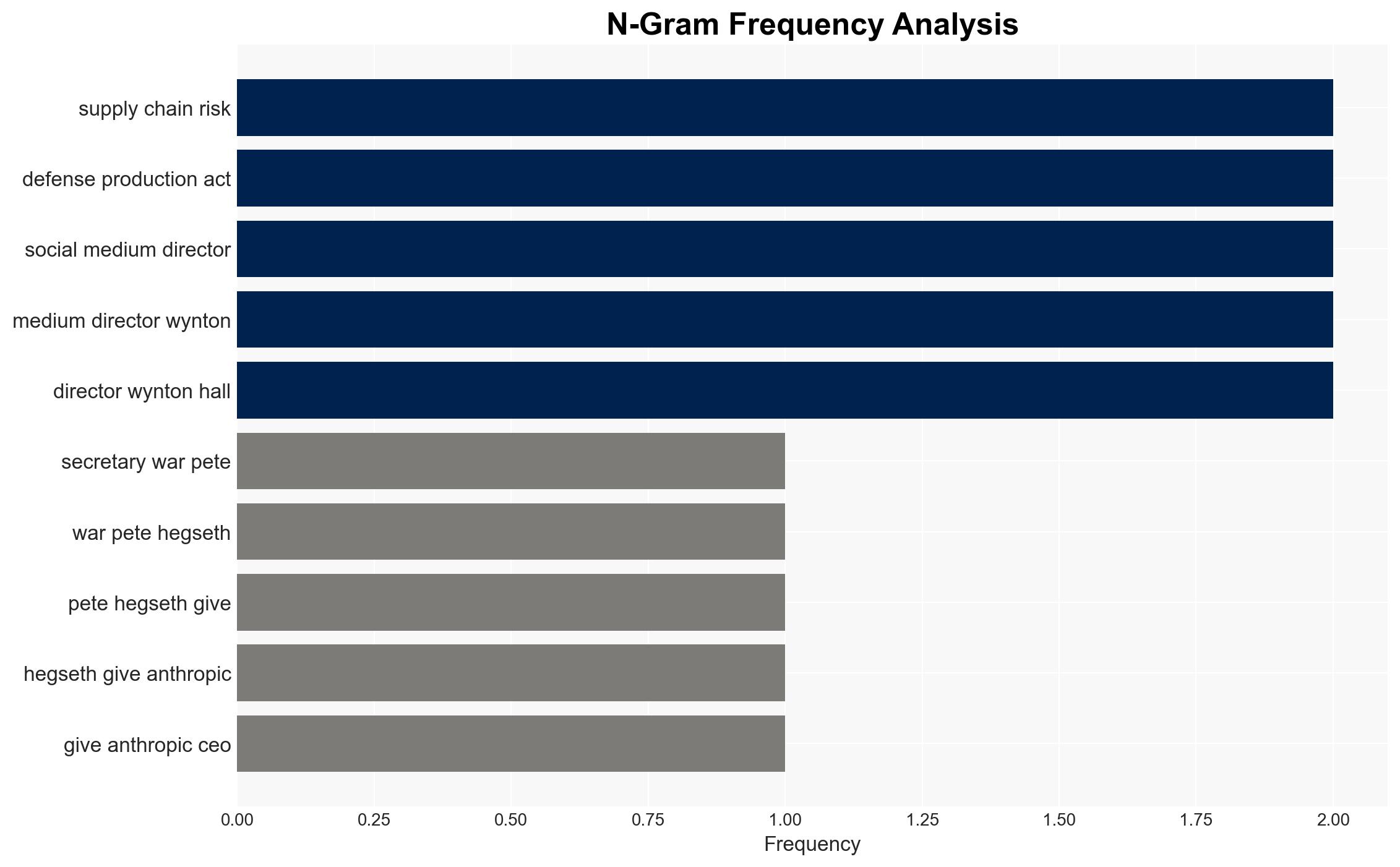

- Hypothesis B: The Pentagon is prepared to enforce penalties, including invoking the Defense Production Act, to ensure compliance. This hypothesis is supported by the explicit threat of penalties and the strategic importance of AI capabilities. Contradictory evidence includes the ongoing negotiations and Anthropic’s willingness to adjust policies.

- Assessment: Hypothesis A is currently better supported due to the continued dialogue and mutual recognition of the need for Claude’s capabilities. Indicators such as a sudden breakdown in talks or public statements from either party could shift this judgment.

3. Key Assumptions and Red Flags

- Assumptions: The Pentagon’s need for Claude is critical to current operations; Anthropic’s policy adjustments can meet security needs without compromising ethical standards; the Defense Production Act is a viable enforcement tool.

- Information Gaps: Details on the specific AI capabilities required by the Pentagon; the full scope of Anthropic’s willingness to adjust policies; potential alternative AI providers for the Pentagon.

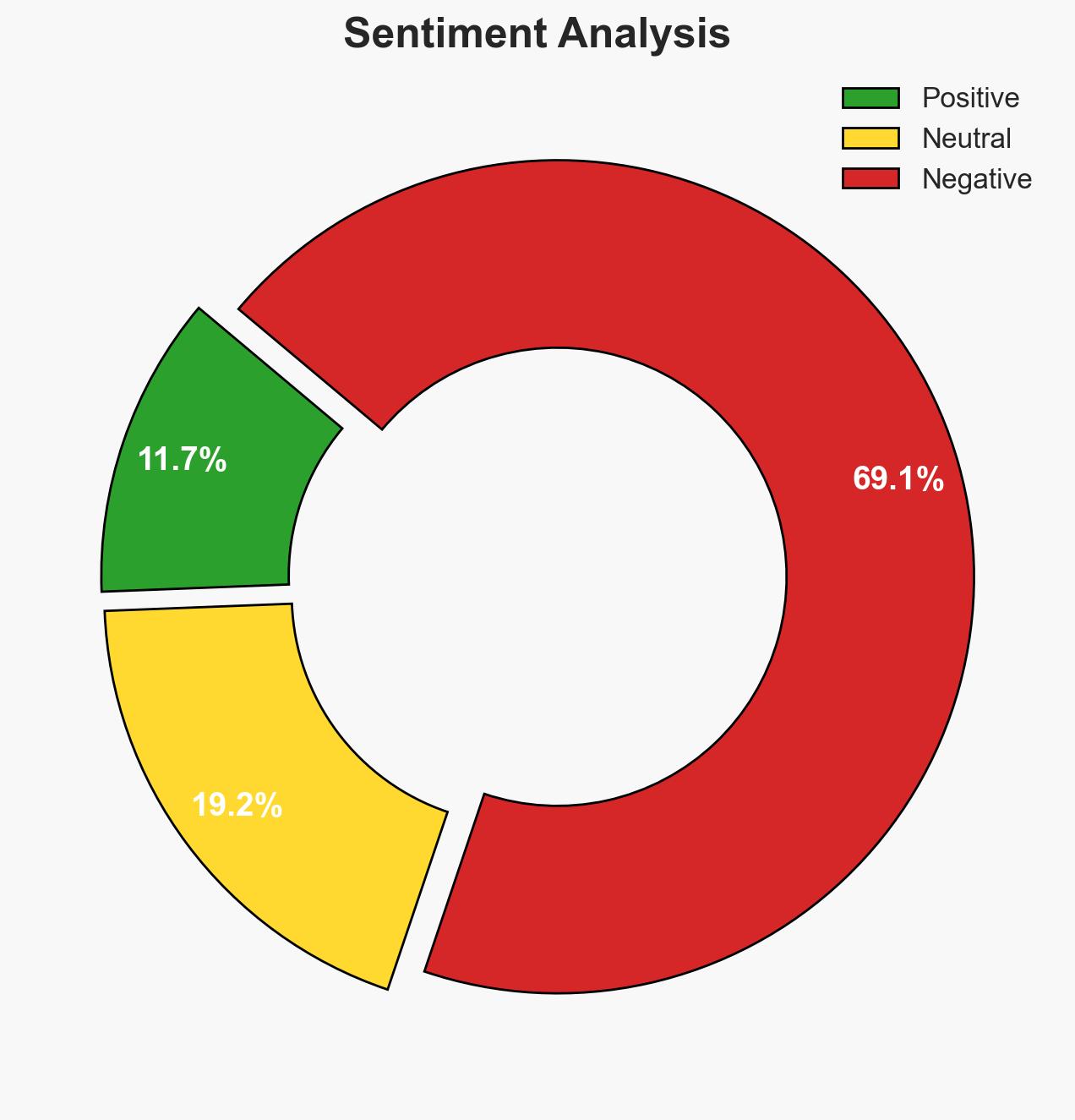

- Bias & Deception Risks: Potential bias in sources favoring Pentagon perspectives; risk of Anthropic overstating its ethical commitments to leverage negotiations; possible mischaracterization of the meeting’s tone.

4. Implications and Strategic Risks

The outcome of this dispute could set precedents for military-AI partnerships and influence future AI governance frameworks. The resolution will impact operational capabilities and ethical standards in AI deployment.

- Political / Geopolitical: Potential strain on public-private partnerships in defense; influence on international AI governance norms.

- Security / Counter-Terrorism: Possible delays or disruptions in military operations reliant on AI capabilities.

- Cyber / Information Space: Increased scrutiny of AI cybersecurity and ethical use in military contexts.

- Economic / Social: Impact on Anthropic’s market position and investor confidence; broader societal debate on AI ethics.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor negotiations closely; prepare contingency plans for alternative AI solutions; engage with Anthropic to clarify policy adjustments.

- Medium-Term Posture (1–12 months): Develop resilience measures for AI supply chain risks; strengthen partnerships with multiple AI providers; enhance AI governance frameworks.

- Scenario Outlook:

  - Best: Successful negotiation leads to a mutually beneficial agreement, enhancing AI capabilities without ethical compromise.

  - Worst: Breakdown in talks results in severe penalties, disrupting military operations and damaging public-private trust.

  - Most-Likely: Compromise reached with some policy adjustments, maintaining operational capabilities and ethical standards.

6. Key Individuals and Entities

- Pete Hegseth – Secretary of War

- Dario Amodei – CEO of Anthropic

- Anthropic – AI company

- Pentagon – U.S. Department of Defense

- Palantir – Partner company

7. Thematic Tags

national security threats, AI governance, military technology, defense policy, public-private partnerships, ethical AI, supply chain risk, Defense Production Act

Structured Analytic Techniques Applied

- Cognitive Bias Stress Test: Expose and correct potential biases in assessments through red-teaming and structured challenge.

- Bayesian Scenario Modeling: Use probabilistic forecasting for conflict trajectories or escalation likelihood.

- Network Influence Mapping: Map relationships between state and non-state actors for impact estimation.
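As a rough illustration of what Bayesian scenario modeling could look like for the two hypotheses in Section 2, the sketch below updates an even prior on Hypothesis A and Hypothesis B after one observed indicator (talks continuing). Every number and the function name `bayes_update` are hypothetical placeholders chosen for illustration; none come from this report or its sources, and the brief does not state how its own model is built.

```python
def bayes_update(priors, likelihoods):
    """Return posterior P(H | E) for each hypothesis H.

    priors:      dict mapping hypothesis label -> P(H)
    likelihoods: dict mapping hypothesis label -> P(E | H)
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("Evidence has zero probability under every hypothesis")
    return {h: value / total for h, value in unnormalized.items()}


# Hypothetical starting point: no preference between the two hypotheses.
priors = {"A": 0.5, "B": 0.5}

# Hypothetical likelihoods of observing "talks continue" under each hypothesis.
# Assumed values for illustration only, not estimates from the report.
likelihood_talks_continue = {"A": 0.8, "B": 0.4}

posteriors = bayes_update(priors, likelihood_talks_continue)
for label, probability in posteriors.items():
    print(f"Hypothesis {label}: {probability:.2f}")
```

With these assumed inputs the weight shifts toward Hypothesis A. An indicator that is more likely under Hypothesis B, such as a sudden breakdown in talks, would be fed through the same function with its own likelihoods and would shift the weight the other way.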
