Leveraging AI Strategies to Enhance Security Analysts’ Defense Against Evolving Cyber Threats

Published on: 2026-03-17

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: AI use cases for security analysts

1. BLUF (Bottom Line Up Front)

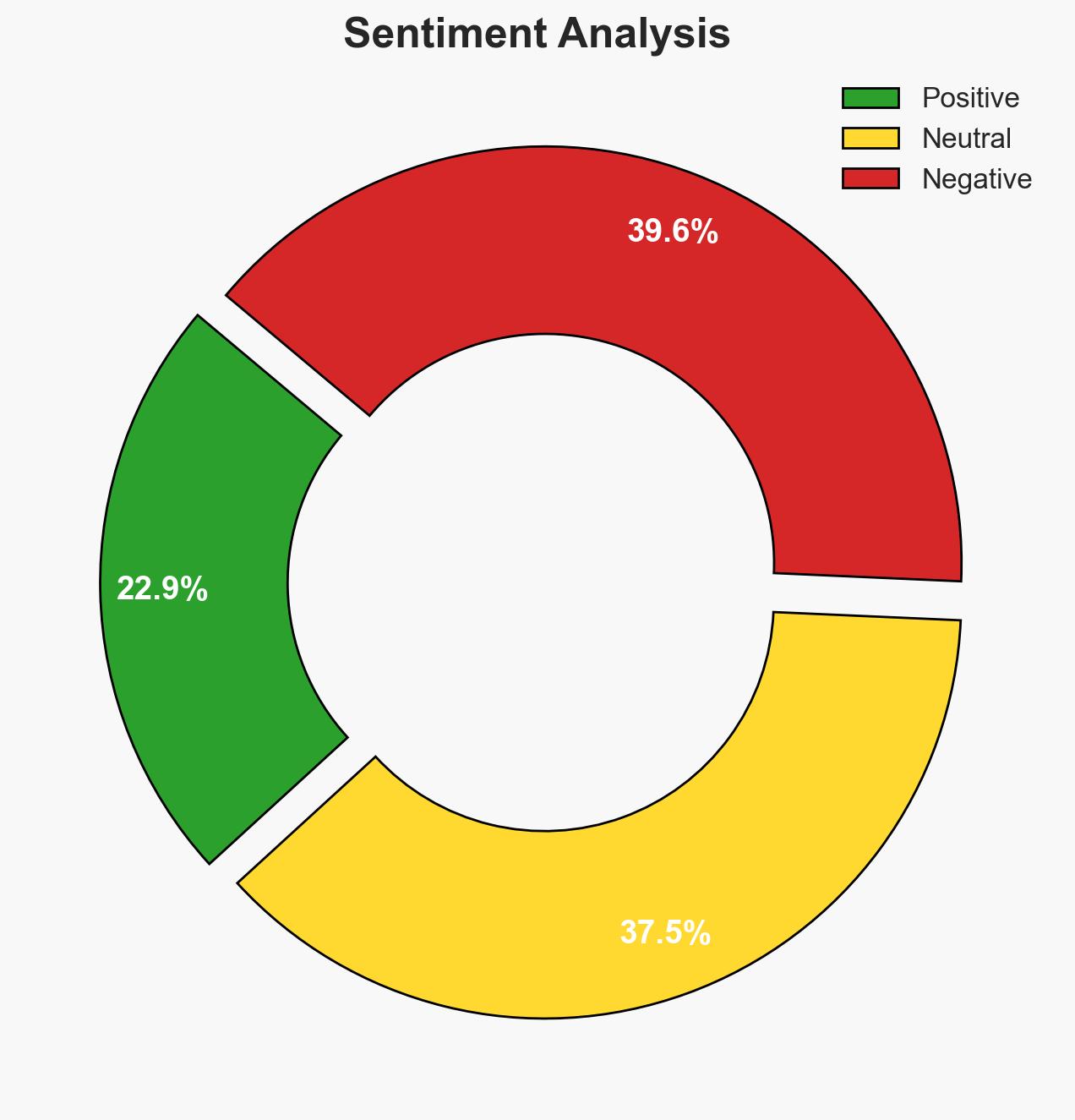

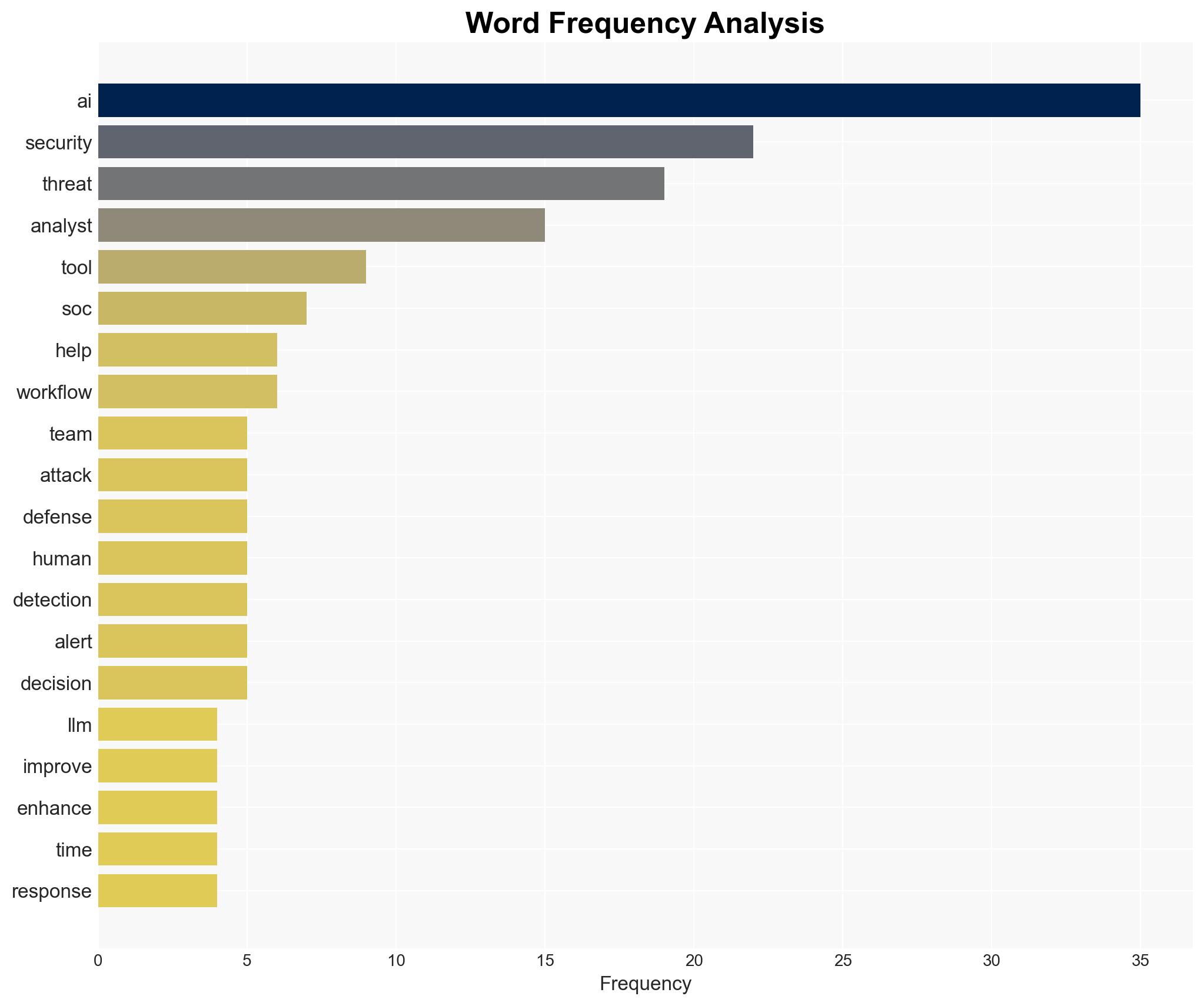

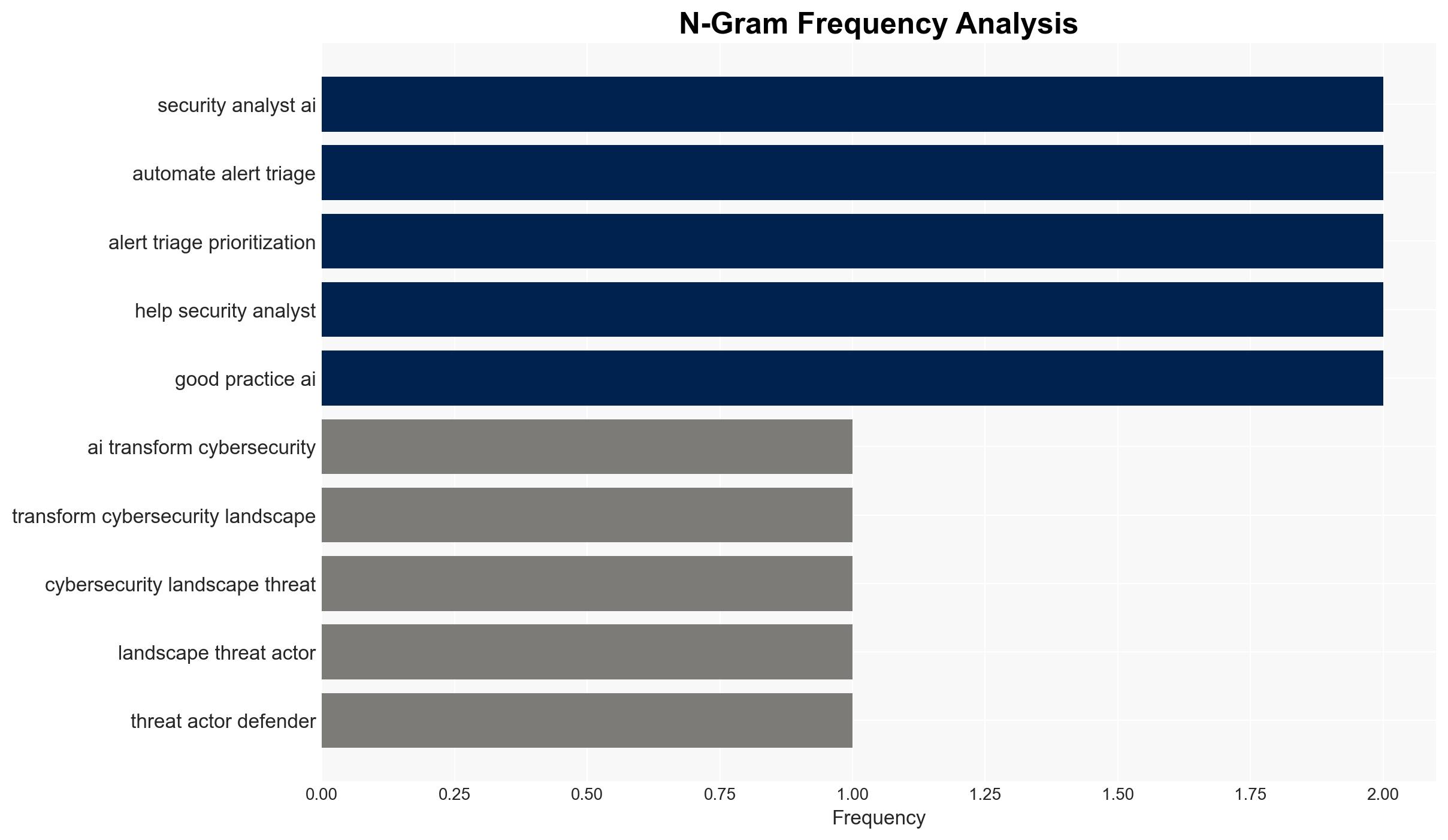

AI is reshaping the cybersecurity landscape by strengthening both offensive and defensive capabilities. The most likely hypothesis is that AI will remain a critical factor in cybersecurity, and that defenders will need to adopt proactive AI strategies to counter AI-driven threats. This affects security teams, policymakers, and threat actors. Overall confidence in this assessment is moderate.

2. Competing Hypotheses

- Hypothesis A: AI will predominantly benefit cyber defenders by enabling faster detection and response to threats. This is supported by the ability of AI to analyze large datasets in real time and shorten incident response times. However, it remains uncertain whether human teams can adapt quickly enough to integrate AI effectively.

- Hypothesis B: AI will primarily empower threat actors, making it easier to launch sophisticated attacks. Evidence includes the reported increase in generic threats as adversaries use AI to generate malware. Against this, defenders can use similar AI tools to counter these threats.

- Assessment: Hypothesis A is currently better supported due to the proactive AI strategies being developed by security teams, although the rapid evolution of AI tools by threat actors could shift this balance. Key indicators to watch include advancements in AI defensive capabilities and the rate of AI adoption by threat actors.

3. Key Assumptions and Red Flags

- Assumptions: AI tools will continue to improve in accuracy and speed; security teams will have the resources to implement AI solutions; threat actors will not outpace defenders in AI adoption.

- Information Gaps: Detailed data on the specific AI tools used by threat actors; effectiveness metrics of AI tools in real-world defensive operations.

- Bias & Deception Risks: Potential bias in AI training data; over-reliance on AI could lead to complacency; threat actors may use deception techniques to mislead AI systems.

4. Implications and Strategic Risks

The integration of AI in cybersecurity is likely to evolve rapidly, influencing global security dynamics and potentially leading to an arms race in AI capabilities.

- Political / Geopolitical: Nations may escalate AI development for cybersecurity, impacting international relations and leading to new policy frameworks.

- Security / Counter-Terrorism: Increased AI capabilities could shift the threat landscape, necessitating new counter-terrorism strategies.

- Cyber / Information Space: AI-driven cyber operations could become more prevalent, affecting information security and privacy.

- Economic / Social: The economic burden of cyber defense may increase, affecting businesses and potentially leading to social unrest if AI-driven attacks become widespread.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Conduct an audit of current AI capabilities within security teams; enhance monitoring of AI developments among threat actors.

- Medium-Term Posture (1–12 months): Develop partnerships with AI research institutions; invest in training programs for security analysts to effectively use AI tools.

- Scenario Outlook: Best: AI significantly reduces cyber threats through enhanced detection. Worst: AI tools are predominantly used by threat actors, overwhelming defenses. Most-Likely: A balanced evolution where both defenders and attackers enhance their capabilities, leading to a continuous arms race.

6. Key Individuals and Entities

- Not clearly identifiable from open sources in this snippet.

7. Thematic Tags

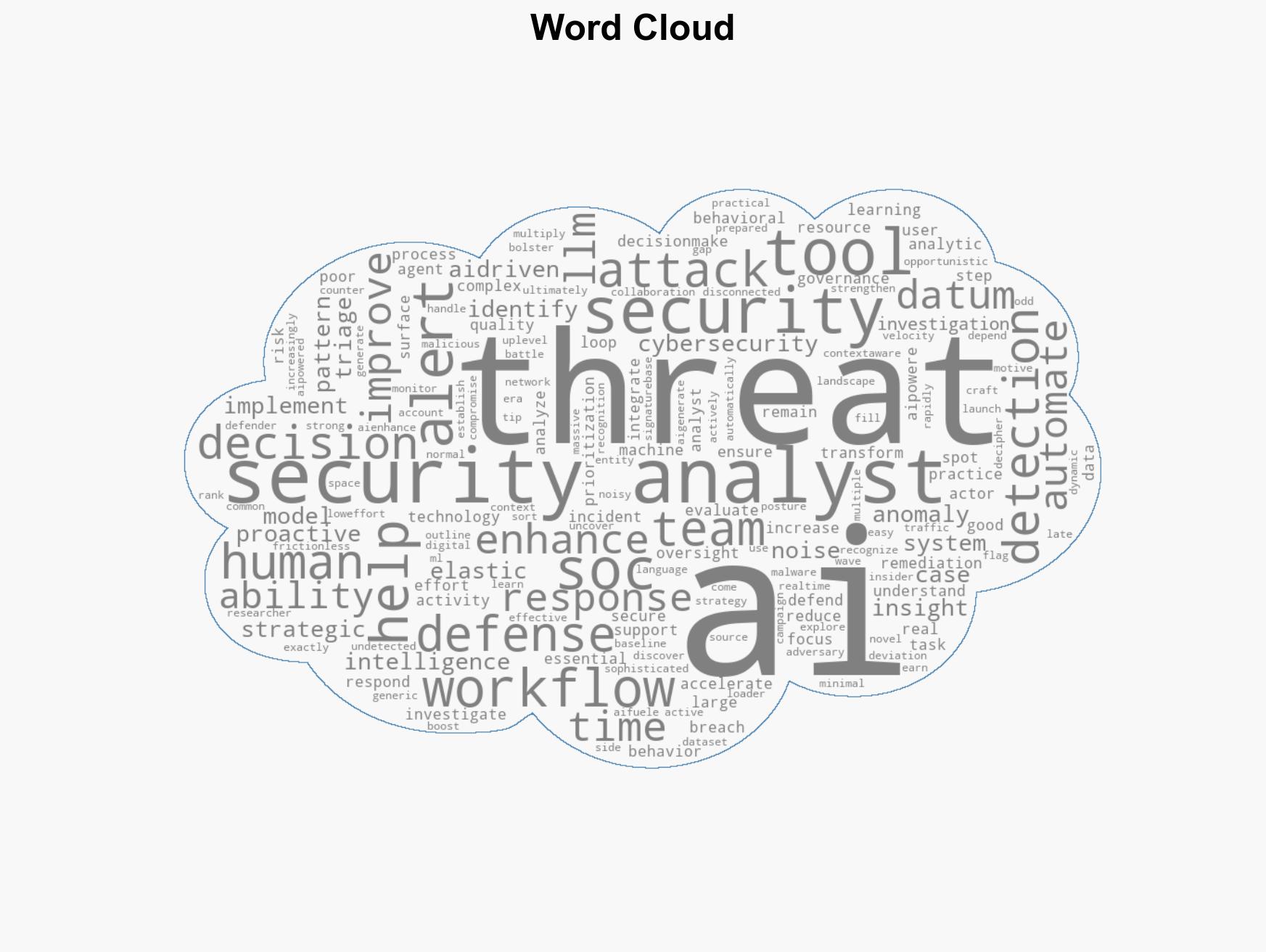

cybersecurity, AI strategy, threat intelligence, anomaly detection, behavioral analytics, cyber defense, AI-driven threats

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.

- Cross-Impact Simulation: Simulate cascading interdependencies and system risks.
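As an illustration of the Bayesian scenario modeling technique listed above, the following minimal sketch shows how an analyst might update the relative weight of Hypothesis A and Hypothesis B when a new indicator is observed. All numbers, the hypothesis labels, and the `bayes_update` helper are hypothetical and chosen only for illustration; they are not drawn from this brief's underlying data.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities for each hypothesis.

    priors:      dict mapping hypothesis -> prior probability
    likelihoods: dict mapping hypothesis -> P(observed indicator | hypothesis)
    """
    # Multiply each prior by the likelihood of the evidence under that hypothesis.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: p / total for h, p in unnormalized.items()}


# Hypothetical starting point: no preference between the two hypotheses.
priors = {"A_defenders_benefit": 0.5, "B_attackers_benefit": 0.5}

# Hypothetical indicator: a measurable drop in mean time to detect incidents.
# Assumed to be twice as likely if Hypothesis A holds than if Hypothesis B holds.
likelihoods = {"A_defenders_benefit": 0.6, "B_attackers_benefit": 0.3}

posterior = bayes_update(priors, likelihoods)
for hypothesis, probability in posterior.items():
    print(f"{hypothesis}: {probability:.2f}")
```

Repeating the update as each new indicator arrives, with the previous posterior as the next prior, gives a running estimate that can be compared against the qualitative assessment in Section 2. The likelihood values are the analyst's judgment and should be documented alongside the result.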
