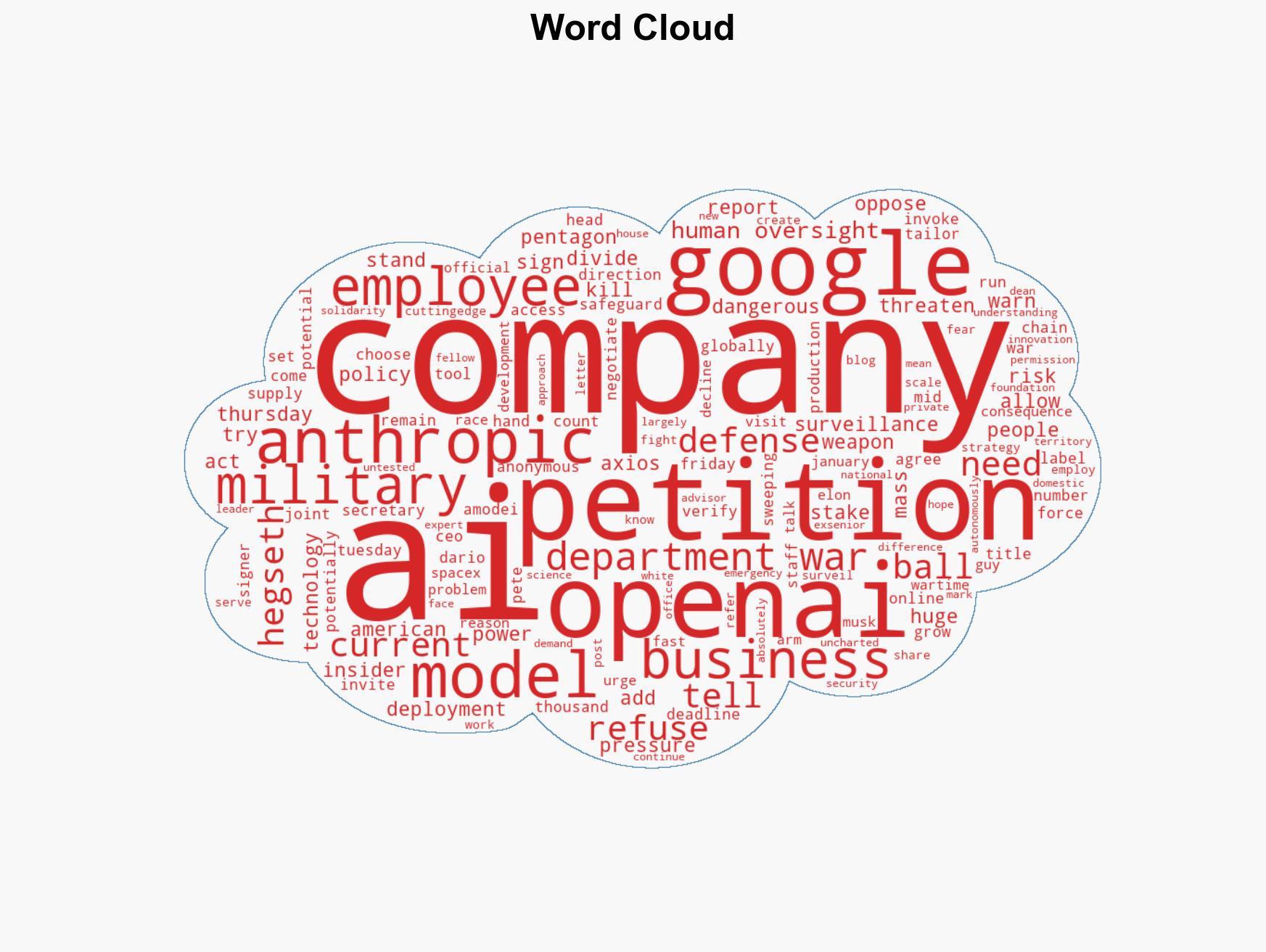

OpenAI and Google staff petition against military use of AI, citing concerns over surveillance and autonomous…

Published on: 2026-02-27

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: OpenAI and Google employees have signed a petition opposing the military’s AI use

1. BLUF (Bottom Line Up Front)

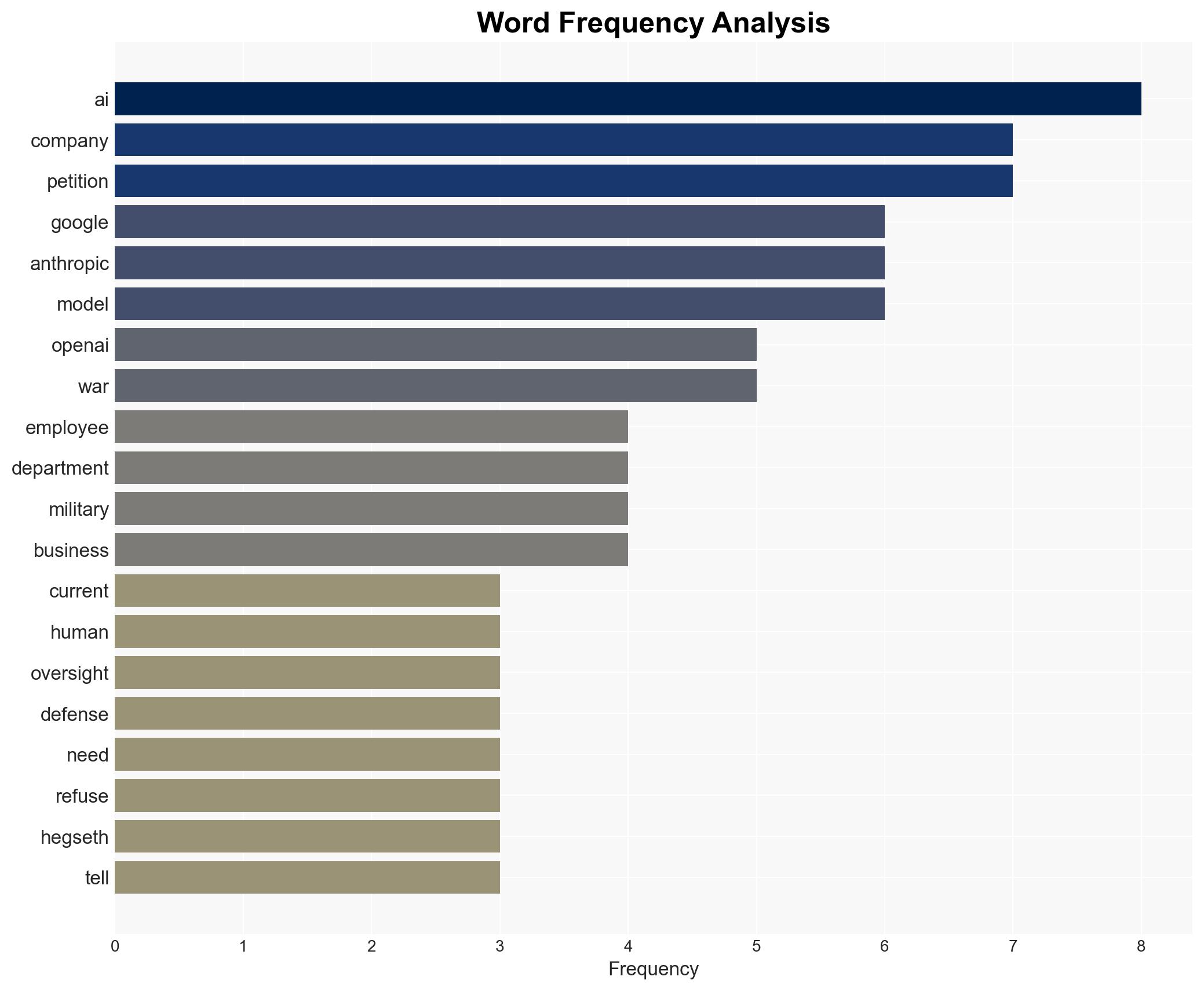

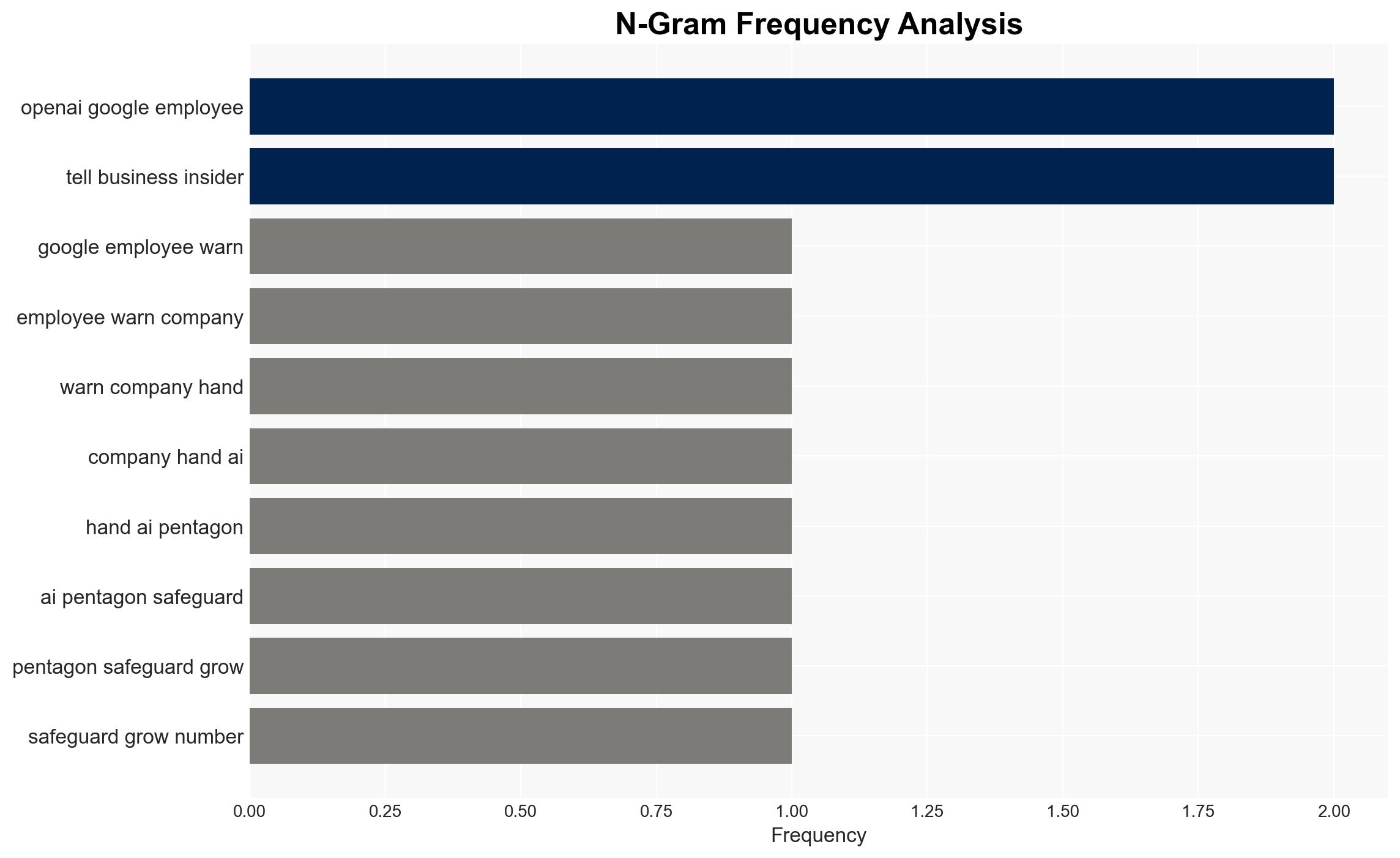

The petition by OpenAI and Google employees against military use of AI signals significant internal resistance to defense collaborations and could affect future AI deployment strategies. The most likely hypothesis is that this resistance will delay, or alter the terms of, the integration of AI technology into military applications. The situation affects the tech companies involved, the Department of Defense, and broader national security strategy. Overall confidence in this judgment is moderate.

2. Competing Hypotheses

- Hypothesis A: The employee petition will prevent or significantly delay the integration of AI technologies from OpenAI and Google into military applications. Supporting evidence includes the substantial number of signatories and the public nature of the petition. However, the final decision rests with company leadership and may be shaped by governmental pressure, and both factors are uncertain.

- Hypothesis B: Despite employee resistance, the Department of Defense will secure access to AI technologies from these companies, either through negotiation or legal compulsion. Supporting evidence includes the potential invocation of the Defense Production Act and the strategic importance of AI in military applications. Contradicting evidence includes the public opposition and the reputational risks for the companies involved.

- Assessment: Hypothesis B is currently better supported due to the strategic imperative for the Department of Defense to acquire advanced AI capabilities and the legal mechanisms available to compel compliance. Key indicators that could shift this judgment include changes in company leadership stance or legislative actions limiting the Defense Production Act’s application.

3. Key Assumptions and Red Flags

- Assumptions: The Department of Defense views AI as critical to national security; employee petitions can influence corporate policy; legal mechanisms like the Defense Production Act can be effectively applied.

- Information Gaps: Specific details on company leadership’s stance, the exact legal framework being considered, and the potential for legislative intervention.

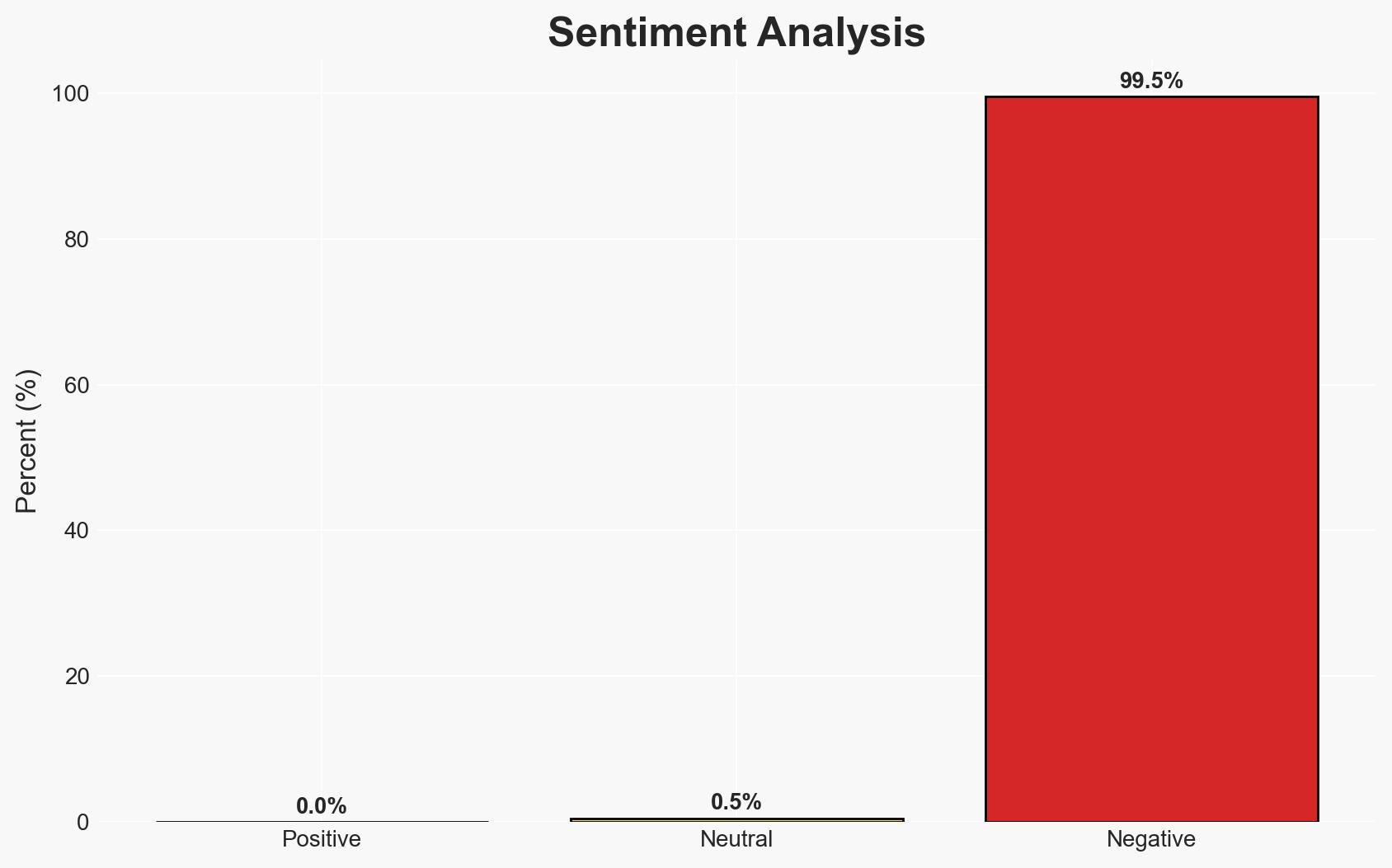

- Bias & Deception Risks: Potential bias in employee-driven narratives; risk of strategic misinformation from both corporate and governmental sources to influence public or internal opinion.

4. Implications and Strategic Risks

This development could lead to a reevaluation of tech-military partnerships and influence future policy on AI deployment. It may also affect employee morale and corporate reputation.

- Political / Geopolitical: Increased scrutiny of tech-military collaborations could lead to legislative reviews and policy changes.

- Security / Counter-Terrorism: Delays in AI integration may impact military readiness and innovation in defense capabilities.

- Cyber / Information Space: Potential for increased cyber threats targeting AI technologies, and for misinformation campaigns around AI use in military contexts.

- Economic / Social: Possible impacts on tech sector employment dynamics and public perception of AI ethics.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor company statements and legal developments; engage with stakeholders to assess potential impacts on AI policy.

- Medium-Term Posture (1–12 months): Develop resilience strategies for tech-military collaborations; consider partnerships to address ethical concerns in AI deployment.

- Scenario Outlook: Best: Companies and DoD reach a mutually agreeable framework; Worst: Legal battles and public backlash; Most-Likely: Negotiated terms with some concessions on both sides.

6. Key Individuals and Entities

- OpenAI

- Anthropic

- Department of Defense

- Defense Secretary Pete Hegseth

- Anthropic CEO Dario Amodei

7. Thematic Tags

cybersecurity, AI ethics, military technology, employee activism, defense policy, tech industry, national security, corporate governance

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
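
As a rough illustration of how Bayesian scenario modeling could be applied to the two competing hypotheses in Section 2, the sketch below updates prior weights for Hypothesis A and Hypothesis B after one new indicator is observed. All numbers are hypothetical placeholders chosen for the example, not estimates from this report, and the function name `bayes_update` is our own.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities for competing hypotheses.

    priors:      dict mapping hypothesis label -> prior probability
    likelihoods: dict mapping hypothesis label -> P(indicator | hypothesis)
    """
    # Weight each prior by how well the hypothesis explains the indicator.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("indicator is impossible under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: weight / total for h, weight in unnormalized.items()}


# Hypothetical priors: B (DoD secures access) starts slightly favored over
# A (petition blocks or delays integration). Illustrative values only.
priors = {"A": 0.4, "B": 0.6}

# Hypothetical indicator: company leadership publicly affirms a defense
# contract. Assumed to be twice as likely under B as under A.
likelihoods = {"A": 0.3, "B": 0.6}

posterior = bayes_update(priors, likelihoods)
for label, probability in sorted(posterior.items()):
    print(f"Hypothesis {label}: {probability:.2f}")
```

The same function can be called again with the posterior as the new prior each time another indicator from Section 2 (for example, a change in leadership stance or a legislative action) is observed.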
