OpenAI Executives Justify Pentagon Agreement Amid Employee Uncertainty and Public Backlash

Published on: 2026-03-02

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: OpenAI Leadership Defends Deal With Pentagon as Employees Wait in Limbo

1. BLUF (Bottom Line Up Front)

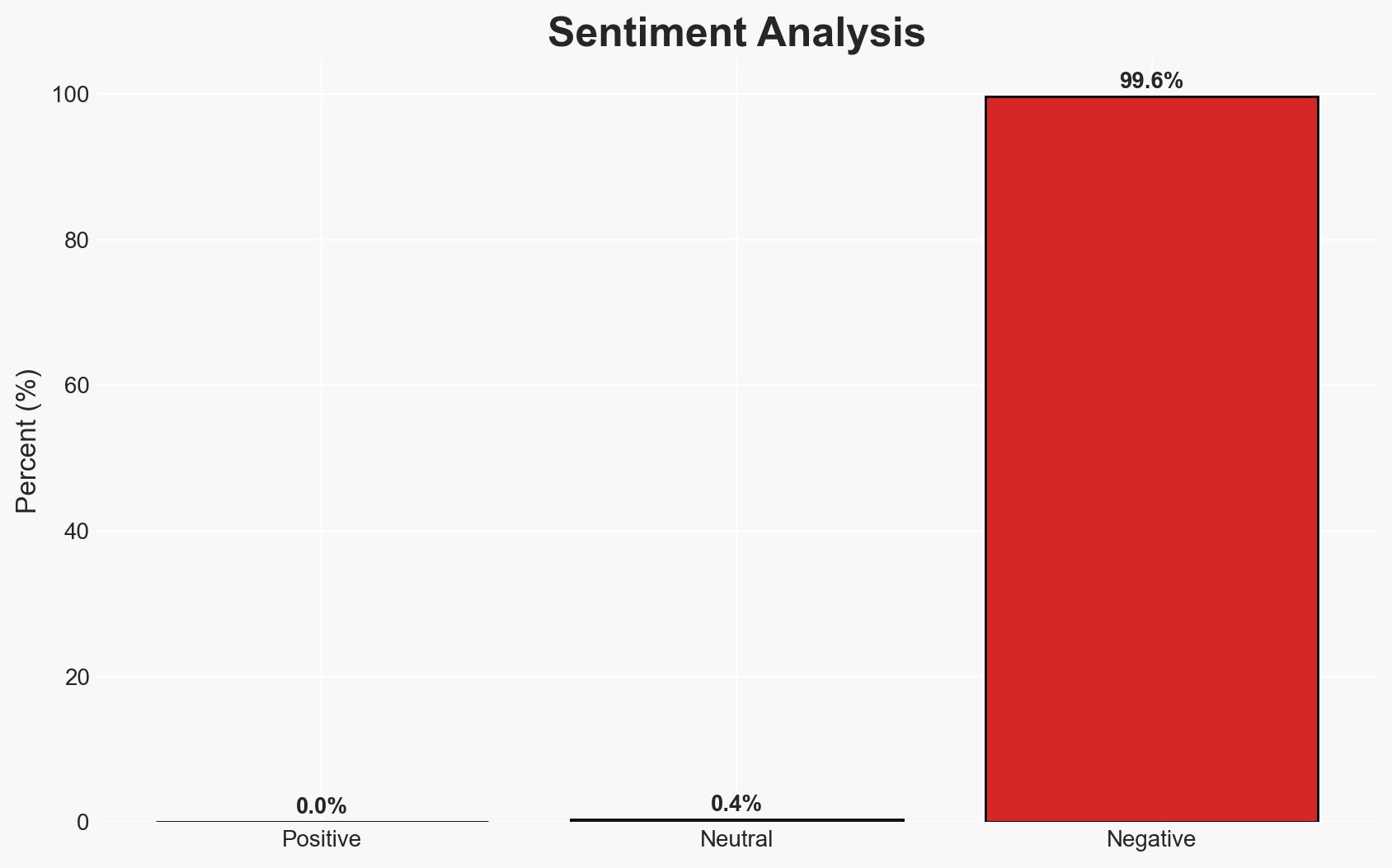

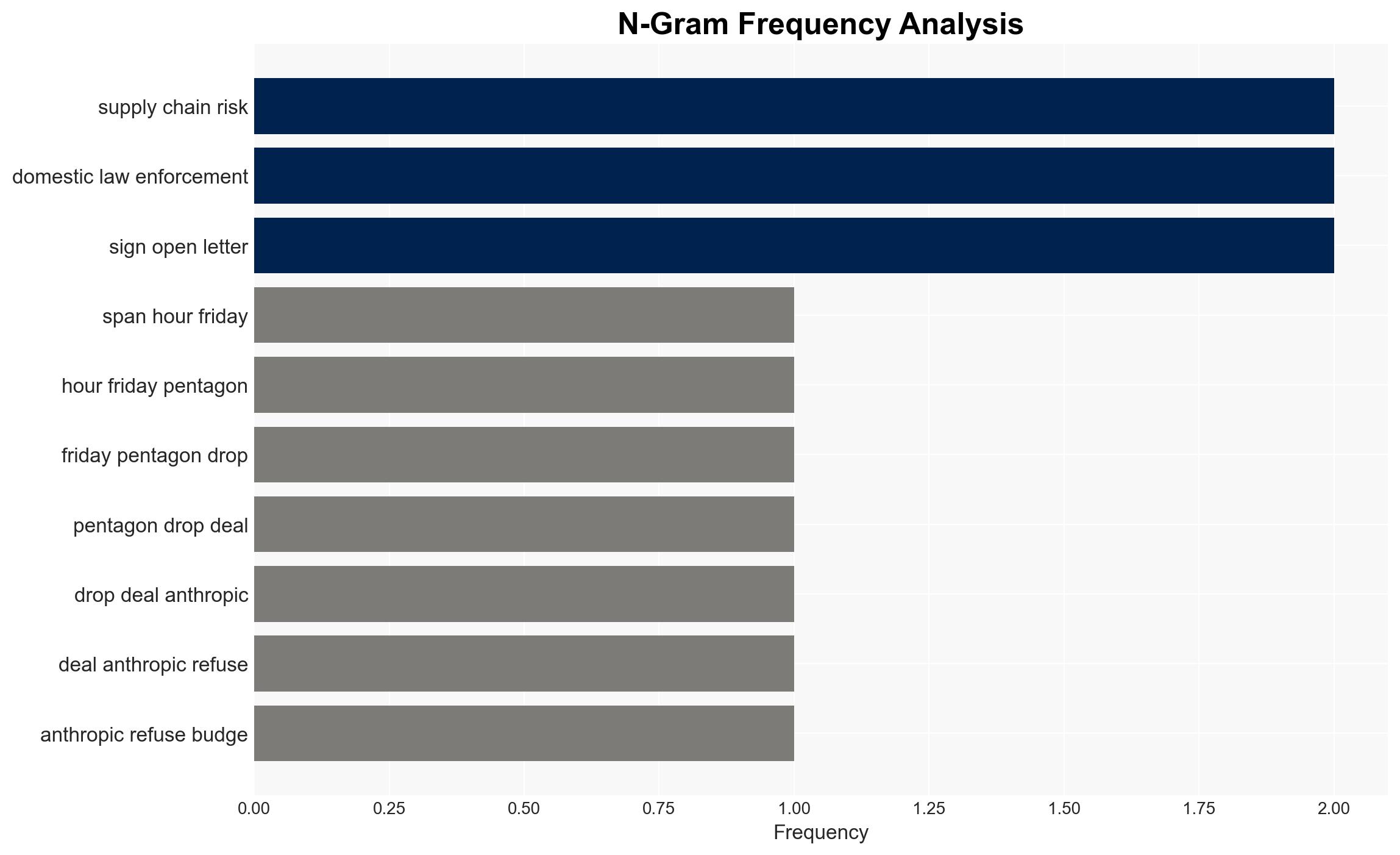

The recent agreement between OpenAI and the Pentagon has sparked public backlash and raised concerns about the ethical use of AI in military applications. The most likely hypothesis is that OpenAI’s leadership believes the deal aligns with legal and ethical standards, despite public criticism. This situation affects OpenAI, the Department of Defense, and potentially international relations. Overall confidence in this judgment is moderate due to limited transparency in the contract details.

2. Competing Hypotheses

- Hypothesis A: OpenAI entered the agreement with the Pentagon believing it could maintain ethical standards and legal compliance. Supporting evidence includes statements from OpenAI leadership about adherence to applicable laws and oversight protocols. Contradicting evidence includes public backlash and concerns over potential misuse.

- Hypothesis B: OpenAI’s decision was primarily driven by strategic business interests, potentially at the expense of ethical considerations. Supporting evidence includes the rushed nature of the deal and the lack of transparency in contract details. Contradicting evidence includes OpenAI’s public statements, which emphasize ethical constraints.

- Assessment: Hypothesis A is currently better supported due to OpenAI’s explicit statements about legal and ethical compliance. Key indicators that could shift this judgment include future revelations about the contract’s implementation and any evidence of misuse.

3. Key Assumptions and Red Flags

- Assumptions: OpenAI’s leadership is sincere in its commitment to ethical standards; the U.S. government will adhere to stated legal constraints; public backlash is based on perceived rather than actual contract details.

- Information Gaps: Specific terms and limitations of the contract; how oversight and compliance will be enforced; potential internal dissent within OpenAI.

- Bias & Deception Risks: Cognitive bias that may lead OpenAI’s leadership to underestimate public concern; source bias, since much of the available evidence comes from OpenAI’s own public statements; risk that the government interprets contract terms to its own advantage.

4. Implications and Strategic Risks

This development could influence the future of AI ethics in military applications and affect OpenAI’s public image and market position. It may also impact U.S. relations with other nations concerned about AI in military contexts.

- Political / Geopolitical: Potential strain on international relations if perceived as aggressive militarization of AI.

- Security / Counter-Terrorism: Possible shifts in operational capabilities and threat perceptions related to AI-enhanced military operations.

- Cyber / Information Space: Increased scrutiny of AI cybersecurity and potential for misinformation campaigns targeting OpenAI.

- Economic / Social: Impact on OpenAI’s market value and employee morale; potential influence on AI industry standards and public trust.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor public and governmental reactions; engage with stakeholders to clarify contract terms and ethical commitments.

- Medium-Term Posture (1–12 months): Develop and communicate robust oversight mechanisms; strengthen partnerships with ethical AI organizations.

- Scenario Outlook: Best: Transparent implementation builds public trust; Worst: Misuse leads to significant backlash and regulatory action; Most-Likely: Continued scrutiny with gradual acceptance if ethical standards are maintained.

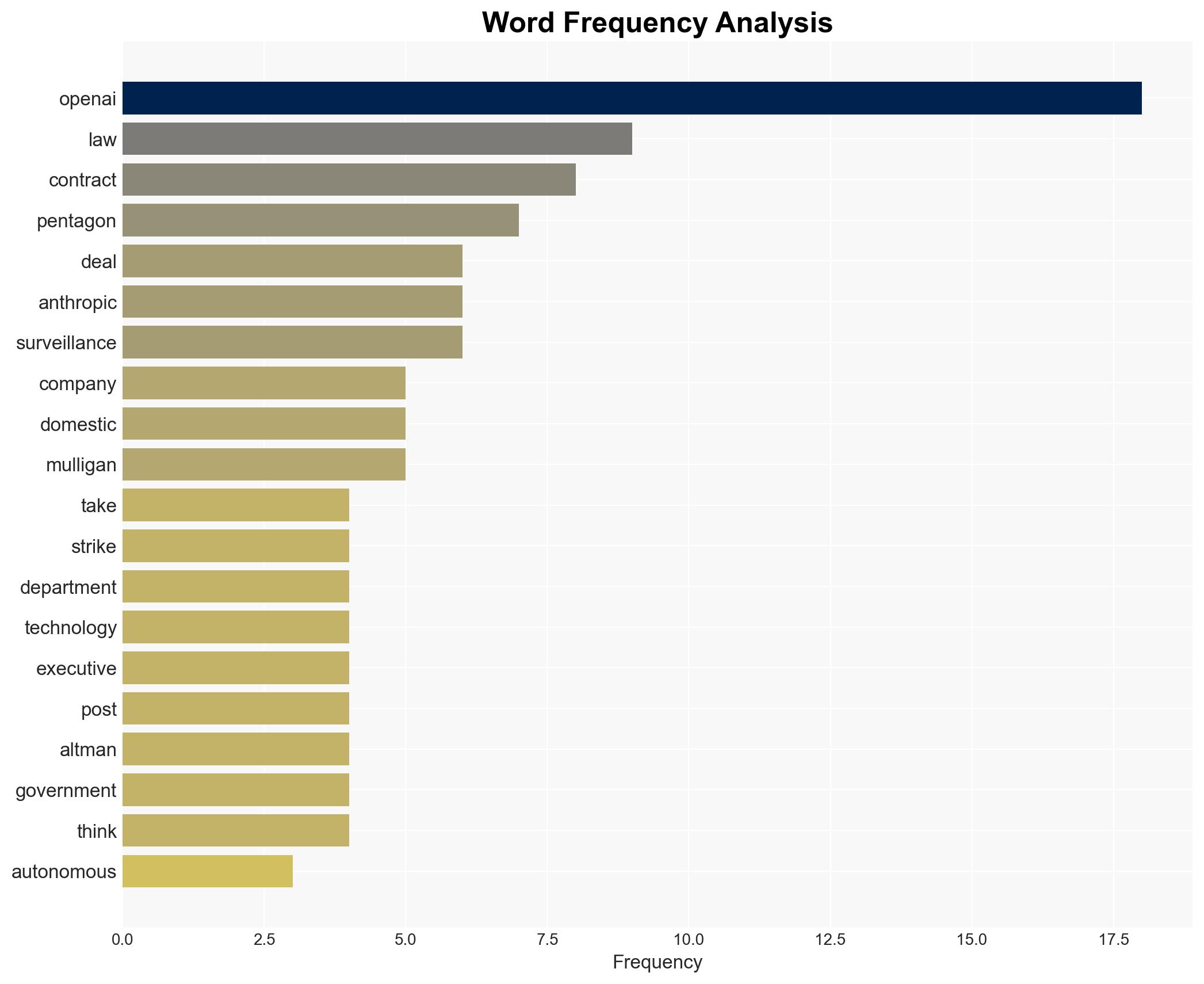

6. Key Individuals and Entities

- OpenAI

- Department of Defense

- Katrina Mulligan, Head of National Security Partnerships, OpenAI

- Sam Altman, CEO, OpenAI

7. Thematic Tags

national security threats, AI ethics, military technology, public backlash, government contracts, surveillance, international relations, corporate strategy

Structured Analytic Techniques Applied

- Cognitive Bias Stress Test: Expose and correct potential biases in assessments through red-teaming and structured challenge.

- Bayesian Scenario Modeling: Use probabilistic forecasting for conflict trajectories or escalation likelihood.

- Network Influence Mapping: Map relationships between state and non-state actors for impact estimation.
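
As an illustration of the Bayesian scenario modeling technique listed above, the following minimal sketch shows how a single piece of evidence would shift the weight between two competing hypotheses. The function name `bayes_update` is hypothetical, and the priors and likelihoods are placeholder values chosen only to show the arithmetic; they are not figures from this brief.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities for a set of competing hypotheses.

    priors:      dict mapping hypothesis label -> prior probability
    likelihoods: dict mapping hypothesis label -> P(evidence | hypothesis)
    """
    # Multiply each prior by the likelihood of the evidence under that hypothesis.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("Evidence has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: value / total for h, value in unnormalized.items()}


# Placeholder inputs (assumptions for illustration only, not taken from the brief).
priors = {"A": 0.5, "B": 0.5}
likelihoods = {"A": 0.6, "B": 0.3}

posterior = bayes_update(priors, likelihoods)
for label, probability in posterior.items():
    print(f"Hypothesis {label}: {probability:.2f}")
```

With equal priors, the posterior depends only on how much more likely the evidence is under one hypothesis than the other, which is why the choice of likelihood values deserves the most scrutiny in practice.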
