OpenAI Faces Profitability Challenges, Projected to Require $207 Billion in Funding by 2030

Published on: 2025-11-29

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification. See our Methodology and Why WorldWideWatchers.

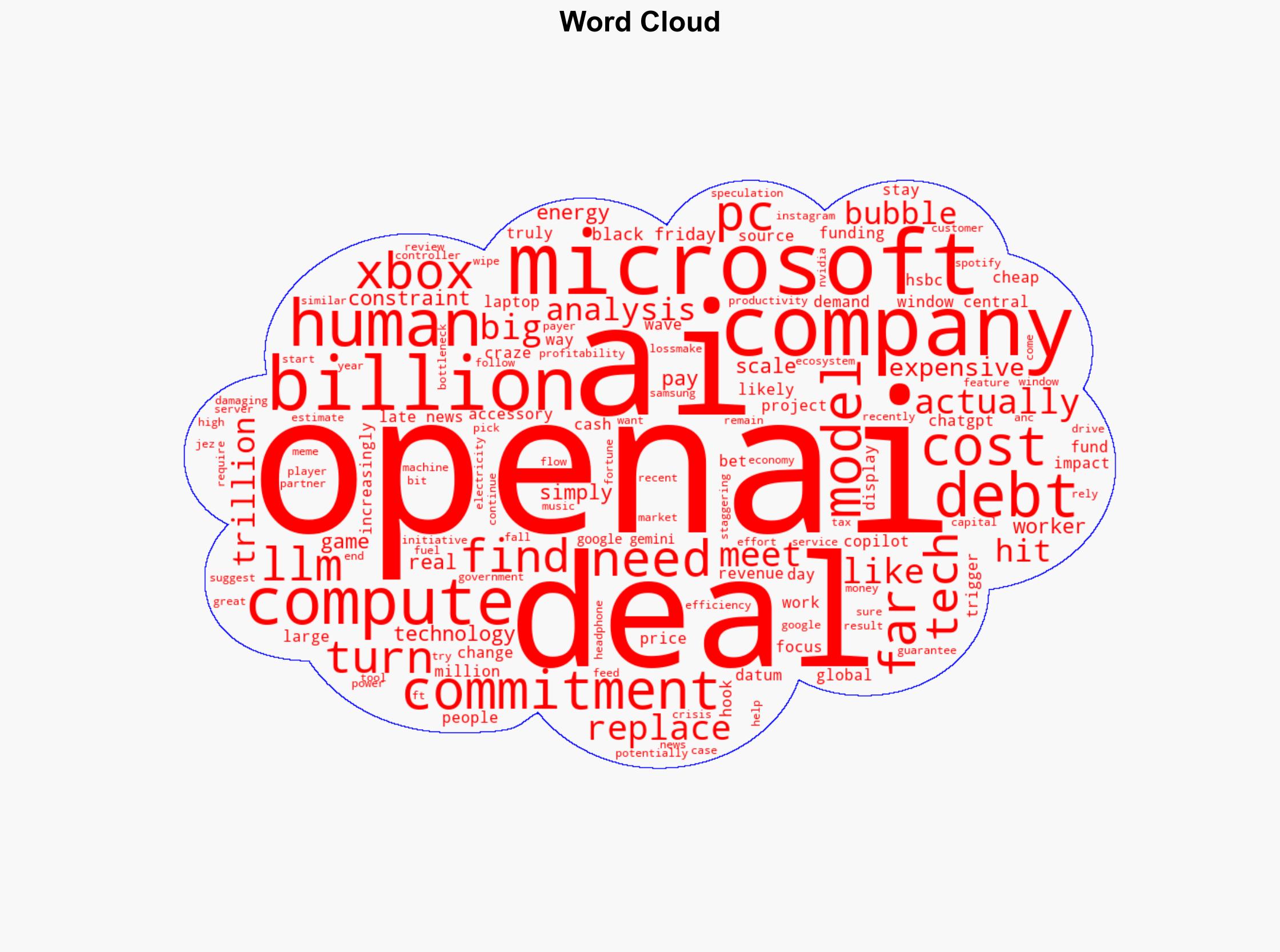

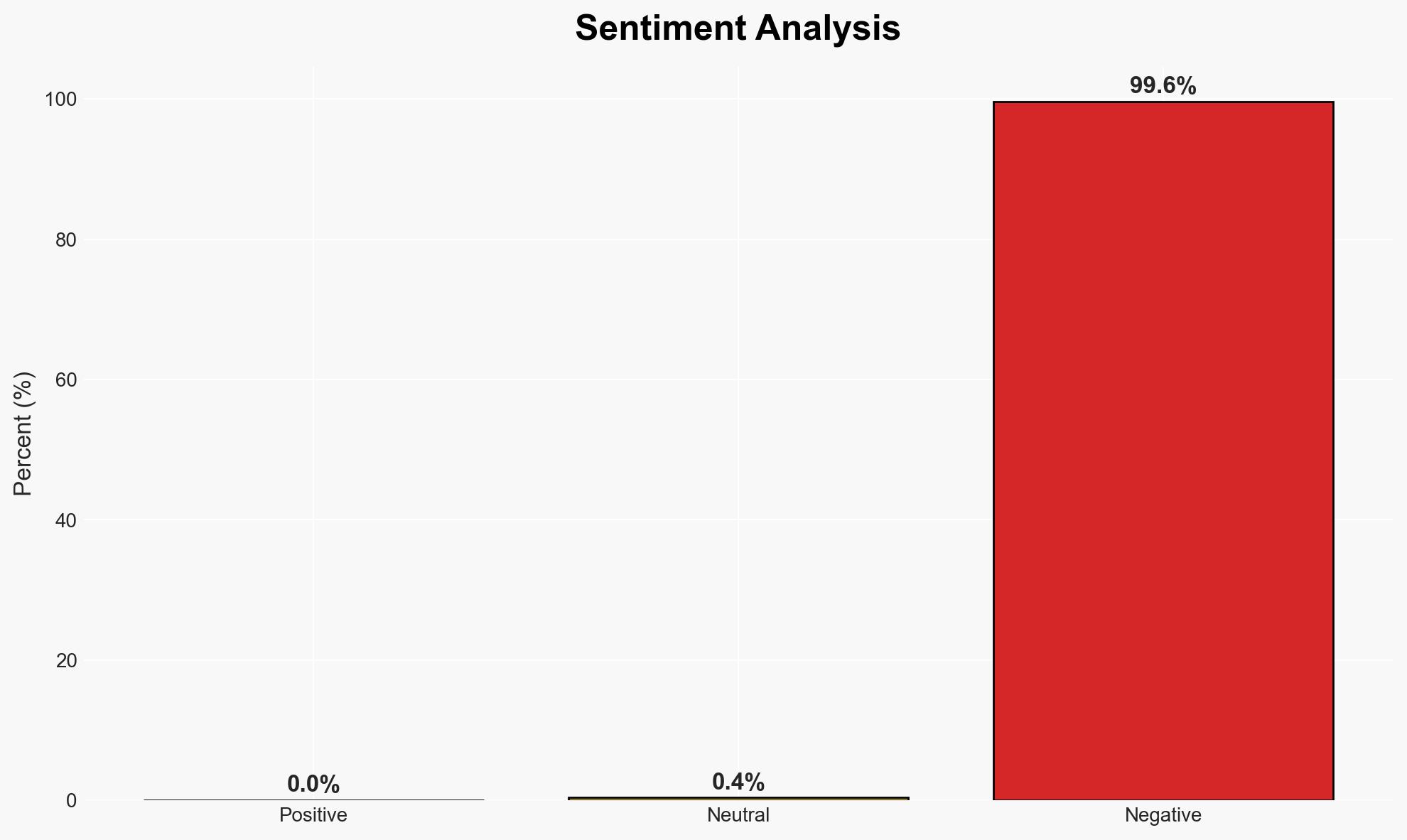

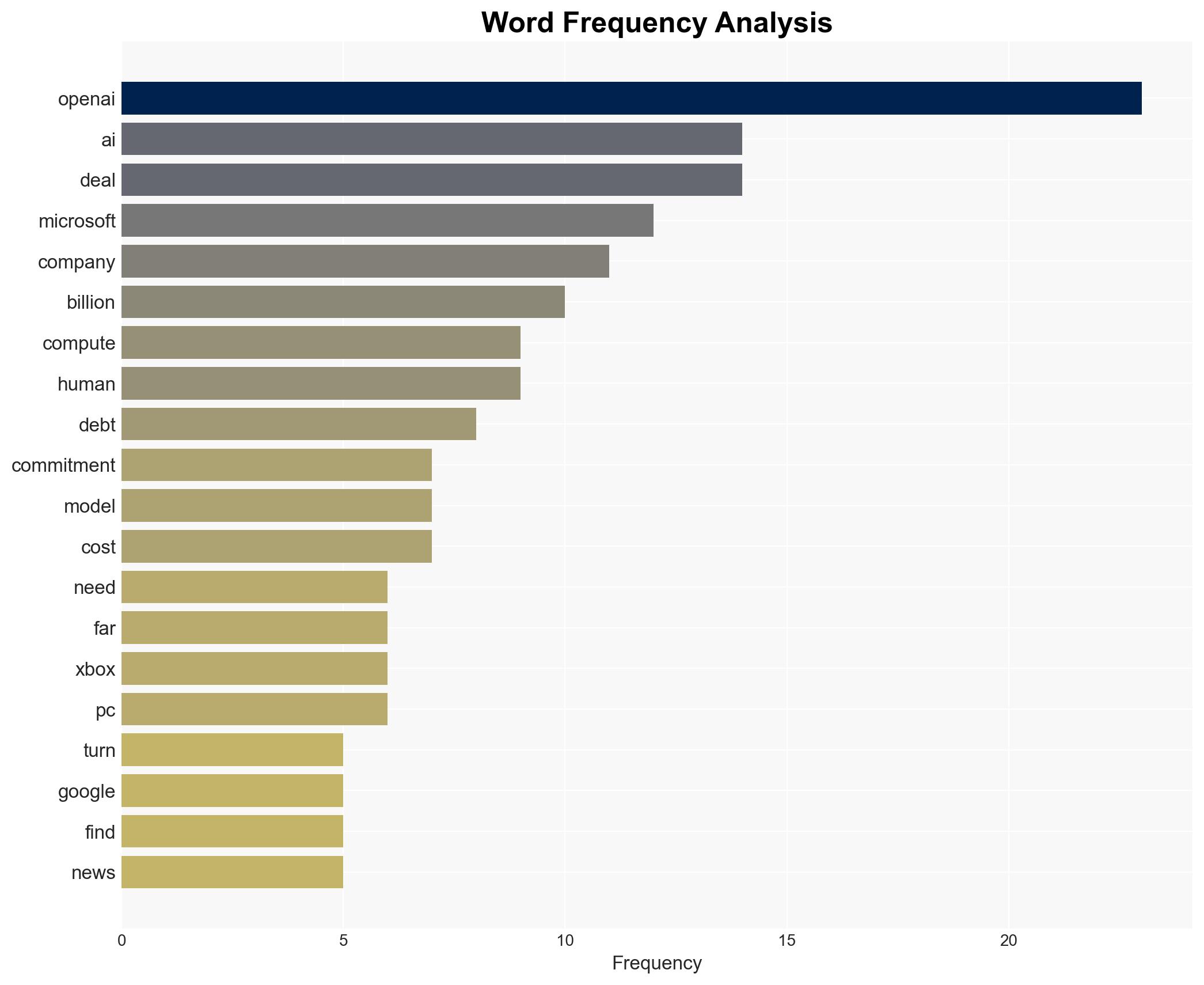

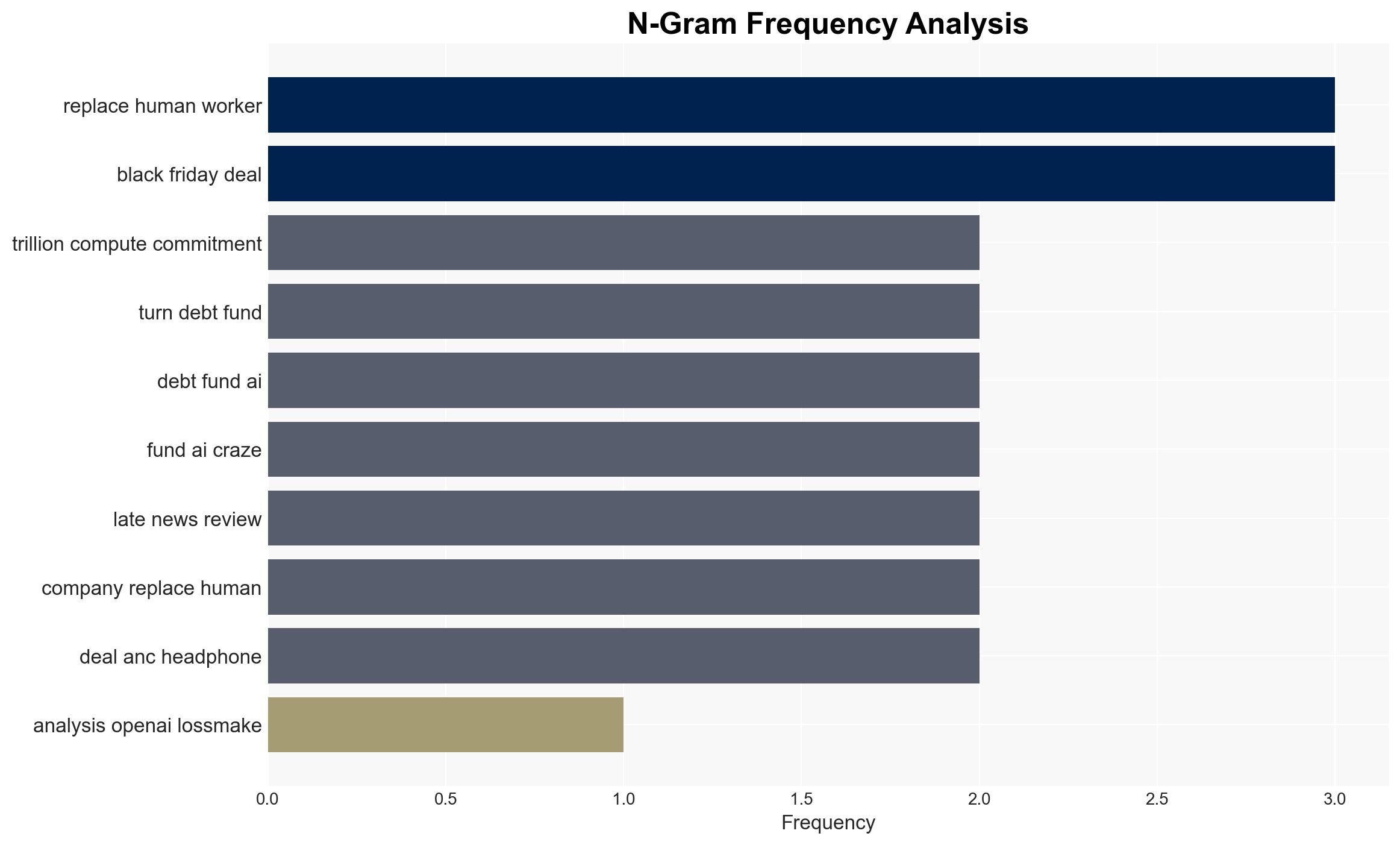

Intelligence Report: Analysis: OpenAI is a loss-making machine, with estimates that it has no road to profitability by 2030 and will need a further $207 billion in funding even if it gets there

1. BLUF (Bottom Line Up Front)

OpenAI is projected to remain unprofitable until at least 2030, requiring an estimated $207 billion in additional funding to sustain operations. This situation poses significant financial risks to stakeholders, including major investors like Microsoft. The most likely hypothesis is that OpenAI will continue to rely heavily on debt financing, which could lead to broader economic implications. Overall confidence in this assessment is moderate, given the uncertainties surrounding future AI market dynamics and technological advancements.

2. Competing Hypotheses

- Hypothesis A: OpenAI will achieve profitability by 2030 through strategic partnerships and revenue-generating initiatives. Supporting evidence includes ongoing efforts to integrate AI into various sectors, which could reduce operational costs. However, the need for substantial funding and the current reliance on debt weigh against this hypothesis.

- Hypothesis B: OpenAI will continue to operate at a loss, heavily reliant on external funding and debt, without achieving profitability by 2030. This is supported by the current financial trajectory and the high costs associated with AI model development and deployment. Contradicting evidence includes potential breakthroughs in AI technology that could reduce costs or increase revenue streams.

- Assessment: Hypothesis B is currently better supported due to the substantial funding requirements and the lack of clear, immediate revenue streams. Key indicators that could shift this judgment include successful monetization of AI applications or significant cost reductions in AI operations.
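As a rough illustration of how a key indicator could shift the judgment between the two hypotheses, the sketch below applies a simple Bayesian update. All numbers are hypothetical placeholders chosen for illustration, not estimates from this brief or its sources; the function name `bayes_update` and the example indicator are likewise assumptions.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities for each hypothesis.

    priors:      dict of hypothesis -> prior probability
    likelihoods: dict of hypothesis -> P(observed indicator | hypothesis)
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    return {h: value / total for h, value in unnormalized.items()}


# Hypothetical priors reflecting "Hypothesis B is currently better supported".
priors = {"A": 0.3, "B": 0.7}

# Hypothetical indicator: a clearly successful monetization of AI applications.
# Assumed to be more probable if A (profitability by 2030) is true than if B is.
likelihoods = {"A": 0.8, "B": 0.2}

posterior = bayes_update(priors, likelihoods)
print(posterior)  # A rises to roughly 0.63, B falls to roughly 0.37
```

With these placeholder values a single strong indicator is enough to move the balance toward Hypothesis A, which is why the indicators named in the assessment are worth monitoring.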

3. Key Assumptions and Red Flags

- Assumptions: OpenAI’s current financial trajectory will continue; AI market demand will remain high; technological advancements will not drastically reduce operational costs; external funding sources will remain available.

- Information Gaps: Detailed financial data on OpenAI’s revenue streams and cost structures; insights into strategic partnerships and their financial impacts.

- Bias & Deception Risks: Potential bias in financial projections from stakeholders with vested interests; risk of overestimating AI market growth and underestimating operational challenges.

4. Implications and Strategic Risks

The continued financial instability of OpenAI could have cascading effects across multiple domains, particularly if major investors face financial strain.

- Political / Geopolitical: Increased competition among nations to dominate AI technology could escalate tensions, particularly if OpenAI’s financial model is perceived as unsustainable.

- Security / Counter-Terrorism: Potential vulnerabilities in AI systems could be exploited if financial constraints lead to reduced investment in cybersecurity.

- Cyber / Information Space: The reliance on AI could increase the risk of misinformation and cyber threats if systems are not adequately secured due to financial limitations.

- Economic / Social: The failure of OpenAI to achieve profitability could lead to job losses and economic instability, particularly in sectors heavily investing in AI.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor OpenAI’s financial disclosures and funding activities; assess the impact of AI integration in key sectors; engage with stakeholders to understand strategic plans.

- Medium-Term Posture (1–12 months): Develop resilience measures for sectors reliant on AI; explore partnerships to mitigate financial risks; enhance cybersecurity frameworks for AI systems.

- Scenario Outlook:

  - Best: OpenAI achieves profitability through innovation and strategic partnerships, reducing reliance on debt.

  - Worst: OpenAI’s financial instability leads to broader economic disruptions and loss of investor confidence.

  - Most-Likely: OpenAI continues to operate at a loss, relying on external funding, with incremental progress toward profitability.

6. Key Individuals and Entities

- OpenAI

- Microsoft

- HSBC

- Gartner

7. Thematic Tags

Cybersecurity, AI economics, debt financing, technological innovation, market instability, strategic partnerships, geopolitical competition

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Cross-Impact Simulation: Simulate cascading interdependencies and system risks.
