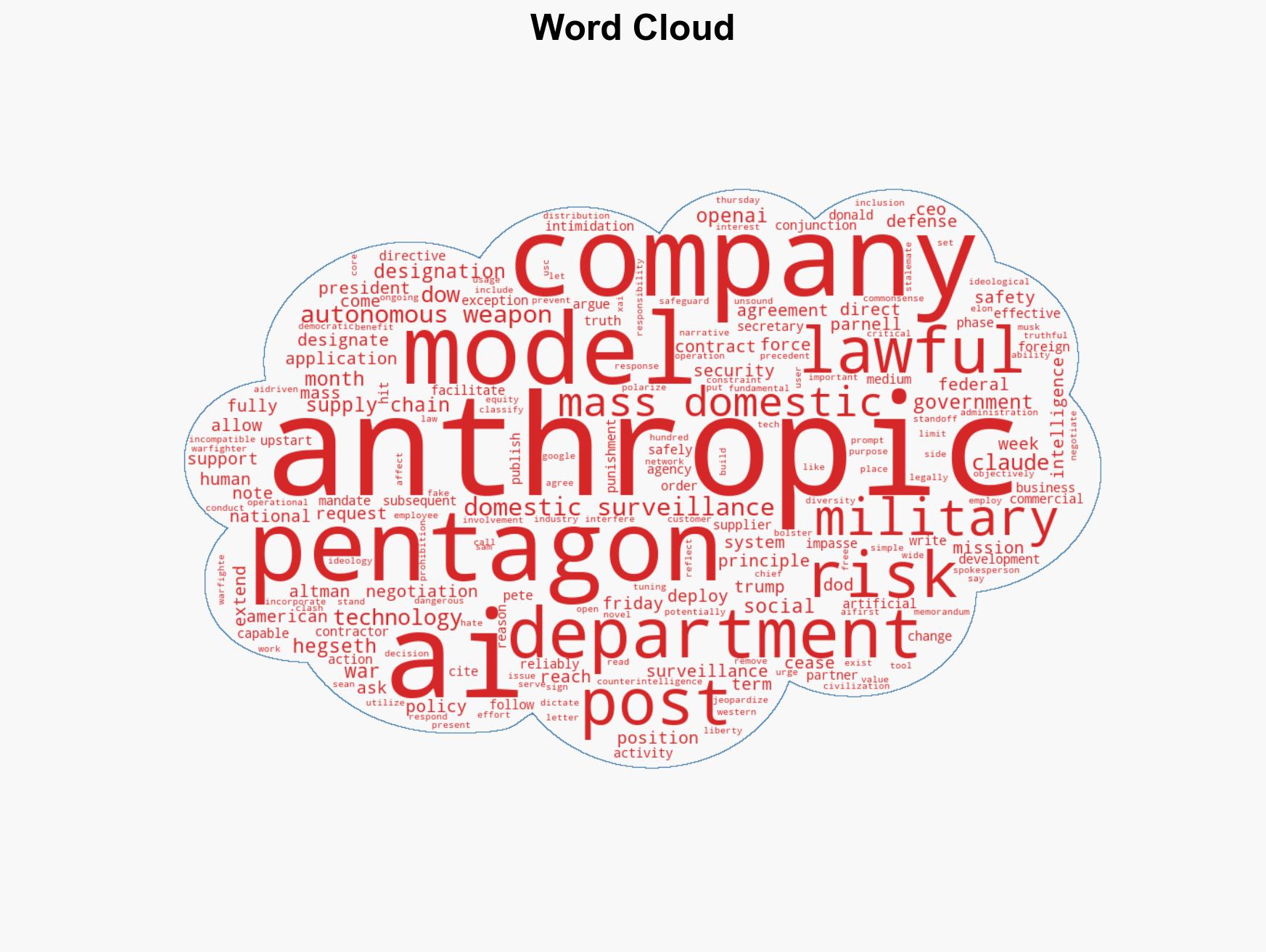

Pentagon Labels Anthropic a Supply Chain Threat Amid AI Policy Dispute, Following Defense Secretary’s Directive

Published on: 2026-02-28

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Pentagon Designates Anthropic a Supply Chain Risk Over AI Military Use Dispute

1. BLUF (Bottom Line Up Front)

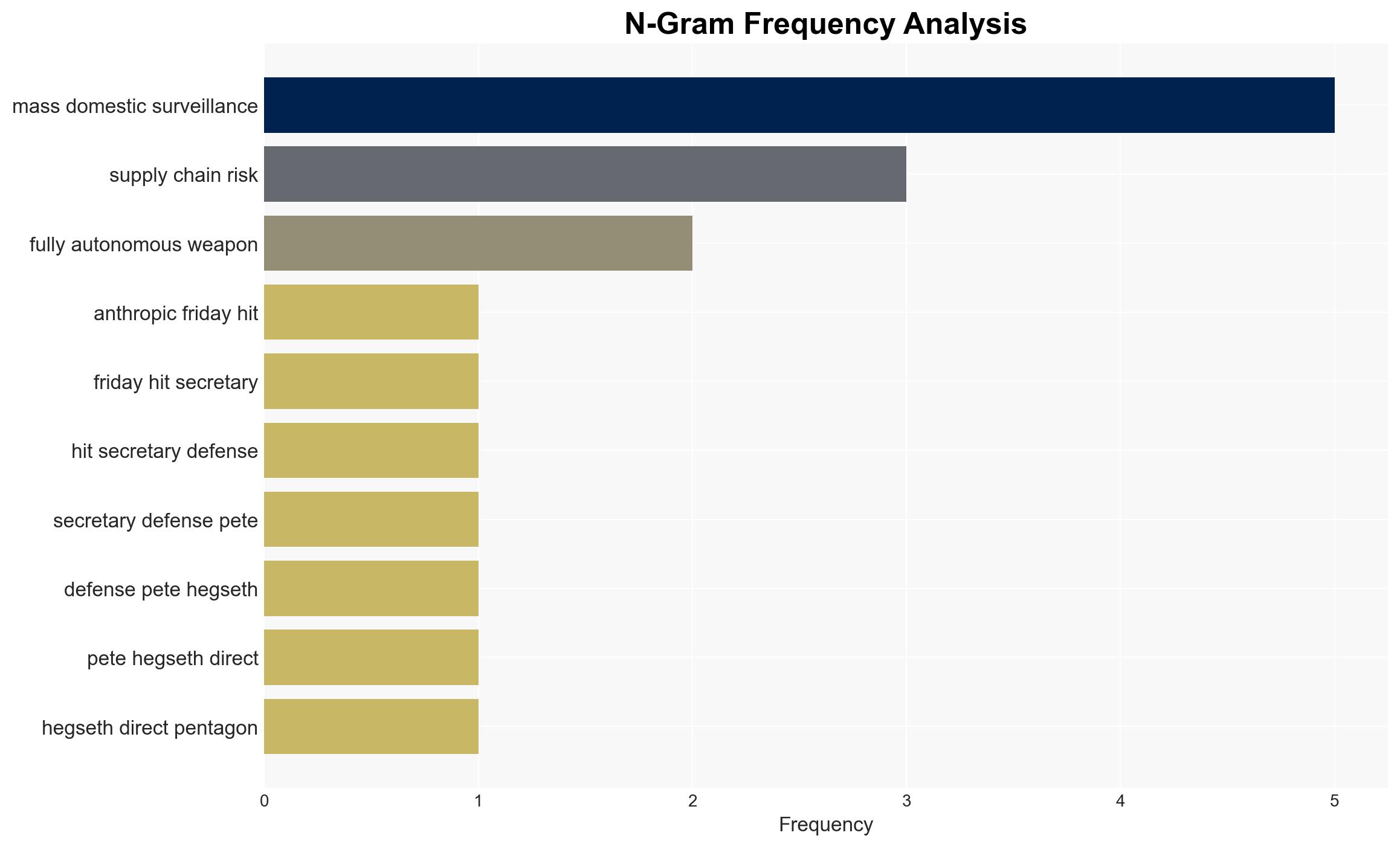

The Pentagon has designated Anthropic a supply chain risk, primarily because of disagreements over whether its AI technology may be used for mass domestic surveillance and autonomous weapons. The decision affects U.S. military contractors and federal agencies, which are now required to stop using Anthropic’s technology. The most likely hypothesis is that the designation is a strategic move to enforce compliance with the Pentagon’s military AI usage policy. Overall confidence in this judgment is moderate.

2. Competing Hypotheses

- Hypothesis A: The designation of Anthropic as a supply chain risk is primarily a strategic maneuver by the Pentagon to enforce compliance with its AI policy, which calls for unrestricted use of AI technologies for all lawful purposes. This is supported by the Pentagon’s directive and by the President’s order to phase out Anthropic technology.

- Hypothesis B: The designation is a reactive measure in response to Anthropic’s public stance against mass surveillance and autonomous weapons, potentially reflecting broader ideological conflicts within the government. This is supported by Anthropic’s public statements and their emphasis on democratic values.

- Assessment: Hypothesis A is currently better supported, because the Pentagon and the President acted in alignment, indicating a coordinated policy enforcement effort. Indicators that could shift this judgment include changes in government policy or public backlash that prompts a policy reversal.

3. Key Assumptions and Red Flags

- Assumptions: The Pentagon’s actions are primarily driven by national security concerns; the Department of War (DoW) perceives Anthropic’s technology as posing a significant risk; the U.S. government prioritizes unrestricted AI use for military applications.

- Information Gaps: Specific details of the negotiations between Anthropic and the Pentagon; Internal government assessments of Anthropic’s technology risks; Broader industry reactions to the designation.

- Bias & Deception Risks: Potential bias in government statements intended to justify policy decisions; Anthropic’s public communications may be strategically framed to garner public support.

4. Implications and Strategic Risks

This development could lead to increased scrutiny of AI companies’ compliance with military policies, potentially affecting innovation and collaboration between AI firms and the defense sector. It may also shape international perceptions of U.S. AI policy.

- Political / Geopolitical: Potential strain on U.S. relations with allies who may view the designation as overreach or as ideologically motivated.

- Security / Counter-Terrorism: Possible gaps in AI capabilities during the phase-out if replacement technologies are not readily available.

- Cyber / Information Space: Risk of increased cyber threats, as adversaries may seek to exploit perceived vulnerabilities in U.S. AI supply chains.

- Economic / Social: Economic impact on Anthropic and similar companies; potential chilling effect on AI innovation due to regulatory uncertainty.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor industry and international reactions; assess alternative AI suppliers for compliance and capability.

- Medium-Term Posture (1–12 months): Develop partnerships with compliant AI firms; enhance resilience against supply chain disruptions.

- Scenario Outlook:

  - Best: Anthropic complies, leading to resumed collaboration.

  - Worst: Escalation into a broader conflict between the government and the AI industry.

  - Most-Likely: Continued negotiation with potential policy adjustments.

6. Key Individuals and Entities

- U.S. Secretary of Defense Pete Hegseth

- U.S. President Donald Trump

- Anthropic

- U.S. Department of War

7. Thematic Tags

cybersecurity, national security, AI policy, supply chain risk, military technology, government regulation, surveillance, autonomous weapons

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
