Pentagon Pressures Anthropic on AI Military Use Amid Industry Concerns Over Autonomous Weapons

Published on: 2026-02-27

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: We don’t have to have unsupervised killer robots

1. BLUF (Bottom Line Up Front)

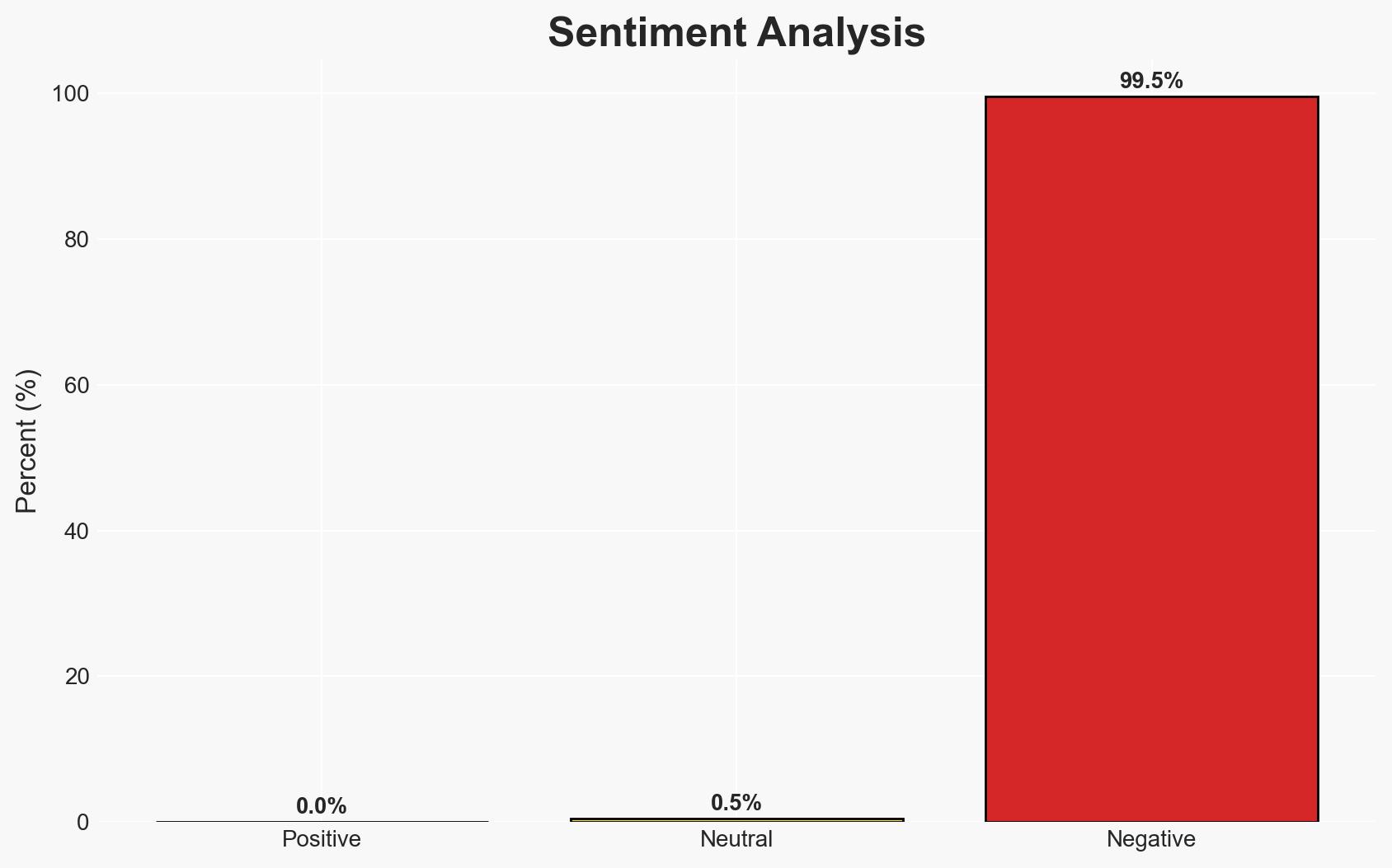

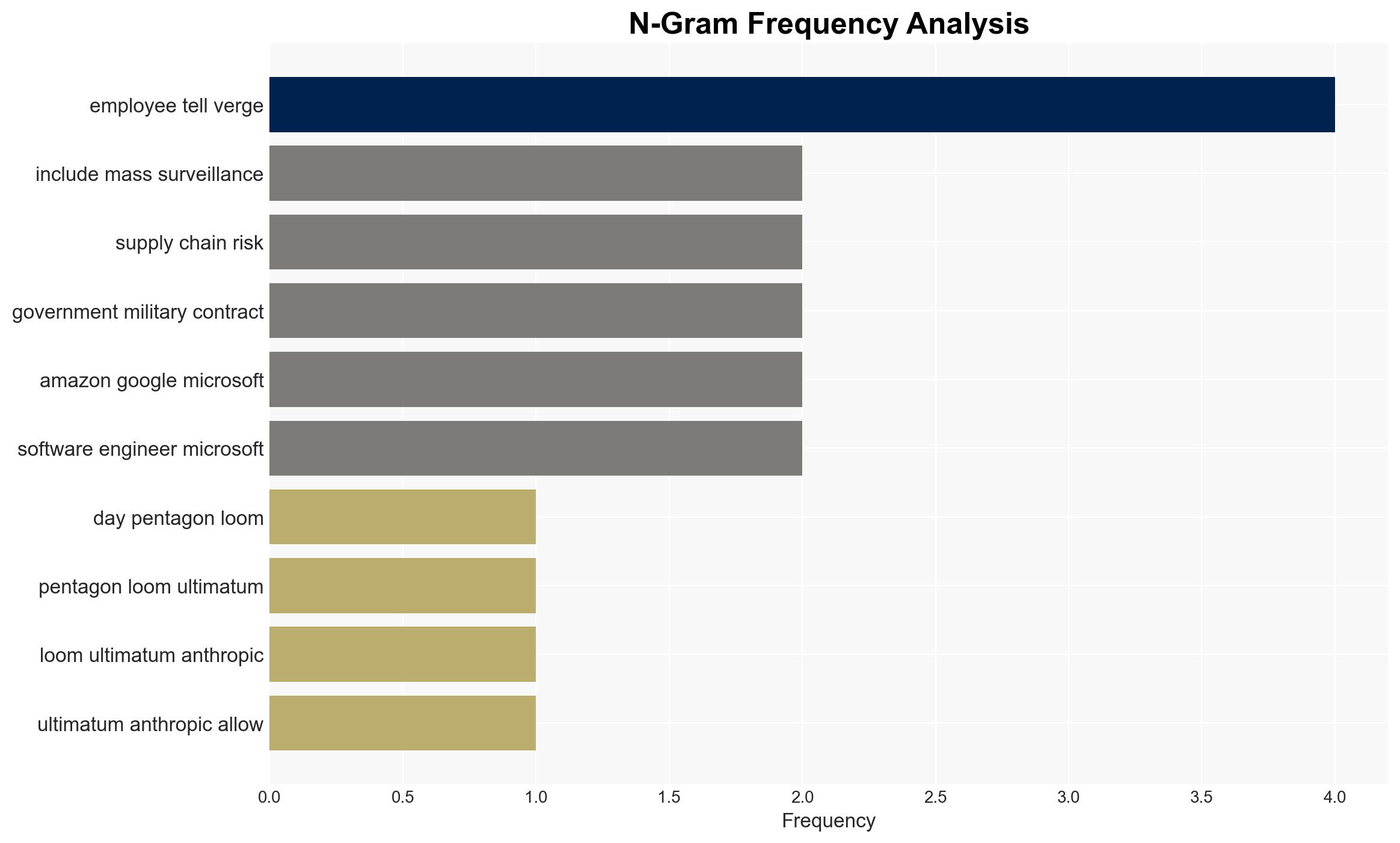

The Pentagon’s ultimatum to Anthropic over autonomous weapon systems highlights a significant ethical and strategic dilemma in the tech sector’s collaboration with the military. We assess with moderate confidence that Anthropic is moderately likely to resist immediate compliance, while other tech companies may continue to align with military demands. The development affects tech industry stakeholders and national security policymakers.

2. Competing Hypotheses

- Hypothesis A: Anthropic will maintain its stance against removing AI guardrails, prioritizing ethical considerations over financial incentives. This is supported by the CEO’s public statements and the company’s history of responsible AI policies. The main uncertainty is how external pressure and the financial consequences of refusal will affect that position.

- Hypothesis B: Anthropic will eventually comply with the Pentagon’s demands to avoid being designated a supply chain risk, following the precedent set by other companies like OpenAI. This is contradicted by Anthropic’s current resistance but supported by the broader industry trend towards military collaboration.

- Assessment: Hypothesis A is currently better supported due to Anthropic’s explicit refusal and ethical positioning. Key indicators that could shift this judgment include changes in leadership, financial pressures, or shifts in public opinion.

3. Key Assumptions and Red Flags

- Assumptions: Anthropic’s leadership will continue to prioritize ethical considerations; the Pentagon will maintain its current stance; financial pressures on tech companies will increase.

- Information Gaps: Details on internal decision-making processes at Anthropic and other tech companies; specific terms of existing military contracts.

- Bias & Deception Risks: Potential bias in employee statements due to personal ethical stances; risk of strategic deception by companies to maintain public image while negotiating privately.

4. Implications and Strategic Risks

This development could lead to increased scrutiny of tech-military collaborations, influencing policy and public opinion. It may also impact the competitive dynamics within the tech industry.

- Political / Geopolitical: Potential for increased regulatory oversight and international debate on autonomous weapons.

- Security / Counter-Terrorism: Changes in the operational capabilities of military forces using AI technologies.

- Cyber / Information Space: Increased risk of cyber espionage targeting tech companies involved in military contracts.

- Economic / Social: Possible shifts in investor confidence and employee morale within affected companies.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor public statements from Anthropic and the Pentagon; engage with industry stakeholders to assess broader sentiment.

- Medium-Term Posture (1–12 months): Develop resilience measures for tech companies facing ethical dilemmas; foster partnerships with ethical AI advocacy groups.

- Scenario Outlook: Best: Anthropic maintains its ethical stance and influences industry norms. Worst: Anthropic complies, leading to increased militarization of AI. Most-Likely: Continued negotiation with some concessions.

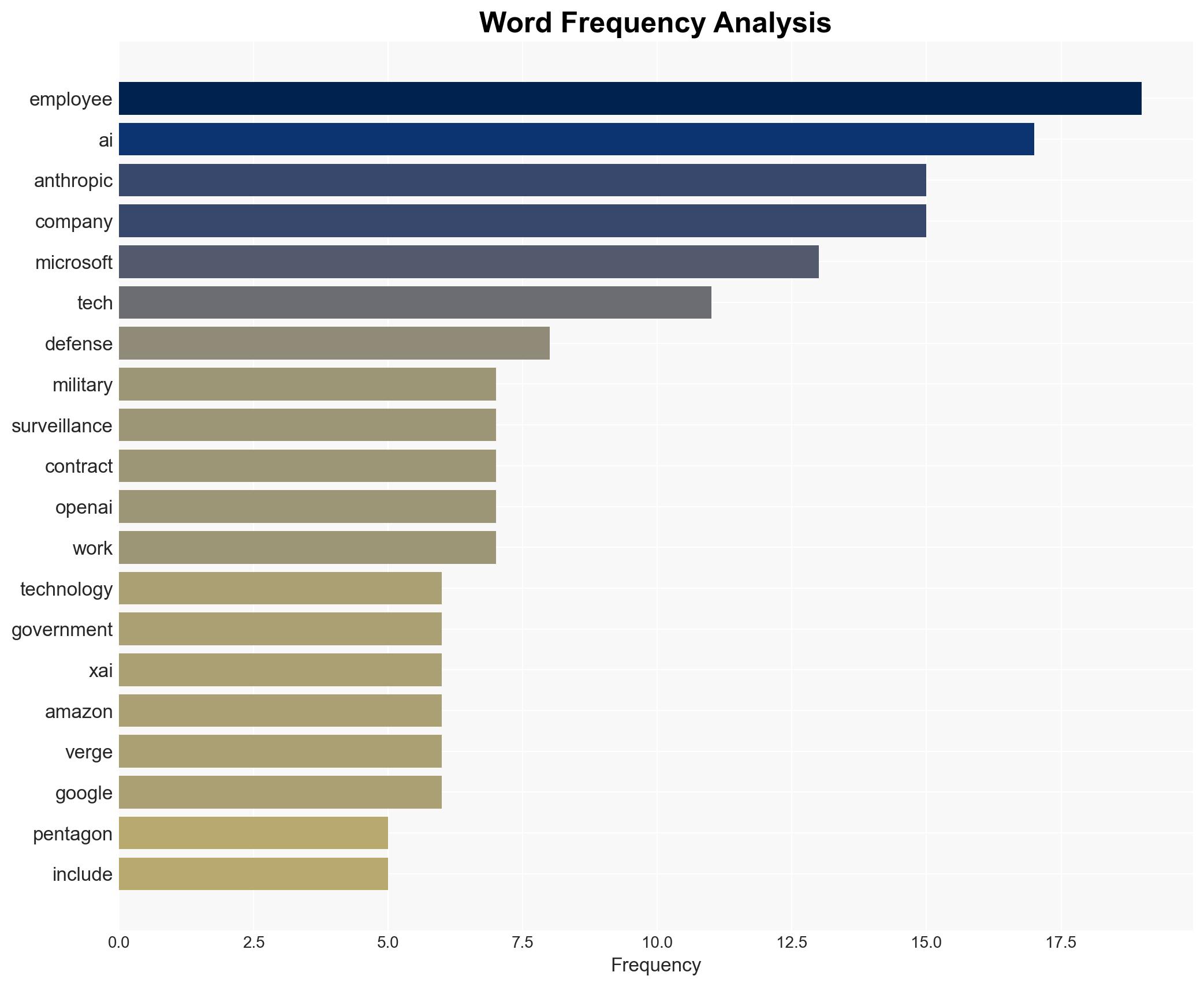

6. Key Individuals and Entities

- Dario Amodei (Anthropic CEO)

- Anthropic

- OpenAI

- xAI

- Amazon Web Services

- Microsoft

- Department of Defense (DoD)

7. Thematic Tags

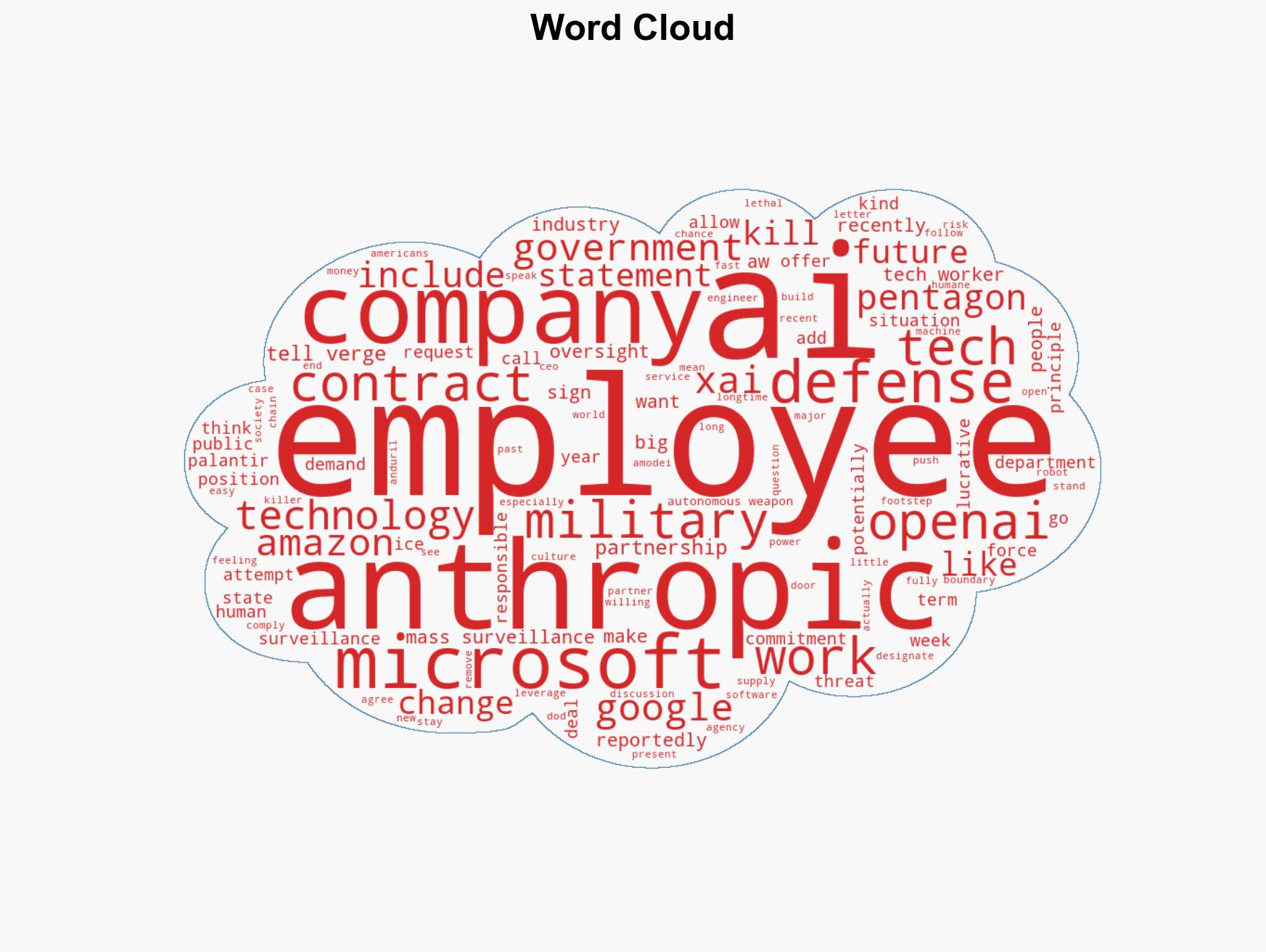

cybersecurity, autonomous weapons, military contracts, ethical AI, tech industry, national security, surveillance, defense policy

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.
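As a minimal sketch of the probabilistic inference named above, the snippet below applies Bayes' rule to the two competing hypotheses from Section 2. The priors and likelihoods are hypothetical values chosen purely for illustration; they are not drawn from this report or from any source data, and the function name `bayes_update` is our own.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities from priors and P(evidence | hypothesis)."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: value / total for h, value in unnormalized.items()}


# ASSUMPTION: illustrative numbers only, not estimates from the report.
priors = {"A": 0.5, "B": 0.5}        # equal starting weight for each hypothesis
likelihoods = {"A": 0.8, "B": 0.4}   # P(explicit public refusal | hypothesis)

posterior = bayes_update(priors, likelihoods)
for name, prob in posterior.items():
    print(f"Hypothesis {name}: {prob:.3f}")
```

With these assumed inputs, an observed public refusal shifts the weight on Hypothesis A from one half to about two thirds. Each new indicator (a leadership change, a contract announcement) would be folded in the same way, using the previous posterior as the next prior.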
