Sam Altman Positions OpenAI as a Key Player in Military AI Amid Pentagon’s Restrictions on Competitors

Published on: 2026-02-28

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Sam Altman Is Marketing OpenAI as America's Wartime AI Company Whether He Intends to or Not

1. BLUF (Bottom Line Up Front)

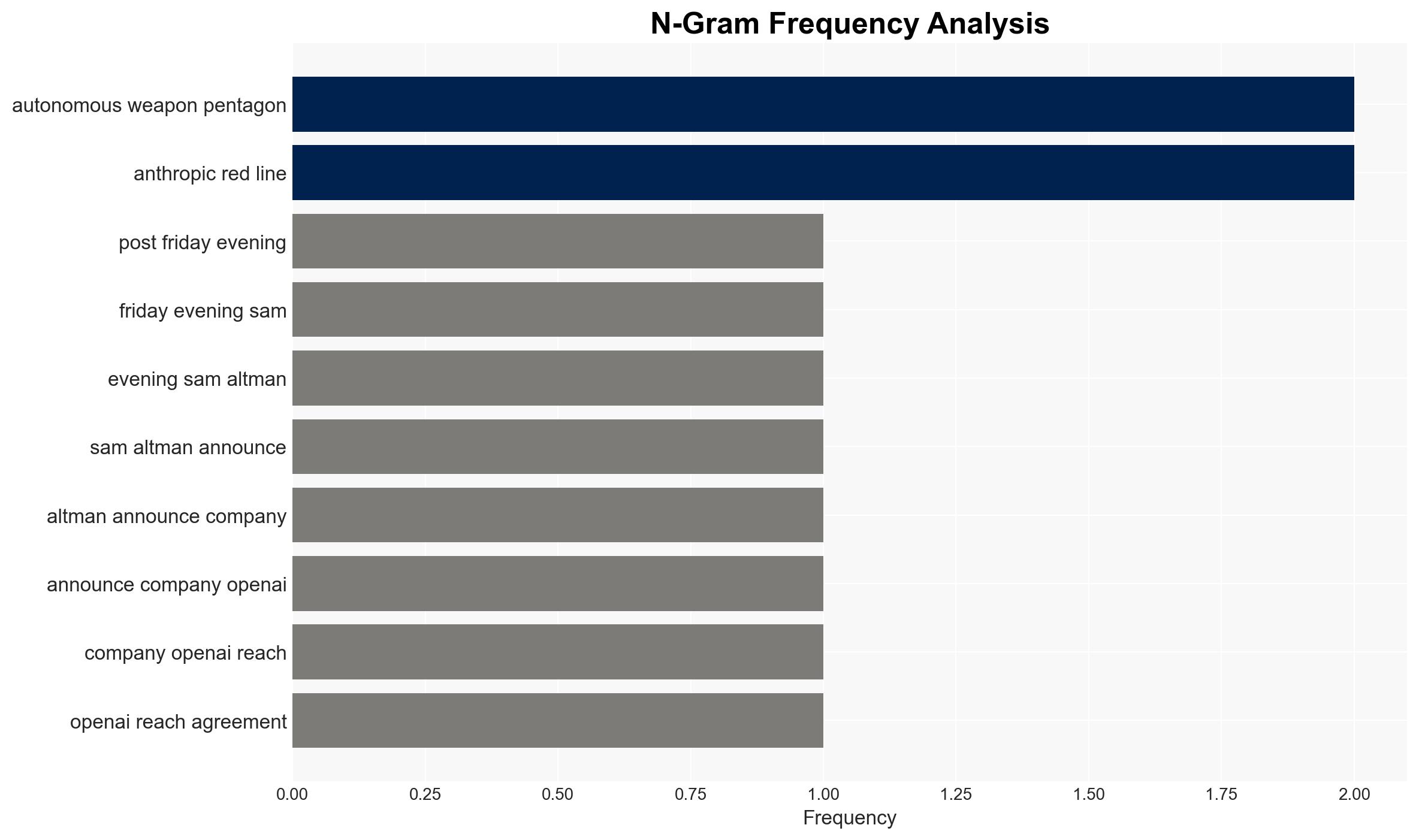

OpenAI, led by Sam Altman, has entered into a significant agreement with the U.S. Department of War, positioning itself as a key player in military AI applications. This development contrasts sharply with Anthropic’s exclusion due to its ethical stance on AI use, highlighting a strategic divergence in the AI industry. This situation is likely to impact U.S. national security policy and AI industry dynamics. Confidence in this assessment is moderate due to limited visibility into the full terms of the agreement and potential undisclosed motivations.

2. Competing Hypotheses

- Hypothesis A: OpenAI’s agreement with the Department of War is a strategic alignment to enhance national security capabilities, driven by a pragmatic approach to AI deployment. Supporting evidence includes the formal agreement and OpenAI’s willingness to cooperate with military objectives. Contradicting evidence includes Altman’s previous statements on ethical AI use, suggesting potential internal conflict or strategic repositioning.

- Hypothesis B: OpenAI’s engagement with the Department of War is primarily a competitive maneuver against Anthropic, leveraging government contracts to gain market dominance. This is supported by the timing of the agreement, which followed Anthropic’s blacklisting, and by the historical rivalry between the two companies. However, there is little direct evidence of competitive intent beyond the circumstantial timing.

- Assessment: Hypothesis A is currently better supported given the explicit agreement with the Department of War and the strategic importance of AI in national security. Key indicators that could shift this judgment include further disclosures about the agreement’s terms and any changes in OpenAI’s public stance on ethical AI use.

3. Key Assumptions and Red Flags

- Assumptions: OpenAI’s agreement is primarily motivated by national security interests; Anthropic’s exclusion is based on genuine security concerns; the U.S. government will continue to prioritize AI in defense strategy.

- Information Gaps: Specific terms and conditions of OpenAI’s agreement with the Department of War; detailed rationale behind Anthropic’s blacklisting; potential undisclosed strategic objectives of OpenAI.

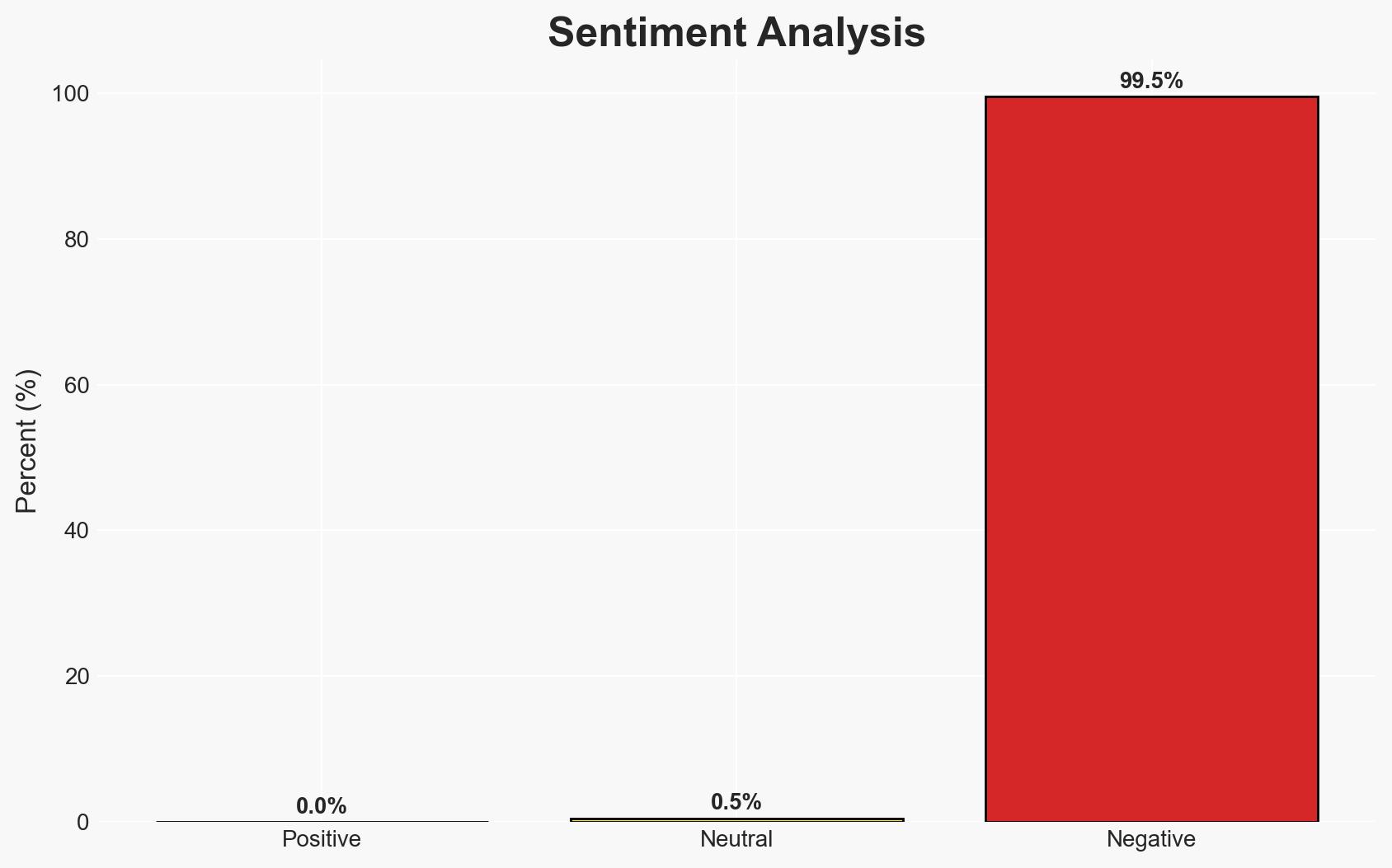

- Bias & Deception Risks: Potential bias in reporting due to the rivalry between OpenAI and Anthropic; possible manipulation of public perception by involved parties to influence market or policy outcomes.

4. Implications and Strategic Risks

This development could significantly influence the trajectory of AI integration into military operations and impact the competitive landscape of the AI industry. The alignment with national security objectives may accelerate AI adoption in defense but also raise ethical and operational challenges.

- Political / Geopolitical: Potential escalation of the AI arms race; impact on U.S. relations with allies and adversaries regarding AI ethics and deployment.

- Security / Counter-Terrorism: Enhanced AI capabilities could improve threat detection and response but also introduce new vulnerabilities if not managed properly.

- Cyber / Information Space: Increased focus on AI security measures; potential for adversarial use of AI against U.S. interests.

- Economic / Social: Shifts in AI industry dynamics; possible public backlash against perceived militarization of AI technology.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Monitor further announcements from OpenAI and the Department of War; assess potential impacts on AI policy and industry standards.

- Medium-Term Posture (1–12 months): Develop resilience measures to address potential AI-related security risks; foster partnerships to align ethical standards in AI deployment.

- Scenario Outlook:

  - Best Case: OpenAI’s engagement leads to enhanced national security capabilities with minimal ethical concerns, fostering industry growth.

  - Worst Case: Ethical breaches or operational failures result in public backlash and regulatory challenges, destabilizing the AI sector.

  - Most Likely: Gradual integration of AI into defense with ongoing ethical debates and competitive tensions shaping the industry landscape.

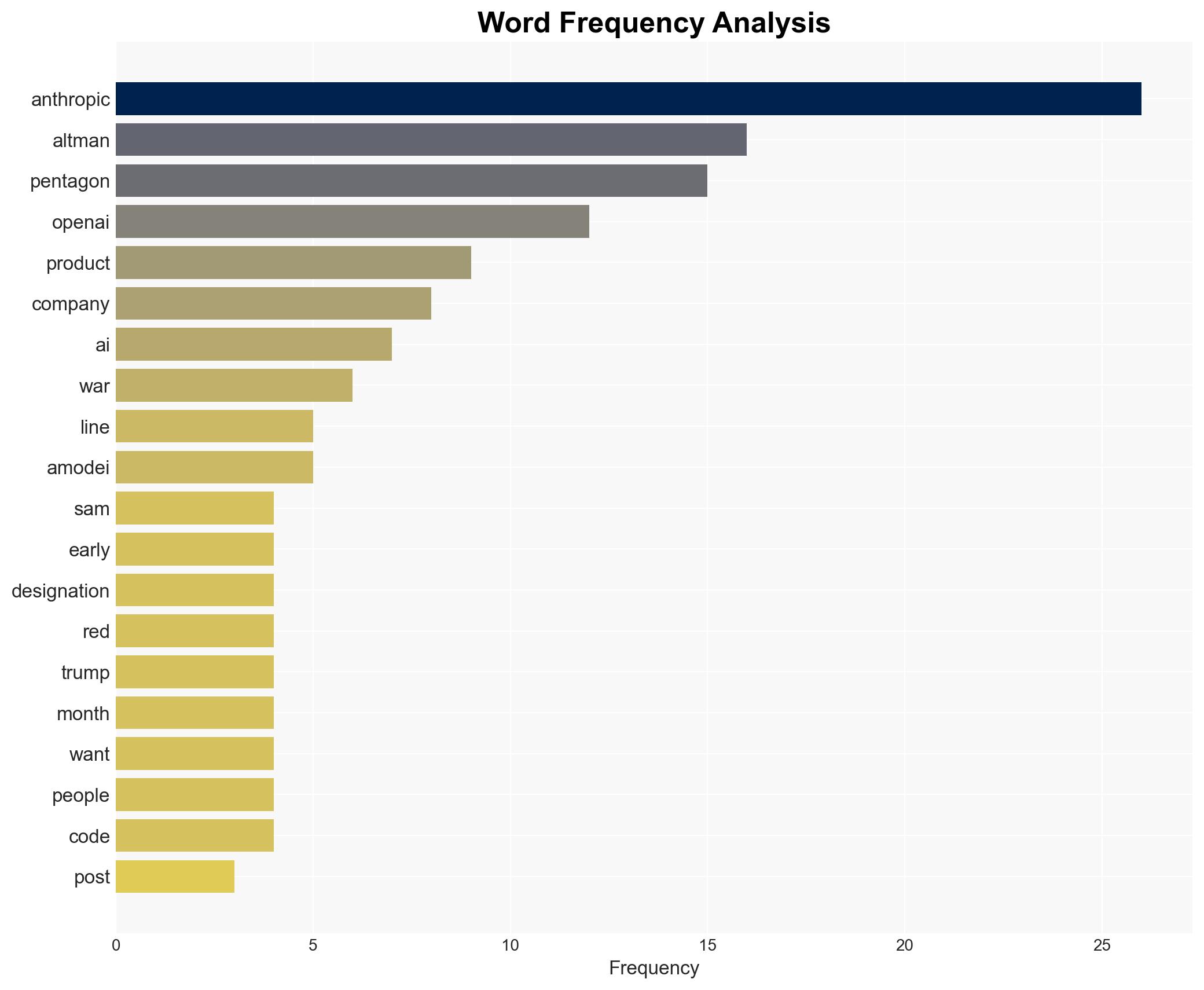

6. Key Individuals and Entities

- Sam Altman, CEO of OpenAI

- Dario Amodei, CEO of Anthropic

- Department of War

- Pete Hegseth, Secretary of War

- Jeremy Lewin, former State Department and DOGE official

7. Thematic Tags

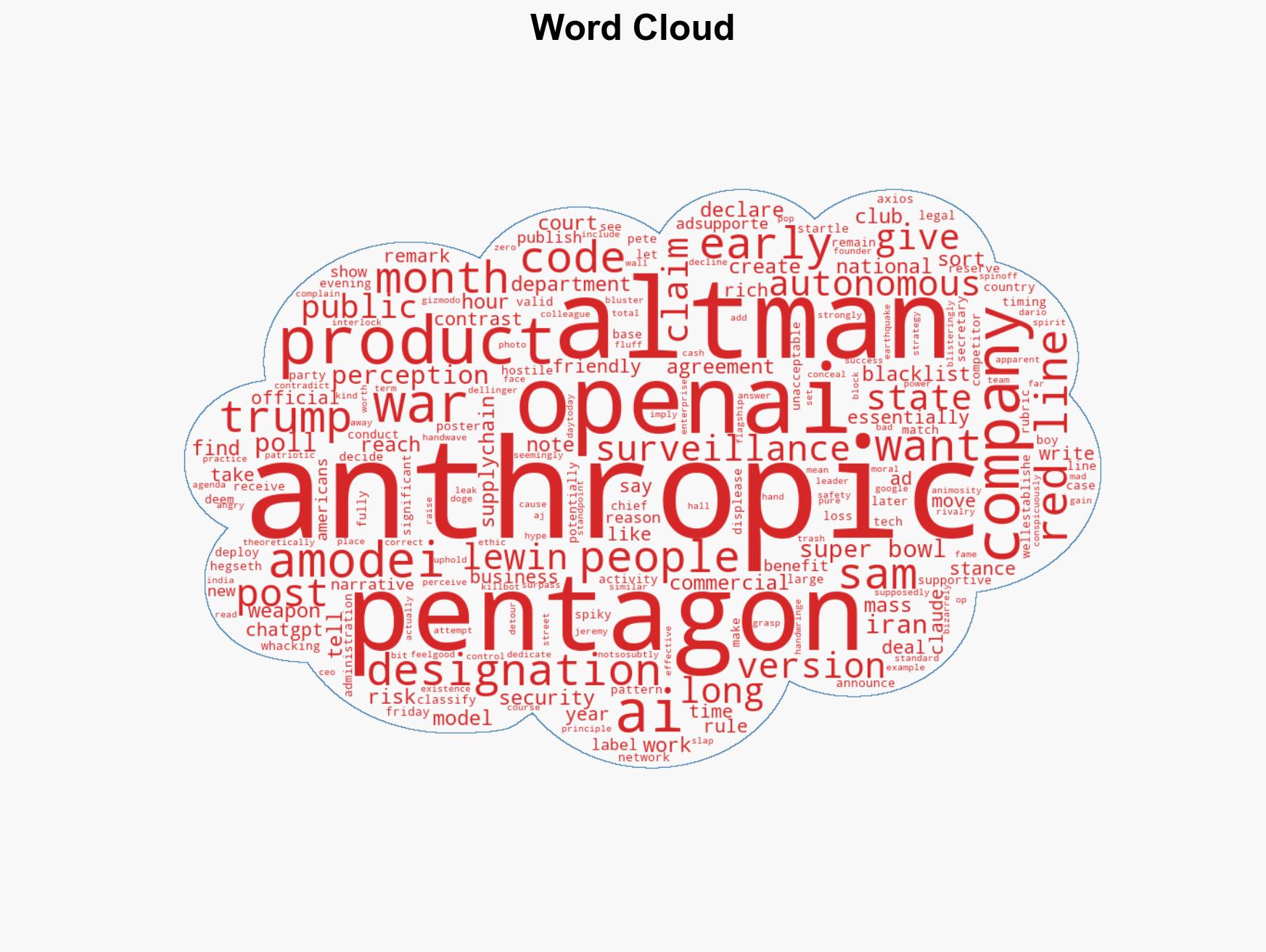

cybersecurity, AI ethics, national security, military technology, corporate rivalry, defense policy, AI deployment, strategic alignment

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
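As an illustration of the Bayesian Scenario Modeling technique listed above, the following minimal Python sketch shows how a single new indicator could shift the relative weight of two competing hypotheses such as Hypothesis A and Hypothesis B. Applying it to those hypotheses is an assumption made here for illustration. The function name `bayes_update` and every number in it are hypothetical placeholders, not figures from this brief or from any source.

```python
from typing import Dict


def bayes_update(priors: Dict[str, float],
                 likelihoods: Dict[str, float]) -> Dict[str, float]:
    """Return the posterior P(H | E) for each hypothesis H.

    priors:      P(H) for each hypothesis (should sum to 1).
    likelihoods: P(E | H), the probability of observing the
                 indicator E if hypothesis H were true.
    """
    # Bayes' rule numerator: P(H) * P(E | H)
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("indicator has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1
    return {h: value / total for h, value in unnormalized.items()}


# Hypothetical placeholder values, chosen only to show the mechanics.
priors = {"A": 0.6, "B": 0.4}

# Assumed probability of seeing some new indicator under each hypothesis.
likelihood_of_indicator = {"A": 0.3, "B": 0.7}

posterior = bayes_update(priors, likelihood_of_indicator)
for name, value in posterior.items():
    print(f"Hypothesis {name}: {value:.3f}")
```

With these placeholder inputs, an indicator that is more probable under Hypothesis B moves weight toward B even though A started with the higher prior. In practice the priors and likelihoods would have to come from analyst judgment or data, which this brief does not provide.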
