SOCs Embrace Autonomous Operations to Combat Alert Overload by 2026

Published on: 2026-02-24

AI-powered OSINT brief from verified open sources. Automated NLP signal extraction with human verification.

Intelligence Report: Why SOCs are moving toward autonomous security operations in 2026

1. BLUF (Bottom Line Up Front)

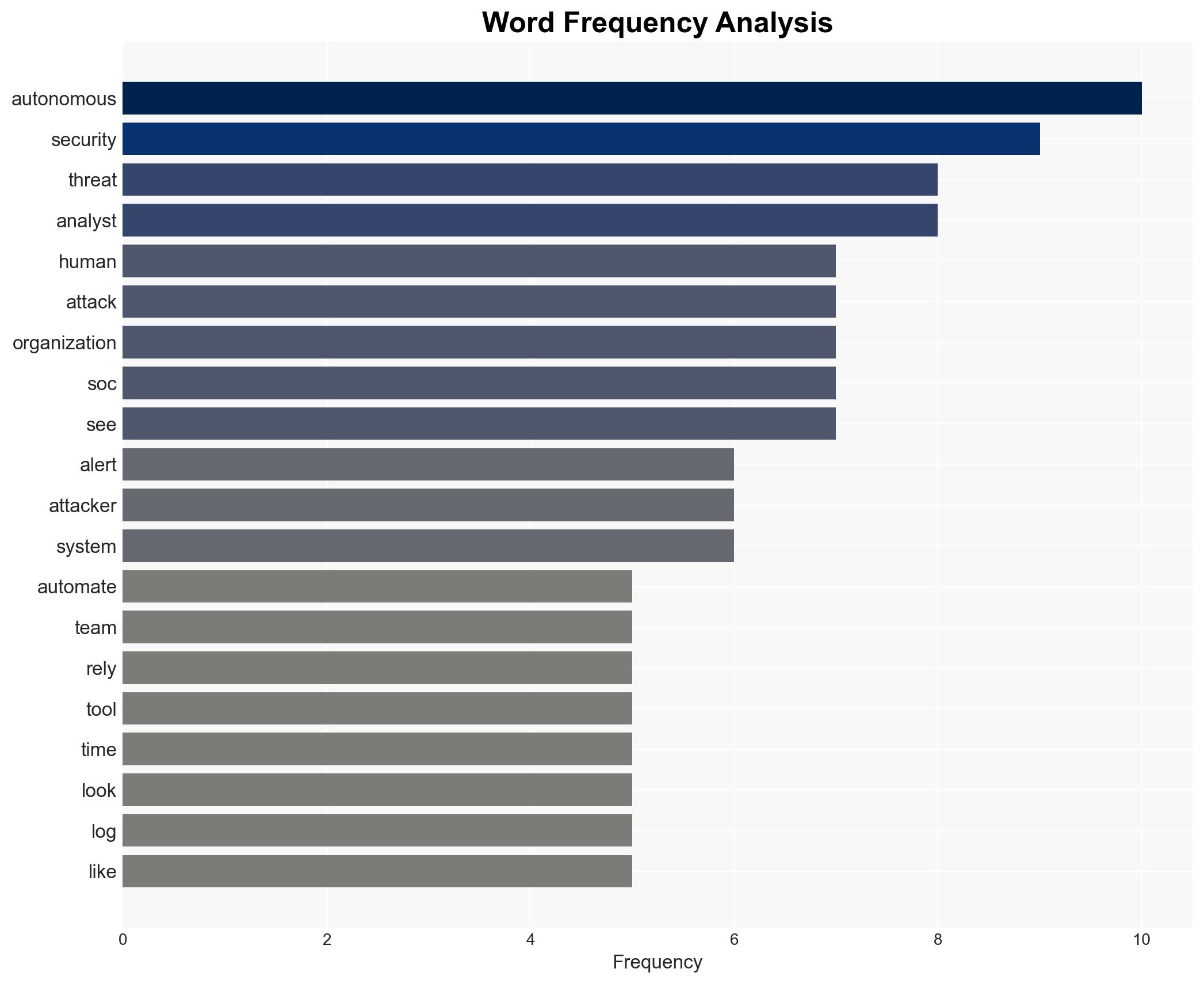

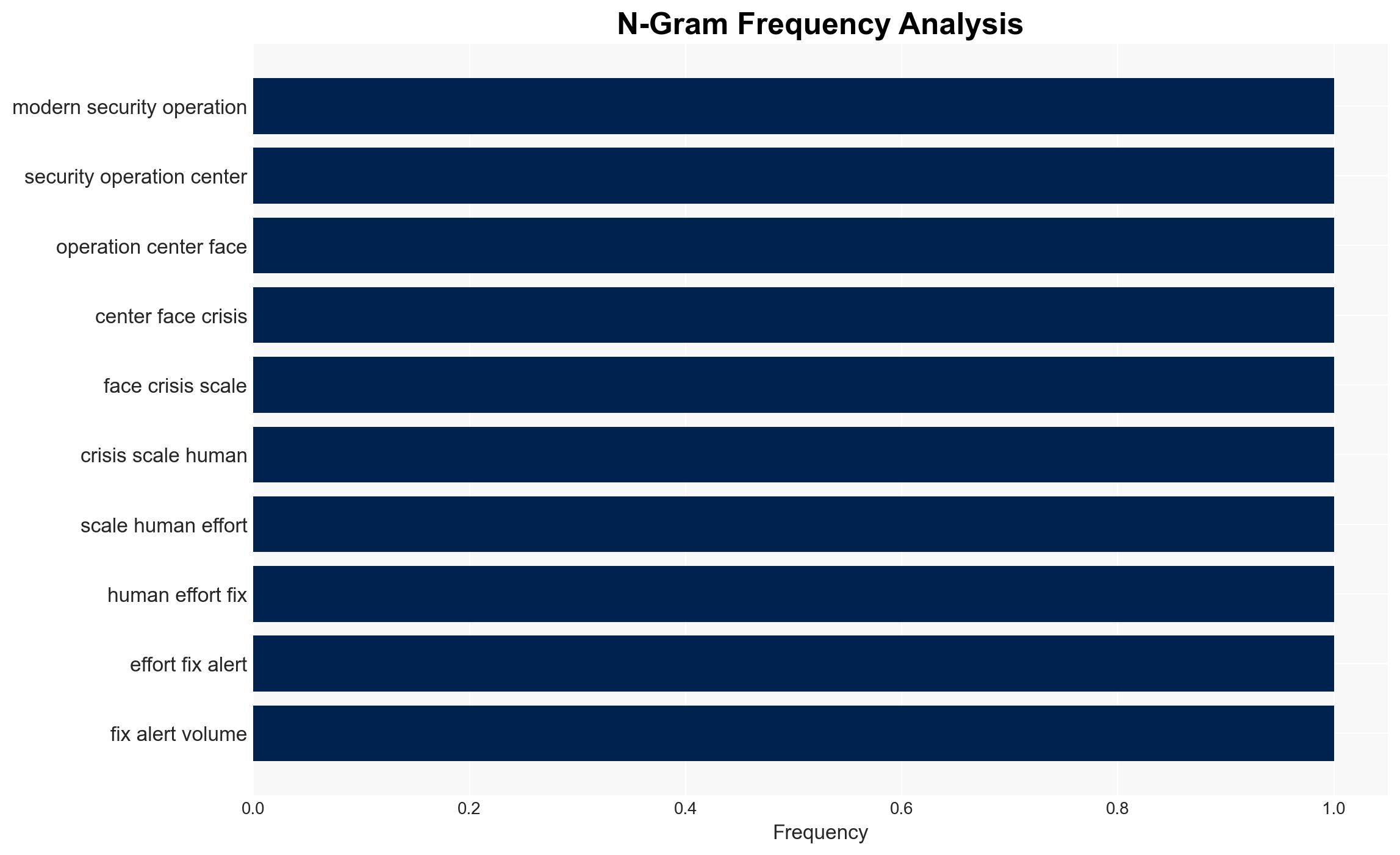

The shift toward autonomous security operations centers (SOCs) is driven by the unsustainable scale of manual alert processing and the increasing automation of cyber threats. We assess with moderate confidence that the transition to AI-driven defense will enhance security posture by 2026. Organizations, particularly mid-market enterprises, are most affected as they struggle with alert fatigue and operational blindness.

2. Competing Hypotheses

- Hypothesis A: Autonomous SOCs will significantly improve threat detection and response by integrating AI to handle alert volumes and identify sophisticated attack patterns. This is supported by the current failure of traditional SOCs to manage alert overload and the success of AI in identifying anomalies, as demonstrated in the Arup incident. However, uncertainties remain about the integration costs and potential AI limitations.

- Hypothesis B: The transition to autonomous SOCs will not substantially improve security outcomes due to potential over-reliance on AI, which could introduce new vulnerabilities or blind spots. This hypothesis is less supported given the current inadequacies of manual processes and the demonstrated potential of AI to address specific attack vectors.

- Assessment: Hypothesis A is currently better supported due to the demonstrated need for scalable solutions to manage alert volumes and the proven ability of AI to detect complex threats. Key indicators that could shift this judgment include significant AI failures or advancements in manual SOC capabilities.

3. Key Assumptions and Red Flags

- Assumptions: AI technology will continue to advance and become more cost-effective; organizations will have the capability to integrate AI into existing SOC frameworks; threat actors will increasingly automate attacks.

- Information Gaps: Specific data on the cost and time required for organizations to transition to autonomous SOCs; detailed case studies on AI failures in security contexts.

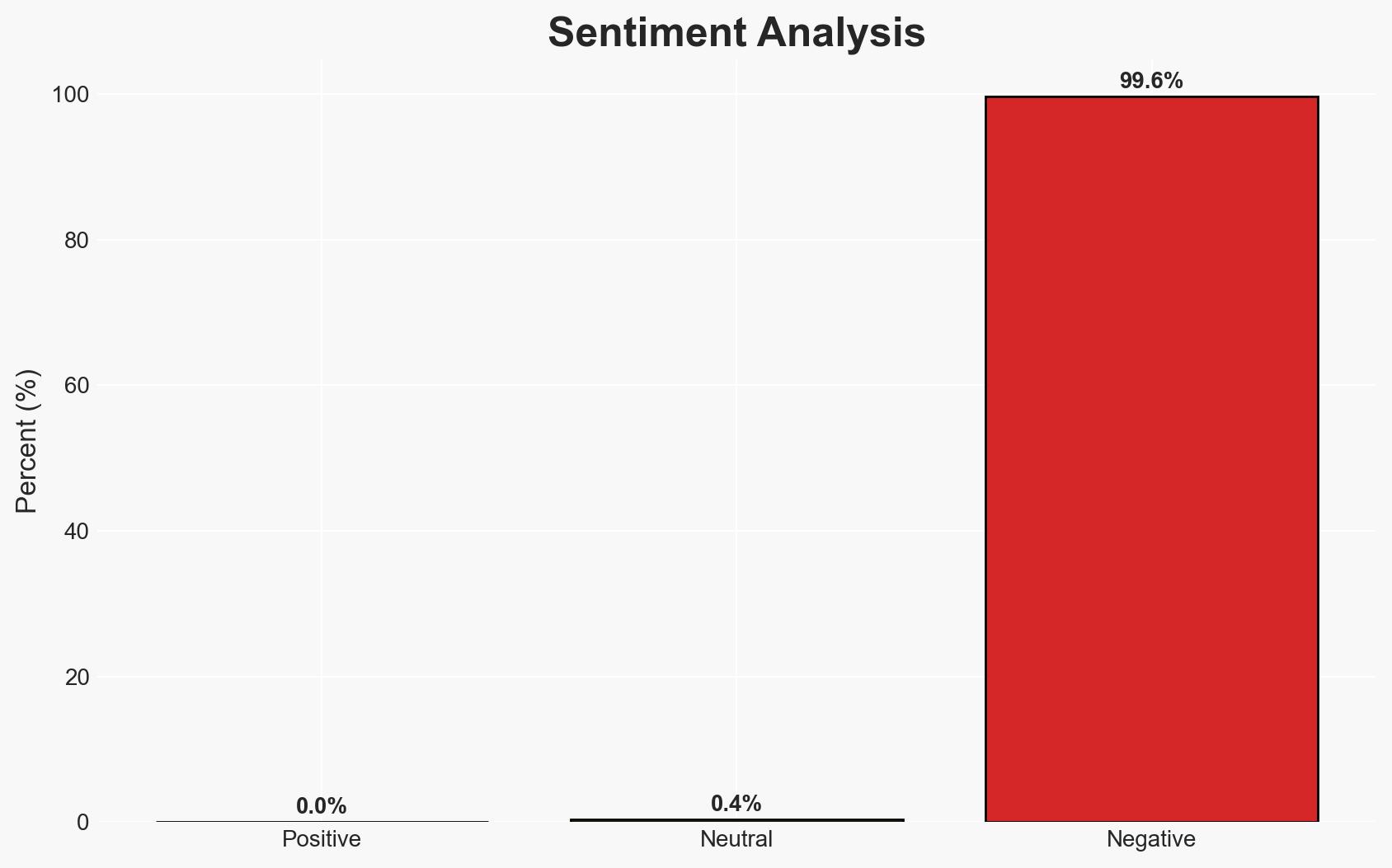

- Bias & Deception Risks: Potential overconfidence in AI capabilities; source bias from vendors promoting AI solutions; manipulation of AI systems by adversaries to create false positives or negatives.

4. Implications and Strategic Risks

The move toward autonomous SOCs could redefine cybersecurity strategies, influencing organizational structures and resource allocation. It may also affect global cybersecurity norms and standards.

- Political / Geopolitical: Increased reliance on AI in cybersecurity could lead to international regulatory discussions and potential conflicts over AI governance.

- Security / Counter-Terrorism: Enhanced detection capabilities may reduce the success rate of cyber-attacks, but adversaries might develop more sophisticated AI-driven attack methods.

- Cyber / Information Space: The shift could lead to a new arms race in AI capabilities between defenders and attackers, impacting the broader cyber threat landscape.

- Economic / Social: Organizations may face significant costs in transitioning to autonomous SOCs, potentially affecting smaller enterprises disproportionately and leading to market consolidation.

5. Recommendations and Outlook

- Immediate Actions (0–30 days): Conduct a comprehensive audit of current SOC capabilities and identify critical areas for AI integration; initiate pilot programs to test AI-driven solutions.

- Medium-Term Posture (1–12 months): Develop partnerships with AI technology providers; invest in training for SOC personnel to manage and interpret AI outputs; establish protocols for AI oversight and accountability.

- Scenario Outlook:

  - Best: Successful integration of AI leads to a significant reduction in cyber incidents.

  - Worst: AI systems are compromised, leading to increased vulnerabilities.

  - Most-Likely: Gradual improvement in threat detection with ongoing challenges in AI management and integration.

6. Key Individuals and Entities

- Not clearly identifiable from open sources in this snippet.

7. Thematic Tags

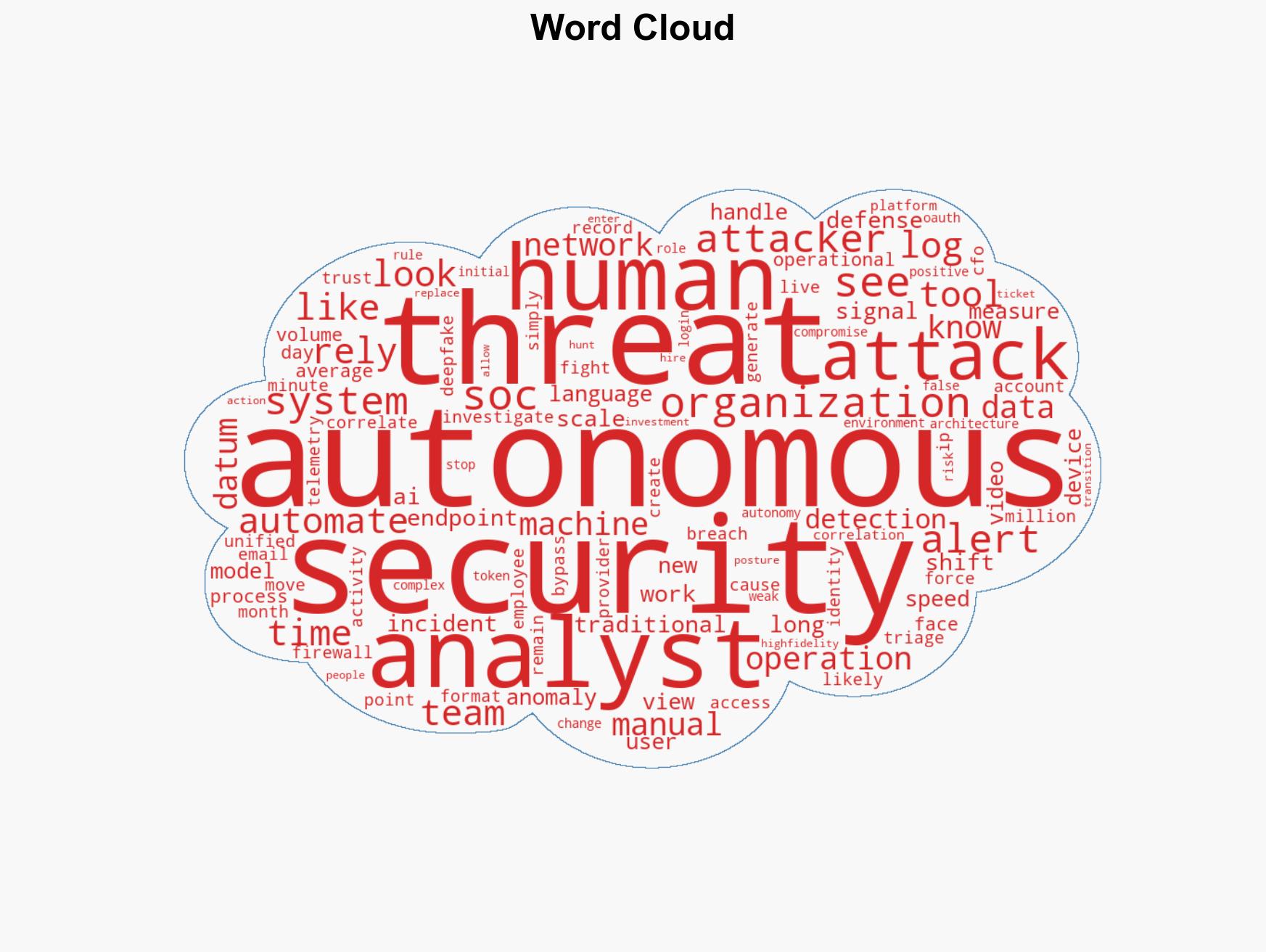

cybersecurity, artificial intelligence, SOC, threat detection, automation, cyber threats, AI governance

Structured Analytic Techniques Applied

- Adversarial Threat Simulation: Model and simulate actions of cyber adversaries to anticipate vulnerabilities and improve resilience.

- Indicators Development: Detect and monitor behavioral or technical anomalies across systems for early threat detection.

- Bayesian Scenario Modeling: Quantify uncertainty and predict cyberattack pathways using probabilistic inference.

- Network Influence Mapping: Map influence relationships to assess actor impact.
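
As an illustration of the Bayesian scenario modeling technique listed above, the following minimal sketch shows how a single new indicator could shift the balance between Hypothesis A and Hypothesis B. The function name `bayes_update`, the prior values, and the likelihoods are all hypothetical assumptions chosen for the arithmetic; none of them come from the report's underlying analysis.

```python
def bayes_update(priors, likelihoods):
    """Return posterior probabilities from priors and P(evidence | hypothesis)."""
    # Weight each prior by how well the hypothesis predicts the evidence.
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    # Normalize so the posteriors sum to 1.
    return {h: value / total for h, value in unnormalized.items()}


# Illustrative priors only: Hypothesis A is "currently better supported".
priors = {"A": 0.6, "B": 0.4}

# Hypothetical indicator: a significant AI failure in a SOC is reported.
# Assumed chance of seeing that indicator if each hypothesis were true.
likelihoods = {"A": 0.2, "B": 0.7}

posterior = bayes_update(priors, likelihoods)
print(posterior)
```

Under these assumed numbers, one observed AI failure moves Hypothesis A from 0.6 to roughly 0.3, which is the kind of shift the Assessment describes when it names significant AI failures as a key indicator.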
